When connecting to a local MySQL instance, you have two commonly used methods: use the TCP/IP protocol to connect to a local address – “localhost” or 127.0.0.1 – or use a Unix Domain Socket.

If you have a choice (that is, if your application supports both methods), use the Unix Domain Socket, as it is both more secure and more efficient.

How much more efficient, though? I have not looked at this topic in years, so let’s see how a modern MySQL version does on relatively modern hardware and modern Linux.

I’m testing Percona Server for MySQL 8.0.19 running on Ubuntu 18.04 on a Dual Socket 28 Core/56 Threads Server. (Though I have validated results on 4 Core Server too, and they are comparable.)

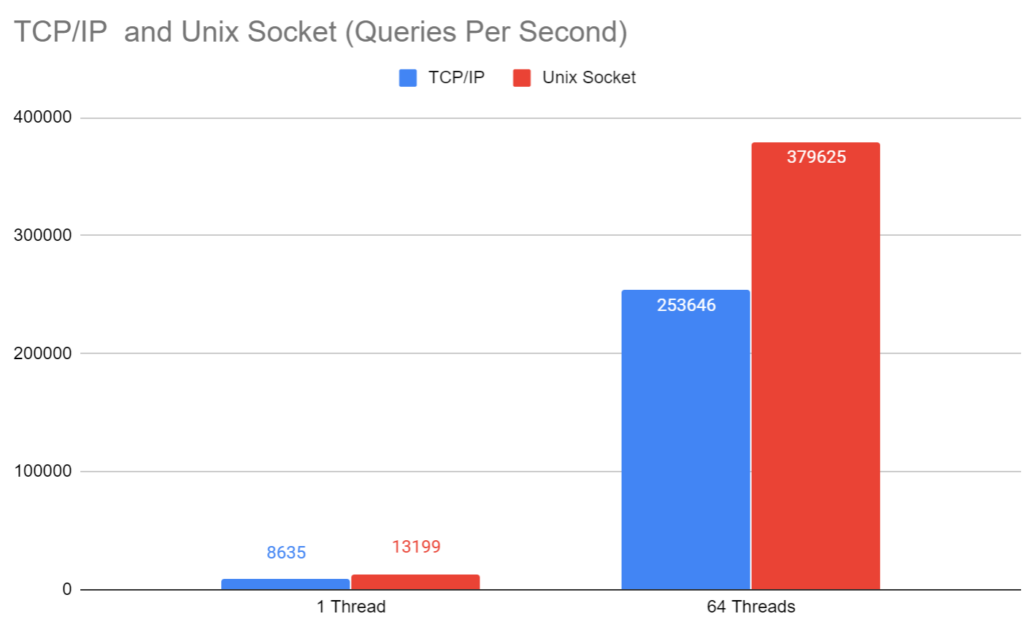

In this test, we run sysbench doing a simple primary key lookup on a small table using prepared statements. This benchmark should have one of the shortest execution paths in the MySQL Server code, so it stresses the TCP/IP stack. As such, I would expect these results to be close to a worst-case scenario for TCP/IP overhead versus Unix Domain Socket.

I also run mysqldump on the sysbench table which shows the “streaming” overhead and which should be closer to a best-case scenario.

A single thread run allows us to see the difference in the throughput when there is no contention in MySQL Server or Linux Kernel, while a 64 thread run shows what happens when there is significant contention.

For single thread, we’re seeing a massive 35 percent reduction in throughput by going through TCP/IP instead of the more efficient Unix Domain Socket. For 64 threads, the difference is similar – 33 percent – perhaps highlighting less efficient execution outside of the communication between Sysbench and MySQL.
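To get a rough feel for the transport difference outside MySQL, here is a minimal sketch that measures small-message round trips over a Unix Domain Socket and over TCP loopback using only the Python standard library. It is not the sysbench test from this article: the echo server, the 32-byte payload, and the function name `round_trips_per_sec` are all assumptions made for illustration, and the absolute numbers will depend on the interpreter and kernel.

```python
import os
import socket
import tempfile
import threading
import time


def _echo_server(listener: socket.socket) -> None:
    """Accept one connection and echo every message back until EOF."""
    conn, _ = listener.accept()
    with conn:
        if conn.family == socket.AF_INET:
            conn.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
        while True:
            data = conn.recv(64)
            if not data:
                break
            conn.sendall(data)


def round_trips_per_sec(family: int, address, n: int = 20000) -> float:
    """Return small-message round trips per second over one connection."""
    listener = socket.socket(family, socket.SOCK_STREAM)
    listener.bind(address)
    listener.listen(1)
    server = threading.Thread(target=_echo_server, args=(listener,), daemon=True)
    server.start()

    client = socket.socket(family, socket.SOCK_STREAM)
    client.connect(listener.getsockname())
    if family == socket.AF_INET:
        # Disable Nagle's algorithm so small packets are sent immediately.
        client.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)

    payload = b"x" * 32
    start = time.perf_counter()
    for _ in range(n):
        client.sendall(payload)
        received = 0
        while received < len(payload):
            chunk = client.recv(64)
            if not chunk:
                raise ConnectionError("echo server closed the connection")
            received += len(chunk)
    elapsed = time.perf_counter() - start

    client.close()   # EOF makes the echo thread exit
    server.join()
    listener.close()
    return n / elapsed


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        unix_rate = round_trips_per_sec(socket.AF_UNIX, os.path.join(tmp, "echo.sock"))
    tcp_rate = round_trips_per_sec(socket.AF_INET, ("127.0.0.1", 0))
    print(f"unix socket: {unix_rate:,.0f} round trips/sec")
    print(f"tcp loopback: {tcp_rate:,.0f} round trips/sec")
```

Because the server here does no work at all, this isolates the transport cost even more than a primary key lookup does, so any gap it shows should not be read as a MySQL number.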

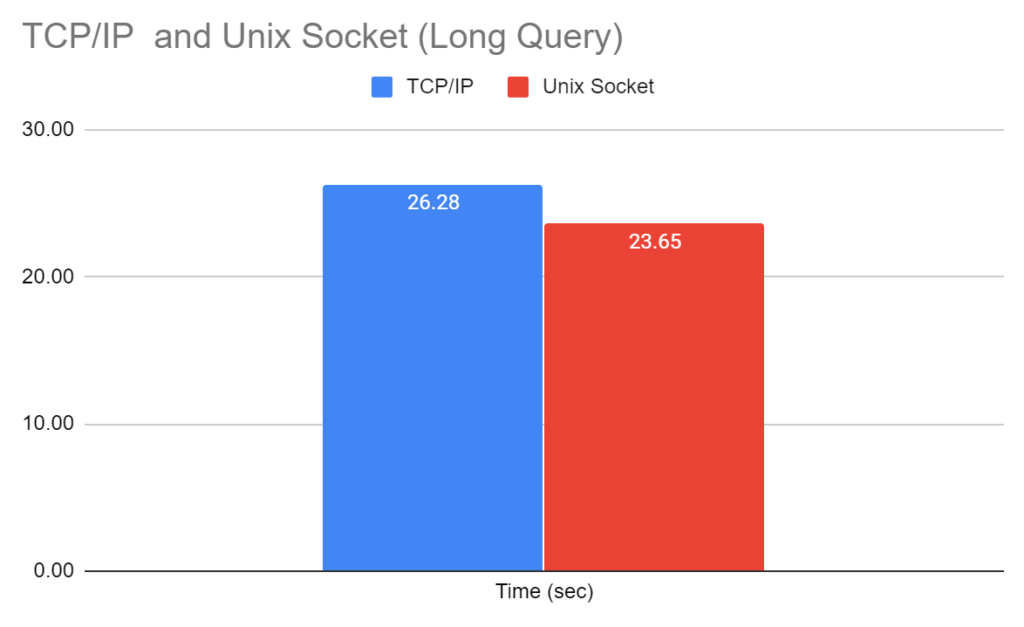

Running mysqldump on a 10-million-row sysbench table (about 2GB in size) should be dominated by the overhead of streaming data through a socket versus a TCP/IP connection, rather than the overhead of passing small packets, which we see with short queries.

    time mysqldump -u sbtest -psbtest --host=127.0.0.1 --port=3306 sbtest sbtest1 > /dev/null

We can see the operation through TCP/IP takes 11 percent longer to complete, so there is overhead for streaming, too. Note that mysqldump also does data conversion while pushing the output to /dev/null, so the actual overhead of running the query itself is higher than 11 percent.
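If you want to repeat the streaming comparison, a small timing wrapper along these lines may help. This is a sketch, not the exact harness used for the chart: the helper names are made up, and the socket path `/var/run/mysqld/mysqld.sock` is an assumption that you should replace with the path your server actually uses. The TCP arguments are the ones from the command above; `--protocol` and `--socket` are standard mysqldump connection options.

```python
import shutil
import subprocess
import sys
import time


def timed_run(argv: list) -> float:
    """Run a command, discard its stdout, and return wall-clock seconds."""
    start = time.perf_counter()
    subprocess.run(argv, stdout=subprocess.DEVNULL, check=True)
    return time.perf_counter() - start


def mysqldump_argv(transport: str,
                   socket_path: str = "/var/run/mysqld/mysqld.sock") -> list:
    """Build the mysqldump command line for 'tcp' or 'socket' transport.

    socket_path is an assumed default; check your server's configuration.
    """
    argv = ["mysqldump", "-u", "sbtest", "-psbtest"]
    if transport == "tcp":
        argv += ["--host=127.0.0.1", "--port=3306"]
    elif transport == "socket":
        argv += ["--protocol=SOCKET", f"--socket={socket_path}"]
    else:
        raise ValueError(f"unknown transport: {transport!r}")
    return argv + ["sbtest", "sbtest1"]


if __name__ == "__main__":
    if shutil.which("mysqldump") is None:
        print("mysqldump not found on PATH", file=sys.stderr)
    else:
        for transport in ("tcp", "socket"):
            try:
                seconds = timed_run(mysqldump_argv(transport))
                print(f"{transport}: {seconds:.1f}s")
            except subprocess.CalledProcessError as exc:
                print(f"{transport}: mysqldump failed ({exc.returncode})", file=sys.stderr)
```

Run each transport several times and compare medians, since a single run of a dump this size can be skewed by caching.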

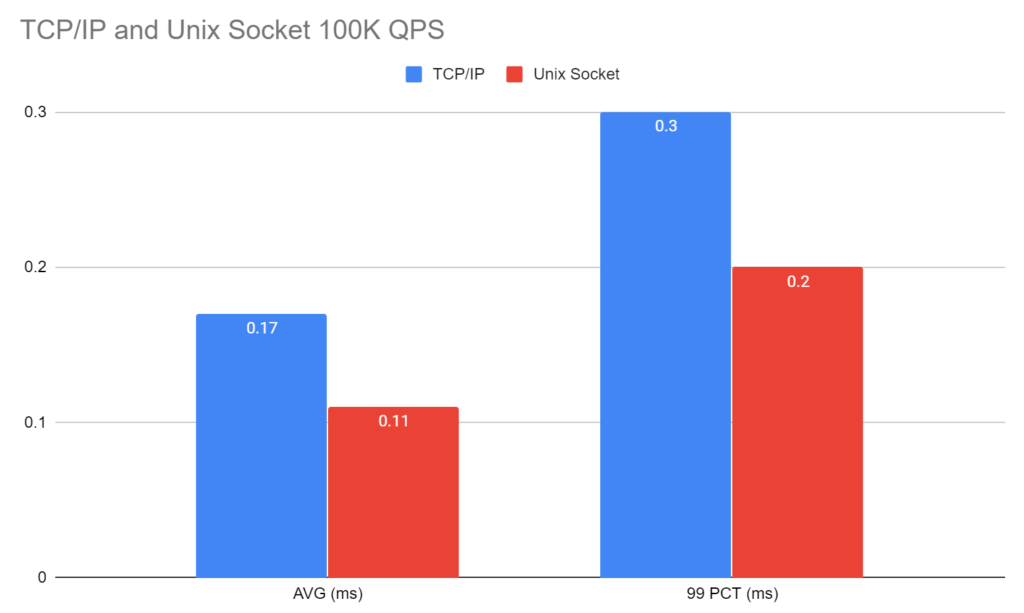

In this benchmark and the next one, instead of pushing the system to the limit, we put a certain load on it and see how it performs. Such a workload tends to be closer to many real-world applications, and it stresses the kernel differently.

With the system capable of handling some 250K queries/sec at full load, 100K QPS corresponds to light load – about 40 percent of full capacity.

In this case, we see a 54 percent increase in average latency and a 50 percent increase in 99th percentile latency, which is pretty much in line with the above throughput tests at peak load.

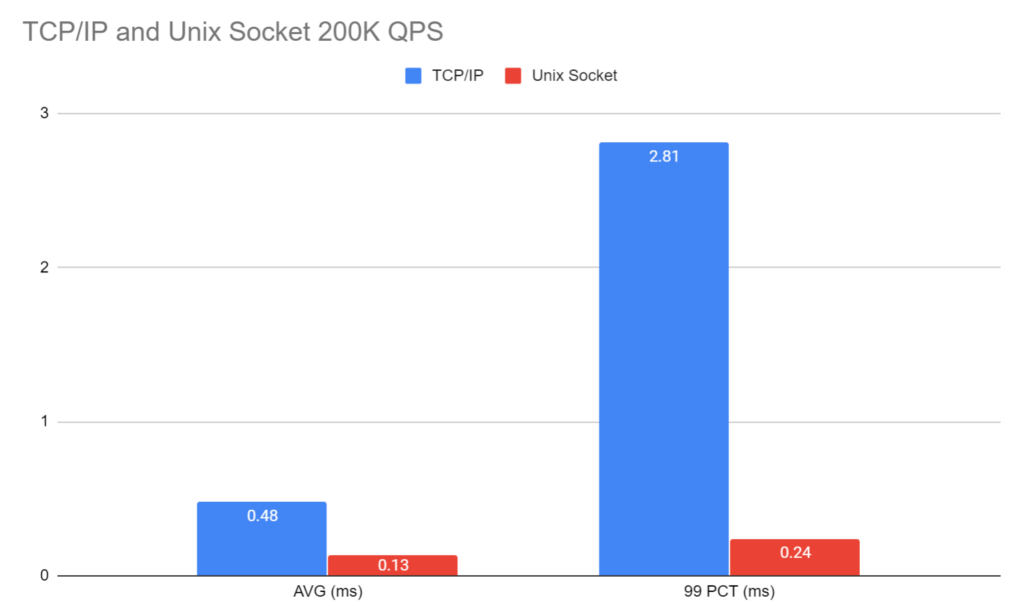

At 200K QPS, we’re driving the system much closer to capacity. With some 250K queries/sec capacity at full load, we’re running at 80 percent of full load for the TCP/IP connection. Because the system can handle some 380K queries/sec with Unix Domain Socket, the same 200K QPS corresponds to only 53 percent of full load.
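The load percentages follow directly from the offered rate divided by peak capacity. A short sketch of that arithmetic, using the approximate capacity figures quoted in the text (the function and dictionary names are just for illustration):

```python
def utilization(offered_qps: float, capacity_qps: float) -> float:
    """Fraction of peak capacity consumed by a fixed offered load."""
    return offered_qps / capacity_qps


# Approximate peak throughput figures quoted in the article.
CAPACITY_QPS = {"tcp": 250_000, "unix_socket": 380_000}

for transport, capacity in CAPACITY_QPS.items():
    for offered in (100_000, 200_000):
        share = utilization(offered, capacity)
        print(f"{transport:12s} {offered:>7,d} QPS -> {share:.0%} of capacity")
```

The same 200K QPS is a heavy load for one transport and a moderate load for the other, which is what the latency chart reflects.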

We can see for this workload that the average latency is 3.7 times better for the Unix Domain Socket and the 99th percentile latency is almost 12 times better.

These may well be unusual results, but they serve as a great illustration of the following effect: when a system is operating close to its capacity, the latency can be very sensitive to load, and reducing load through performance optimization can have outsized gains on such latency, especially worst-case-scenario latencies.
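One way to see why latency becomes so sensitive near capacity is a textbook single-server queue (M/M/1), where mean time in the system is 1 / (service rate − arrival rate). This is a toy model and an assumption on my part: a 56-thread database server is not a single-server queue, and the sketch below is not how the benchmark numbers were produced. It only shows the shape of the effect, using the approximate capacities quoted above.

```python
def mm1_latency(service_rate: float, arrival_rate: float) -> float:
    """Mean time in system for an M/M/1 queue: 1 / (mu - lambda), in seconds."""
    if arrival_rate >= service_rate:
        raise ValueError("arrival rate must be below the service rate")
    return 1.0 / (service_rate - arrival_rate)


TCP_CAPACITY = 250_000    # approximate peak QPS over TCP/IP (from the text)
UNIX_CAPACITY = 380_000   # approximate peak QPS over Unix Domain Socket

for offered in (100_000, 200_000):
    tcp = mm1_latency(TCP_CAPACITY, offered)
    unix = mm1_latency(UNIX_CAPACITY, offered)
    # Ratio simplifies to (UNIX_CAPACITY - offered) / (TCP_CAPACITY - offered).
    print(f"{offered:,d} QPS: modelled TCP latency is {tcp / unix:.2f}x the socket latency")
```

In this model the gap between the two transports roughly doubles when the offered load goes from 100K to 200K QPS, even though the capacities themselves have not changed. Tail latencies are not captured by this mean-value formula at all.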

If you want to run the test on your system, raw results and sysbench command line for runs are in this public Google spreadsheet.

As Unix Domain Sockets are much simpler and tuned for local process communication, you would expect them to perform better than TCP/IP on the loopback interface. Indeed they perform significantly better! So if you have a choice, use Unix Domain Sockets to connect to your local MySQL System!

Hi Peter,

Thank you for the article providing quantitative measurements of how much faster a Unix socket connection is than a TCP/IP connection.

However, it was not clear to me from your article how to choose between those two connection types when you make a connection to the MySQL server.

You wrote that “…use TCP/IP protocol to connect to local address – “localhost” or 127.0.0.1 – or use Unix Domain Socket.”

From that statement it looks like by using “localhost” or 127.0.0.1 as the address you get a TCP/IP connection.

However, I always thought that if you use the localhost parameter you get a Unix socket connection, but if you use 127.0.0.1 you get a TCP/IP connection.

For example, on my 5.6 MySQL server:

This is socket connection:

    [root@ODSDB01 mysql]# mysql -u root -pmysql11 -h localhost
    . . . . . . . . . . . . . . . . . . . . . . . . . . . .
    mysql> \s
    --------------
    mysql Ver 14.14 Distrib 5.6.44, for linux-glibc2.12 (x86_64) using EditLine wrapper
    . . . . . . . . . . . . . . . . . . . . . . . . . . . .
    Server version: 5.6.44-enterprise-commercial-advanced MySQL Enterprise Server - Advanced Edition (Commercial)
    Protocol version: 10
    Connection: Localhost via UNIX socket
    Server characterset: utf8mb4
    . . . . . . . . . . . . . . . . . . . . . . . . . . . .
    --------------
    mysql> \s

This is TCP/IP connection:

    [root@ODSDB01 mysql]# mysql -u root -pmysql11 -h 127.0.0.1
    . . . . . . . . . . . . . . . . . . . . . . . . . . . .
    mysql> \s
    --------------
    mysql Ver 14.14 Distrib 5.6.44, for linux-glibc2.12 (x86_64) using EditLine wrapper
    . . . . . . . . . . . . . . . . . . . . . . . . . . . .
    Server version: 5.6.44-enterprise-commercial-advanced MySQL Enterprise Server - Advanced Edition (Commercial)
    Protocol version: 10
    Connection: 127.0.0.1 via TCP/IP
    Server characterset: utf8mb4
    . . . . . . . . . . . . . . . . . . . . . . . . . . . .
    --------------
    mysql>

Am I correct here?

Can you test using the pool of threads plugin? I am curious how much of the TCP/IP overhead is connection negotiation, since the streaming test was a lot better than the OLTP test.

You can ignore that. Of course the client still has to negotiate each thread regardless.

Hi Jacob,

How to choose which connection type to use depends on the program or programming language. Typically they have configuration options that allow using a socket instead of TCP/IP.

The MySQL command-line clients have the odd behavior that localhost != 127.0.0.1: if you use “localhost”, the Unix socket is chosen by default.
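As an illustration of what "configuration options" can look like in application code, here is a minimal sketch in Python that prefers the Unix socket when the socket file exists and falls back to TCP otherwise. The helper name and the default socket path are hypothetical. The returned keys (`unix_socket`, `host`, `port`) match the keyword arguments accepted by PyMySQL's `connect()`; other drivers may use different names, so check your driver's documentation.

```python
import os
import stat


def mysql_connect_kwargs(socket_path: str = "/var/run/mysqld/mysqld.sock",
                         host: str = "127.0.0.1",
                         port: int = 3306) -> dict:
    """Return connection keyword arguments, preferring a local Unix socket.

    socket_path is an assumed default; use the path your server is configured with.
    """
    try:
        if stat.S_ISSOCK(os.stat(socket_path).st_mode):
            return {"unix_socket": socket_path}
    except OSError:
        pass  # Socket file is missing or unreadable: fall back to TCP.
    return {"host": host, "port": port}


# Usage with PyMySQL (assumed driver, not imported here so the sketch stays stdlib-only):
#   import pymysql
#   conn = pymysql.connect(user="sbtest", password="sbtest",
#                          database="sbtest", **mysql_connect_kwargs())
print(mysql_connect_kwargs())
```

Checking for the socket file only tells you that a path exists, not that a server is listening on it, so the connection attempt itself can still fail.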

nice article, but you don’t provide a reference how to set up mysql server to provide access through a unix domain socket. and searching only returns documentation on connector/j. five minutes of not finding it and i’m wasting my time.

not really much point in making a recommendation without providing links to the docs describing how to implement it on the server, is there?

https://dev.mysql.com/doc/connector-j/8.0/en/connector-j-unix-socket.html

You use the --socket option. By default the socket is created as /tmp/mysql.sock

Connector/J (JDBC) is pretty much the only one on the “harder side”: early versions of it did not implement Unix Domain Socket support. But it is available now, as Justin posted.

How to tell MySQL client to connect to the server using TCP or Unix socket:

The straightforward way is to use the --protocol option:

https://dev.mysql.com/doc/refman/8.0/en/connection-options.html#option_general_protocol

    --protocol={TCP|SOCKET|PIPE|MEMORY}

    mysql --host=localhost --protocol=TCP

provides a TCP connection

    mysql --host=localhost --protocol=SOCKET

provides a Unix socket connection

    mysql --host=127.0.0.1 --protocol=SOCKET
    ERROR 2047 (HY000): Wrong or unknown protocol

Nice catch. The SOCKET protocol is only available when you use “localhost”. 127.0.0.1 is an IP address, so it implies the protocol is TCP/IP.
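The rules reported in this thread can be summarised as a small decision function. This is a simplified model of the behavior described above, not the client's actual implementation: the function name is made up, and the Windows-only PIPE and MEMORY protocols are deliberately left out.

```python
from typing import Optional


def resolve_transport(host: str = "localhost", protocol: Optional[str] = None) -> str:
    """Model which transport the mysql client picks on Unix, per the thread above."""
    proto = protocol.upper() if protocol else None
    if proto not in (None, "TCP", "SOCKET"):
        raise ValueError(f"protocol not covered by this sketch: {protocol!r}")
    if proto == "TCP":
        return "tcp"                      # explicit TCP works for any host
    if proto == "SOCKET":
        if host != "localhost":
            # Mirrors the ERROR 2047 reported for --host=127.0.0.1 --protocol=SOCKET
            raise ValueError("Wrong or unknown protocol")
        return "socket"
    # No explicit protocol: "localhost" means Unix socket, anything else means TCP.
    return "socket" if host == "localhost" else "tcp"


for host, proto in [("localhost", None), ("127.0.0.1", None),
                    ("localhost", "TCP"), ("localhost", "SOCKET")]:
    print(f"host={host!r:12} protocol={proto!r:9} -> {resolve_transport(host, proto)}")
```

The practical takeaway is that the host name alone does not tell you which transport you got. The `Connection:` line in the status output shown earlier in the comments is the reliable check.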

Ok, I’m convinced, and currently changing all applications on my server to use Unix domain sockets instead. Do you recommend any special sysctl settings? I’ve seen a few elsewhere (to deal with increased filesystem I/O, as opposed to network traffic) and I wondered if you have tweaked any such settings and saw any difference in performance.

Interestingly, when reading about the nginx-PHP-FPM (FastCGI for PHP) connection, the recommendation goes in the opposite way: although Unix domain sockets apparently also give a ‘small gain’ in performance, it sort of gets ‘lost’ because this combination tends to be unable to deal with as many open connections…