Complexity:Medium

Potential Impact: High

A poorly configured MySQL Server can cause severe issues, especially under high load during a traffic spike, yet getting the basics right is not that hard. While MySQL Server has more than 400 variables you can tune, you rarely need to change more than 10-20 of them to get 95% of the possible performance for your workload.

This blog post covers the most important basics.

Complexity: Medium

Potential Impact: Medium

Everything being equal, the more data you have the slower the database will operate. Getting rid of (or archiving) data you do not need to have available online is a great way to improve performance.

In many cases, you find applications keep various logs in the database going back many years, where they have little use beyond a few weeks or few months.

Pt-archiver from Percona Toolkit is a great tool to archive old data.

Note: Although after the purge is completed you will have leaner, faster, database archiving, the process itself takes additional resources and is not something you should do while your database is already overloaded.

Complexity: Medium

Potential Impact: Medium

When things were quiet, you can get by without maintaining your database. However, as such, database statistics may be outdated and your tables may be fragmented and otherwise not in the most optimal state.

Run OPTIMIZE TABLE on your tables to re-build them to become more efficient and to update stats.

To run OPTIMIZE for all tables you can run mysqlcheck –optimize -A.

Keep in mind, optimizing is likely to impact your system even more than purging old data so you may not want to do it during high load. A good approach may be to remove your replicas (slaves) from serving the traffic and running the process on them one by one when promoting one of those slaves to a master.

Complexity: Medium

Potential Impact: Medium

Background jobs such as Backup, Maintenance, Report Generation, and Large Data Loads are often not very well optimized — they can be run in a slower period where the MySQL Server can handle the additional load. During traffic spikes, they may be causing database overload and downtime.

Another problem with background jobs running during traffic spike events is overlapping or snowballing. If your background job normally runs 15 minutes and you scheduled two of them at 2 AM and 3 AM, only one of them usually runs at a time. However, due to the additional load, it now may take two hours to run, and you could have several background jobs running at the same time, causing additional load and possible data corruption.

Check your background jobs and ask the following questions:

Complexity: High

Potential Impact: High

Some applications scale very well with hardware scaling, others not so much. The difference typically is whenever applications rely on “hotspots” — data that needs to be updated so frequently it becomes a bottleneck. For example, if you create a single counter in the database where every single transaction will need to update it, it would not scale well.

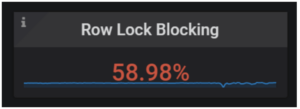

There are many different types of hotspots, and some of them are hard to find and diagnose. The most common one is similar to described above and shows itself as high row-level lock waits (and high deadlock rate).

Within Percona Monitoring and Management you can check the MySQL Innodb Details dashboard to see what portion of time overall is spent waiting on row-level locks:

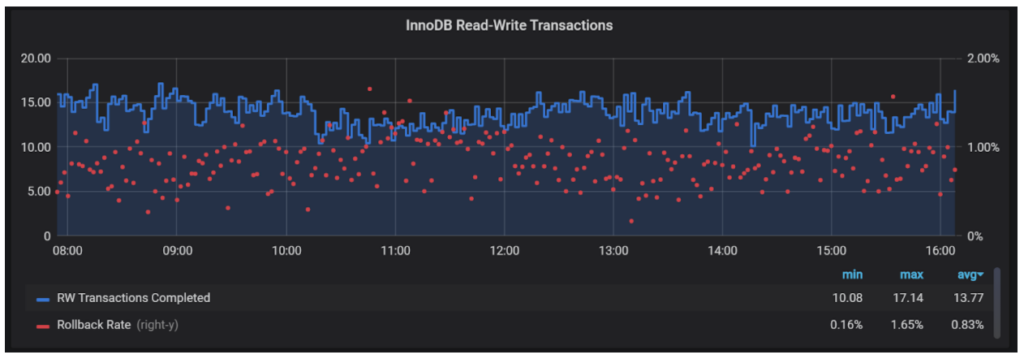

Or see the rollback rates:

Note that different applications could have different normal values for these if you have seen them outside of the norm during your high traffic event.

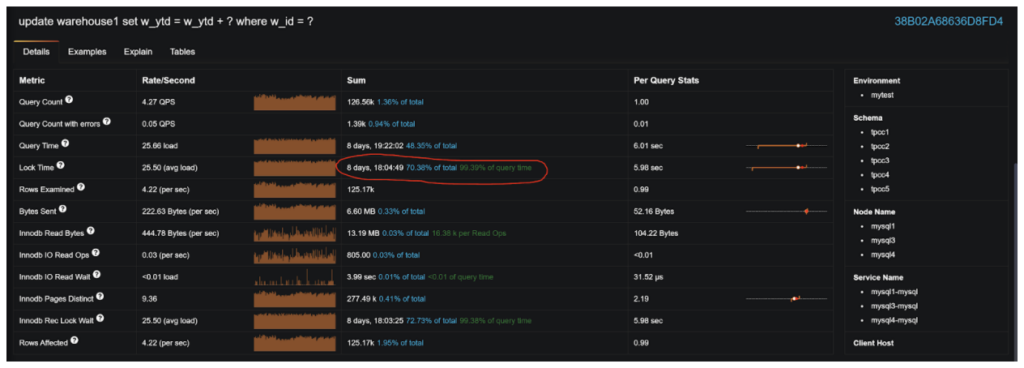

You can also examine what specific queries are having long row-level lock waits:

Reducing hotspots may be as easy as adding a better index, or it could be more difficult, requiring application re-engineering. Regardless, I included it here because if you have designed an application with very bad data hotspots, easier optimization techniques mentioned may not work for you.

Complexity: Medium

Potential Impact: Medium

While configuring MySQL Server it is extremely important to use the proper settings on your application server-side. You want to make sure you’re using persistent connections and not re-connecting for every tiny transaction, especially if you’re using TLS/SSL for database connections. If you’re using a connection pool, make sure it is configured correctly, especially if you’re not using ProxySQL or Threadpool. Specific performance optimization recommendations will vary depending on the programming language, ORM framework, or connection pool used — go Google for them!

Summary

This is quite a list of recommendations, and indeed there is a lot of things you can do during traffic spike events to bring things under control. The good news is you will not need to follow every one of these suggestions to get performance gains and ultimately delight your customers with fantastic application performance (or at least make your development team happy when the database is not the problem). Look at these recommendations as a menu — see what is easiest for you to apply in your environment and what is likely to provide the biggest gain, and use them to guide your actions!