The common wisdom in database performance management is that a “cold” database server performs poorly. Then, as it “warms up”, performance improves until you reach a completely warmed-up state with peak database performance. In other words, to get peak performance from MySQL you need to wait for the database to warm up.

This thinking comes from the point of view of database cache warmup. Indeed, from the cache standpoint, you start with an empty cache, and over time the cache fills with data. Moreover, the longer the database runs, the more statistics about data access patterns it has, and the better it can manage the cache contents.

Over recent years, with the rise of SSDs, cache warmup has become less of an issue. High-performance NVMe storage can read at more than 1GB/sec, meaning you can warm up a 100GB database cache in less than 2 minutes. Also, SSD I/O latency tends to be quite good, so you’re not paying as high a penalty for a higher miss rate during the warm-up stage.
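The arithmetic behind that estimate can be written out as a minimal sketch. The function name `warmup_seconds` is made up for illustration, and the calculation assumes the storage sustains its full read rate for the whole warmup, which a real workload with random reads may not achieve, so treat the result as a lower bound.

```python
def warmup_seconds(cache_gb: float, read_gb_per_sec: float) -> float:
    """Lower bound on the time needed to fill a cache of cache_gb
    when storage sustains read_gb_per_sec (an idealized assumption)."""
    if read_gb_per_sec <= 0:
        raise ValueError("read rate must be positive")
    return cache_gb / read_gb_per_sec


# Figures from the text: 100GB cache, 1GB/sec reads.
secs = warmup_seconds(100, 1.0)
print(f"{secs:.0f} s (~{secs / 60:.1f} min)")
```

With the figures from the text this comes to 100 seconds, which is where the "less than 2 minutes" comes from.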

It is not all so rosy with database performance over time, though. Databases tend to delay work when possible, but there is only so much delaying you can do. When the database can’t delay work any longer, performance tends to be negatively impacted. Here are some examples of delaying work:

In all these cases, database performance can be a lot better almost immediately after start than when it is completely “warmed up”.

Let’s illustrate this with a little experiment, running Sysbench against MySQL with the InnoDB storage engine for 15 minutes:

```
sysbench --db-driver=mysql --threads=200 --rand-type=uniform --report-interval=10 --percentile=99 --time=900 --mysql-user=root --mysql-password= /usr/share/sysbench/oltp_update_index.lua --table_size=100000000 run
```

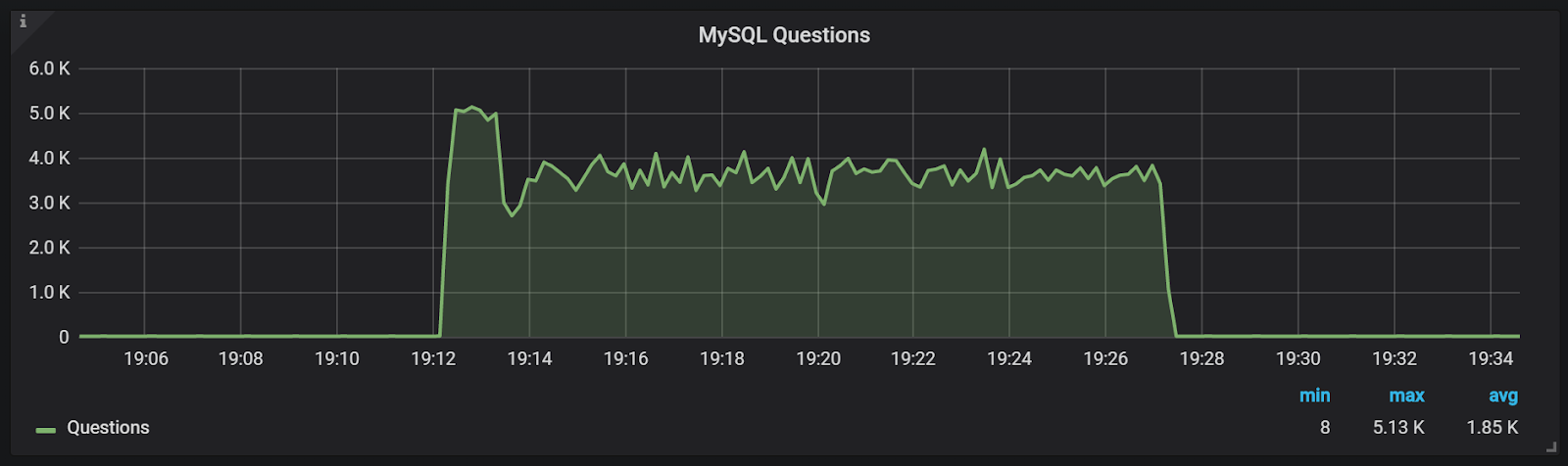

Let’s look in detail at what happens during the run using graphs from Percona Monitoring and Management.

As you can see, the number of updates/sec we’re doing actually gets worse (and more uneven) after the first 3 minutes, while the jump to peak performance is almost immediate.

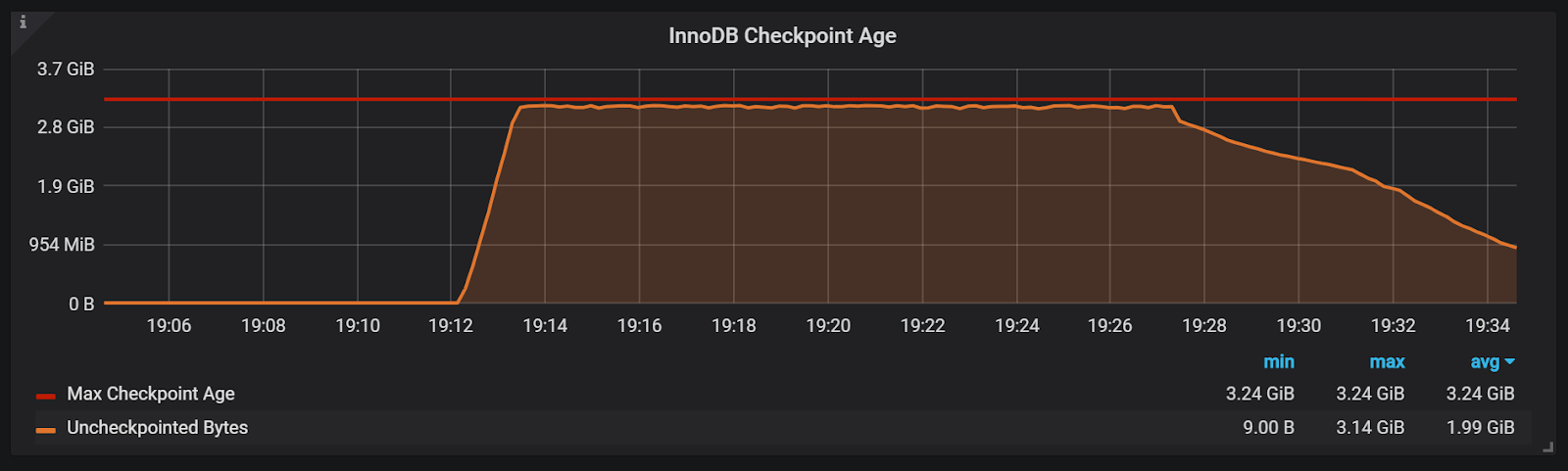
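The same early-versus-late comparison can also be made from the sysbench interval report itself. The sketch below is one way to do it, under assumptions: it expects report lines in the sysbench 1.0 style (`[ 10s ] thds: 200 tps: ... qps: ...`), which may differ in other versions, and the function names and the 180-second cutoff (the "first 3 minutes" above) are chosen for illustration only.

```python
import re
from statistics import mean, pstdev

# Assumed sysbench 1.0 interval line, e.g.:
# [ 10s ] thds: 200 tps: <n> qps: <n> (r/w/o: ...) lat (ms,99%): <n> ...
REPORT_RE = re.compile(r"\[\s*(\d+)s\s*\]\s*thds:\s*\d+\s+tps:\s*([\d.]+)")


def parse_report(text):
    """Return a list of (elapsed_seconds, tps) pairs found in sysbench output."""
    return [(int(m.group(1)), float(m.group(2))) for m in REPORT_RE.finditer(text)]


def compare_phases(samples, cutoff_s=180):
    """Compare throughput before and after cutoff_s seconds into the run."""
    early = [tps for t, tps in samples if t <= cutoff_s]
    late = [tps for t, tps in samples if t > cutoff_s]
    if not early or not late:
        raise ValueError("need samples on both sides of the cutoff")
    return {
        "early_mean": mean(early),
        "late_mean": mean(late),
        # Population standard deviation as a rough measure of unevenness.
        "late_stdev": pstdev(late),
    }

# Usage (hypothetical file name): save the sysbench output to sysbench.log, then
#   stats = compare_phases(parse_report(open("sysbench.log").read()))
```

A lower `late_mean` together with a noticeable `late_stdev` would correspond to the "worse and more uneven" behavior visible in the graph.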

The log space usage explains some of this: in the first few minutes, we did not need to flush as aggressively as we had to later.

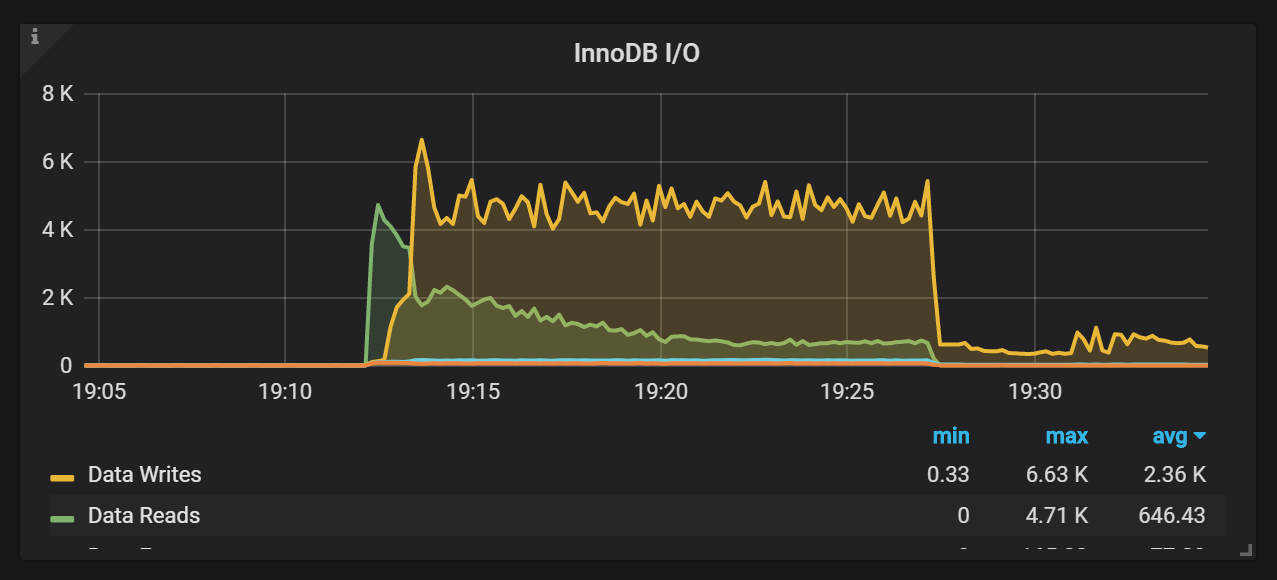
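If you do not have such a graph at hand, log space usage can be approximated from the LOG section of `SHOW ENGINE INNODB STATUS`. The following is a rough sketch, assuming the output contains `Log sequence number` and `Last checkpoint at` lines, each followed by a single integer. The helper names are hypothetical, and the redo log capacity is passed in by the caller because how it is configured depends on the MySQL version.

```python
import re


def checkpoint_age(status_text: str) -> int:
    """Bytes of redo log written since the last checkpoint
    (current LSN minus last checkpoint LSN)."""
    lsn = re.search(r"Log sequence number\s+(\d+)", status_text)
    ckpt = re.search(r"Last checkpoint at\s+(\d+)", status_text)
    if not lsn or not ckpt:
        raise ValueError("LOG section fields not found in status text")
    return int(lsn.group(1)) - int(ckpt.group(1))


def log_space_used_fraction(status_text: str, redo_capacity_bytes: int) -> float:
    """Checkpoint age as a fraction of the total redo log capacity.
    redo_capacity_bytes is supplied by the caller (an assumption of this sketch)."""
    if redo_capacity_bytes <= 0:
        raise ValueError("redo capacity must be positive")
    return checkpoint_age(status_text) / redo_capacity_bytes
```

Sampling this fraction periodically during a run should show it climbing during the first minutes, with more aggressive flushing expected as it gets close to the available log space.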

On the InnoDB I/O graph we can see a couple of interesting things. First, you can see how quickly warm up happens: in 2 minutes the I/O is already at half of its peak. You can also see the explanation for the little performance dip after the initial high performance (around 19:13): this is where we got close to using all log space, so active flushing was required while, at the same time, a lot of I/O was still needed for cache warmup.

“Reaching Steady State” is another term commonly used to describe the stage after warm up completes. Note, though, that such a steady state is not guaranteed to be steady at all. In fact, the most typical steady state is unsteady. For example, you can see in this blog post that both InnoDB and MyRocks show quite a lot of variance.

While the term “database warm up” may imply that performance after warm up will be better, that is often not the case. “Reaching Steady State” is a better term, as long as you understand that “steady” does not mean uniform performance.
