Following my previous benchmarks of SATA SSD cards, I got my hands on an Intel SSD 520 240GB. In this post I show the raw IO performance results for this card.

I described the benchmark methodology in previous posts, so let me jump directly to the results.

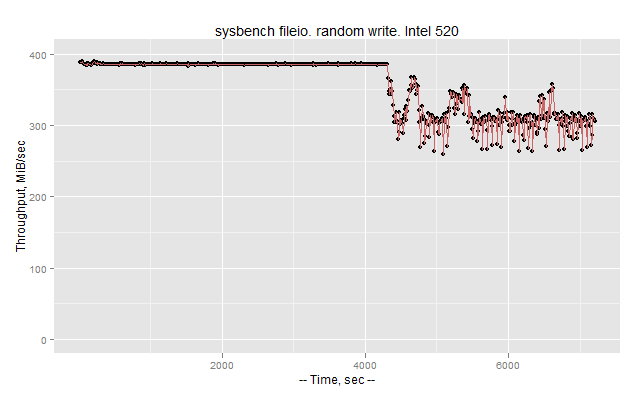

The first case is random writes with asynchronous IO in 8 threads; the test is run right after a secure erase operation on the card.

The card holds a stable level of 380 MiB/sec, but after around 4000 sec, as the garbage collector kicks in, performance drops to around 300 MiB/sec with some instability, which I examine in later charts.

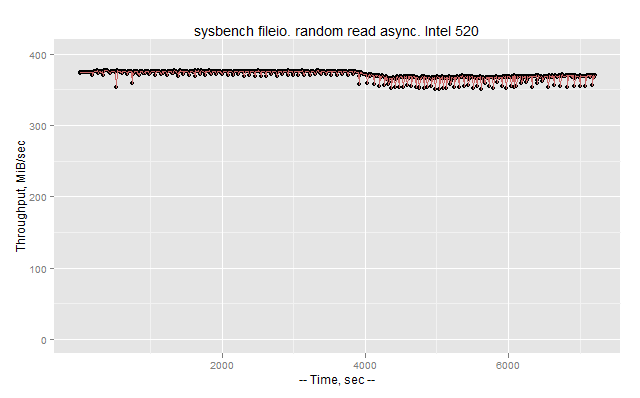

Now random reads, still asynchronous.

This gives an almost stable 370 MiB/sec throughput, with some strange small periodic drops.

To better understand response time ranges, we need to switch to synchronous IO and vary the number of threads.

We still see small hiccups in throughput and response times, even for a small number of threads.

For 8 threads the 95th percentile response time is 0.69ms.

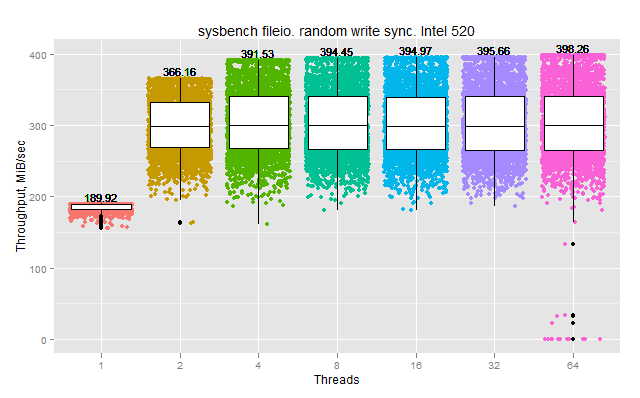

Now let me get back to the random write case. I will try synchronous IO with a varying number of threads and with measurements every 1 sec to see how bad the drops are.

So performance is more or less stable only for 1 thread. For 2 or more, the throughput varies a lot from second to second. I drew boxplots, which show the 25th, 50th and 75th percentiles. There is no growth in throughput beyond 2 threads, and the result averages 300 MiB/sec.
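As a rough illustration of what the boxplots summarize, here is a minimal Python sketch that computes the 25th, 50th and 75th percentiles from per-second throughput readings. The function names and the sample values are invented for illustration; they are not the measurements from this benchmark, and the exact percentile method behind the charts is an assumption.

```python
import statistics


def throughput_quartiles(samples):
    """Return the 25th, 50th and 75th percentiles of throughput samples."""
    # method="inclusive" treats the samples as the whole population,
    # so the quartiles stay within the observed min and max.
    p25, p50, p75 = statistics.quantiles(samples, n=4, method="inclusive")
    return p25, p50, p75


def interquartile_range(samples):
    """Height of the box in a boxplot: a simple measure of variance."""
    p25, _, p75 = throughput_quartiles(samples)
    return p75 - p25


# Invented per-second readings in MiB/sec, NOT real benchmark output.
hypothetical_runs = {
    "steady": [148, 150, 151, 149, 152],
    "bursty": [200, 250, 300, 350, 400],
}

for name, samples in hypothetical_runs.items():
    p25, p50, p75 = throughput_quartiles(samples)
    print(f"{name}: p25={p25:.0f} p50={p50:.0f} p75={p75:.0f} "
          f"IQR={interquartile_range(samples):.0f} MiB/sec")
```

A wide interquartile range at a similar median is what "throughput varies a lot from second to second" looks like in numbers.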

I am still interested in asynchronous IO, as MySQL 5.5 uses async IO for writes. Maybe 8 threads in the first graph is too many and we should go with 1 thread?

So even with 1 async write thread the throughput jumps a lot, in the range of 200 to 400 MiB/sec.

In conclusion, I should say that the 300 MiB/sec level for random reads and writes is a very decent result for a SATA card. I think with this performance SATA is getting closer to the level of PCIe cards. Of course PCIe still provides better numbers, but the question is how much of it MySQL can use. In his keynote, Mark Callaghan mentioned that the Fusion-io cards they use are highly underutilized.

Given the performance variance we see, it is a good question how it affects MySQL performance, and I am going to run some MySQL workloads on these cards to understand it better.

If you are interested in more on SSD and MySQL questions, I will be giving a webinar “MySQL and SSD” on May 9. It will be the same as my talk at Percona Live MySQL Conference 2012; if you did not attend my talk, you are welcome to join the webinar.

Can you benchmark actual MySQL performance on the SSD? Not sure how well sysbench results translate into actual MySQL results.

Curious .. how are people deploying these SSDs on DB servers? Are they behind a regular raid controller? Or using software RAID? Or simply straight up direct /dev/sdx?

AD

AD,

Most usage is behind a regular raid controller.

LSI 9211, LSI 9260, Dell Perc H800 are popular ones for this case.

Vadim,

I see you measured the response time distribution for reads in sync mode, but only throughput every 10 seconds for random writes. Any reason for that?

It seems that the Intel 520 doesn’t have any power loss protection (capacitors) like the Intel 320. The Intel website states: “Enhanced Power Loss Data Protection: No”. This is a show-stopper for me.

Vojtech,

If that is the case, that is a really stupid step by Intel.

On this page

http://www.intel.com/content/www/us/en/solid-state-drives/solid-state-drives-520-series.html

I see “End-to-End Data Protection”, not sure what that means.

And I just found out that the Intel 520 uses compression.

http://www.intel.com/content/www/us/en/solid-state-drives/ssd-520-tech-brief.html

That may explain why we see such variance in throughput.

Vadim, “End-to-End Data Protection” means it uses checksums when retrieving the data from flash chips.

Compression is used because in the 520 Intel uses the SandForce controller and not their own controller as in the 320. Good point: that fact may skew the results!

Were the sysbench tests run with the same options as those in the Samsung 830 post? If so, Intel 520 seems to behave much better. Ref: http://www.mysqlperformanceblog.com/2012/04/25/testing-samsung-ssd-sata-256gb-830-not-all-ssd-created-equal/

Vadim, did you test this SSD in RAID10, and if yes, how do you set up over-provisioning (20% recommended by Intel) in RAID10? Will it give more performance in an SQL environment? I saw an interesting opinion here: http://thessdreview.com/Forums/ssd-optimization-guide/1824-post19294.htm#post19294

“I would be curious to see what data compressibility StorageReview used in their test and how the results would have changed with different levels of data compressibility. SandForce not only uses compression to improve performance of compressible data, but to also increase the spare area to improve wear-leveling. So if they used highly compressible data in their IOMeter tests, I would expect totally different results to if they used 50% compressible (typical to file servers), 80% compressible (typical for HTML, documents or databases) or incompressible data (Video, Audio & photos).

For example, if a SandForce based SSD had 120GB of user capacity and filled to capacity with data that the SandForce processor could compress by 80%, then in theory only 24GB of the flash cells are filled with the rest available. As long as similar compressible data kept being written to the SSD, even when operated at near 100% user capacity, then it would continuously have an additional ~96GB of spare area for over provisioning, much like a non-SandForce based SSD with a single 24GB partition. An SSD that does not take advantage of compression with this same data would just have the normal spare area to work with, i.e. a tiny fraction of what the SandForce based SSD has. Of course, if the SandForce SSD was used to store photos in a photography studio and kept near full capacity, then it would be forced to rely on its limited spare area and thus wear much more quickly.”
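The arithmetic in the quoted example can be checked with a minimal Python sketch. It assumes the simplified model from the quote (compression uniformly shrinks everything written, and all freed flash becomes spare area); the function name and parameters are hypothetical, and a real SandForce controller is certainly more complicated than this.

```python
def spare_area_estimate(user_capacity_gb, compressible_fraction):
    """Estimate flash used and extra spare area on a full drive.

    compressible_fraction is the share of the data removed by
    compression (0.8 means data shrinks by 80%). This is the simplified
    model from the quoted forum post, not a vendor formula.
    """
    if not 0.0 <= compressible_fraction <= 1.0:
        raise ValueError("compressible_fraction must be between 0 and 1")
    flash_used_gb = user_capacity_gb * (1.0 - compressible_fraction)
    extra_spare_gb = user_capacity_gb - flash_used_gb
    return flash_used_gb, extra_spare_gb


# The quoted case: 120GB of user capacity, data compressible by 80%.
used, spare = spare_area_estimate(120, 0.8)
print(f"flash used: ~{used:.0f}GB, extra spare area: ~{spare:.0f}GB")

# Incompressible data (photos, video): no extra spare area at all.
used, spare = spare_area_estimate(120, 0.0)
print(f"flash used: ~{used:.0f}GB, extra spare area: ~{spare:.0f}GB")
```

Under this model the benefit depends entirely on how compressible the written data is, which is why the compressibility of the benchmark data matters for the results.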

Could you comment on this? And how will data compression work in a RAID10 array? I would be grateful for any answer, as I have ended up rather confused.

Roman,

I have not tested SSDs in RAID configurations yet, but I am going to.

I am going to work with the biggest sizes, say 512GB+, as I think 120GB is too small for MLC to provide a decent lifetime (at least for the workloads I am looking at).