Percona Monitoring and Management (PMM) v2.12.0 comes with a lot of improvements, and one of the most talked-about is the use of VictoriaMetrics. We are doing this comparison because PMM 2.12.0 is the release in which VictoriaMetrics is integrated and replaces Prometheus as the default method of data ingestion.

The change was also motivated by the goal of improving PMM Server performance. Here we give an overview of why users who have been looking for a less resource-intensive PMM should consider moving to version 2.12.0. This post will try to address some of those concerns.

The benchmark was performed on a virtualized system, with PMM Server running on an EC2 instance with 8 cores, 32 GB of memory, and SSD storage. The observation lasted 24 hours. For clients, we set up 25 virtualized client instances on Linode, each emulating 10 nodes running MySQL with real workloads generated by the Sysbench TPC-C test.

Both PMM 2.11.1 and PMM 2.12.0 were set up in the same way, with client instances running exactly the same load, so that any difference in performance could be attributed to the PMM version. For 2.12.0, we used the default metrics mode.

The sample ingestion rate for this load was around 96.1k samples/sec, with around 8.5 billion samples received in 24 hours.
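As a rough back-of-the-envelope check of these figures, the sketch below multiplies the quoted ingestion rate by the length of the observation window and divides it across the emulated nodes. The variable names are illustrative only, and it assumes the 96.1k samples/sec figure can be treated as a constant rate, which the benchmark does not state. Under that assumption the product lands a little below the roughly 8.5 billion samples reported, which would be plausible if 96.1k is a rounded or typical value rather than the exact 24-hour mean.

```python
# Back-of-the-envelope check of the benchmark load.
# Assumption: the quoted ingestion rate is treated as constant over 24 hours.

SAMPLES_PER_SEC = 96_100          # "around 96.1k samples/sec" from the post
OBSERVATION_SECONDS = 24 * 60 * 60
CLIENT_INSTANCES = 25             # Linode client instances
NODES_PER_INSTANCE = 10           # emulated MySQL nodes per instance

total_nodes = CLIENT_INSTANCES * NODES_PER_INSTANCE
projected_samples = SAMPLES_PER_SEC * OBSERVATION_SECONDS
samples_per_node_per_sec = SAMPLES_PER_SEC / total_nodes

print(f"Monitored nodes:            {total_nodes}")
print(f"Projected samples in 24 h:  {projected_samples / 1e9:.2f} billion")
print(f"Samples per node per sec:   {samples_per_node_per_sec:.1f}")
```

The per-node figure is only an average across all emulated nodes; individual nodes may expose more or fewer metrics.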

A more detailed benchmark of Prometheus vs. VictoriaMetrics was done by the VictoriaMetrics team, and it shows how much more efficient VictoriaMetrics is and how much better performance can be achieved with it.

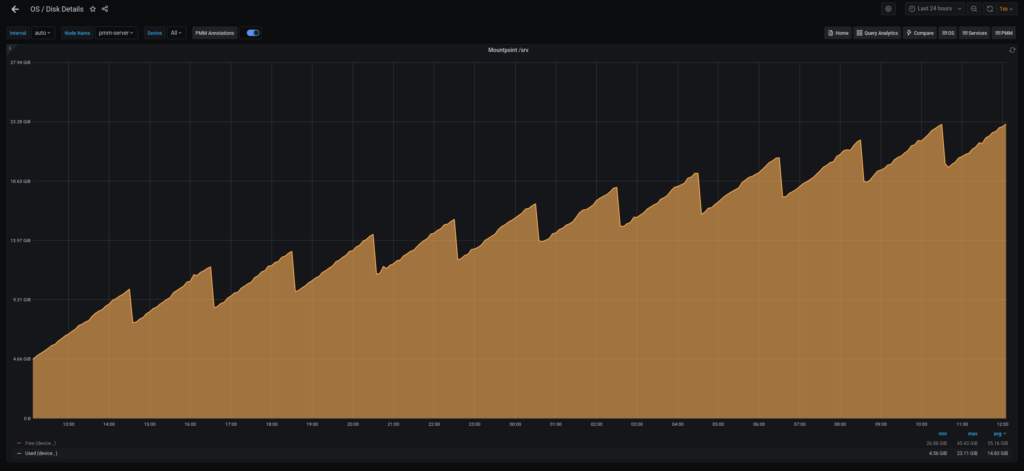

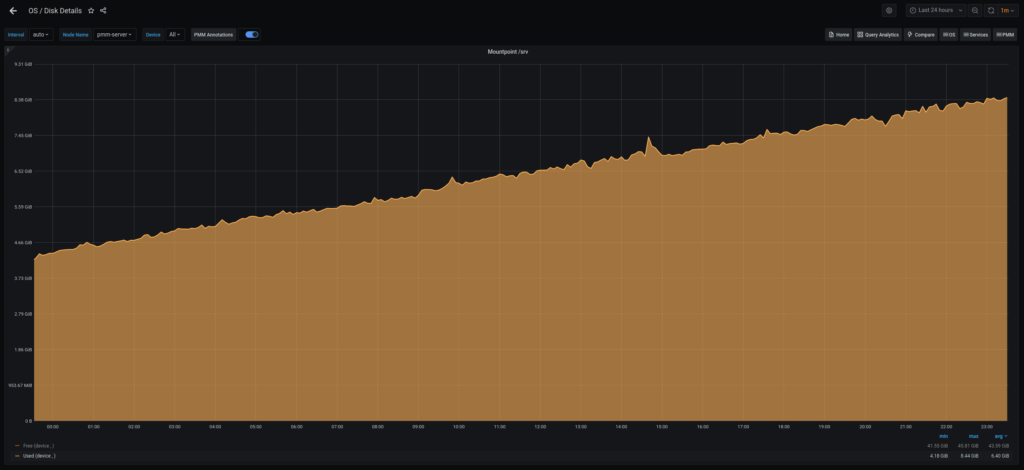

VictoriaMetrics is very efficient in its use of the host system's disk. We found that PMM 2.11.1 generates a lot of disk usage spikes, with maximum storage use reaching around 23.11 GB. For PMM 2.12.0 under the same load, the spikes are much lower and the maximum disk usage is around 8.44 GB.

In other words, PMM 2.12.0 needs about 2.7 times less disk space than PMM 2.11.1 to monitor the same number of services for the same duration.
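The 2.7x figure follows directly from the two peak values quoted above. A minimal sketch of the arithmetic, using hypothetical helper and constant names and only the peak disk usage numbers from this benchmark:

```python
# Derive the disk-space reduction from the peak disk usage reported above.
# The function and constant names are illustrative, not part of PMM.

PEAK_DISK_2_11_1_GB = 23.11   # maximum disk usage observed for PMM 2.11.1
PEAK_DISK_2_12_0_GB = 8.44    # maximum disk usage observed for PMM 2.12.0


def reduction_factor(before_gb: float, after_gb: float) -> float:
    """Return how many times smaller `after_gb` is than `before_gb`."""
    return before_gb / after_gb


def percent_saved(before_gb: float, after_gb: float) -> float:
    """Return the disk space saved, as a percentage of `before_gb`."""
    return (1 - after_gb / before_gb) * 100


factor = reduction_factor(PEAK_DISK_2_11_1_GB, PEAK_DISK_2_12_0_GB)
saved = percent_saved(PEAK_DISK_2_11_1_GB, PEAK_DISK_2_12_0_GB)

print(f"PMM 2.12.0 used {factor:.1f}x less disk at peak ({saved:.0f}% saved)")
```

Note that this compares peak usage only; average usage over the 24 hours could give a somewhat different ratio.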

Disk Usage 1: PMM 2.11.1

Disk Usage 2: PMM 2.12.0

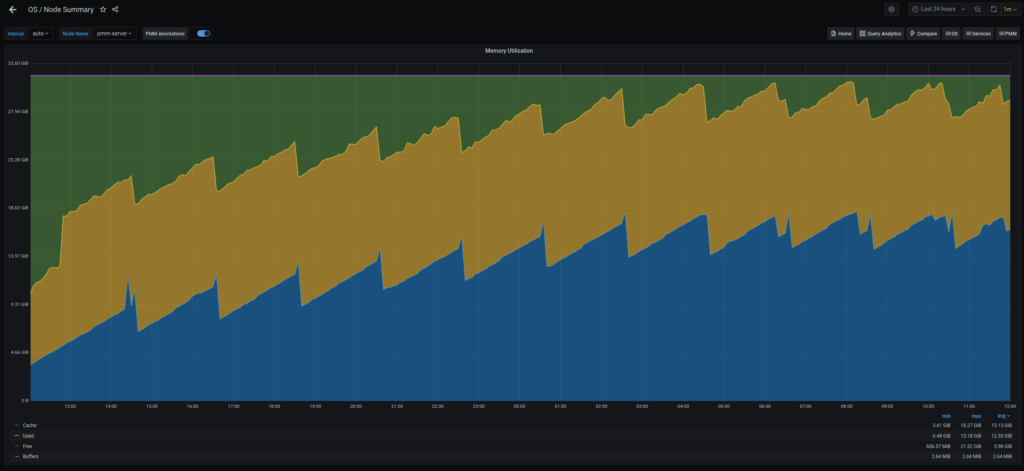

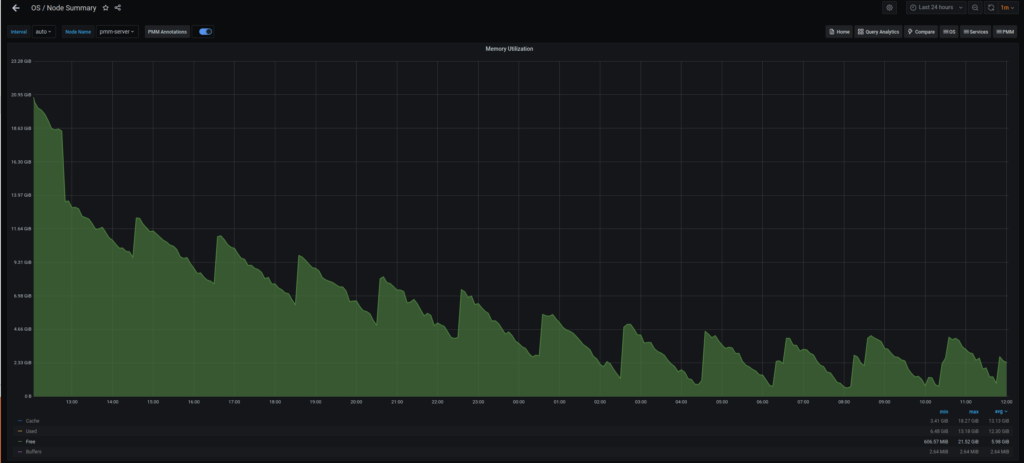

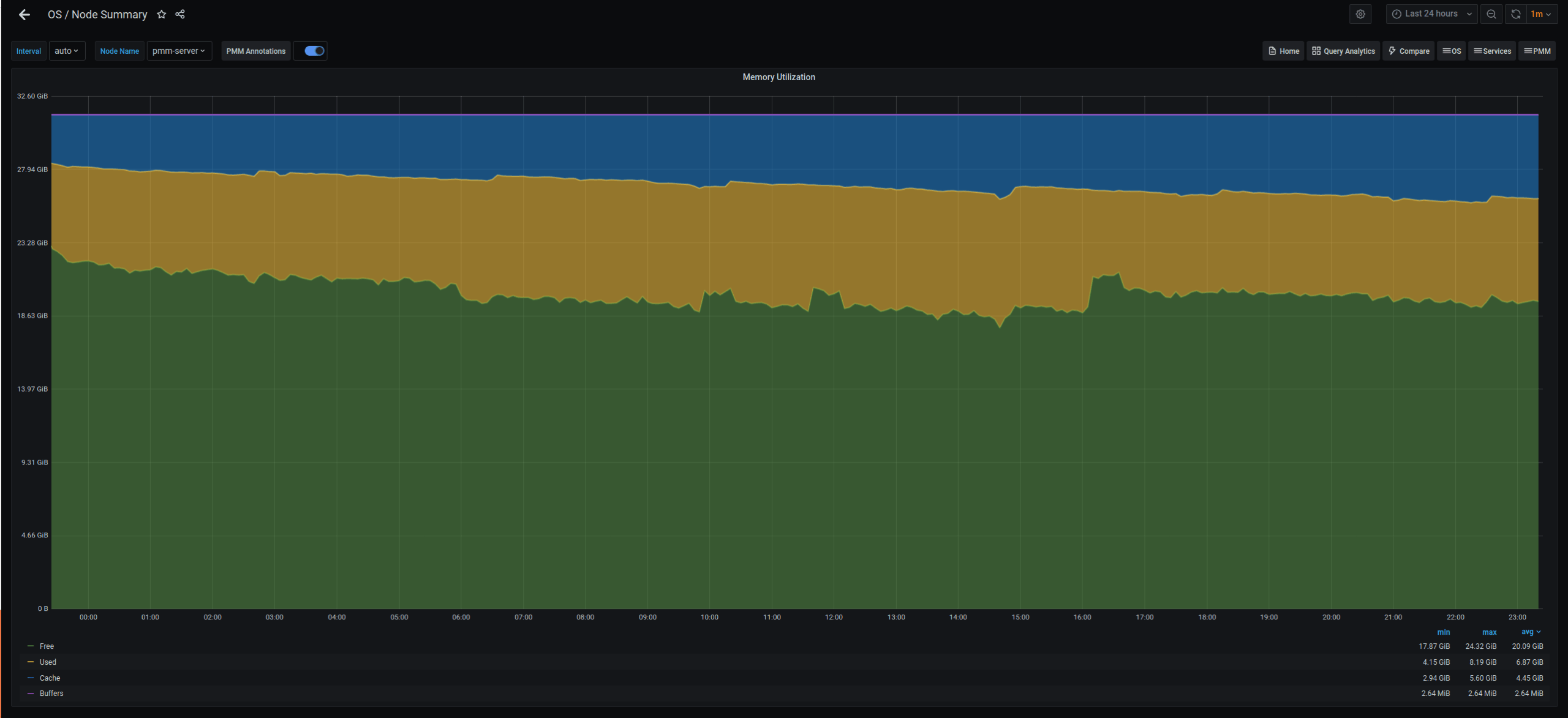

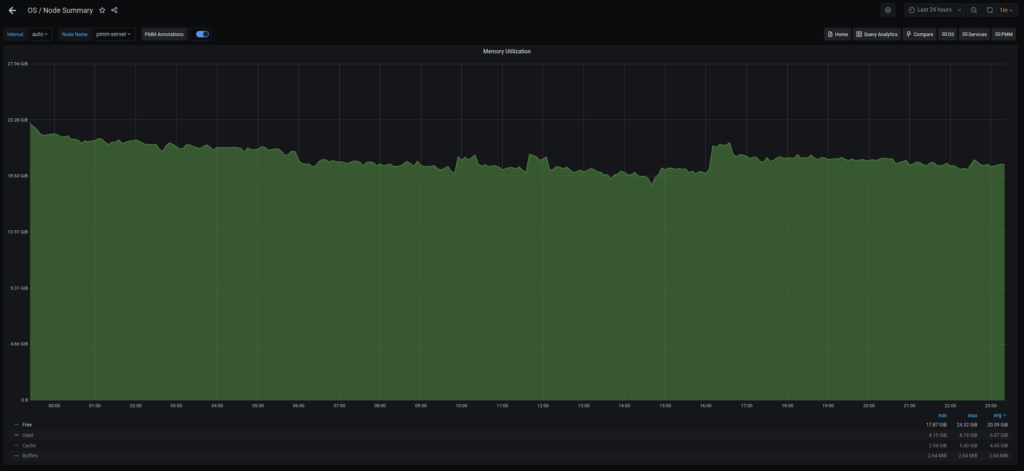

Another area in which PMM 2.12.0 performs better is memory utilization. During our testing, we found that PMM 2.11.1 used about twice as much memory to monitor the same number of services. This is a significant improvement in terms of performance.

The memory usage graphs show several spikes for PMM 2.11.1, which is not the case with PMM 2.12.0.

Memory Utilization: PMM 2.11.1

Free Memory PMM 2.11.1

Memory Utilization PMM 2.12.0

The memory utilization graph for 2.12.0 shows that more than 55% of memory remained available throughout the 24 hours of our observation, which is a significant improvement over 2.11.1.

Free Memory PMM 2.12.0

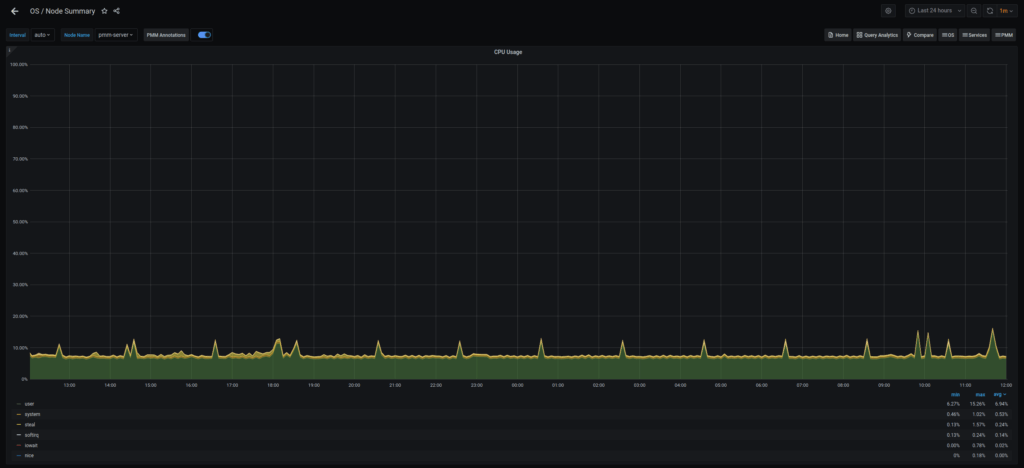

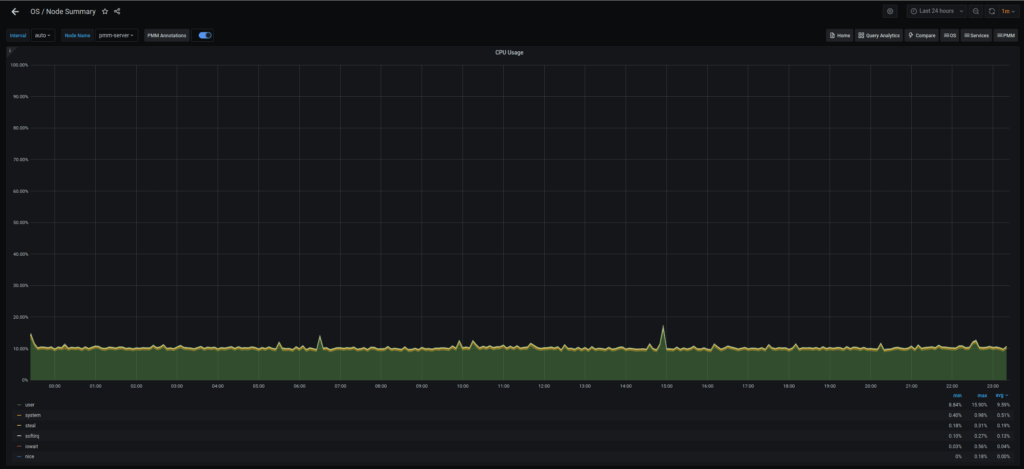

During the observation, we noticed a slight increase in CPU usage for PMM 2.12.0: the average CPU usage was about 2.6% higher than for PMM 2.11.1. The maximum CPU usage, however, did not differ significantly between the two versions.

CPU Usage: PMM 2.11.1

CPU Usage: PMM 2.12.0

The overall performance improvements are in memory utilization and disk usage. We also observed significantly lower disk I/O bandwidth, with far fewer spikes in write operations, for PMM 2.12.0. This behavior is also observed and described in the VictoriaMetrics benchmarking. CPU usage and memory are two important resource factors when planning to set up PMM Server, and based on this test we can say that PMM 2.12.0 costs roughly half as much in memory and disk resources as previously released PMM versions. This should also let current users add more instances for monitoring without having to worry about the cost of extra infrastructure.
