This post is a step-by-step guide to set up Percona XtraDB Cluster (PXC) in a virtualized test sandbox. I used Amazon EC2 micro instances, but the content here is applicable for any kind of virtualization technology (for example VirtualBox). The goal is to give step by step instructions, so the setup process is understandable and it could serve as a reference architecture.

You will need 4 virtual machines. 3 for PXC and 1 for the client, which will have HaProxy. I used CentOS 6 as the operating system, the instructions are similar for any Linux distribution.

The client node will have HaProxy installed and it will redirect requests to PXC nodes. This approach works well in real-world scenarios too. Running HaProxy on the application servers instead of having them as dedicated entities gives you benefits like you don’t need an extra network roundtrip because of a loadbalancer and scalability of PXC’s load balancing layer scales simply with application servers.

We will use Percona and EPEL repositories for software installation.

To add these follow the instructions for

Percona and EPEL to enable those.

After configuring the repositories you will be able to install software that we will use. First, install PXC on the database nodes.

|

1 |

# yum -y install Percona-XtraDB-Cluster-server Percona-XtraDB-Cluster-client percona-xtrabackup |

Install haproxy and sysbench on the client node.

|

1 |

# yum -y install haproxy sysbench |

After installing everything, let’s configure PXC first. On the first node, my.cnf should look something like this on a relatively weak machine.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 |

[mysqld] server_id=1 binlog_format=ROW log_bin=mysql-bin wsrep_cluster_address=gcomm:// wsrep_provider=/usr/lib/libgalera_smm.so datadir=/var/lib/mysql wsrep_slave_threads=2 wsrep_cluster_name=pxctest wsrep_sst_method=xtrabackup wsrep_node_name=ip-10-112-39-98 log_slave_updates innodb_locks_unsafe_for_binlog=1 innodb_autoinc_lock_mode=2 innodb_buffer_pool_size=400M innodb_log_file_size=64M |

You can start your first node now. Make sure that you only start second and third nodes when the first node is up and running (it will serve as a donor for SST).

This configuration is for the first node. For the second and third node, you need to change wsrep_cluster_address (alternatively, you can use wsrep_urls in [mysqld_safe] section), which should point to a node in the cluster which is already up, so it will join the cluster. The server_id and wsrep_node_name variables have to be different on each host, for wsrep_node_name, you can use the output of hostname command.

Based on that, for the second node, the differences in the configuration should be the following.

|

1 2 3 |

server_id=2 wsrep_cluster_address=gcomm://10.116.39.76 # replace this with the IP of your first node wsrep_node_name=ip-10-244-33-92 |

For the third node, the differences look like this.

|

1 2 3 |

server_id=3 wsrep_cluster_address=gcomm://10.116.39.76 # replace this with the IP of your first node wsrep_node_name=ip-10-194-10-179 |

For SST we use xtrabackup. This means at startup time, the new node will connect to an existing node in the cluster and it takes a backup of that node with xtrabackup and copies it to the new node with netcat. After a successful SST, you should see this in the error log.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 |

120619 13:20:17 [Note] WSREP: State transfer required: Group state: 77c9da88-b965-11e1-0800-ea53b7b12451:97 Local state: 00000000-0000-0000-0000-000000000000:-1 120619 13:20:17 [Note] WSREP: New cluster view: global state: 77c9da88-b965-11e1-0800-ea53b7b12451:97, view# 18: Primary, number of nodes: 3, my index: 0, protocol version 2 120619 13:20:17 [Warning] WSREP: Gap in state sequence. Need state transfer. 120619 13:20:19 [Note] WSREP: Running: 'wsrep_sst_xtrabackup 'joiner' '10.195.206.117' '' '/var/lib/mysql/' '/etc/my.cnf' '20758' 2>sst.err' 120619 13:20:19 [Note] WSREP: Prepared SST request: xtrabackup|10.195.206.117:4444/xtrabackup_sst 120619 13:20:19 [Note] WSREP: wsrep_notify_cmd is not defined, skipping notification. 120619 13:20:19 [Note] WSREP: Assign initial position for certification: 97, protocol version: 2 120619 13:20:19 [Warning] WSREP: Failed to prepare for incremental state transfer: Local state UUID (00000000-0000-0000-0000-000000000000) does not match group state UUID (77c9da88-b965-11e1-0800-ea53b7b12451): 1 (Operation not permitted) at galera/src/replicator_str.cpp:prepare_for_IST():439. IST will be unavailable. 120619 13:20:19 [Note] WSREP: Node 0 (ip-10-244-33-92) requested state transfer from '*any*'. Selected 1 (ip-10-112-39-98)(SYNCED) as donor. 120619 13:20:19 [Note] WSREP: Shifting PRIMARY -> JOINER (TO: 102) 120619 13:20:19 [Note] WSREP: Requesting state transfer: success, donor: 1 120619 13:20:59 [Note] WSREP: 1 (ip-10-112-39-98): State transfer to 0 (ip-10-244-33-92) complete. 120619 13:20:59 [Note] WSREP: Member 1 (ip-10-112-39-98) synced with group. 120619 13:21:17 [Note] WSREP: SST complete, seqno: 105 120619 13:21:17 [Note] Plugin 'FEDERATED' is disabled. 120619 13:21:17 InnoDB: The InnoDB memory heap is disabled 120619 13:21:17 InnoDB: Mutexes and rw_locks use GCC atomic builtins 120619 13:21:17 InnoDB: Compressed tables use zlib 1.2.3 120619 13:21:17 InnoDB: Using Linux native AIO 120619 13:21:17 InnoDB: Initializing buffer pool, size = 400.0M 120619 13:21:17 InnoDB: Completed initialization of buffer pool 120619 13:21:18 InnoDB: highest supported file format is Barracuda. 120619 13:21:18 InnoDB: Waiting for the background threads to start 120619 13:21:19 Percona XtraDB (https://www.percona.com) 1.1.8-rel25.3 started; log sequence number 246661644 120619 13:21:19 [Note] Recovering after a crash using mysql-bin 120619 13:21:19 [Note] Starting crash recovery... 120619 13:21:19 [Note] Crash recovery finished. 120619 13:21:19 [Note] Server hostname (bind-address): '(null)'; port: 3306 120619 13:21:19 [Note] - '(null)' resolves to '0.0.0.0'; 120619 13:21:19 [Note] - '(null)' resolves to '::'; 120619 13:21:19 [Note] Server socket created on IP: '0.0.0.0'. 120619 13:21:19 [Note] Event Scheduler: Loaded 0 events 120619 13:21:19 [Note] WSREP: Signalling provider to continue. 120619 13:21:19 [Note] WSREP: Received SST: 77c9da88-b965-11e1-0800-ea53b7b12451:105 120619 13:21:19 [Note] WSREP: SST received: 77c9da88-b965-11e1-0800-ea53b7b12451:105 120619 13:21:19 [Note] WSREP: 0 (ip-10-244-33-92): State transfer from 1 (ip-10-112-39-98) complete. 120619 13:21:19 [Note] WSREP: Shifting JOINER -> JOINED (TO: 105) 120619 13:21:19 [Note] /usr/sbin/mysqld: ready for connections. Version: '5.5.24-log' socket: '/var/lib/mysql/mysql.sock' port: 3306 Percona XtraDB Cluster (GPL), wsrep_23.6.r340 120619 13:21:19 [Note] WSREP: Member 0 (ip-10-244-33-92) synced with group. 120619 13:21:19 [Note] WSREP: Shifting JOINED -> SYNCED (TO: 105) 120619 13:21:20 [Note] WSREP: Synchronized with group, ready for connections |

For debugging information about the SST, you can check the sst.err file and the error log too.

After the SSTs are done, you should check if you have a 3 node cluster.

|

1 2 3 4 5 6 7 |

mysql> show global status like 'wsrep_cluster_size'; +--------------------+-------+ | Variable_name | Value | +--------------------+-------+ | wsrep_cluster_size | 3 | +--------------------+-------+ 1 row in set (0.00 sec) |

When all nodes are started, we will set up HaProxy on the client. The point of this is that the application will be able to connect to localhost as mysql server, so although we are using PXC, the application will see this as a single MySQL server running on localhost.

In order to achieve this, we will configure HaProxy on the client node. There are 2 possible configurations here.

First is configuring round robin, which means we will connect and write to all cluster nodes. This can be done, but because of optimistic locking at commit time, rollbacks can happen if we have conflicting writes. In the second configuration, we will configure haproxy in a way that it writes only to one node, so the application doesn’t have to be prepared about unexpected rollbacks. The first configuration is a good choice in most cases, not handling rollbacks is not healthy in a well behaving application anyway.

HaProxy can be configured in the /etc/haproxy/haproxy.cfg and it should look like this.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 |

global log 127.0.0.1 local0 log 127.0.0.1 local1 notice maxconn 4096 chroot /usr/share/haproxy user haproxy group haproxy daemon defaults log global mode http option tcplog option dontlognull retries 3 option redispatch maxconn 2000 contimeout 5000 clitimeout 50000 srvtimeout 50000 frontend pxc-front bind *:3307 mode tcp default_backend pxc-back frontend stats-front bind *:80 mode http default_backend stats-back frontend pxc-onenode-front bind *:3306 mode tcp default_backend pxc-onenode-back backend pxc-back mode tcp balance leastconn option httpchk server c1 10.116.39.76:3306 check port 9200 inter 12000 rise 3 fall 3 server c2 10.195.206.117:3306 check port 9200 inter 12000 rise 3 fall 3 server c3 10.202.23.92:3306 check port 9200 inter 12000 rise 3 fall 3 backend stats-back mode http balance roundrobin stats uri /haproxy/stats stats auth pxcstats:secret backend pxc-onenode-back mode tcp balance leastconn option httpchk server c1 10.116.39.76:3306 check port 9200 inter 12000 rise 3 fall 3 server c2 10.195.206.117:3306 check port 9200 inter 12000 rise 3 fall 3 backup server c3 10.202.23.92:3306 check port 9200 inter 12000 rise 3 fall 3 backup |

In this configuration, we defined 3 frontend-backend pairs. The stats pair is for haproxy’s statistics page, and the others are for PXC. We will have MySQL listening on 3306 and 3307. If we connect to port 3306, we will connect to pxc-onenode, so we will only use one node at a time (to avoid rollbacks bcause of optimistic locking). If that node goes offline, we will start using an other one.

However if we connect to port 3307, we will use all the 3 nodes for reads and writes too. We are using the leastconn load balancing method instead of round robin, which means we always connect to the backend with the least connections established.

The statistics page is accessible on the client node with a browser pointed to /haproxy/stats, the stats auth parameter in the configuration has the credentials for that in plain text. You can also use this for monitoring purposes (the CSV version is good for trending and alerting).

Here MySQL is checked via HTTP checks. MySQL won’t serve these requests. As part of PXC packages, we distribute the clustercheck utility which has to be set up. After that, HaProxy will be able to use check MySQL via HTTP. The clustercheck script is a simple shell script, which accepts HTTP requests, and checks MySQL on incoming request. If the PXC node is ok, it will emit a reposnse with HTTP code 200 OK, otherwise, it emits 503. The script examines wsrep_local_state variable.

To set it up, create the clustercheck user.

|

1 2 3 4 5 |

mysql> grant process on *.* to 'clustercheckuser'@'localhost' identified by 'clustercheckpassword!'; Query OK, 0 rows affected (0.00 sec) mysql> flush privileges; Query OK, 0 rows affected (0.00 sec) |

If you want to use a different username or password, you have to modify them in the script too.

Let’s test.

|

1 2 3 4 5 6 7 8 |

# clustercheck HTTP/1.1 200 OK Content-Type: Content-Type: text/plain Node is running. |

We will use xinetd to daemonize the script. If xinetd is not installed yet, you can install it with yum.

|

1 |

# yum -y install xinetd |

The servie itself should be configured in /etc/xinetd.d/mysqlchk.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

# default: on # description: mysqlchk service mysqlchk { # this is a config for xinetd, place it in /etc/xinetd.d/ disable = no flags = REUSE socket_type = stream port = 9200 wait = no user = nobody server = /usr/bin/clustercheck log_on_failure += USERID only_from = 0.0.0.0/0 # recommended to put the IPs that need # to connect exclusively (security purposes) per_source = UNLIMITED } |

Also, we should add the new service to /etc/services.

|

1 |

mysqlchk 9200/tcp # mysqlchk |

Clustercheck will now listen on port 9200 after xinetd restart, and haproxy is ready to check MySQL via HTTP.

|

1 |

# service xinetd restart |

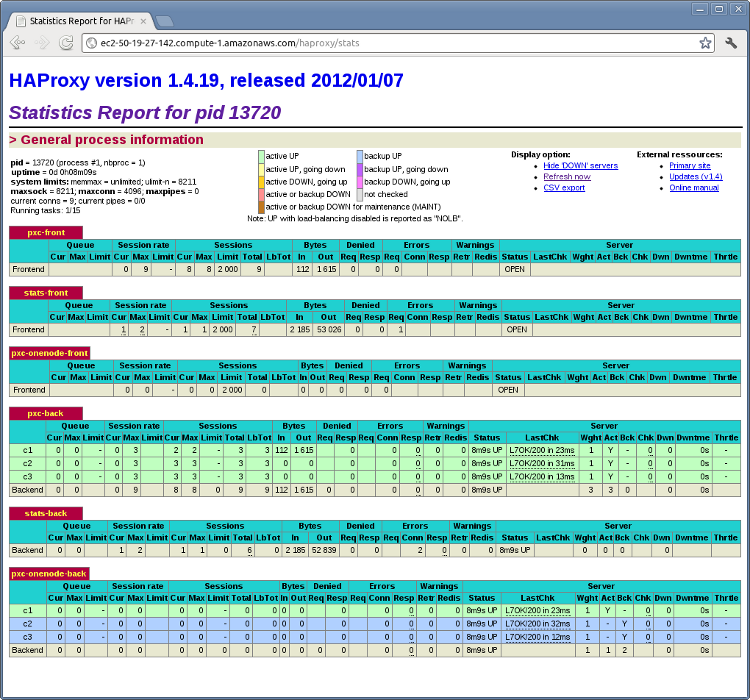

If you did everything right so far, the statictics page of HaProxy should look like this.

Next we will try to use the cluster with sysbench. We will use sysbench from the EPEL repo. First, let’s create a database and a userfor it.

|

1 2 3 4 5 6 7 8 |

mysql> create database sbtest; Query OK, 1 row affected (0.01 sec) mysql> grant all on sbtest.* to 'sbtest'@'%' identified by 'sbpass'; Query OK, 0 rows affected (0.00 sec) mysql> flush privileges; Query OK, 0 rows affected (0.00 sec) |

Populate the table with data for the benchmark.

|

1 |

# sysbench --test=oltp --db-driver=mysql --mysql-engine-trx=yes --mysql-table-engine=innodb --mysql-host=127.0.0.1 --mysql-port=3307 --mysql-user=sbtest --mysql-password=sbpass --oltp-table-size=10000 prepare |

Let’s run the bechmark against the 3307 port.

|

1 |

# sysbench --test=oltp --db-driver=mysql --mysql-engine-trx=yes --mysql-table-engine=innodb --mysql-host=127.0.0.1 --mysql-port=3307 --mysql-user=sbtest --mysql-password=sbpass --oltp-table-size=10000 --num-threads=8 run |

This is the status of pxc-back backend while the sysbench above is running. If you look at Cur column under Session, you can see, that c1 has 2 threads connected, c2 and c3 has 3.

If we run the same benchmark, but against the 3306 backend, haproxy stats will show us that the all the threads are going to hit the c1 server.

|

1 |

# sysbench --test=oltp --db-driver=mysql --mysql-engine-trx=yes --mysql-table-engine=innodb --mysql-host=127.0.0.1 --mysql-port=3306 --mysql-user=sbtest --mysql-password=sbpass --oltp-table-size=10000 --num-threads=8 run |

This is the status of pxc-onenode-back while sysbench above is running. Here only c1 has 8 connected threads, c2 and c3 are acting as backup nodes.

If you are using HaProxy for MySQL you can break the privilege system’s host part, because MySQL will think that the connections are always coming from the load balancer. You can work this around using T-Proxy patches and some iptables magic for the backwards connections. However in the setup described in this post this is not an issue, since each application server has it’s own HaProxy instance, each application server connects to 127.0.0.1, so MySQL will see that connections are coming from the application servers. Just like in the normal case.

Great read, Peter. Thanks.

Running ha-proxy on the app server is a good one as you’re still able to use the security features for connections. And I assume it scales quite well. It also removes a possible spof.

Why do you use ‘FLUSH PRIVILEGES’ after a GRANT?

Daniël, you are right, it’s not necessary to use it here, but doesn’t hurt too.

Running in Ubuntu Server 12.04 i get the message:

[ALERT] 173/171337 (13252) : Starting frontend pxc-onenode-front: cannot bind socket

for

frontend pxc-onenode-front

bind *:3306

mode tcp

default_backend pxc-onenode-back

Panagiotis: most likely port 3306 is used already, choose an other one for HaProxy to listen on.

Hi,

Thanks for this useful document.

Could you please detailed the choice/reason of having a 3306 port to have only one node being used for R/W queries and above all the mention : (to avoid rollbacks bcause of optimistic locking) ?

In a production environment with a reasonable traffic (500 req/sec on each node), would you recommend to use 3307 port to have all requests R/W balanced through all nodes, would it be a problem concerning conflicts (higher risk of queries that can be conflictual from node to node and so more queries ‘rejected’) please ?

Thanks for your answers.

Regards,

Laurent

The clustercheck user creation and the xinetd installation and configuration have to be done in 3 pxc nodes, not in client with haproxy. Is this ok? OR i am wrong?

Thanks for your answers.

Regards,

Panagiotis

Laurent: this is a very good question, would actually worth an other post.

If you lock records in InnoDB with PXC, records are only locked on the local node. Let’s say you open up 2 mysql sessions in HaProxy, and you get to machines 2 and 3, and let’s suppose you have a table called t, with a column called id which is the primary key. You start a new transaction on both sessions and do update t set name=’foo’ where id=1, this won’t block on the second session, because you are on a different database instance. However, at commit time cluster communication happens. One of these transaction has to roll back, because the same record was changed on both nodes. This can be handled on the application level by retrying transaction. This is called optimistic locking, because here we are being optimistic. This means we assume that for most of the cases, we won’t write conflicting data on 2 nodes. A rollback is a much more expensive operation than a commit, so ideally, we want these to be rare. On the other side, pessimistic locking across nodes would involve much more network roundtrips, which would limit performance.

I think this kind of answers your second question. It is not the request/sec which matters here, but the possbility of writing the same data on multiple nodes. If you are suffering from this, the configuration for port 3307 can be a solution for you, in which case you will use PXC only as a HA solution.

Panagiotis: You are right. In fact, you don’t even need clustercheck at all. You just need something which checks you PXC nodes, and emits HTTP codes for HaProxy based on status. It can be written in any language, we just wanted to provide something which does this as part of the software. If you take a look at the script, it only examines wsrep status variables.

Hi Peter,

Thanks for your answer, this helps to clarify the situation for me, so choice of solution A (R/W to a single node) or solution B (R/W to all nodes) would mainly depend of what can be done or not at the application level if I understand well ? If I consider pros and cons of these two solutions :

With Solution A, I can still have the advantage of a High Availability solution without having to deal with rollbacked transaction that needs to be retried at application level because as I can R/W to/from a single node, behaviour will be the same as if I were having only one single standard MySQL server in front ? Cons of this solution is maybe that traffic cannot be load balanced through all nodes so maybe a lower throughput than solution B ? Is it really the case or even measurable ?

With Solution B, I do not have to worry about load balancing queries across the nodes so consequently maybe a higher number of queries and throughput that can be managed ? But for the cons, I almost “have to” modify my applications in front of the cluster so they can manage the case where transaction will be rejected by the cluster because of conflictual queries (probability is higher in this solution I think?)

So maybe solution A would be the best one because it provides less problems at query level, benefits of cluster for High Availability and maybe a good throughput if I’m able to combined it with an API that is able to manage load balancing Reads to 3 nodes so reads ? like this one : http://dev.mysql.com/doc/refman/5.5/en/apis-php-mysqlnd-ms.setup.html#apis-php-mysqlnd-ms.installation

Thks for sharing your experience, skills and opinion on the subject ! 😉

Regards,

Laurent

Hi Laurent,

With solution B, according to our benchmarks you will have a higher throughput. If an application doesn’t handle rollbacks well, it can bite you anyway, even in a single server case.

You can create an other haproxy frontend-backend pair just for reads with solution A, or use any other load balancing solution too. In this case you have to do R/W splitting somehow, either with mysqlnd, or with your custom solution from your application.

Hey

This might sound like a stupid question from a guy trying to read up on ha-proxy, but I can’t see what makes pxc-onenode-back and pxc-back different in how they would handle a rollback depending on if the connection goes to port 3306/3307.

Anyone care to explain it to me? 🙂

Ok, when testing it actually does work this way. I guess onenode in the name is a keyword for this?

Hi Tom,

Onenode is the keyword for doing writes only on one node. When you issue an update command in innodb, record is locked, so concurrent updates won’t happen until you commit. If an other transaction tries to update that very same record, it will be blocked until the former transaction ends. This is not necessarily the case with pxc-back. The behaviour described previously is pessimistic locking, which means that if we “work” on a records, we lock it, because there will be some other transaction which wants to mess with it anyway. InnoDB uses that within the instance. However between the cluster nodes, PXC uses optimistic locking, which means we don’t lock the record, because most likely transactions won’t be conflicting (this would require inter-node communication inside a transaction, not just commit time). So, 2 conflicting update transactions updating the same record issued on pxc-back won’t necessarily block each other (because they are issued on different nodes in reality), so one of them will be rolled back at commit time.

I hope this helps, I will try to write a separate blogpost about this with examples this week.

Hi Peter,

we have implemented your reference architecture on some virtual machines and were wondering if you really need the fourth machine. With HaProxy installed on the three PXC-nodes and UCARP to provide one IP address for HaProxy everything works fine.

Are there any reasons against this kind of setup? Of course, one node would experience some additional load (and it would be always the same node until it fails).

Regards

Jens

Hi Jens,

The fourth node in the example is not a dedicated load balancing node, but rather one application server. You will have many of this in your environment, serving your wordpress/drupal/whatever application. With this setup, on each application server the application can connect to localhost as mysql server.

Technically, nothing is wrong with your setup, although it has a few caveats.

– Using a floating IP address is not always possible (thinking about cloud).

– You will break mysql’s privilege system.

This is because mysql will see that everybody is coming from localhost (from the haproxy to be more precise). If you install haproxy on the application servers, and connect to localhost, mysql will identify that those connections are from the application server and privilege system will work as expected, because nothing is changed here from mysql’s perspective.

– It is more likely that you may hit haproxy performance bottlenecks, like one haproxy process can use only 1 cpu core, spreading your database load balancing layer between your application servers (typically you have many of those anyway), it’s less likely you will run into this problem. Not to mention that people like to use cpus in the database server to run database workload.

What would you say about running a Percona MySQL server on each application server instead of haproxy (if localhost security credentials mentioned above is acceptable and application is chrooted)?

Hi Tom,

If you mean running a PXC node on each application server, that will probably bite you sooner or later, because in that case you are replicating synchronously to a relatively large number of nodes.

If you mean a standalone Percona server, than I think I don’t get what you mean.

I meant an PXC node on each app server, and yes you’re probably right, I suppose it will. Just got a crazy idea 😉

How do I separate read vs write traffic for different algorithms (i.e.RR(read) or Master/failover(write)) in case of typical jdbc web application ?

Thanks for your answer! I did’nt know about the 1-core-bottleneck.

Just played a bit with a four-node-cluster. If one node is shut down and starts again it gets synced automatically. But if the first node fails (the one with wsrep_cluster_address=gcomm://) it obviously can’t connect to the cluster after a restart.

Starting the cluster once, changing the cluster_address and restarting the first node solved the problem but does not seem right. Is there another way to ensure that every node can reconnect to the cluster?

Hi Jens,

See the updated docs on https://www.percona.com/doc/percona-xtradb-cluster/howtos/singlebox.html for a better way to set cluster addresses.

Hi Vanne,

thank you for the link!

Since I really use four machines I tried to adopt the configuration mentioned there and came up with this:

http://paste.jdsv.de/view/raw/66778546

This is for the first node with the IP addresse 10.2.10.128

My error-log shows the following:

http://paste.jdsv.de/view/raw/65552680

Any clues?

Jens,

Can you try adding back the :4567 to all IPs in the wsrep_urls?

It’s the default, i know, but if i remember correctly i made the same “mistake”.

cheers

Thank you, Vanne. Correcting the mistake helped and everything works 🙂

Hi Peter, Thanks for writing such a detailed and articulate document. Very much helpful. i have a few question though on the configurations

on node 1 you had

wsrep_cluster_address=gcomm://

and node2 and node3 you have:

wsrep_cluster_address=gcomm://

Question 1: Dont we need to add the ip addresses of nodes 2 and nodes 3 in the config, if so how? and i

Question 2: If you are doing a in-place upgrade on a server that was working as a master, do we need to ommit some configurations or add anything extra?

Question 3: Here was my test scenario, tell me if i was missing something,

I used the same configurations as mentioned by you

i created a schema on node1 (sbtest) it propagated fine to node2 and node3

then created table on node1, inserted some rows, saw those records on node2 and node3

then i shutdown node1,

created a table on node2, i see the table on node3 too

inserted some records and they are on both the node2 and node3

then i started node1, it started fine, but as the document mentions, that paramert(wsrep_sst_method=xtrabackup) should pickup the changes and just apply them to this node….”that did not happen” and

now node1 is out of sync with node 2 and node3

so no changes are getting propagated from node1 to node2 and 3 and vice-versa

any clues? is it the gcomm://config that is incorrect?

If you use Xtrabackup as SST, the current clustercheck script will see the donor as down (503). If you have 2 nodes only (which is of course not recommended at all) you will have then your database completely unavailable the time of the SST.

You can then modify clustercheck to allow connections to the donor anyway (only if you use a non blocking SST method, xtrabackup):

-if [ “$WSSREP_STATUS” == “4” ]

+if [ “$WSSREP_STATUS” == “4” ] || [ “$WSSREP_STATUS” == “2” ]

Hi mandm,

For node2 and node3 I had wsrep_cluster_address configured to node1’s IP. Basically, this option tells the node where can it find an existing cluster member (this is when it has an IP address), or if it should form a new cluster (so, this is the first node in the cluster, the configuration in this case is wsrep_cluster_address=gcomm://). Since you restarted node1 with gcomm://, it forms a new cluster, so, it won’t pick up the changes from the other nodes. If you want node1 to join the cluster, you should specify wsrep_cluster_address=gcomm://(node2 or node3 ip here), or you can use wsrep_urls in [mysqld_safe] section of the configuration file if you don’t want to change configuration.

‘@Frederic, i have 3 cluster node..

@ Peter thanks for your response, yes so after researching a bit, i have my changed my config on all the 3 nodes as

# This was formally known as [safe_mysqld]. Both versions are currently parsed.

[mysqld_safe]

socket = /var/run/mysqld/mysqld.sock

nice = 0

wsrep_urls = gcomm://10.1.1.1:4567,gcomm://10.2.2.2:4567,gcomm://10.3.3.3:4567,gcomm://

[mysqld]

#

# * Basic Settings

#

server_id=1

binlog_format=ROW

wsrep_provider=/usr/lib64/libgalera_smm.so /

wsrep_slave_threads=2

wsrep_cluster_name=dev_cluster

wsrep_sst_method=rsync

wsrep_node_name=node1 (this variable changes as per different nodes)

innodb_locks_unsafe_for_binlog=1

innodb_autoinc_lock_mode=2

log_slave_updates

wsrep_replicate_myisam=1

now by adding the value in mysqld_safe section the only concern i have on ubuntu 10.04 LTS is that when i reboot the machine and let the rsync process complete….the synced node does not let me make connections…so i have to stop the db as “service mysql stop” and then restart as /etc/init.d/mysql start….and then after resynching and letting the rsync process finish the node start correctly…have you see this behaviour?

and is their a way to modify the on startup of VM to start using /etc/init.d/mysql start..?

Thanks again…i am just taking small steps and trying to understand this cluster setup and your suggestions are valuable..

Hi

I’ve tried this configuration on a 2 node (third is garbd).

I’ve notice that when a 2 node join the cluster with the xtrabackup sst method enable the haproxy stops responding to sql query because the 1 node is busy to be a donor.

Is it a normal behaviour ? this means that this kind of configuration requires at least 3 operational nodes in order to be reliable.

Thx

Hi Davide,

The supplied monitoring script for HaProxy takes the node out if it’s a donor for xtrabackup SST. In some cases this might make sense, since the IO pressure is increased on this node. However, if you want to allow queries on a node, which is a donor for xtrabackup SST, you can just follow Frederic’s advice above and modify clustercheck in the following way.

-if [ “$WSSREP_STATUS” == “4” ]

+if [ “$WSSREP_STATUS” == “4” ] || [ “$WSSREP_STATUS” == “2” ]

Hi mandm,

No, I haven’t seen anything like this. Can you describe it in a little more detail? What version is this? Did you install from packages? What does the error log say and what exactly does does not let me make connections mean? Also, this discussion might be better moved to [email protected] mailing list.

yes i will join the google group and create a topic there…Thanks

Hi Peter

Thx for help .

I’ve tried to apply the same concept of using the script clustercheck to check the mysql health with keepalived and vip.

Basically by assuming 1 node is master and the 2 is slave each keepalived instance on both nodes checks the health of node 1.

When health is not ok the vip is switched on node 2.

Node 3 is always garbd.

Is it a reliable config this kind of vip management ?

Thx.

Hi Peter,

When i use the sys bench tests…

sysbench –test=oltp –db-driver=mysql –mysql-engine-trx=yes –mysql-table-engine=innodb –mysql-host=127.0.0.1 –mysql-port=3306 –mysql-user=sbtest –mysql-password=sbpass –oltp-table-size=10000 –num-threads=8 run

and if i run some other application at the same time accessing a different schema other that subtest…i see that the other application has lot of sessions disconnected…with the error connecting to mysql..

have you seen anything like this?…i am trying hard to debug this issue but no luck so far

does the cluster have a limit to any commits? ..or does it wait on any sessions to complete..?

Hi mandm,

I can confirm what you’re saying because I’m also seeing the same behaviour only by running sysbench on an empty cluster which have no traffic at all ( 3 cluster nodes each with 32GB RAM, Intel(R) Xeon(R) CPU E31270 @ 3.40GHz and 146GB 10k rpm HDD ).

I think this could be a problem for us in our environment because this cannot be explained at all, no errors on node’s log and only these errors displayed by sysbench when doing the bench :

FATAL: error 2003: Can’t connect to MySQL server on ‘172.16.29.238’ (99)

FATAL: failed to connect to database server!

FATAL: unable to connect to MySQL server, aborting…

FATAL: error 2003: Can’t connect to MySQL server on ‘172.16.29.238’ (99)

FATAL: failed to connect to database server!

FATAL: unable to connect to MySQL server, aborting…

FATAL: error 2003: Can’t connect to MySQL server on ‘172.16.29.238’ (99)

FATAL: failed to connect to database server!

Any informations or advises about this behaviour would be very appreciated.

Regards,

Laurent

‘@Laurent, @mandm,

Can you provide more details on this? I tested the same setup described in blog post but with 3 cluster nodes on 3 KVM guests (VDE + dnsmasq) and then running haproxy from the host (with necessary privs).

I tested with two different sysbench on two different tables to test this and I didn’t see any errors or disconnects.

Hi Raghavendra,

Sure, thanks for your interest in this problem, you will find below my informations :

HAProxy configuration :

http://pastebin.com/Gc1hcxBc

Percona XtraDB my.cnf for 1 node, others are almost the same except for ip addresses :

http://pastebin.com/d7U1Je0d

And finally my sysbench command line and outputs :

http://pastebin.com/VS3H8kHy

Don’t hesitate if you need others informations.

Thanks, regards

Laurent

ok so after debugging for a few days i think the issue was with the machine settings..

in the /var/log/messages i saw these messages “nf_conntrack: table full, dropping packet”

and i updated my server config to

$ cat /proc/sys/net/ipv4/netfilter/ip_conntrack_generic_timeout

$ echo 120 > /proc/sys/net/ipv4/netfilter/ip_conntrack_generic_timeout

$ cat /proc/sys/net/ipv4/netfilter/ip_conntrack_tcp_timeout_established

$ echo 54000 > /proc/sys/net/ipv4/netfilter/ip_conntrack_tcp_timeout_established

$ cat /sys/module/nf_conntrack/parameters/hashsize

$ echo 32768 > /sys/module/nf_conntrack/parameters/hashsize

$ echo 131072 > /proc/sys/net/ipv4/netfilter/ip_conntrack_max

$ cat /proc/sys/net/ipv4/netfilter/ip_conntrack_max

131072

and that seems to have resolved the issue…

so to help you debug…please check the values during the load test…do this from both the machines (db and load balancer )

sudo sysctl net.netfilter.nf_conntrack_count net.netfilter.nf_conntrack_max

once you see the number reach a certain limit , you will see the error for connection timeout..

Let me know if that fixes it

Thanks for the post!

Hi!

I’ve got a question about mysql + haproxy. Here, the mysql user is always granted access since it was created using something like this: ‘sbtest’@’%’, am I right?

But how can I grant access to, for example, ‘sbtest’@’10.5.4.20’? In this case, won’t Mysql get the haproxy’s IP instead of the client’s IP (10.5.4.20)?

Thanks!

Hi Matias,

You are right. If you run haproxy on each application server and your application connects to localhost everything remains the same from the privilege system’s perspective, otherwise, you will see connections from haproxy’s IP address. This also means that you lose client level access control granularity in MySQL, but in my opinion, this is not that big of a limitation. You can still implement access control for example on the TCP level on haproxy. In most cases haproxy will run on each application or mysql is on a trusted network, where the haproxy instance responsible for database connection is most likely on the same network as the application servers with the same host part and MySQL has that network in the host part of user account.

Hello, Piter!

Laurens told that there is solution A (using 3306 port) and B (using 3307 port) with their pluses and minuses.

But what if to combine both of this methods, when all write operations goes to 3306 port (to one specific PXC node) and all read operations goes to 3307 port (to all PXC nodes). As I read, Percona Cluster will not give much performance in write operations, but read operations will gain much spead. That scheme will slightly reduces write speed (because it will also execute read operations while reading from 3307 port), but no rollback transactions will be done at all, because write executes only on one node.

In such scheme HAProxy probably should be placed to separate node so the 3306 port points to the same PXE node for all application servers (HA for that node should be also implemented in case of one HAProxy node crash). But as HAProxy a very light, no powerfull servers needed for it.

Does such scheme make sense these scheme?

P.S. Sorry for my english, I’m not a native speaker.

Laurent, Peter, sorry for misspelled your names 🙁

Just completed the setup, and restored a database. Everything looked OK, until I use pt-log-player, and discovered that after 30 seconds, HAProxy drops the session. I have never used HAProxy before so am a little in the dark. I looked at mandm’s suggestion, but that does not help me.

Basically, if I log into the cluster directly from a remote machine wit mysql then the session stays alive, however, if I log in to the 127.0.0.1 host with port 3306 or 3307, the session stays alive for 30 seconds and then haproxy debug says :

00000001:pxc-front.accept(0004)=0008 from [127.0.0.1:59633]

00000001:pxc-back.srvcls[0008:0009]

00000001:pxc-back.clicls[0008:0009]

00000001:pxc-back.closed[0008:0009]

This causes errors with pt-log-player, as sometimes the queries on a perticular thread are more than 30 seconds apart, nd then all other queries on that thread fail.

pt-log-player says :

in proc 5 session 233461088 caused an error: MySQL server has gone away

just after the session has closed. Not all pt-log-player-threads close, but it does get annoying with the error messages clogging up the log files

Can anyone help me with a keepalive solution?

I have the same problem with HAProxy.

A large bulk of queries (reads and writes) causes ‘MySQL server has gone away’, but really mysqld process is alive.

Error log does not contain anything about process crash.

If connection via local socket used everything is OK, so problem with HAProxy.

Debug in the same as Mike has:

00000000:pxc-referencenode-front.accept(0006)=0009 from [10.0.0.70:34658]

00000000:pxc-onenode-back.srvcls[0009:000a]

00000000:pxc-onenode-back.clicls[0009:000a]

00000000:pxc-onenode-back.closed[0009:000a]

My suggestion was to increase memory limit for haproxy process, I have increased it to 1GB (bulk size = 700M) but still have the error. My /etc/default/haproxy (I use Ubuntu):

ENABLED=1

EXTRAOPTS=”-d -V -de -m 1024″

percona-xtradb-cluster version is the latest – 5.5-28

Is here anyone who has already experienced in this and have a solution?

Thanks in advance.

A little late, but nonetheless: If you are running into the ‘MySQL server has gone away’ problem, you should change the “timeout server” and “timeout client” values in the haproxy.cfg file according to your “wait_timeout” value of MySQL (28800 by default).

In my case, I have set the MySQL “wait_timeout” to 3600. So in HAProxy haproxy.cfg, I would add 1 second extra to the “timeout client” and “timeout server” values:

—

frontend pxc-front

timeout client 3601s

… etc

frontend pxc-onenode-front

timeout cilent 3601s

… etc

backend pxc-back

timeout server 3601s

… etc

backend pxc-onenode-back

timeout server 3601s

… etc

—

Again, just make sure it corresponds to your MySQL timeout value.

Also, if you’re running into problems with exhausted sockets, for one set “net.ipv4.tcp_tw_reuse” to 1 in your /etc/sysctl.conf file (don’t forget to apply with “sysctl -p” 😉 ). You can also add to the “pxc-back” and “pxc-onenode-back” a “source” parameter that includes a very wide port range that overrides the automatic kernel selection of a free port (which often is set to about 28K sockets per source), ie:

server c1 10.116.39.76:3306 check port 9200 inter 12000 rise 3 fall 3 source 10.116.39.76:10240-65000

Hello Peter,

Can you please provide an example how would I need to set up the haproxy for separating the traffic via mysql read for roundrobin and the mysql write failover configuration.

It would help me alot.

Thanks

Bulk answer for the last few comments, sorry for being late on that one.

For haproxy and mysql server has gone away: this is usually timeout/maxconn mismatch on either haproxy or mysql side. If you are not using persistent connections, it’s relatively easy to use haproxy for queueing with the appropriate maxconn/queue values so your database won’t meet a workload that’s over the desired degree of parallelism.

Zoltan,

Haproxy won’t split read and write traffic for you. You need to do that from the application. Haproxy is only a tcp level load balancer here. Just it can make sense to write only to 1 node if you have conflicting writes. With PXC, conflicting writes are causing rollbacks, not locks waits like in a single node case. Rollbacks are io intensive, so they are much more expensive. If you don’t have conflicting write transactions (parallel threads updating the same record for example), you can use just the 3307 port backend in the case above.

Hi,

I have implemented the HA proxy and with the XtraDB cluster. I was using your B configuration everything seamed to work just fine but after two days I have find out that certain mysql updates and inserts are not sent to the database by the application via HAproxy. When I have disabled the HAproxy and I have set the application to work directly with one of the Xtradb nodes everything works properly. Would you have information about this how can I fix this behaviour?

Thanks for your help in advance.