Traditionally the most benchmarks are focusing on throughput. We all get used to that, and in fact in our benchmarks, sysbench and tpcc-mysql, the final result is also represents the throughput (transactions per second in sysbench; NewOrder transactions Per Minute in tpcc-mysql). However, like Mark Callaghan mentioned in comments, response time is way more important metric to compare.

I want to pretend that we pioneered (not invented, but started to use widely) a benchmark methodology when we measure not the final throughput, but rather periodic probes (i.e. every 10 sec).

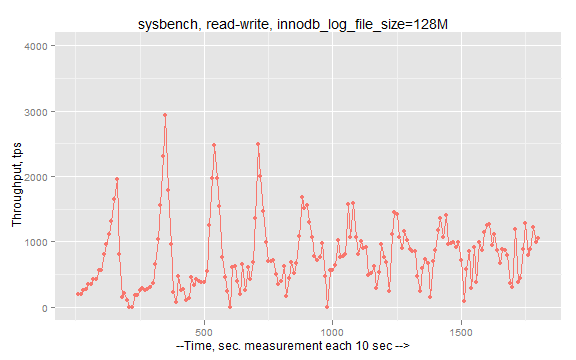

It allows us to draw “stability” graphs, like this one

where we can see not only a final result, but how the system behaves in dynamic.

What’s wrong with existing benchmarks?

Well, all benchmarks are lie, and focusing on throughput does not get any closer to reality.

Benchmarks, like sysbench or tpcc-mysql, start N threads and try to push the database as much as possible, bombarding the system with queries with no pause.

That rarely happens in real life. There are no systems that are pushed to 100% load all time.

So, how we can model it? There are different theories, and the one which describes user’s behavior, is Queueing theory. In short we can assume that users send requests with some arrival rate (which can be different in the different part of day/week/month/year though). And what is important for an end user is response time, that is how long the user has to wait on results. E.g. when you go to your Facebook profile or Wikipedia page, you expect to get response within second or two.

How we should change the benchmark to base on this model ?

There are my working ideas:

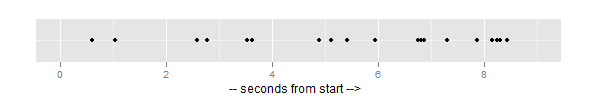

Or if we represent graphically (point means even arrival):

As you see interval is far from being strict 0.5 sec, but 0.5 is the mean of this random generation function. On the graph you see 20 events arrived within 9 seconds.

What does it give to us? It allows to see:

When it is useful?

At this moment I am looking to answer on questions like:

– When we add additional node to a cluster (e.g. XtraDB Cluster), how does it affect the response time ?

– When we put a load to two nodes instead of three nodes, will it help to improve the response time ?

– Do we need to increase number of working threads when we add nodes ?

Beside cluster testing, it will also help to see an affect of having a side on the server. For example, the famous problem with DROP TABLE performance. Does DROP TABLE, running in separate session, affect a response time of queries that handle user load ? The same for mysqldump, how does it affect short user queries ?

In fact I have a prototype based on sysbench. It is there lp:~vadim-tk/sysbench/inj-rate/. It works like a regular sysbench, but you need to specify the additional parameter tx-rate, which defines an expected arrival rate (in transactions per second).

There are some results from my early experiments. Assume we have an usual sysbench setup, and we target an arrival rate as 3000 transactions per second (regular sysbench transactions). We vary working threads from 1 to 128.

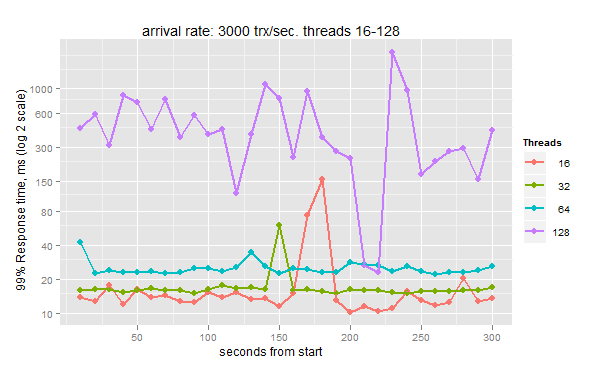

There are results for 16-128 threads (the result is 99% response time, taken every 10 sec. the less is better)

We can see that 16 threads give best 99% response time (15.74ms final), 32 threads: 16.75 ms, 64 threads: 25.14ms.

And with 128 threads we have pretty terrible unstable response times, with 1579.91ms final.

That means that 16-32 threads is probably best number of concurrently working threads (for this kind of workload and this arrival rate).

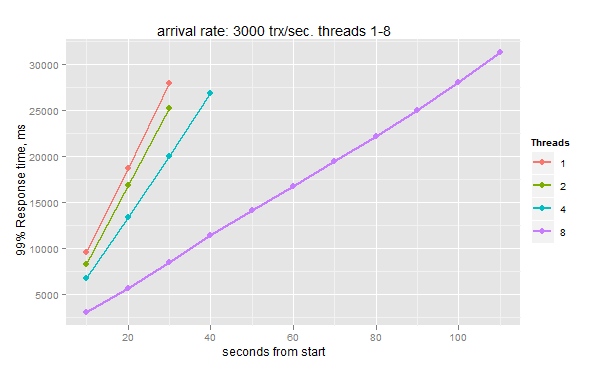

Ok, but what happens if we have not enough working threads? You can see it from following graph (1-8 threads):

The queue piles up, waiting time grows, and the final response time grows linearly up to ~30 sec, where benchmark stops, because the queue is full.

I am looking for your comments, do you find it useful?

Vadim,

This is probably harder to do, but what I’ve always wanted is to have a benchmark tool that finds the system’s maximum usable capacity. It would work like this: you tell it either how many threads to run or the desired throughput, and the maximum allowable 99% (or other percentile) response time, and it varies the arrival rate or the threads. If the 99% response time is less than the threshold, it increases the work on the server until it stops performing acceptably; it decreases when the server is not behaving acceptably. It uses an exponentially decaying weighted moving average to determine how long of a window to do the 99% measurement.

This is kind of complicated, so maybe I can make it easier. I want to know how much throughput I can expect with a 16-thread workload and a 30ms 99th percentile response time over 1 hour, so it varies the arrival rate until the server is just barely responding acceptably, and eventually reports the throughput. The inputs/options in this case are 16 threads, .03 seconds, .99, and 3600 seconds. The variable it adjusts automatically is the arrival rate.

The other example would be “how many threads can the system handle with good behavior,” but that would require you to specify a target throughput or arrival rate, which might be very hard to do, or it might actually be an unknown that you want to determine. So, to answer this question, I would suggest using the approach in my previous paragraph, and running many benchmarks at different numbers of threads until you find the workload where the system behaves the best AND gives the best throughput.

To answer your final question in the blog post, though, “yes, I find this useful.” This is a great step in the right direction. Typical benchmarks are just a square peg in a round hole, and the tools need to support something much more advanced and meaningful.

What a great post. I will take some of these ideas to heart. The same methodology applies for any web-server or web-service.

What I would add to your methodology is to provide latency histograms. That way a single chart can provide all the interesting percentiles at once.

This would also allow to visually detect anomalies like a bimodal distribution.

The 99%ile is, IMHO, too little information. The average, 90%, 95% and 99% numbers together give a much more thorough view on the actual latency experienced by customers.

It is always an interesting question is how to model real world to get useful results yet avoid system being too complicated. I think injection rate is a very good metric for describing real systems yet I think it is underused for benchmarks because it requires guesswork. Set it too low and you will be barely loading system. Set it too high and queue will get full and benchmark essentially fail.

Fixing number of variables is another interesting question. It is one way to do it – if there is not enough load many of these threads will be idle anyway, the other would be to emulate Apache/PHP or even JDBC connection pool behavior where additional threads are created to serve requests as needed up to defined maximum. Probably both setting maximum number of threads or fixed number of threads would make sense.

I would see benchmark to set maximum queue length and maximum amount of threads (or just amount of threads);

As an input we can vary injection rate and run time. When we have maximum queue length reached the transactions are “failed”. Our guidance for “pass” for given injection rate would be certain 99% response time and no (or some very small rate of) failed transactions. Typically you would try benchmark with different injection rates to see what responses you get for transactions and how high injection rate you can pass.

In fact this all gets it similar to SpecJAppServer benchmarks http://www.spec.org/jAppServer2004/

In that benchmark you could actually vary JDBC pool configuration (and hence number of threads on DB server side) to your liking.

Excellent Suite of OLTP/Web Benchmarks for Relational Databases that addresses some cited questions.

http://code.google.com/p/oltpbenchmark/

To answer your final question, I definitely find this useful.

Benchmarks are written to provide the end user with a perspective of how a piece of software will perform in the real world as much as possible, so they’re often coded to suit how they’ll likely be used. Your tpcc-mysql for example doesn’t use JOINs, something we know is expensive in the MySQL world, yet Oracle and to some extent SQL Server handle better. JOIN has long been something MySQL has done badly, people have been ragging on about it for as long as I remember, so you have to wonder why it has taken several years before we’ve seen MySQL look to actually overhaul the optimizer to start to resolve this?

It seems to me the unfortunate consequence of the existence of these benchmarks is that the main stream developers are tuning their engines towards them (which is not what they’re there for.) It becomes a kind of self-fulfilling prophesy, we don’t benchmark joins because they’re slow and we avoid them in the real world, so they don’t do anything to speed them up, so we don’t use them in the real world.. ad infinitum.

We’re not going to get developers to stop using major benchmarks for their tuning, so why not make them dance to our tune instead, become the Puppeteer and not the Puppet, writing and emphasising benchmarks that benchmark performance across the board?

With the increasing emergence of drop-in replacements for MySQL like Percona and MariaDB which are either tweaking or flat out overhauling their query engines in their own ways this presents an ideal opportunity and a chance to more accurately reflect the advantages of each over the other.

Twirrim,

Our tpcc-mysql has JOINs, there are queries like

“SELECT c_discount, c_last, c_credit, w_tax FROM customer, warehouse WHERE w_id = ? AND c_w_id = w_id AND c_d_id = ? AND c_id = ?”

“SELECT o_id, o_entry_d, COALESCE(o_carrier_id,0) FROM orders WHERE o_w_id = ? AND o_d_id = ? AND o_c_id = ? AND o_id = (SELECT MAX(o_id) FROM orders WHERE o_w_id = ? AND o_d_id = ? AND o_c_id = ?)””

But in general I agree that benchmark heavily shifted into single table queries. Though you need to remember this is a TPC-C specification and not our invention.

Having some experience in benchmarks I can say that writing a balanced and well designed benchmark is not easy piece of work. You need to invest significant time (which is money) and this investment has to be justified.

tobi,

Thanks, yes, I was playing with set 90-95-99% response times.

You know at some point you are getting too much data, much more than you can analyze in sane

amount of time, that becomes full-time job. So for now I decided to keep it simplier.

Peter,

I think many of what you are saying already implemented.

I have a queue size ( it is hardcoded 100000 events for now), reaching the end of queue will stop a benchmark.

A varying arrival rate is not implemented in sysbench by itself, but it can be easily done in calling scripts.

I reckon its pretty cool – I will have a look at it! 🙂

I think you’re spot on with the use cases – I know I only use sysbench to evaluate hardware to gage what I reckon we can see in terms of benefit but to be honest, its all a bit smoke and mirrors.

You dont know your 9x percentile response time performance until you use it against your work load with your dataset with your queries; thats what I dont like.

Additionally agile development is really kicking along so I think the value proposition is more around knowing the cost benefit immediately in terms of those incremental schema changes or query changes instead of a tool to gage scaling limitations of server x. (Kinda a once off exercise vs something that should be done with iterative code releases)

I work for realestate.com.au; we have a few key databases but our dataset is 150G for one, 500G for another (for databases that are our lively hood). So we’re not at a scale of Facebook or Twitter, and given we’re pretty cashed up its really easy for us to scale up and not out – in fact thats our solution – and interms of development costs to rearchitect vs cost-making initiatives; its the right way. (Shading is frickin hard and can burn you if its done wrong).

So we have the ability to do things on the application side of things (eg have some users see version X, others version y), but we dont have the scale to be able to do that on the database side of things. So I cannot certain whether the schema change will cause additional IO that will really blow out the QRT which is what I dont like.

So I still think the gap is around being able to gage this type of information for people that are not at the scale of Facebook and/or have a sharded datastore. A possible solution is to use mysql proxy and an alterate server with a snapshotted dataset and compare apples to apples there. I did dick around with proxy a long time ago and recall I ran into some problems under high concurrency.

I guess what are your guys thoughts on agile software development and building confidence on code and database changes?

Kind regards,

Trent Hornibrook

@mysqldbahelp

It is quite common to use this approach when load-testing webservers. It just makes sens to apply it to databases too.

. single-file serving is tested by setting a number of concurrent requests and letting the test run for eg. 5 minutes. Concurrent requests are then increased and the test run again. This can be done twice, first on a very small static file, and is taken as baseline “best case scenario”, then on a dynamic web page – usually the homepage (it’s the one that gets most traffic) or one representative of typical web page complexity

. immediately afterwards, “scenario testing” kicks in: a random delay is set up between successive requests (should be exponential, but not all tools actually get it right), and a navigation pattern chosen to represent a typical user session

If we are to translate this to databases, we could replace “static file” with a “simple select”, and “dynamic page” with a query-with-joins.

Needless to say, there are many variables to take into account (size of data set, usage of persistent connection) – and there are even more in web benchmarking: how long does a user wait between switching web page, is the browser using keepalive, is it ok with compressed content, etc…

This is way every real-life load test is in fact a project by itself – and why a lot of trust is put in the human driving the tests: by tweaking the settings used, he could get completely different results. The downside is that no comparison of two tests is possible…

Hi Vadim

I find this very useful and also agree with Tobi that a latency distribution will be very useful.

Many times applications need to meet response time SLAs for 95 or 99 percentile of requests, so knowing a distribution will be useful.

Vadim,

This is indeed very useful.

We (at the Sizing Servers Lab, a university lab) have implemented this in our stresstesting vApus Client. I have described vApus here: http://www.anandtech.com/show/3846/quad-xeon-7500-the-best-virtualized-datacenter-building-block-/4. We went a step further

1) By turning logs into benchmarks so you use real user input (real queries) and not “made up” queries

2) with a master, slave system so we could also benchmark virtualized servers with different workloads.

We have made the same observations as you, but from a different angle. In fact the servers that offers the best throughput are not the ones you should buy. See here: http://www.anandtech.com/show/5279/the-opteron-6276-a-closer-look/3. We have applied it to MS SQL Server, not to MySQL yet, but it is the same idea and only a matter of time before we do.

Johan De Gelas

Hi,

Interesting approach. On my end i have always tried to bench mark this scenario but every time i am getting different results.

Using memcache with mysql, in reality alot of requests goes to memcache and few writes and reads to database itself, which is all great. But how about bench marking this scenario with accuracy, instead of benchmarking database and mem separately.

Alam

I’ve used Poisson load generators for blackbox perf testing of how the system responds to requests produced by independent irregular sources. The gist of a load generator is that it must generate the load with a given intensity regardless of the system response rate (compare to throughput measuring benchmarks: they don’t issue another request until they get a reply from the system, so their intensity is throttled by the system response rate). This is similar to what you describe in this post (though it looks like you still throttle the intensity on the client by spinning only N threads; my load generators would just keep generating requests with a given intensity spinning up as many threads as needed).

For whitebox perf testing, I usually use two simple benchmarks and then apply a simplified model that works pretty well. The two measurements are:

1. One-request latency benchmark. Run one request of interest from one thread (usually in a loop to get an average of multiple executions) to get the time it takes to execute one request.

2. The max system throughput benchmark. Run as many parallel requests as needed to make the system throughput (TPS in the case of a database) not increase any more.

As you can see, these can be done by the traditional benchmarks 🙂 and they immediately get the properties of the system (compare to Poisson load generators: you need to have an idea of the intensity to get useful results).

Then I use the following simplified model: the system has one shared processing component with processing time P, plus the system has request delivery latency L (i.e. the time it takes for a request to reach the processing component – it’s convenient to think about it as networking latency).

The one-request latency benchmark gives you the single request response time: T = P + L. If it’s too high – you’ve got a problem that you’d generally want to address before getting to the throughput tests :-).

The max system throughput test gives the shared component processing time P – it’s the reciprocal of the max system throughput (1/TPS in the case of a database).

The response time of the system under load is R = P + L + Q, where is Q is the average time a request is waiting to be processed by the shared processing component. A mistake that I’ve seen people to make when reasoning about response time is they make an assumption that Q is going to be close to zero until the shared processing component is fully utilized, and that’s not true with Poisson distribution!

According to the queuing theory, Q = P * U / ( 1 – U ) where U is the system utilization, i.e. the request intensity divided by the system’s maximum throughput. So when the system is loaded at 66%, Q = 2 * P, at 75% utilization Q = 3 * P, at 83% utilization Q = 5 * P, at 90% utilization Q = 10 * P, at 99% utilization Q = 100 * P, etc.

This model obviously only helps with reasoning about the system response time given the system max throughput. It doesn’t help to reason about what workload mix is going to better represent the user’s workload – both the max throughput and response time are going to change together when the workload mix changes.

Artem