When I mention Galera replication, as in my previous post on this topic, the most common question is how it affects performance.

Of course you may expect some performance overhead, since with Galera replication we add a network roundtrip and a certification process. How big is it? In this post I present some data from my benchmarks.

For the tests I use tpcc-mysql with a data size of 600W (600 warehouses, ~60GB) and a 52GB buffer pool. The workload runs under 48 user connections.

Hardware:

Software: Percona-Server-5.5.15 regular and with Galera replication.

During the tests I measure throughput every 10 seconds, which lets me observe the stability of the results. As the final result I take the median (the value that divides the top 50% of measurements from the bottom 50%).
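As a minimal sketch of that aggregation step, the snippet below takes the median of per-interval throughput samples. The sample values are invented for illustration and are not measurements from this benchmark; the function name `final_result` is also just a placeholder.

```python
from statistics import mean, median

# Hypothetical throughput samples, one per 10-second interval.
# These numbers are made up for illustration only.
samples = [100, 104, 98, 35, 102, 101, 99]

def final_result(measurements):
    """Return the median of the per-interval throughput samples."""
    return median(measurements)

# The single stalled interval (35) pulls the mean down noticeably,
# while the median stays at a typical value.
print("median:", final_result(samples))
print("mean:  ", round(mean(samples), 1))
```

The median is a reasonable choice here because a few stalled intervals, which are common in flushing-heavy workloads, do not distort it the way they distort an average.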

In the text I use the following code names:

And now on to the results.

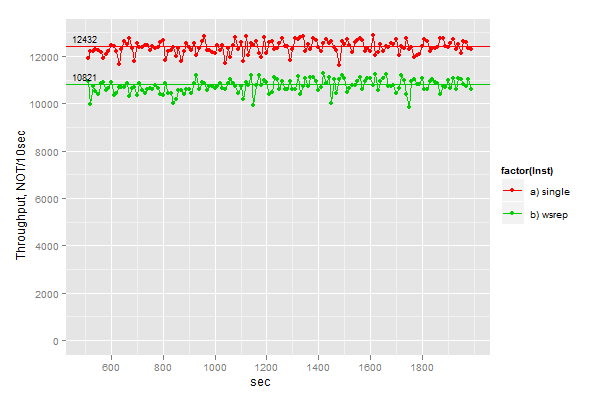

1. Compare the baseline to the same node but with wsrep enabled.

That is, we have 12432 vs 10821 NOT/10sec (New Order Transactions per 10 seconds).

I think the main overhead may come from writing write sets to disk. Galera 1.0 stores events on disk.

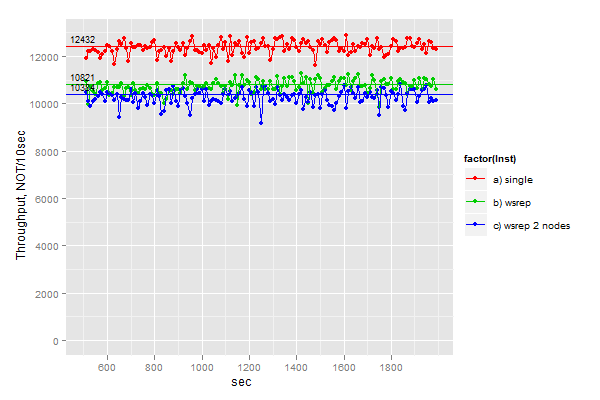

2. We add a second node connected to the first.

The result drops a bit further, as network communication adds its overhead.

We have 10384 for 2 nodes vs 10821 for 1 node.

The drop is only about 4%.
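The overhead figures so far can be checked with a few lines of arithmetic. This is only a sketch: the helper name `relative_drop` is my own, and it measures the drop relative to the faster (earlier) configuration.

```python
def relative_drop(before, after):
    """Percentage by which `after` is lower than `before`."""
    return (before - after) / before * 100.0

# Median throughput in NOT/10sec, as quoted in the text.
baseline = 12432      # single node, no wsrep
wsrep_1node = 10821   # same node with wsrep enabled
wsrep_2nodes = 10384  # two Galera nodes

print(f"wsrep vs baseline: {relative_drop(baseline, wsrep_1node):.1f}%")
print(f"2 nodes vs 1 node: {relative_drop(wsrep_1node, wsrep_2nodes):.1f}%")
```

Dividing by the smaller figure instead gives about 4.2% for the second comparison, so the choice of reference point shifts the number slightly but not the conclusion.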

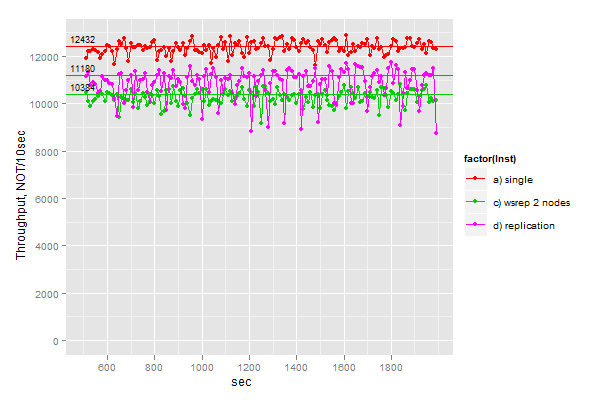

3. It is unfair to compare a two-node system to a single node, so let’s see how regular MySQL replication performs there.

So we see that with regular replication we get a better result:

11180 for MySQL replication vs 10384 for 2 Galera nodes.

However, there are two things to consider:

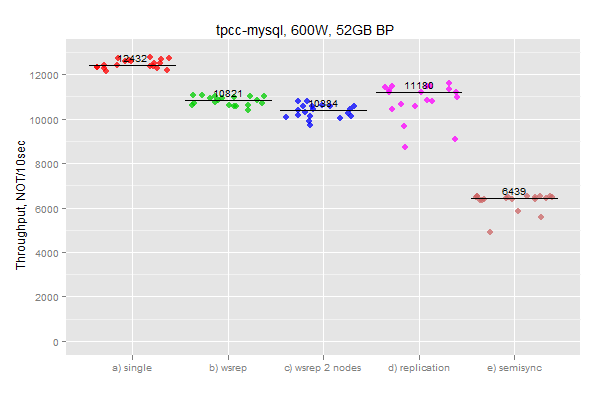

4. Now it is interesting to see how Semi-Sync replication does under this workload.

For Semi-Sync replication we have 6439 NOT/10sec, which is significantly slower than in any other run.

And even with that, the slave was still 300 sec behind the master after 1800 sec of the run.

And here are all the results gathered together:
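To put the lag in perspective, here is a rough back-of-the-envelope sketch. It assumes the lag is expressed in seconds of master activity, that the slave started the run fully caught up, and that it fell behind at a steady rate; none of these assumptions is confirmed by the benchmark itself, and the function name is hypothetical.

```python
def slave_apply_ratio(run_seconds, lag_seconds):
    """Fraction of the master's event stream the slave applied during the run.

    Assumes zero lag at the start and lag measured in seconds of master time.
    """
    return (run_seconds - lag_seconds) / run_seconds

ratio = slave_apply_ratio(run_seconds=1800, lag_seconds=300)
print(f"slave kept up with about {ratio:.0%} of the master's write rate")
```

Under those assumptions the slave applied roughly 1500 seconds' worth of master events in 1800 seconds, so the lag would keep growing for as long as the load continues.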

I personally consider the results with Galera very good, taking into account that the second node does not fall behind and stays consistent with the first node.

For further work it will be interesting to see what overhead we get in a 3-node setup, and also what total throughput we can get if we put load on ALL nodes rather than on a single one.

Scripts and raw results on Benchmarks Launchpad

Vadim,

Very interesting results indeed – I’ll admit that I am surprised, and it looks as if things are moving in the right direction with MySQL and Galera.

Curiously, has much been said about bringing Galera in to official builds from Percona and maintaining them in the future? We’ve got a project beginning soon, and I’d love to have the opportunity to look at rolling out Galera for it, but don’t want to give up Percona builds of MySQL, or be stuck on 5.5.15.

Hi Vadim. Nice to see you do a TPC-C-like test, so I can compare with my sysbench runs http://openlife.cc/blogs/2011/august/galera-disk-bound-workload-revisited and Codership’s own that also tend to be sysbench: http://www.codership.com/blog/

You chose an interesting workload: Data set is a little larger than buffer pool, but not much. In my tests, when data set fits in memory, I see very small overhead from Galera, even less than here. When the workload was heavily disk bound, I do see more than 50% overhead from using Galera, such as here: http://openlife.cc/blogs/2011/august/galera-disk-bound-workload-revisited My observations suggest Galera somehow triggers bad behavior in InnoDB log purging, same problem as you recently reported on independently of Galera: http://www.mysqlperformanceblog.com/2011/09/18/disaster-mysql-5-5-flushing/

It would be interesting to see if you test with a more disk bound load and you can reproduce the same behavior, and in that case hear what your opinion is of the reasons.

Chris,

We are still in the evaluation phase, and we actually invite you to try our builds and give us feedback.

So far the results look quite promising, but we still want to test more.

If there are no serious problems, we will provide regular binaries in the future.

Henrik,

The problem I have is that my hardware base is very non-uniform. I do not have identical boxes, which is why running an IO-bound workload will be tricky.

As for the problem you mention, it is actually a bit different. A flushing problem like http://www.mysqlperformanceblog.com/2011/09/18/disaster-mysql-5-5-flushing/ appears when you have a very big buffer pool (100GB+) and the data (also 100GB+) fits into memory; then InnoDB has problems flushing fast-changing data.

Vadim,

1) For single, wsrep, wsrep 2 nodes did you have binlog enabled or disabled?

2) For replication & semisync which settings did you use for sync_binlog, innodb_support_xa?

3) What values of innodb_flush_log_at_trx_commit did you use?

4) You mentioned “main overhead may coming from writing write sets to disk.” Does Galera fsync the write set to disk after every single transaction? Or does it use some sort of “group commit” for writing to write sets to disk?

5) I’m actually surprised that Galera is slower than regular replication. When binlog & sync_binlog are enabled, group commit is broken and performance can drop by over 90%. Since Galera doesn’t need binlog I expected it to be much faster than regular replication. Any idea why that’s not the case in this benchmark?

Thanks

Andy,

I do have innodb_flush_log_at_trx_commit=2 and sync_binlog=0. Yes I know it is not crash safe.

With wsrep I do not write binary logs.

I am not yet sure how Galera writes its cached data to disk. I do not think fsync is needed, as the information on disk is used rather for other nodes joining the cluster.

Please note that you will see the effect of broken group commit mostly on slow disks or on RAID with a write-through cache mode. On RAID with BBU and write-back, or with Fusion-io cards as in this case, the problem with group commit is not significant.

Vadim,

Thanks.

In that case I think you’re comparing things that are not entirely equivalent.

As you mentioned, your setup for replication is not crash safe. On the other hand your setup for Galera is crash safe.

Maybe you could also test replication with innodb_flush_log_at_trx_commit=1, sync_binlog=1, innodb_support_xa=1? That would be a more apple-to-apple comparison with Galera.

Andy,

I agree that the Galera vs MySQL replication comparison is not ideal, but the more I think about it, the more I see that a fair comparison is not really possible.

Galera and MySQL replication provide different characteristics as HA solutions.

Looking at the famous CAP theorem: Galera gives you Consistency and Availability, while MySQL replication gives you Availability and Partition Tolerance.

That is why it will be very hard to compare these systems apples-to-apples.

So I would rather focus on the question of what overhead we can expect from Galera by itself.

Vadim,

That’s a good point.

Vadim,

You’re correct, there is no flushing involved in writeset caching. The cache is not supposed to be durable. Although we haven’t done any formal benchmarking yet, in day-to-day testing caching didn’t show any noticeable performance degradation. On the contrary, 1.0 seems to perform a tad faster than 0.8.

Andy,

With all other things equal it is understandable that Galera overhead is higher than that of stock MySQL replication: not only does it have to generate and replicate the same binlog events, it also has to collect and manage row keys. Plus it adds latency to transaction processing, which may result in longer lock waits. Yet I do believe that we have some room for improvement there.

Vadim,

It is not entirely clear what happens in the “wsrep 2 nodes” case. Are you running the benchmark on a single node and using Galera just as replication, or do you use it as a “cluster” and run the benchmark on both nodes at once?

The results look pretty good indeed, as in more “classical” cases, where there is a lot more IO going on, the overhead is likely to be less.

Great work Galera project!

I found similar, impressive results in my testing as well. I never saw the comparison to semisync though, which is shocking considering the similarities (sort of). Any thoughts as to why semisync is so much slower?

Cheers,

Tim

Vadim,

thank you for your investigation. I’m new to Galera, however I was able to set up a 3-node cluster (using MariaDB or Percona). My problem is that when I go from a single node to Galera (for example a single node with wsrep* in my.cnf), performance decreases about 10-15 fold (the node without wsrep is 1000-1500% faster).

What may be wrong with my Galera setup?

thank you,

Sergey

I use MariaDB Galera Cluster and find the performance is very poor, about 10 times slower.