(Cross-posted from SSD Performance Blog)

Getting meaningful performance results on SSD storage is not an easy task. Let’s see why.

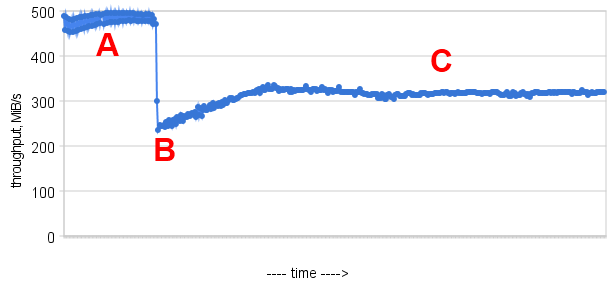

This is a graph from a sysbench fileio random write benchmark with 4 threads. The results were taken on a PCI-E SSD card (I do not want to name the vendor here, as the problem is the same for any card).

The benchmark starts on a newly formatted card. For some period (fresh period A) you see a line with a high result, which at some point drops (point B), and after a recovery period there is a steady state (state C).

What happens there? As you may know, an SSD has garbage collector activity, and point B is the time when the garbage collector starts its work. You can read more on this topic on the Write amplification wiki page.

So, as you understand, it is important to know what state the card was in when the benchmark was running. Apparently, many manufacturers like to put the result from fresh period A in the device specification, while I think steady state C is more important for end users. So in my further results I will point out what state the card was in during the benchmark.

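However, this makes the task of running a benchmark on an SSD trickier. It is similar to benchmarking a database, but upside down. The database just after start is in a “cold state”, and you need to make sure you have enough warmup and only take results in the hot state, when all internal buffers are filled and populated. With an SSD it is the other way around: the fresh card gives the best numbers, and they drop once it has been written to for a while.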

Well, you may say: just put the card into steady state C and run the benchmark. But that is only part of the problem.

The next issue comes from the TRIM command. TRIM is the command sent to the device when a file is deleted, and it allows the SSD controller to mark all space related to the file as free and reuse it immediately. Not all devices support the TRIM command; for example, the first generation of Intel SSD cards did not support it, while G2 does.
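Since TRIM support varies by device, it can help to check it before interpreting a run. Below is a minimal Python sketch, assuming a Linux system whose kernel exposes the `discard_max_bytes` attribute under `/sys/block/<device>/queue/` (a value of 0 means the block layer will not issue discards to that device). The function name `supports_discard` and the device name `sda` are placeholders for illustration, and a non-zero value only says the kernel can send discards, not that your filesystem is configured to do so.

```python
from pathlib import Path


def supports_discard(device: str, sysfs_root: str = "/sys/block") -> bool:
    """Return True if the kernel reports a non-zero discard limit for `device`.

    `device` is a block device name such as "sda" (an example, not a
    recommendation). `sysfs_root` is a parameter only to make testing easier.
    """
    attr = Path(sysfs_root) / device / "queue" / "discard_max_bytes"
    try:
        return int(attr.read_text().strip()) > 0
    except (OSError, ValueError):
        # Attribute missing or unreadable: treat as "no discard support".
        return False


# Example call; prints False if the device or attribute does not exist.
print(supports_discard("sda"))
```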

So why is TRIM a problem for the benchmark? Basically, if you delete all files, it returns the card to fresh state A. Many benchmark scenarios (and my initial sysbench fileio scripts) are supposed to create files at the start of the benchmark and remove them afterward. A similar issue arises when you restore a database from backup, run the benchmark, and remove the files. Then it may happen that during your run you cover all states A->B->C, and the final result is pretty much useless. So, in conclusion, if you want to see the result from the steady state, you should make sure your benchmark actually reaches it.
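One way to check that a run actually reached the steady state is to look at per-interval throughput rather than a single average. The Python sketch below is only an illustration under assumptions: the function name `steady_state_start`, the window size, and the 10% tolerance are arbitrary choices, and the sample numbers are made up. It takes the level at the end of the run as the reference and walks backwards while samples stay close to it, so it only makes sense if the run was long enough to get past point B.

```python
from statistics import mean


def steady_state_start(samples, window=5, tolerance=0.10):
    """Return the index from which throughput stays near its final level.

    The reference level is the mean of the last `window` samples. Walking
    backwards from the end, the steady region is extended while each sample
    is within `tolerance` (a fraction) of that level. Returns None if there
    are too few samples or the tail itself is not flat.
    """
    if len(samples) < window:
        return None
    level = mean(samples[-window:])
    if level <= 0:
        return None

    def near(x):
        return abs(x - level) <= tolerance * level

    if not all(near(x) for x in samples[-window:]):
        return None
    start = len(samples) - window
    while start > 0 and near(samples[start - 1]):
        start -= 1
    return start


# Made-up per-interval throughput (MB/s): fresh period, drop, recovery, steady.
run = [500, 505, 498, 502, 120, 90, 150, 200, 240, 250, 248, 252, 251, 249]
start = steady_state_start(run)
print("steady from sample", start, "mean", mean(run[start:]))
```

Only the samples from the returned index onward would then go into the reported result; the fresh period and the recovery period are discarded.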

Speaking of benchmark results, there is another trick from vendors that I want to bring to your attention. Quite often you can see something like this in a specification from an imaginary Vendor X:

The good thing there is that the vendor lists both maximal write (most likely from state A) and Sustained Write (I guess from state C).

However, if you multiply 4KB * 70,000 IOPS, you will get 280,000KB/s, or about 274MB/s, which is quite far from the declared 520MB/s.

What is the trick here? The maximal throughput in MB/sec is what you get with a big block size, say 64K or 128K, while the maximal throughput in IOPS is what you get with a small block size, 4K in this case.
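The relation between the two numbers is just throughput = IOPS × block size. A small Python sketch of that arithmetic follows; the helper names are made up for illustration, and it assumes 1 MB = 1024 KB, which gives roughly 273MB/s for the 4KB case, close to the figure quoted above.

```python
def throughput_mb_per_s(iops: float, block_kb: float) -> float:
    """Throughput in MB/s for a given IOPS and block size (1 MB = 1024 KB)."""
    return iops * block_kb / 1024


def iops_for_throughput(mb_per_s: float, block_kb: float) -> float:
    """IOPS needed to reach `mb_per_s` at the given block size."""
    return mb_per_s * 1024 / block_kb


# 4KB random writes at 70,000 IOPS, expressed in MB/s.
print(throughput_mb_per_s(70_000, 4))

# IOPS that would be behind 520MB/s if it was measured with 64KB blocks.
print(iops_for_throughput(520, 64))
```

Read the other way around, a headline MB/s figure measured with 64KB blocks corresponds to far fewer operations per second than the 4KB IOPS figure printed next to it.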

So when you read “Write: Up to 480 MB/s, Random Write 4KB : 70,000 IOPS”, you should know that 480MB/s was achieved with a big block size, and for a 4KB block size you should expect only 274MB/s (and most likely in fresh state A).

As the SSD market evolves, we will see more and more benchmark results, so be ready to read them carefully.


Wouldn’t mounting the device via the loopback device hide the fact that it’s an SSD from the OS, making the TRIM command not work? Then you’re one step closer to better results, at the expense of some more CPU overhead.

Patrick,

I would be careful with approaches like this. You do not really know what other OS and filesystem support for SSD devices may be disabled… Plus, with reads/writes sometimes at a 1GB/sec rate, the overhead on the CPU/memory bus from extra copying may not be trivial.

I think it is practical to run benchmarks as close to production setups as possible for them to have the highest relevance.

Patrick,

Be careful with the loopback device. Our testing shows that it lies about fsync, so a process that calls fsync on a loopback device file may think the data is persistent, but that is usually not the case.

Great write-up, here’s a video I did on my SSD: http://www.youtube.com/watch?v=gZJ-pPeE5lA