On my previous post, Dimitri left a comment suggesting that I test the db_STRESS benchmark on XtraDB. I tested it and tuned XtraDB for the benchmark, and I will show you the tunings here. The same procedure should also apply to heavy write workloads in general.

First, <tuning peak performance>. Next, <tuning purge operation>, to stabilize performance and keep it from degrading over time.

<test condition>

Server:

PowerEdge R900, Four Quad Core E7320 Xeon, 2.13GHz, 32GB Memory, 16X2GB, 667MHz

db_STRESS:

32 sessions, RW=1, dbsize = 1000000, no thinktime

XtraDB: (mysql-5.1.39 + XtraDB-1.0.4-current)

innodb_io_capacity = 4000

innodb_support_xa = false

innodb_file_per_table = true

innodb_buffer_pool_size = 16G

innodb_read_io_threads = 8

innodb_write_io_threads = 8

innodb_flush_log_at_trx_commit = 2

innodb_log_buffer_size = 128M

innodb_log_file_size = 512M

innodb_log_files_in_group = 2

innodb_max_dirty_pages_pct = 90

innodb_flush_method = O_DIRECT

(the following are XtraDB-specific general settings)

innodb_ibuf_active_contract = 1

innodb_adaptive_flushing = false

innodb_adaptive_checkpoint = estimate

<tuning peak performance>

First, I tune the peak performance so that CPU and IO resources are used more effectively. Avoiding mutex/lock contention is a good way to put more of the many CPUs to work.

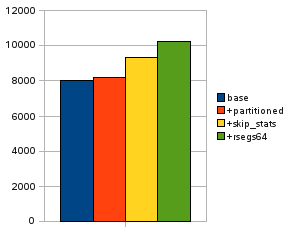

This graph shows the peak performance in tps of db_STRESS.

With the current settings, the performance is “base” in the graph. We can roughly check the mutex/lock contention in the SEMAPHORES section of the SHOW INNODB STATUS output.

“xx-lock on RW-latch at 0x7f2ff40a3dc0 created in file dict/dict0dict.c line 1627”

Looking at the source file, this is index->lock (probably for the HISTORY table). It is the lock for each index tree. We may be able to spread out the contention on this lock by using MySQL partitioning, so I added the following clause to the HISTORY table definition.

“PARTITION BY HASH(REF_OBJECT) PARTITIONS 16”

Now the performance is “+partitioned” in the graph. Looking at the SEMAPHORES section again,

“has waited at handler/ha_innodb.cc line 7275 for 0.0000 seconds the semaphore:

X-lock on RW-latch at 0xd30320 created in file dict/dict0dict.c line 623”

is probably the line that appears most often (it is dict_operation_lock). It may be partition-specific lock contention. The current XtraDB has a variable to tune this contention.

innodb_stats_update_need_lock = 0 (default 1)

It skips the statistics update that needs the lock (this only affects the “Data_free:” value of SHOW TABLE STATUS). The performance is now “+skip_stats” in the graph. Then, the next contention in the SEMAPHORES section is…

“Mutex at 0x1b3e3e78 created file trx/trx0rseg.c line 167”

stands out (it is rseg->mutex). There is one such mutex per rollback segment, so we can reduce the contention by increasing the number of rollback segments. XtraDB can increase the number of rsegs.

innodb_extra_rsegments = 64 (takes effect when InnoDB is initialized)

I recreated the database files with this parameter, and the performance became “+rsegs64”. Finally, the next contention seems to be “Mutex at 0x28ce8e0 created file srv/srv0srv.c line 982”. This is kernel_mutex, and we don’t currently have a proper solution for it. These settings seem to be enough for now.
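The contention checks in this section were done by reading the SEMAPHORES output by eye. As a rough sketch of the same idea, the snippet below counts how often each “created in file … line …” site appears in a chunk of status text. The function name, the regular expression, and the sample text are my own assumptions for illustration; the sample is assembled only from the lines quoted above, and a real SEMAPHORES section contains much more.

```python
import re
from collections import Counter

# Matches both "created in file X line N" (RW-latches) and
# "created file X line N" (mutexes), the two forms quoted in this post.
CREATED_AT = re.compile(r"created (?:in )?file (\S+) line (\d+)")


def tally_wait_sites(status_text):
    """Count how often each latch/mutex creation site appears in the text."""
    counts = Counter()
    for match in CREATED_AT.finditer(status_text):
        counts[(match.group(1), int(match.group(2)))] += 1
    return counts


# Hypothetical excerpt built from the lines quoted in this post.
sample = """\
X-lock on RW-latch at 0xd30320 created in file dict/dict0dict.c line 623
Mutex at 0x1b3e3e78 created file trx/trx0rseg.c line 167
X-lock on RW-latch at 0xd30320 created in file dict/dict0dict.c line 623
"""

# Print the most frequently seen sites first.
for (path, line), n in tally_wait_sites(sample).most_common():
    print(f"{n:3d}  {path}:{line}")
```

The site at the top of the list is the first candidate to look up in the source, as was done for index->lock, dict_operation_lock and rseg->mutex above.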

<tuning purge operation>

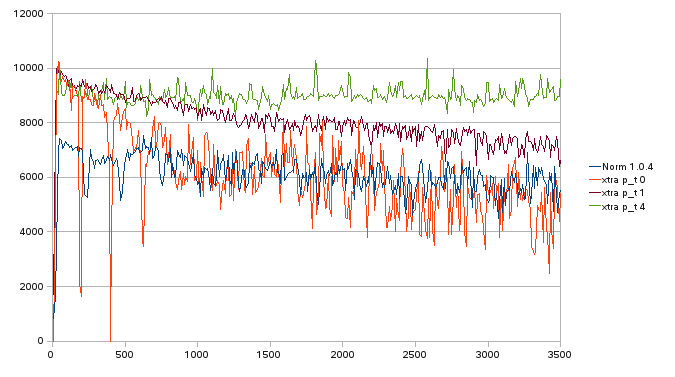

Next, let’s look at the results over a longer time span.

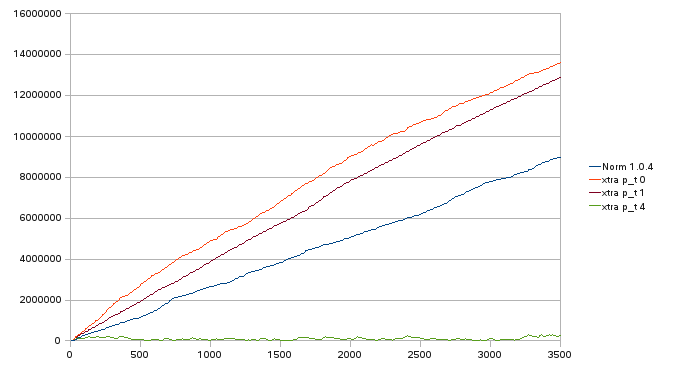

The next problem is that the “History list length” grows to a huge size. This value is the number of entries in the rollback segments. The entries are used for consistent reads by older transactions, and they can be removed once no transaction refers to them. This removal is called “purge” in InnoDB. Purge has to keep up with the workload, because a huge history list hurts performance.

Basically, purging is done by the master_thread (the general background thread of InnoDB). A huge history list makes the purge operation slow, and it interferes with the other tasks of the master_thread (e.g. flushing dirty blocks, handling the insert buffer, etc.). Dimitri implemented a purge_thread dedicated to purging, and XtraDB has a similar purge_thread. Although it seems to stabilize the throughput, it is still not enough for heavy update workloads: a single purge_thread on one CPU cannot keep up with updates from user threads on all of the other CPUs.

Starting with the next release, XtraDB can increase the number of purge threads.
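To see whether purge is keeping up, the “History list length” value can be sampled over time. The following is a minimal sketch, assuming the value is printed as a line of the form “History list length N” in the status output; the helper names and the numbers in the usage lines are hypothetical and are not measurements from this benchmark.

```python
import re

# Assumed line format: "History list length 123456"
HISTORY_RE = re.compile(r"History list length\s+(\d+)")


def history_list_length(status_text):
    """Return the history list length found in the text, or None if absent."""
    match = HISTORY_RE.search(status_text)
    return int(match.group(1)) if match else None


def growth_per_second(samples):
    """Average growth between the first and last (seconds, length) samples."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    (t0, h0), (t1, h1) = samples[0], samples[-1]
    return (h1 - h0) / (t1 - t0)


# Hypothetical usage: two samples taken 10 seconds apart.
first = history_list_length("History list length 1000\n")
second = history_list_length("History list length 6000\n")
print(growth_per_second([(0, first), (10, second)]))
```

A growth rate that stays positive for a long time means entries are being created faster than they are purged.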

innodb_use_purge_thread = 4

seems to be enough for this workload on the server.

The first of the following graphs shows the throughput [tps] over time, up to 3500 sec.

The second tracks the “History list length” over the same period.

"Norm 1.0.4": Normal InnoDB Plugin 1.0.4 without XtraDB specific options

"xtra p_t 0": XtraDB 1.0.4-new (no purge_thread)

"xtra p_t 1": XtraDB 1.0.4-new (single purge_thread similar to Dimitri's)

"xtra p_t 4": XtraDB 1.0.4-new (4 purge threads)

The graphs show…

And the average tps over the last 300 seconds is…

"Norm 1.0.4": 5725.47

"xtra p_t 0": 4699.33

"xtra p_t 1": 7130.3

"xtra p_t 4": 9118 (about 60%up from Normal Plugin 1.0.4)

In the end, these XtraDB tunings give faster and more stable performance on the db_STRESS benchmark.
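The “about 60% up” figure can be rechecked from the averages reported above. This is just the arithmetic, with the four averages copied from this post; the function name is my own.

```python
# Average tps over the last 300 seconds, as reported in this post.
avg_tps = {
    "Norm 1.0.4": 5725.47,
    "xtra p_t 0": 4699.33,
    "xtra p_t 1": 7130.3,
    "xtra p_t 4": 9118.0,
}


def percent_change(value, baseline):
    """Relative change of value against baseline, in percent."""
    return (value / baseline - 1.0) * 100.0


baseline = avg_tps["Norm 1.0.4"]
for name, tps in avg_tps.items():
    change = percent_change(tps, baseline)
    print(f"{name}: {tps:8.2f} tps ({change:+.1f}% vs Norm 1.0.4)")
```

With 4 purge threads the result is a little over 59% above the normal Plugin, while XtraDB without a purge thread is below the Plugin baseline on this workload.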

—————————————–

(Added 2009.10.29)

<FAQ: Is XtraDB slower than Plugin?>

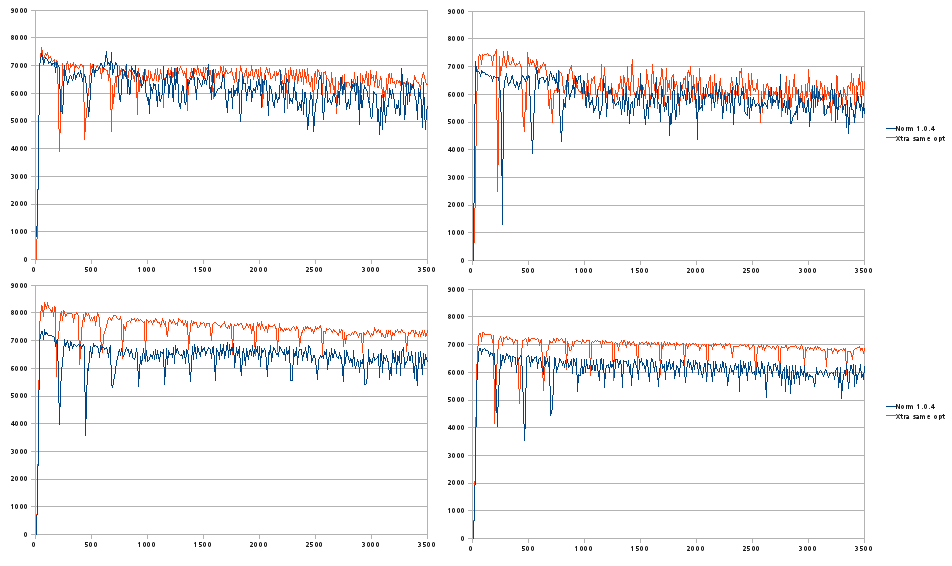

I’d like to say “no” to this question. We have been adding many tuning options to XtraDB, but they are not effective in all cases, and sometimes performance may get worse because a value is “not proper” or “too much”. We should choose the options carefully. XtraDB is based on InnoDB Plugin, so at the very least we can configure XtraDB the same as InnoDB Plugin. The following graphs show results for XtraDB and Plugin with the same options and the same database.

top-left (same conditions as the graphs above):

innodb_flush_log_at_trx_commit = 2

innodb_doublewrite = true

top-right:

innodb_flush_log_at_trx_commit = 1

innodb_doublewrite = true

bottom-left:

innodb_flush_log_at_trx_commit = 2

innodb_doublewrite = false

bottom-right:

innodb_flush_log_at_trx_commit = 1

innodb_doublewrite = false

It seems that XtraDB is not slower than Plugin here at least.

Starting from this baseline, we can tune further using XtraDB-specific options!

Why do you make XtraDB slower than Plugin? 🙂

Couple questions on your settings. What are the disadvantages of setting innodb_stats_update_need_lock = 0? What about innodb_support_xa = false, does that introduce possible issues with synchronization with the binlogs?

Ryan,

The only disadvantage of “innodb_stats_update_need_lock = 0” is that the “DATA_FREE” column of “SHOW TABLE STATUS” is not updated. For now, the value is only used by “SHOW TABLE STATUS”, so there is no influence on other operations.

I set “innodb_support_xa = false” as a small optimization. The undo entries may differ slightly from those written with “true”. Also, this test doesn’t use binlogs.

Thank you

Yasufumi,

Is it safe to not use binlog in production? I thought binlog is needed for recovery purpose.

If binlog is needed, then innodb_support_xa is also needed, right?

How much of a performance penalty does enabling binlog & innodb_support_xa incur then?

Andy,

This benchmark is for discussing the pure performance problems in the InnoDB engine, so the settings favor simplicity over safety. The binlog may introduce another bottleneck. For real systems, of course, the binlog is important. Discussing performance with the binlog is the next step, I think. I will test it as you suggest in the next post and discuss the performance disadvantages (or fix them).

Whether innodb_support_xa is needed depends on each user. With innodb_support_xa=1 and the binlog enabled, fsync() is done twice for each transaction: a “prepare” fsync() and a “commit” fsync(), with the binlog write for the transaction done between them. So it clearly affects performance. But MySQL seems to reconcile InnoDB and the binlog during crash recovery: a “prepared but not committed” transaction seems to be rolled back if it is not yet committed in the binlog, and committed if it is committed in the binlog. So innodb_support_xa=1 seems to be safer than innodb_support_xa=0, but it is not perfect.

There is still a possibility that a transaction is committed in InnoDB but not written to the binlog, for example after a power failure.
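The recovery rule described above can be written down as a tiny model. This is only a sketch of the behavior as I described it, not MySQL’s actual recovery code; the function name and the transaction ids are hypothetical.

```python
def resolve_prepared(prepared_in_innodb, committed_in_binlog):
    """Decide the fate of transactions left "prepared" in InnoDB after a crash.

    prepared_in_innodb: ids of transactions prepared but not committed in InnoDB.
    committed_in_binlog: set of ids whose commit was written to the binlog.
    Returns (to_commit, to_roll_back) as two sorted lists.
    """
    to_commit, to_roll_back = [], []
    for xid in prepared_in_innodb:
        if xid in committed_in_binlog:
            # The binlog has the transaction, so InnoDB commits it too.
            to_commit.append(xid)
        else:
            # The binlog never saw it, so InnoDB rolls it back.
            to_roll_back.append(xid)
    return sorted(to_commit), sorted(to_roll_back)


# Hypothetical example: three prepared transactions, two reached the binlog.
commit, roll_back = resolve_prepared([3, 1, 2], {2, 3})
print("commit:", commit, "roll back:", roll_back)
```

The model only covers transactions that are still in the prepared state, so it says nothing about a transaction that is already committed in InnoDB but missing from the binlog.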

Thank you

Yasufumi,

Great analysis of the bottlenecks and a very constructive approach in the proposed solutions! I’m impatient to test the new XtraDB! :-))

few questions so far:

– do you mean that database/datafile should be recreated to be able to use several rollback segments? (setting the option is not enough?)

– from your TPS graph, it is not visible when XtraDB outperforms the “Normal 1.0.4”; any way to see the “whole” picture?

Thanks a lot for sharing it!

Rgds,

-Dimitri

Yasufumi,

Great. Looking forward to your next post on binlog.

Would love to see how innodb_support_xa=1 affects performance too!

Recently we’ve found a lot of clients (they are using Pythian’s auditing services, for one-time short-term health checks) that have heavy writing workloads solve problems very easily:

Change the table to MyISAM. Many people are using MySQL to keep logs, or other information that’s mostly INSERT. I think it’s important to mention that option as well, especially since this solution doesn’t seem to be good for lots of UPDATE statements either.

(P.S. The challenge question is somewhat broken, because 4+8 does in fact equal 12. Previously, when I put 12, I got denied with “you failed the challenge!” but when I put 11 in, it worked. Is there an off-by-1 error?)

Yasufumi,

I see you’re using innodb_log_buffer_size=128M. Is that value really required, or did you just set it that high to make sure it is not the bottleneck?

My assumption is that there is no need for a log buffer larger than twice the peak amount of log records that can be generated per second.