Accidentally me and Baron played with InfoBright (see https://www.percona.com/blog/2009/09/29/quick-comparison-of-myisam-infobright-and-monetdb/) this week. And following Baron’s example I also run the same load against MonetDB. Reading comments to Baron’s post I tied to load the same data to LucidDB, but I was not successful in this. I tried to analyze a bigger dataset and I took public available data http://www.transtats.bts.gov/DL_SelectFields.asp?Table_ID=236&DB_Short_Name=On-Time about USA domestic flights with information about flight length and delays. The data is available from 1988 to 2009 in chunks per month, so I downloaded 252 files (for 1988-2008 years) with size from 170MB to 300MB each. In total raw data is about 55GB. Average amount of rows in each chunk is 483762.46 (the query Q0 is:

|

1 |

select avg(c1) from (select year,month,count(*) as c1 from ontime group by YEAR,month) t; |

for InfoBright and

|

1 |

with t as (select yeard,monthd,count(*) as c1 from ontime group by YEARD,monthd) select AVG(c1) FROM t |

for MonetDB. For InfoBright it took 4.19 sec to execute and 29.9 sec for MonetDB, but it’s almost single case where MonetDB was significantly slower) Few words about environment: server Dell SC1425, with 4GB of RAM and Dual Intel(R) Xeon(TM) CPU 3.40GHz. InfoBright (ICE) version: 5.1.14-log build number (revision)=IB_3.2_GA_5316(ice) MonetDB version: server v5.14.2, based on kernel v1.32.2 LucidDB was 0.9.1 The table I loaded data is:

|

1 |

CREATE TABLE `ontime` ( `Year` year(4) DEFAULT NULL, `Quarter` tinyint(4) DEFAULT NULL, `Month` tinyint(4) DEFAULT NULL, `DayofMonth` tinyint(4) DEFAULT NULL, `DayOfWeek` tinyint(4) DEFAULT NULL, `FlightDate` date DEFAULT NULL, `UniqueCarrier` char(7) DEFAULT NULL, `AirlineID` int(11) DEFAULT NULL, `Carrier` char(2) DEFAULT NULL, `TailNum` varchar(50) DEFAULT NULL, `FlightNum` varchar(10) DEFAULT NULL, `Origin` char(5) DEFAULT NULL, `OriginCityName` varchar(100) DEFAULT NULL, `OriginState` char(2) DEFAULT NULL, `OriginStateFips` varchar(10) DEFAULT NULL, `OriginStateName` varchar(100) DEFAULT NULL, `OriginWac` int(11) DEFAULT NULL, `Dest` char(5) DEFAULT NULL, `DestCityName` varchar(100) DEFAULT NULL, `DestState` char(2) DEFAULT NULL, `DestStateFips` varchar(10) DEFAULT NULL, `DestStateName` varchar(100) DEFAULT NULL, `DestWac` int(11) DEFAULT NULL, `CRSDepTime` int(11) DEFAULT NULL, `DepTime` int(11) DEFAULT NULL, `DepDelay` int(11) DEFAULT NULL, `DepDelayMinutes` int(11) DEFAULT NULL, `DepDel15` int(11) DEFAULT NULL, `DepartureDelayGroups` int(11) DEFAULT NULL, `DepTimeBlk` varchar(20) DEFAULT NULL, `TaxiOut` int(11) DEFAULT NULL, `WheelsOff` int(11) DEFAULT NULL, `WheelsOn` int(11) DEFAULT NULL, `TaxiIn` int(11) DEFAULT NULL, `CRSArrTime` int(11) DEFAULT NULL, `ArrTime` int(11) DEFAULT NULL, `ArrDelay` int(11) DEFAULT NULL, `ArrDelayMinutes` int(11) DEFAULT NULL, `ArrDel15` int(11) DEFAULT NULL, `ArrivalDelayGroups` int(11) DEFAULT NULL, `ArrTimeBlk` varchar(20) DEFAULT NULL, `Cancelled` tinyint(4) DEFAULT NULL, `CancellationCode` char(1) DEFAULT NULL, `Diverted` tinyint(4) DEFAULT NULL, `CRSElapsedTime` INT(11) DEFAULT NULL, `ActualElapsedTime` INT(11) DEFAULT NULL, `AirTime` INT(11) DEFAULT NULL, `Flights` INT(11) DEFAULT NULL, `Distance` INT(11) DEFAULT NULL, `DistanceGroup` TINYINT(4) DEFAULT NULL, `CarrierDelay` INT(11) DEFAULT NULL, `WeatherDelay` INT(11) DEFAULT NULL, `NASDelay` INT(11) DEFAULT NULL, `SecurityDelay` INT(11) DEFAULT NULL, `LateAircraftDelay` INT(11) DEFAULT NULL, `FirstDepTime` varchar(10) DEFAULT NULL, `TotalAddGTime` varchar(10) DEFAULT NULL, `LongestAddGTime` varchar(10) DEFAULT NULL, `DivAirportLandings` varchar(10) DEFAULT NULL, `DivReachedDest` varchar(10) DEFAULT NULL, `DivActualElapsedTime` varchar(10) DEFAULT NULL, `DivArrDelay` varchar(10) DEFAULT NULL, `DivDistance` varchar(10) DEFAULT NULL, `Div1Airport` varchar(10) DEFAULT NULL, `Div1WheelsOn` varchar(10) DEFAULT NULL, `Div1TotalGTime` varchar(10) DEFAULT NULL, `Div1LongestGTime` varchar(10) DEFAULT NULL, `Div1WheelsOff` varchar(10) DEFAULT NULL, `Div1TailNum` varchar(10) DEFAULT NULL, `Div2Airport` varchar(10) DEFAULT NULL, `Div2WheelsOn` varchar(10) DEFAULT NULL, `Div2TotalGTime` varchar(10) DEFAULT NULL, `Div2LongestGTime` varchar(10) DEFAULT NULL, `Div2WheelsOff` varchar(10) DEFAULT NULL, `Div2TailNum` varchar(10) DEFAULT NULL, `Div3Airport` varchar(10) DEFAULT NULL, `Div3WheelsOn` varchar(10) DEFAULT NULL, `Div3TotalGTime` varchar(10) DEFAULT NULL, `Div3LongestGTime` varchar(10) DEFAULT NULL, `Div3WheelsOff` varchar(10) DEFAULT NULL, `Div3TailNum` varchar(10) DEFAULT NULL, `Div4Airport` varchar(10) DEFAULT NULL, `Div4WheelsOn` varchar(10) DEFAULT NULL, `Div4TotalGTime` varchar(10) DEFAULT NULL, `Div4LongestGTime` varchar(10) DEFAULT NULL, `Div4WheelsOff` varchar(10) DEFAULT NULL, `Div4TailNum` varchar(10) DEFAULT NULL, `Div5Airport` varchar(10) DEFAULT NULL, `Div5WheelsOn` varchar(10) DEFAULT NULL, `Div5TotalGTime` varchar(10) DEFAULT NULL, `Div5LongestGTime` varchar(10) DEFAULT NULL, `Div5WheelsOff` varchar(10) DEFAULT NULL, `Div5TailNum` varchar(10) DEFAULT NULL ) ENGINE=BRIGHTHOUSE DEFAULT CHARSET=latin1; |

Last fields starting with “Div*” are not really used. Load procedure: Infobright: the loader that comes with ICE version is very limited and I had to transform files to quote each field. After that load statement is:

|

1 |

mysql -S /tmp/mysql-ib.sock -e "LOAD DATA INFILE '/data/d1/AirData_ontime/${YEAR}_$i.txt.tr' INTO TABLE ontime FIELDS TERMINATED BY ',' ENCLOSED BY '"'" ontime |

The load time for each chunk was about 30s/chunk in initial years and up to 48s/chunk for 2008 year. And total load time is 8836 sec (2.45h). The size of database after load is 1.6G which is impressive and give 1:34 compress ratio. MonetDB: It took some time to figure out how to load text data ( I really wish developers improve documentation), but finally I ended up with next load statement:

|

1 |

/usr/local/monetdb/bin/mclient -lsql --database=ontime -t -s "COPY 700000 records INTO ontime FROM '/data/d1/AirData_ontime/${Y EAR}_$i.txt' USING DELIMITERS ',','n','"' NULL AS '';" |

Load time: 13065 sec ( 3.6h) Database size after load is 65G , which is discouraging. It seems it does not use any compression, and it’s bigger than original data. LucidDB Here it took time to find how to execute command from command line using included

|

1 |

sqlline |

utility, and I did not understand how to do that, so I generated big SQL file which contained load statements. Load of each chunk was significantly slower starting with about 60 sec/chunk for initial year and constantly growing to 200 sec / chunk for 2000 year. On 2004 year (after about 5h of loading) the load failed by some reason and I did not try to repeat, as I would not fit in timeframe I allocated for this benchmark. Maybe I will try sometime again. Query execution So I really have data for InfoBright and MonetDB, let see how fast they are in different queries. First favorite query for any database benchmarker is

|

1 |

SELECT count(*) FROM ontime; |

. Both InforBritgh and MonetDB executes it immediately with result 117023290 rows Now some random queries I tried again both databases: -Q1: Count flights per day from 2000 to 2008 years

|

1 |

SELECT DayOfWeek, count(*) AS c FROM ontime WHERE YearD BETWEEN 2000 AND 2008 GROUP BY DayOfWeek ORDER BY c DESC |

with result: [ 5, 7509643 ] [ 1, 7478969 ] [ 4, 7453687 ] [ 3, 7412939 ] [ 2, 7370368 ] [ 7, 7095198 ] [ 6, 6425690 ] And it took 7.9s for MonetDB and 12.13s for InfoBright. -Q2: Count of flights delayed more than 10min per day of week for 2000-2008 years

|

1 |

SELECT DayOfWeek, count(*) AS c FROM ontime WHERE DepDelay>10 AND YearD BETWEEN 2000 AND 2008 GROUP BY DayOfWeek ORDER BY c DESC |

Result: [ 5, 1816486 ] [ 4, 1665603 ] [ 1, 1582109 ] [ 7, 1555145 ] [ 3, 1431248 ] [ 2, 1348182 ] [ 6, 1202457 ] And 0.9s execution for MonetDB and 6.37s for InfoBright. -Q3: Count of delays per airport for years 2000-2008

|

1 |

SELECT Origin, count(*) AS c FROM ontime WHERE DepDelay>10 AND YearD BETWEEN 2000 AND 2008 GROUP BY Origin ORDER BY c DESC LIMIT 10 |

[ “ORD”, 739286 ] [ “ATL”, 736736 ] [ “DFW”, 516957 ] [ “PHX”, 336360 ] [ “LAX”, 331997 ] [ “LAS”, 307677 ] [ “DEN”, 306594 ] [ “EWR”, 262007 ] [ “IAH”, 255789 ] [ “DTW”, 248005 ] with 1.7s for MonetDB and 7.29s for InfoBright -Q4: Count of delays per Carrier for 2007 year

|

1 |

SELECT carrier, count(*) FROM ontime WHERE DepDelay>10 AND YearD=2007 GROUP BY carrier ORDER BY 2 DESC |

[ “WN”, 296293 ] [ “AA”, 176203 ] … With 0.27s for MonetDB and 0.99sec for InfoBright But it obvious that the more flight carrier has, the more delays, so to be fair, let’s calculate -Q5: Percentage of delays for each carrier for 2007 year. It is a bit more trickier, as for InfoBright and MonetDB you need different query: MonetDB:

|

1 |

WITH t AS (SELECT carrier, count(*) AS c FROM ontime WHERE DepDelay>10 AND YearD=2007 GROUP BY carrier), t2 AS (SELECT carrier, count(*) AS c2 FROM ontime WHERE YearD=2007 GROUP BY carrier) SELECT t.carrier, c, c2, c*1000/c2 as c3 FROM t JOIN t2 ON (t.Carrier=t2.Carrier) ORDER BY c3 DESC |

InfoBright:

|

1 |

SELECT t.carrier, c, c2, c*1000/c2 as c3 FROM (SELECT carrier, count(*) AS c FROM ontime WHERE DepDelay>10 AND Year=2007 GROUP BY carrier) t JOIN (SELECT carrier, count(*) AS c2 FROM ontime WHERE Year=2007 GROUP BY carrier) t2 ON (t.Carrier=t2.Carrier) ORDER BY c3 DESC; |

I am using c*1000/c2 here, because MonetDB seems using integer arithmetic and, with c/c2 I received just 1. So result is: [ “EV”, 101796, 286234, 355 ] [ “US”, 135987, 485447, 280 ] [ “AA”, 176203, 633857, 277 ] [ “MQ”, 145630, 540494, 269 ] [ “AS”, 42830, 160185, 267 ] [ “B6”, 50740, 191450, 265 ] [ “UA”, 128174, 490002, 261 ] … with execution time: 0.5s for MonetDB and 2.92s for InfoBright. Warnings: do not try EXPLAIN this query in InfoBright. MySQL is really stupid here, and EXPLAIN for this query took 6 min! If you wonder about carriers – EV is Atlantic Southeast Airlines and US is US Airways Inc. 35.5% flights of Atlantic Southeast Airlines was delayed on more than 10 mins! -Q6: Let’s try the same query for wide range of years 2000-2008: Result is: [ “EV”, 443798, 1621140, 273 ] [ “AS”, 299282, 1207960, 247 ] [ “B6”, 191250, 787113, 242 ] [ “WN”, 1885942, 7915940, 238 ] [ “FL”, 287815, 1220663, 235 ] … And execution 12.5s MonetDB and 21.83s InfoBright. (AS is Alaska Airlines Inc. and B6 is JetBlue Airways) -Q7: Percent of delayed (more 10mins) flights per year: MonetDB:

|

1 |

with t as (select YEARD,count(*)*1000 as c1 from ontime WHERE DepDelay>10 GROUP BY YearD), t2 as (select YEARD,count(*) as c2 from ontime GROUP BY YEARD) select t.YEARD, c1/c2 FROM t JOIN t2 ON (t.YEARD=t2.YEARD) |

InfoBright:

|

1 |

SELECT t.YEARD, c1/c2 FROM (select YEARD,count(*)*1000 as c1 from ontime WHERE DepDelay>10 GROUP BY YearD) t JOIN (select YEARD,count(*) as c2 from ontime GROUP BY YEARD) t2 ON (t.YEARD=t2.YEARD) |

with result: [ 1988, 166 ] [ 1989, 199 ] [ 1990, 166 ] [ 1991, 147 ] [ 1992, 146 ] [ 1993, 154 ] [ 1994, 165 ] [ 1995, 193 ] [ 1996, 221 ] [ 1997, 191 ] [ 1998, 193 ] [ 1999, 200 ] [ 2000, 231 ] [ 2002, 163 ] [ 2003, 153 ] [ 2004, 192 ] [ 2005, 210 ] [ 2006, 231 ] [ 2007, 245 ] [ 2008, 219 ] And with execution time 27.9s MonetDB and 8.59s InfoBright. It seems MonetDB does not like scanning wide range of rows, the slowness here is similar to Q0. -Q8: As final I tested most popular destination in sense count of direct connected cities for different diapason of years.

|

1 |

SELECT DestCityName, COUNT( DISTINCT OriginCityName) FROM ontime WHERE Year BETWEEN N and M GROUP BY DestCityName ORDER BY 2 DESC LIMIT 10; |

Years, InfoBright, MonetDB 1y, 5.88s, 0.55s 2y, 11.77s, 1.10s 3y, 17.61s, 1.69s 4y, 37.57s, 2.12s 10y, 79.77s, 29.14s UPDATE (5-Oct-2009): James Birchall recommended to use hint COMMENT ‘lookup’ for fields in InfoBright that have less 10.000 distinct values. I tried that, and it affected only these queries. Results with changes: Years, InfoBright, MonetDB 1y, 1.74s, 0.55s 2y, 3.68s, 1.10s 3y, 5.44s, 1.69s 4y, 7.22s, 2.12s 10y, 17.42s, 29.14s -Q9: And prove that MonetDB does not like to scan many records, there is query

|

1 |

select year,count(*) as c1 from ontime group by YEAR |

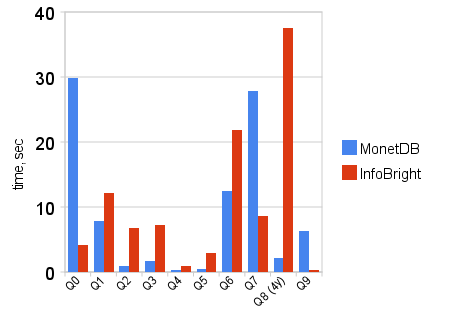

which shows how many records per years +——+———+ | year | c1 | +——+———+ | 1989 | 5041200 | | 1990 | 5270893 | | 1991 | 5076925 | | 1992 | 5092157 | | 1993 | 5070501 | | 1994 | 5180048 | | 1995 | 5327435 | | 1996 | 5351983 | | 1997 | 5411843 | | 1998 | 5384721 | | 1999 | 5527884 | | 2000 | 5683047 | | 2001 | 5967780 | | 2002 | 5271359 | | 2003 | 6488540 | | 2004 | 7129270 | | 2005 | 7140596 | | 2006 | 7141922 | | 2007 | 7455458 | | 2008 | 7009728 | +——+———+ And execution time: MonetDB: 6.3s and InfoBright: 0.31s To group all results there is graph:  Conclusions:

Conclusions:

I am open to run any other queries if you want to compare or get info about air performance.

Hi guys,

We’ll download the public data set and send you instructions for setting up a successful bulk load for LucidDB.

JVS

John,

Sure, thank you for help. I’d happy to run similar queries against LucidDB.

Hi Vadim,

“I do not know what is the magic behind the curtain, they also do not have indexes like InfoBright.”

MonetDB does have indexes, but in contrast to most other engines, MonetDB creates and destroys them itself upon need. This comes from the “fewer knobs to tune is better” approach that MonetDB takes.

I’m still trying to understand here why “MonetDB does not like to scan many records”, as it seems to me at first sight that a full scan is made in most queries. Anyhow, if it does a plain full scan, it for sure loses from Infobright which really benefits from their excellent compressed storage in that case.

Thanks.

“Worth to note that MongoDB supports all INSERT / UPDATE / DELETE statements (and space is price for that as I understand), while InfoBright ICE edition allows you only LOAD DATA. InfoBright Enterprise allows INSERT / UPDATE but that also is not for online transactions processing.”

I guess, here you meant MonetDB, instead of MongoDB? 🙂

Anyway, good post.

How come people from InfoBright did not offer you a full commercial edition right after your first post on InfoBright?

Vadim, very interesting results.

You can also try sphinxsearch (http://www.sphinxsearch.com/) for some of those queries (it can now group by and order by). I’ve done some tests on the smaller dataset, and it gives very good results: http://www.arubin.org/blog/2009/10/01/reporting-queries-with-sphinx/

Will be interesting to see how sphinx performs on 55G

Tomaz,

Sure MonetDB in this case.

MongoDB is also getting popular and we discuss it periodically, but that’s different story

Alexander,

Sure I know that feature in Sphinx. I am going to have talk on OpenSQL Camp about this side of Sphinx, I may use this dataset.

What would be interesting to see it on multi-cpu box and run Sphinx query in N parallel processes.

Vadim,

Couple of questions and notes

1) Did you run query once or several times (so if any self tuning needs to be done it is done) ?

2) I’m wondering if data set you have was giving undue advantage to MonetDB. I see you have touch may be 1/10 of columns which with 4GB of memory gets close to amount of memory you have. I trust Infobright and compression can cost you when data is in memory but if working set is significantly more than amount of memory things may change.

3) What is about Joins ? So far we have only looked at single table queries which do not stress the optimizer a lot. It would be very interesting to see some more complicated multiple table queries too.

4) What is about MyISAM ? It would be really nice to keep it as a baseline.

Peter,

1) I did run queries in loop until I got stable repeatable number.

as 2-4) there indeed much more experiments can be done.

Hi Vadim!

thanks – really nice to see you guys looking into these analytical databases. I’m just writing to inform you that the link to the graph seems broken.

Roland,

Thanks, I fixed images, posted to localhost instead of Google Docs.

Any points on way into analytical databases ?

Vadim

“Any points on way into analytical databases”

no I have no particular on-topic points. I just added a comment as FYI re. the broken link, and used the opportunity to thank you for the comparison. I happen to do some BI/DWH for my job, but our volumes are so modest that I haven’t had the need for a special purpose product yet. I’m just interested and try to keep up with all those developments.

Vadim,

Infobright ICE does some serious optimisations on columns if they have less then 10,000 unique entries in the column and if you specify “COMMENT ‘lookup'” in the definition (they automatically generate and maintain an ENUM, I think). Think of them like indexes.

If you change the definitions for the key columns in the queries, does that change your results at all?

James,

That’s good point, I will try to change column definition for fields affected in queries.

Good post, but why not try to run TPC-H test ?

David,

TPC-H is boring and everyone can run it 🙂 Also it uses some unreal data. Database vendors often publish TPC-H by themselves: see e.g. http://www.monetdb.nl/projects/monetdb/SQL/Benchmark/TPCH/index.html.

And I know some companies optimizes performance of database especially for TPC-H load ( I do not point here to InfoBright nor MonetDB, I do not have that facts).

So better to take some independent and something that is more interesting for me.

James,

I did changes you mentioned, it really affected only Q8, but really affected significantly.

The execution time for InfoBright now is:

1y: 1.74 s

2y: 3.68 s

3y: 5.44 s

4y: 7.22 s

10y: 17.42 s

Thanks Vadim!

I was hoping you’d do something like this! I really appreciate the use of real world data because in my experience random, dummy data without any similarity to real world data just doesn’t give realistic info when it comes to large datasets.

I’m using ICE in production & the data compression is impressive (think green! as Brian Aker says). The query performance is impressive. But queries really have to be optimized and that is a challenge without explain. We’re not using joins (but unlike mysql you can have more columns) and we’re only using INTs, no varchars or date (tho my test with using date resulted in a very very slight increase in load time, not anything noticeable in query time). But if you mess up & run a query with curdate() in it, oh boy is that slow. Then do NOT kill it. That’s a bug (reported & they are working on it).

Thanks for doing these benchmarks.

erin

Hey Vadim,

One more variation for InfoBright…

You might try using the

FlightTimefields instead of theYear,Month,DayOfWeekstuff for your range queries. I found that the Knowledge Grid does some wonderful things with range queries over dates that it didn’t do for straight integers (even simple equality).James.

Hi Vadim,

We’ve written up a page with instructions on the LucidDB load (including experimental support for parallel load):

http://pub.eigenbase.org/wiki/LucidDbOtp

Best results will probably be achieved with one year per INSERT statement (theoretically you could use a massive UNION ALL to do them all at once, but I haven’t tested that).

We’re guessing that the performance degradation and eventual failures you hit may have been due to the blanks for number fields (which LucidDB is picky about); the cleansing CASE expressions are supplied to fix those. In case there are other problems, we’ve included a section on how to enable row rejection for debugging them.

Please let me know if you need any help with getting it working or tuned; we’ll be interested to see your results. LucidDB is targeted at complex schemas and queries, but we’d like to know where we stand even on simple table+query environments.

John,

Thank you, I will try it in next couple days.

Do you have public examples with complex queries and schemas, beside TPC-H ?

Thanks,

Vadim

It’s great to see these independent tests of Infobright Community Edition (ICE) and other open source products. The massive compression you saw is typical of what ICE and IEE (enterprise edition) users see.

A couple of comments in regards to Infobright:

While we have lots of ICE users with relatively small databases (which we would consider under 200GB), the real power of the product can be seen when the database is considerably larger(hundreds of gigabytes to tens of terabytes.) That’s when the Knowledge Grid advantages really show, as it’s ability to eliminate the need to access large amounts of data results in great query performance versus other products. Typically we also see that the kinds of queries people run don’t have a where clause that encompasses half the rows in the databases. How about doing a test against a 500 GB database? Try it with putting in a typical report date range (a month?) and see what kind of results you get.

As far as getting access to IEE as mentioned by someone above, a free trial is available via download on the Infobright.com website, or we could send it to you if you want. The compression and query speed will be comparable to ICE, but there are more options for loading data and faster load speed.

Carl Gelbart

Sr. S.E.

Infobright

Carl,

Thank you for following our blog.

I do not have bigger dataset on the hands that I can publish on public. When I have something interesting I will ask access to IEE.

Do you have data that you can share ?

Vadim,

The best examples of high schema and query complexity I know of are locked away inside of the now-defunct LucidEra. CRM analytic schemas there involved numerous fact tables (e.g. leads, opportunities, orders, forecasts, quotas), joining on many conforming dimensions (e.g. time, lead owners, salesreps, territories, campaigns, prospects, customers, opportunity type/status, pricing, partners, discounts, on and on…), and in fact many of the fact and dimension “tables” were actually views joining underlying base tables in order to make the warehouse schema accessible via Mondrian’s dimensional model.

But if you want to see a reasonable amount of query complexity on a public dataset, use Mondrian’s FOODMART schema with LucidDB:

http://pub.eigenbase.org/wiki/LucidDbOlap

And then execute MDX queries involving lots of filtered dimensions, and trace the underlying SQL (you’ll see a lot of star joins). The data volume is piddling though.

For schema complexity, I’m not aware of any, but if you can find something that already has Mondrian set up with PostgreSQL or MySQL, we have an automated procedure for replicating that into LucidDB:

http://pub.eigenbase.org/wiki/LucidDbMondrianReplication

Dear Vadim,

We appreciate your tryout of the system. We did not found the time yet to load the referenced data and re-run the experiments to shed some light on the questions raised. (Does RITA http://www.transtats.bts.gov/DL_SelectFields.asp?Table_ID=236&DB_Short_Name=On-Time has a (hidden) textual interface to quickly download the files? Can I download your cvs files?)

– we are aware that the documentation can be improved, especially in areas where confusion may arise due to SQL 2003 compliance.

– the parallel loading in 5.14 was turned off due to a design error that could materialize in COPY with initial offsets. The November release has it fixed. (on tpch-sf10 loading it is locally 3 x faster then the release you used)

– MonetDB does not rely heavily on value compression (the X100 project, now part of VectorWise/Ingres does)

MonetDB does, however, use the minimal data types required to store information.

– MonetDB automatically builds a dictionary system for strings

string heaps = 64KB are opportunistically (imperfect) duplicate eliminated

– The growth in database size most likely comes from the logical references (8bytes) into the dictionary(short strings)

– Q7 would be a target for us to run with the statement modifiers EXPLAIN (for plan) and TRACE (for detailed performance trace)

We would appreciate those for inspection.

– same for Q9. Note that MonetDB does not create any a priory (persistent) index, sorting or clustering to answer aggregate queries.

Everything is done at runtime and upon need. Including sampling for grouping.

I guess that Infobright benefits here from its partitioning information and compression over sorted tables (year).

Q3 is an example that highlights the differences in the ‘rough’.

regards,

Martin Kersten

The MonetDB Team

‘- MonetDB automatically builds a dictionary system for strings

string heaps smaller then 64KB are fully duplicate eliminated

string heaps larger then 64KB are opportunistically (imperfect) duplicate eliminated

Matin,

Thank you for follow up.

you can download data by direct links like

http://www.transtats.bts.gov/Download/On_Time_On_Time_Performance_1988_1.zip

just change year and month in loop.

I am going to try another benchmark, suggested by John Sichi http://www.cs.umb.edu/~poneil/StarSchemaB.PDF, but on 500GB+ data size. Should I wait on your

November release, I guess parallel load will be useful. What is ETA for release ?

Can I try X100 project or it is not available yet ?

Thanks,

Vadim

Vadim,

Thanks for the url. That looks a lot easier then a webform. I will install it as well.

We have a monthly bug fix release cycle and a 3-4 month feature release cycle.

The feature release date is the last days of November. That version is now internally used

as a release candidate. (if you are in a hurry we can provide you with a copy)

The SSB is a reduced version of TPC-H. We have looked at it shortly.

X100 is a product under development (http://www.vectorwise.com/index_js.php?page=mission_overview).

Presumably becoming available for a wider public Q1 2010.

regards, Martin

Dear Vadim,

The csv files have been obtained without problem. Can you sent me the SQL schema used for MonetDB?

It appears that some input files have errors (or I used wrong column types).

thanks, Martin

Martin,

I used next schema to load files

CREATE TABLE ontime (

YearD int DEFAULT NULL,

Quarter tinyint DEFAULT NULL,

MonthD tinyint DEFAULT NULL,

DayofMonth tinyint DEFAULT NULL,

DayOfWeek tinyint DEFAULT NULL,

FlightDate date DEFAULT NULL,

UniqueCarrier char(7) DEFAULT NULL,

AirlineID int DEFAULT NULL,

Carrier char(2) DEFAULT NULL,

TailNum varchar(50) DEFAULT NULL,

FlightNum varchar(10) DEFAULT NULL,

Origin char(5) DEFAULT NULL,

OriginCityName varchar(100) DEFAULT NULL,

OriginState char(2) DEFAULT NULL,

OriginStateFips varchar(10) DEFAULT NULL,

OriginStateName varchar(100) DEFAULT NULL,

OriginWac int DEFAULT NULL,

Dest char(5) DEFAULT NULL,

DestCityName varchar(100) DEFAULT NULL,

DestState char(2) DEFAULT NULL,

DestStateFips varchar(10) DEFAULT NULL,

DestStateName varchar(100) DEFAULT NULL,

DestWac int DEFAULT NULL,

CRSDepTime int DEFAULT NULL,

DepTime int DEFAULT NULL,

DepDelay int DEFAULT NULL,

DepDelayMinutes int DEFAULT NULL,

DepDel15 int DEFAULT NULL,

DepartureDelayGroups int DEFAULT NULL,

DepTimeBlk varchar(20) DEFAULT NULL,

TaxiOut int DEFAULT NULL,

WheelsOff int DEFAULT NULL,

WheelsOn int DEFAULT NULL,

TaxiIn int DEFAULT NULL,

CRSArrTime int DEFAULT NULL,

ArrTime int DEFAULT NULL,

ArrDelay int DEFAULT NULL,

ArrDelayMinutes int DEFAULT NULL,

ArrDel15 int DEFAULT NULL,

ArrivalDelayGroups int DEFAULT NULL,

ArrTimeBlk varchar(20) DEFAULT NULL,

Cancelled tinyint DEFAULT NULL,

CancellationCode char(1) DEFAULT NULL,

Diverted tinyint DEFAULT NULL,

CRSElapsedTime int DEFAULT NULL,

ActualElapsedTime int DEFAULT NULL,

AirTime int DEFAULT NULL,

Flights int DEFAULT NULL,

Distance int DEFAULT NULL,

DistanceGroup TINYINT DEFAULT NULL,

CarrierDelay int DEFAULT NULL,

WeatherDelay int DEFAULT NULL,

NASDelay int DEFAULT NULL,

SecurityDelay int DEFAULT NULL,

LateAircraftDelay int DEFAULT NULL,

FirstDepTime varchar(10) DEFAULT NULL,

TotalAddGTime varchar(10) DEFAULT NULL,

LongestAddGTime varchar(10) DEFAULT NULL,

DivAirportLandings varchar(10) DEFAULT NULL,

DivReachedDest varchar(10) DEFAULT NULL,

DivActualElapsedTime varchar(10) DEFAULT NULL,

DivArrDelay varchar(10) DEFAULT NULL,

DivDistance varchar(10) DEFAULT NULL,

Div1Airport varchar(10) DEFAULT NULL,

Div1WheelsOn varchar(10) DEFAULT NULL,

Div1TotalGTime varchar(10) DEFAULT NULL,

Div1LongestGTime varchar(10) DEFAULT NULL,

Div1WheelsOff varchar(10) DEFAULT NULL,

Div1TailNum varchar(10) DEFAULT NULL,

Div2Airport varchar(10) DEFAULT NULL,

Div2WheelsOn varchar(10) DEFAULT NULL,

Div2TotalGTime varchar(10) DEFAULT NULL,

Div2LongestGTime varchar(10) DEFAULT NULL,

Div2WheelsOff varchar(10) DEFAULT NULL,

Div2TailNum varchar(10) DEFAULT NULL,

Div3Airport varchar(10) DEFAULT NULL,

Div3WheelsOn varchar(10) DEFAULT NULL,

Div3TotalGTime varchar(10) DEFAULT NULL,

Div3LongestGTime varchar(10) DEFAULT NULL,

Div3WheelsOff varchar(10) DEFAULT NULL,

Div3TailNum varchar(10) DEFAULT NULL,

Div4Airport varchar(10) DEFAULT NULL,

Div4WheelsOn varchar(10) DEFAULT NULL,

Div4TotalGTime varchar(10) DEFAULT NULL,

Div4LongestGTime varchar(10) DEFAULT NULL,

Div4WheelsOff varchar(10) DEFAULT NULL,

Div4TailNum varchar(10) DEFAULT NULL,

Div5Airport varchar(10) DEFAULT NULL,

Div5WheelsOn varchar(10) DEFAULT NULL,

Div5TotalGTime varchar(10) DEFAULT NULL,

Div5LongestGTime varchar(10) DEFAULT NULL,

Div5WheelsOff varchar(10) DEFAULT NULL,

Div5TailNum varchar(10) DEFAULT NULL

);

As MonetDB did not allow me to use “Year” and “Month” columns names, I renamed to “YearD”, “MonthD”.

I did some pre-processing of csv files, i.e. I put all columns into quotes ” “.

Statement to load data into tables was:

/usr/local/monetdb/bin/mclient -lsql –database=ontime -t -s “COPY 700000 records INTO ontime FROM ‘/data/d1/AirData_ontime/${YEAR}_$i.txt.clean’ USING DELIMITERS ‘,’,’n’,'”‘ NULL AS ”;”

Dear Vadim et.al.

This data warehouse is interesting, as it is one from the ‘wild’.

The dimension and fact tables have been combined into a single table.

Furthermore, the varchar(10) used often in the schema is an

overshoot for the actual values stored.

I have ran the load and queries on the MonetDB Nov 2009 RC.

The results can be seen here http://www.cwi.nl/~mk/ontimeReport

The results align with those reported here. However, we may consider

reviving an old manual technique applied in an older version of MonetDB,

which would bring the storage down to ca 10 GB.

regards, Martin

Vadim et.al.

(not sure if my earlier message got through, if so ignore this one)

I have installed the database and ran the queries against

the MonetDB Nov 2009 RC.

The results are summarized in http://www.cwi.nl/~mk/ontimeReport.

regards, Martin

Martin,

I see you have comment about load time and datasize.

Is there something I should change to get better results ?

Thanks.

Vadim

Vadim

The load time is with the default loader settings in the Nov 2009 RC.

Unlike the Aug 2009 release, it uses all possible threads to act in

the loading.

Further improvements, which is still possible, would call for more

development in the loader algorithms itself. There is still quite

some room to improve further, because we have not reached our CPU

nor IO capacity limit. The current algorithm properly deals with

variable sized input and requests for OFFSET into the input stream.

(which may be coming from stdin)

For utmost loading speed there is an attachment option, but that

is a totally different story and only relevant for dealing with

tables of >1TB.

The database size was a little surprise. The OnTime table looks

like a flattened star-schema. This means we have a lot of small

dimension tables, as indicated in the count overview. It would

not be a design aimed at scalability.

Furthermore, any varchar() object in MonetDB leads to an overhead

of 8 bytes, a logical reference from a column to a string area.

This hurts if the actual collection of strings only consists of

a handful values and we have 120M references.

The way to deal with is well known, and the functionality

is part of MonetDB4 as ENUM types. We might put a little compression

optimizer in the pipeline, that recognizes and handles this case

directly and transparently for the user.

SQL users would obtain the same result, if they replaced the

corresponding columns with a dictionary table and a TINYINT or

SMALLINT and relied on the join to couple them.

This, however, would not be our preferred way.

regards, Martin

There is comment from David Lutz, InfoBright:

Hello! I have been testing with this data set, as well, thanks to your suggestion. There are many differences in our two environments and I don’t want to post the whole test as it would be almost as big as your original post.

What I did want to offer is that I performed the test on 10 years of data using the current version of Infobright Enterprise Edition (3.2.2) which has a multi-threaded loader. I loaded different years and my total raw data was only ~27GB in size (~1.5GB compressed). My experience in loading was very consistent for each subsequent year at between 4m12s and 4m20s for each complete year (concatenated the months into a single file) or around 2,560 seconds. The total record count was 70,097,260 records.

Another way of saying that would be 273,817 records/second versus your experience of 13,244 records/second (117,023,290 records in 8,836 seconds) with the single-threaded loader provided in Infobright Community Edition, a ~20X improvement (on my server) and using the DDL below.

The DDL is the other thing. When appropriate I used the COMMENT ‘LOOKUP’ attribute offered by Infobright to transparently convert repetitive text strings to internal, integer lookup values. This improves load times, compression on those columns, and query performance when one of these columns is used for filtering, joining or aggretating on. This is apparent in the improvements in response time for several of the queries above.

The DDL I used is included below:

DROP TABLE IF EXISTS ontime;

CREATE TABLE ontime(

Year SMALLINT NOT NULL, — 1 Year

Quarter TINYINT NOT NULL, — 2 Quarter (1-4)

Month TINYINT NOT NULL, — 3 Month

DayofMonth TINYINT NOT NULL, — 4 Day of Month

DayOfWeek TINYINT NOT NULL, — 5 Day of Week

FlightDate DATE NOT NULL, — 6 Flight Date (YYYY-MM-DD) [not (yyyymmdd)]

UniqueCarrier CHAR(2) NOT NULL COMMENT ‘LOOKUP’, — 7 Unique Carrier Code. When the same code has been used by multiple …

— field for analysis across a range of years.

AirlineID SMALLINT NOT NULL, — 8 An identification number assigned by US DOT to identify a unique …

Carrier CHAR(2) NOT NULL COMMENT ‘LOOKUP’, — 9 Code assigned by IATA and commonly used to identify a carrier. As the …

TailNum CHAR(6) NOT NULL COMMENT ‘LOOKUP’, — 10 Tail Number

FlightNum SMALLINT NOT NULL, — 11 Flight Number

Origin CHAR(3) NOT NULL COMMENT ‘LOOKUP’, — 12 Origin Airport

OriginCityName VARCHAR(50) NOT NULL COMMENT ‘LOOKUP’, — 13 Origin Airport, City Name

OriginState CHAR(2) NOT NULL COMMENT ‘LOOKUP’, — 14 Origin Airport, State Code

OriginStateFips TINYINT NOT NULL, — 15 Origin Airport, State Fips

OriginStateName VARCHAR(50) NOT NULL COMMENT ‘LOOKUP’, — 16 Origin Airport, State Name

OriginWac TINYINT NOT NULL, — 17 Origin Airport, World Area Code

Dest CHAR(3) NOT NULL COMMENT ‘LOOKUP’, — 18 Destination Airport

DestCityName VARCHAR(50) NOT NULL COMMENT ‘LOOKUP’, — 19 Destination Airport, City Name

DestState CHAR(2) NOT NULL COMMENT ‘LOOKUP’, — 20 Destination Airport, State Code

DestStateFips TINYINT NOT NULL, — 21 Destination Airport, State Fips

DestStateName VARCHAR(50) NOT NULL COMMENT ‘LOOKUP’, — 22 Destination Airport, State Name

DestWac TINYINT NOT NULL, — 23 Destination Airport, World Area Code

CRSDepTime CHAR(4) NOT NULL COMMENT ‘LOOKUP’, — 24 CRS Departure Time (local time: hhmm)

DepTime CHAR(4) NOT NULL COMMENT ‘LOOKUP’, — 25 Actual Departure Time (local time: hhmm)

DepDelay DECIMAL(6,2) NOT NULL, — 26 Difference in minutes between scheduled and actual departure time. Early …

DepDelayMinutes DECIMAL(6,2) NOT NULL, — 27 Difference in minutes between scheduled and actual departure time. Early …

DepDel15 TINYINT NOT NULL, — 28 Departure Delay Indicator, 15 Minutes or More (1=Yes)

DepartureDelayGroups TINYINT NOT NULL, — 29 Departure Delay intervals, every (15 minutes from 180)

DepTimeBlk CHAR(9) NOT NULL, — 30 CRS Departure Time Block, Hourly Intervals

TaxiOut DECIMAL(6,2) NOT NULL, — 31 Taxi Out Time, in Minutes

WheelsOff CHAR(4) NOT NULL COMMENT ‘LOOKUP’, — 32 Wheels Off Time (local time: hhmm)

WheelsOn CHAR(4) NOT NULL COMMENT ‘LOOKUP’, — 33 Wheels On Time (local time: hhmm)

TaxiIn DECIMAL(6,2) NOT NULL, — 34 Taxi In Time, in Minutes

CRSArrTime CHAR(4) NOT NULL COMMENT ‘LOOKUP’, — 35 CRS Arrival Time (local time: hhmm)

ArrTime CHAR(4) NOT NULL COMMENT ‘LOOKUP’, — 36 Actual Arrival Time (local time: hhmm)

ArrDelay DECIMAL(6,2) NOT NULL, — 37 Difference in minutes between scheduled and actual arrival time. Early …

ArrDelayMinutes DECIMAL(6,2) NOT NULL, — 38 Difference in minutes between scheduled and actual arrival time. Early …

ArrDel15 TINYINT NOT NULL, — 39 Arrival Delay Indicator, 15 Minutes or More (1=Yes)

ArrivalDelayGroups TINYINT NOT NULL, — 40 Arrival Delay intervals, every (15-minutes from 180)

ArrTimeBlk CHAR(9) NOT NULL, — 41 CRS Arrival Time Block, Hourly Intervals

Cancelled TINYINT NOT NULL, — 42 Cancelled Flight Indicator (1=Yes)

CancellationCode VARCHAR(100), — 43 Specifies The Reason For Cancellation

Diverted TINYINT NOT NULL, — 44 Diverted Flight Indicator (1=Yes)

CRSElapsedTime DECIMAL(6,2) NOT NULL, — 45 CRS Elapsed Time of Flight, in Minutes

ActualElapsedTime DECIMAL(6,2) NOT NULL, — 46 Elapsed Time of Flight, in Minutes

AirTime DECIMAL(6,2) NOT NULL, — 47 Flight Time, in Minutes

Flights TINYINT NOT NULL, — 48 Number of Flights

Distance DECIMAL(6,2) NOT NULL, — 49 Distance between airports (miles)

DistanceGroup TINYINT NOT NULL, — 50 Distance Intervals, every 250 Miles, for Flight Segment

CarrierDelay DECIMAL(6,2), — 51 Carrier Delay, in Minutes

WeatherDelay DECIMAL(6,2), — 52 Weather Delay, in Minutes

NASDelay DECIMAL(6,2), — 53 NAS Delay, in Minutes

SecurityDelay DECIMAL(6,2), — 54 Security Delay, in Minutes

LateAircraftDelay DECIMAL(6,2), — 55 Late Aircraft Delay, in Minutes

FirstDepTime CHAR(4), — 56 First Gate Departure Time at Origin Airport

TotalAddGTime DECIMAL(6,2), — 57 Total Ground Time Away from Gate for Gate Return or Cancelled Flight

LongestAddGTime DECIMAL(6,2), — 58 Longest Time Away from Gate for Gate Return or Cancelled Flight

DivAirportLandings TINYINT, — 59 Number of Diverted Airport Landings

DivReachedDest TINYINT, — 60 Diverted Flight Reaching Scheduled Destination Indicator (1=Yes)

DivActualElapsedTime DECIMAL(6,2), — 61 Elapsed Time of Diverted Flight Reaching Scheduled Destination, in …

DivArrDelay DECIMAL(6,2), — 62 Difference in minutes between scheduled and actual arrival time for a …

DivDistance DECIMAL(6,2), — 63 Distance between scheduled destination and final diverted airport …

Div1Airport CHAR(3), — 64 Diverted Airport Code1

Div1WheelsOn CHAR(4), — 65 Wheels On Time (local time: hhmm) at Diverted Airport Code1

Div1TotalGTime DECIMAL(6,2), — 66 Total Ground Time Away from Gate at Diverted Airport Code1

Div1LongestGTime DECIMAL(6,2), — 67 Longest Ground Time Away from Gate at Diverted Airport Code1

Div1WheelsOff CHAR(4), — 68 Wheels Off Time (local time: hhmm) at Diverted Airport Code1

Div1TailNum CHAR(6), — 69 Aircraft Tail Number for Diverted Airport Code1

Div2Airport CHAR(3), — 70 Diverted Airport Code2

Div2WheelsOn CHAR(4), — 71 Wheels On Time (local time: hhmm) at Diverted Airport Code2

Div2TotalGTime DECIMAL(6,2), — 72 Total Ground Time Away from Gate at Diverted Airport Code2

Div2LongestGTime DECIMAL(6,2), — 73 Longest Ground Time Away from Gate at Diverted Airport Code2

Div2WheelsOff CHAR(4), — 74 Wheels Off Time (local time: hhmm) at Diverted Airport Code2

Div2TailNum CHAR(6), — 75 Aircraft Tail Number for Diverted Airport Code2

Div3Airport CHAR(3), — 76 Diverted Airport Code3

Div3WheelsOn CHAR(4), — 77 Wheels On Time (local time: hhmm) at Diverted Airport Code3

Div3TotalGTime DECIMAL(6,2), — 78 Total Ground Time Away from Gate at Diverted Airport Code3

Div3LongestGTime DECIMAL(6,2), — 79 Longest Ground Time Away from Gate at Diverted Airport Code3

Div3WheelsOff CHAR(4), — 80 Wheels Off Time (local time: hhmm) at Diverted Airport Code3

Div3TailNum CHAR(6), — 81 Aircraft Tail Number for Diverted Airport Code3

Div4Airport CHAR(3), — 82 Diverted Airport Code4

Div4WheelsOn CHAR(4), — 84 Wheels On Time (local time: hhmm) at Diverted Airport Code4

Div4TotalGTime DECIMAL(6,2), — 85 Total Ground Time Away from Gate at Diverted Airport Code4

Div4LongestGTime DECIMAL(6,2), — 86 Longest Ground Time Away from Gate at Diverted Airport Code4

Div4WheelsOff CHAR(4), — 87 Wheels Off Time (local time: hhmm) at Diverted Airport Code4

Div4TailNum CHAR(6), — 88 Aircraft Tail Number for Diverted Airport Code4

Div5Airport CHAR(3), — 89 Diverted Airport Code5

Div5WheelsOn CHAR(4), — 90 Wheels On Time (local time: hhmm) at Diverted Airport Code5

Div5TotalGTime DECIMAL(6,2), — 91 Total Ground Time Away from Gate at Diverted Airport Code5

Div5LongestGTime DECIMAL(6,2), — 92 Longest Ground Time Away from Gate at Diverted Airport Code5

Div5WheelsOff CHAR(4), — 93 Wheels Off Time (local time: hhmm) at Diverted Airport Code5

Div5TailNum CHAR(6) — 94 Aircraft Tail Number for Diverted Airport Code5

)

ENGINE=BRIGHTHOUSE

CHARACTER SET ascii

COLLATE ascii_bin

;

I would like to see the complete Infobright report, or at least

the basics of his server and an update of the summary table.

One remark, 70079260/2560 = 27374 records/second, so the

parallel loader is only twice as fast as the original number reported.

Ofcourse subject to hardware configuration settings.

regards, Martin

Dear Vadim,

I run the same benchmark against MonetDB5, the execution time is very short, however, after I run this same query for 10000 times, the mserver5 process used up to 1.5G memory and crashed. I can see some crash reports in the monetdb forum, but no memory leak report, could you give me some advices? Did you encouter this problem when you run the benchmark?

Thanks

Dear Frank,

I am not expert in MonetDB, but Martin Kersten is.

If he does not notice your question, I will pass it to him.

Dear Frank,

Sounds as an error I would like to reconfirm (and solve if correct).

Can you provide more information on this experiment on the MonetDB users’ list.

[email protected]

In particular, version used, platform characteristics, and experiment script.

Dear Vadim and Martin,

Thanks for your quick reply, I have post my experiment to the MonetDB users’list.

Dear all,

This benchmark was a good exercise to re-prioritize some of our development efforts towards improvements for daily BI use.

Although the original results were quite promising, we knew that low hanging fruit in the MonetDB stack was not harvested.

A peek preview on the performance results for the next feature release can be found in http://www.cwi.nl/~mk/ontimeReport

Happy newyear. Martin

Martin,

That’s impressive. I can’t recall so fast response with performance fixes with significant improvements.

Is that version available to download ?

First of all, I would like to thank Vadim and mysqlperformanceblog team for very interesting posts – I am a regular visitor of your web-site.

In our company we are looking for D/W solution which will allow us to do a real-time (10-15 sec) queries on 1B+ row tables. InfoBright, MonetDB, InfiniDB are in my short lists. Having read your article first time I was impressed by performance numbers of columnar databases, but now … I think that there are a lot of room for query optimization inside both MonetDB and Infobright.

For example, this query takes 7.9 sec for MonetDB and 12 sec for Infobright.

-Q1: Count flights per day from 2000 to 2008 years

SELECT DayOfWeek, count(*) AS c FROM ontime WHERE YearD BETWEEN 2000 AND 2008 GROUP BY DayOfWeek ORDER BY c DESC

This is a full column scan query. Group BY column has very low cardinality (7) => do grouping and aggregation in memory.

DayOfWeek requires only 3 bits per row => for 117M row table, it will need ~30Mb to store the whole column.

Loading column from disk (30MB), decompressing it and aggregating 117M numbers should not take more than 1 sec (actually, I think I can do it on my laptop in less than 0.5 sec).

Vadim, can you forward my e-mail address and question to MonetDB and Infobright guys?

This is query:

SELECT T.C FROM T WHERE (up to 4 simple filter conditions ANDed) ORDER BY T.B DESC LIMIT N;

The good selectivity is not guaranteed. It may require almost full table scan in a worst case. Can they do this type of query under 15 sec in a worst case on 1B table which is similar to the table from this post (120 columns – most of them with low cardinality – no strings only numbers)

Forgot to mention YearD column in my example. Of course we need to scan through 2 columns, but both are with very low cardinality and must be very compressible ones. The second column will add possibly 60Mb more data to load from disk.

Vlad,

I forwarded your question to my contacts in MonetDB and InfoBright.

Dear Vlad,

Sorry for the delay in answering your question regarding Q1 and compression.

Bit-wise compression is a two-edge sword. You save on IO and pay with CPU cycles.

The numbers reported for both MonetDB and Infobright on this blog already show

that compression is just one ingredient in the equation.

MonetDB used more diskspace and still performed very well.

In http://homepages.cwi.nl/~mk/ontimeReport you find more recent numbers.

In particular, Q1 runs currently within 1 sec on the 118M tuples.

It is certainly worth trying out the 1B version using the upcoming Feb2010 release.

Thanks, Martin

I will definitely give MonetDB a try.

hi Vadim,

what’s king of software are you using to make picture graph ? thanks in advance 😉

For anyone who’s interested in this data and/or in these queries as a benchmark, I’ve created a github repository:

https://github.com/eyalroz/usdt-ontime-tools

for automating the download of the CSVs and the loading of the data – at least into MonetDB. The numbers have changed significantly since 2009 – but there are also schema changes because of what BTS provides; some make the schema ‘tighter’ – e.g. the fact that the decimal values are not really decimal, they always end with .00 and are really ints, so my loading script cleans them up; but on the other hand, you can’t rely on having exactly 6 chars in the tailnum, and sometimes it’s even missing entirely.

I’ll be using this to try out some GPU decompression primitives.