Recently I was doing a little work for a client who has MyISAM tables with many columns (the same one Peter wrote about recently). The client’s performance is suffering in part because of the number of columns, which is over 200. The queries are generally pretty simple (sums of columns), but they’re ad-hoc (can access any columns) and it seems tailor-made for a column-oriented database.

I decided it was time to actually give Infobright a try. They have an open-source community edition, which is crippled but not enough to matter for this test. The “Knowledge Grid” architecture seems ideal for the types of queries the client runs. But hey, why not also try MonetDB, another open-source column-oriented database I’ve been meaning to take a look at?

What follows is not a realistic benchmark, it’s not scientific, it’s just some quick and dirty tinkering. I threw up an Ubuntu 9.04 small server on Amazon. (I used this version because there’s a .deb of MonetDB for it). I created a table with 200 integer columns and loaded it with random numbers between 0 and 10000. Initially I wanted to try with 4 million rows, but I had trouble with MonetDB — there was not enough memory for this. I didn’t do anything fancy with the Amazon server — I didn’t fill up the /mnt disk to claim the bits, for example. I used default tuning, out of the box, for all three databases.

The first thing I tried doing was loading the data with SQL statements. I wanted to see how fast MyISAM vs. MonetDB would interpret really large INSERT statements, the kind produced by mysqldump. But MonetDB choked and told me the number of columns mismatched. I found reference to this on the mailing list, and skipped that. I used LOAD DATA INFILE instead (MonetDB’s version of that is COPY INTO). This is the only way to get data into Infobright, anyway.

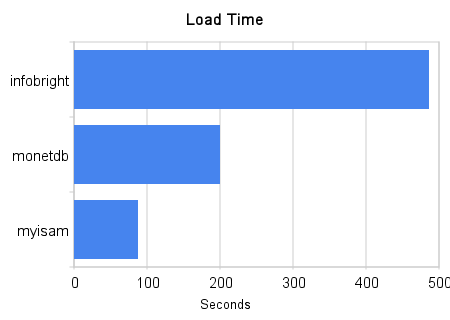

I loaded 1 million rows into the table. Here’s a graph of the times (smaller is better):

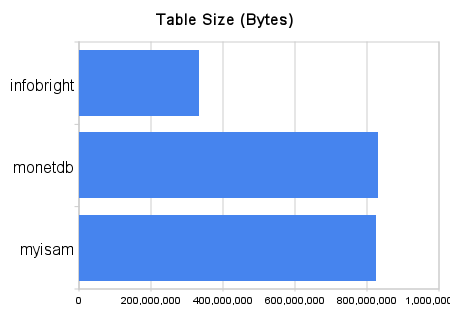

MyISAM took 88 seconds, MonetDB took 200, and Infobright took 486. Here’s the size of the resulting table on disk (smaller is better):

MyISAM is 787MB, MonetDB is 791MB, and Infobright is 317MB. Next I ran three queries:

|

1 2 3 |

select sum(c19), sum(c89), sum(c129) from t; select sum(c19), sum(c89), sum(c129) from t where c11 > 5; select sum(c19), sum(c89), sum(c129) from t where c11 < 5; |

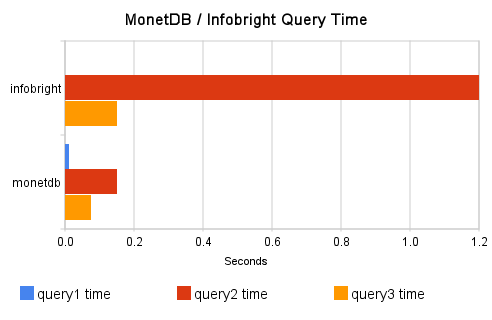

Graphs of query performance time for all three databases are really not very helpful, because MyISAM is so much slower that you can’t see the graphs for the others. So I’ll give the numbers and then omit MyISAM from the graphs. Here are the numbers for everything I measured:

| myisam | monetdb | infobright | |

|---|---|---|---|

| size (bytes)Â Â Â | 826000000Â Â Â | 829946723 | 332497242 |

| load time (seconds)Â Â Â | 88Â Â Â | 200Â Â Â | 486 |

| query1 time   | 3.4   | 0.012   | 0.0007 |

| query2 time   | 3.4   | 0.15   | 1.2 |

| query3 time   | 2.5   | 0.076   | 0.15 |

And here is a graph of Infobright duking it out with MonetDB on the three queries I tested (shorter bar is better):

I ran each query a few times, discarded the first run, and averaged the next three together.

A few miscellaneous notes: don’t forget that Infobright is not just a storage engine plugged into MySQL. It’s a complete server with a different optimizer, etc. This point was hammered home during the LOAD DATA INFILE, when I looked to see what was taking so long (I was tempted to use oprofile and see if there are sleep() statements). What did I see in ‘top’ but a program called bhloader. This bhloader program was the only thing doing anything; mysqld wasn’t doing a thing. LOAD DATA INFILE in Infobright isn’t what it seems to be. Otherwise, Infobright behaved about as I expected it to; it seemed pretty normal to a MySQL guy.

MonetDB was a bit different. I had to be a bit resourceful to get everything going. The documentation was for an old version, and was pretty sparse. I had to go to the mailing lists to find the correct COPY syntax — it wasn’t that listed in the online manual. And there were funny things like a “merovingian” process (think “angel”) that had to be started before the server would start, and I had to destroy the demo database and recreate it before I could start it as shown in the tutorials.

MonetDB has some unexpected properties; it is not a regular RDBMS. Still, I’m quite impressed by it in some ways. For example, it seems quite nicely put together, and it’s not at all hard to learn.

It doesn’t really “speak SQL” — it speaks relational algebra, and the SQL is just a front-end to it. You can talk XQuery to it, too. I’m not sure if you can talk dirty to it, but you can sure talk nerdy to it: you can, should you choose to, give it instructions in MonetDB Assembly Language (MAL), the underlying language. An abstracted front-end is a great idea; MySQL abstracts the storage backend, but why not do both? Last I checked, Drizzle is going this direction, hurrah!

EXPLAIN is enlightening and frightening! You get to see the intermediate code from the compiler. The goggles, they do nothing!

From what I was able to learn about MonetDB in an hour, I believe it uses memory-mapped files to hold the data in-memory. If this is true, it explains why I couldn’t load 4 million rows into it (this was a 32-bit Amazon machine).

The SQL implementation is impressive. It’s a really solid subset of SQL:2003, much more than I expected. It even has CTEs, although not recursive ones. (No, there is no REPLACE, and there is no INSERT/ON DUPLICATE KEY UPDATE.) I didn’t try the XQuery interface.

Although I didn’t try it out, there are what looks like pretty useful instrumentation interfaces for profiling, debugging and the like. The query timer is in milliseconds (why doesn’t mysql show query times in microseconds? I had to resort to Perl + Time::HiRes for timing the Infobright queries).

I think it can be quite useful. However, I’m not quite sure it’s useful for “general-purpose” database use — there are a number of limitations (concurrency, for one) and it looks like it’s still fairly experimental.

I am an Infobright user for a year and a half now.

One of the main differences between ICE (Infobright Community Edition) and IEE (Infobright Entreprise Edition) is the loader which is several times faster. So the performance would be similar to MonetDB on all point. The choice was made for me because I could leverage my MySQL expertise using Infobright.

Baron, I’m surprised by those LOAD DATA INFILE results. I didn’t catch how many rows you ended up loading, but from the size of the MyISAM table it looks like about a million. Infobright’s loader should do way better than 2000 rows per second, never mind what version you were using. For that sort of data set, even on the smallest Amazon VM, more than a minute of processing sounds excessive. Then again, my experience is on fairly beefy dedicated hardware so maybe I’m just off. I wouldn’t have expected it to be slower than MyISAM, though. On the other hand, if that result came from enterprise edition eval version using INSERT INTO, then I’m not surprised; that execution path is essentially useful only in the form of INSERT INTO .. SELECT .. and large batches, and even so, going through a loader file or better yet, a named pipe would be preferable for big imports.

The difference query2 and query3 times is another interesting anomaly. You might want to repeat with a count(*) included to see how much data it inspected. Another thing where you’d find a difference between a test with a random data set and most real-world material is that if the row values have any sort of pattern of repeating blocks or sequential values (rather than random), the KN evaluation power kicks in and allows the engine to skip entire chunks of data. The easy typical case is incremental time-series data where the timestamp essentially automatically partitions the data set.

EXPLAIN out of Infobright is entirely missing, and the closest thing to one is a spewage at the start of query execution into an optional debug log which looks surprisingly similar to the output you got from MonetDB.

PS. Your captcha mechanism seems to think 6+4 does not equal 10.

Osma, I was also surprised by the slow loading on Infobright. That’s why I joked that I wondered if the open-source version has been intentionally crippled with sleep(). (There’s no use dancing around it: the open-source version is crippleware. The only question is how badly crippled. Since it’s open-source, it might be fixable if anyone wants to sponsor it… hint hint. I have NOT looked at the code.)

I used the open-source version, ICE, and used LOAD DATA INFILE. It is a million rows from a flat comma-delimited file, the same file I loaded into MyISAM and into MonetDB.

The data’s random distribution surely works against the “knowledge grid” and “data pack” layout. I did not check, but I fully expect that it wasn’t able to skip most of the blocks. However, I agree that the difference between the less-than-five and greater-than-five results merits more investigation. My superficial knowledge of the Infobright data layout led me to believe the SUM(), MIN() and MAX() would be computed and stored per “data pack” so the query could be simply answered from the meta-data when all the values meet the WHERE clause. I see this is apparently true, but when there’s a WHERE clause naturally all the rows in the pack have to be examined — but I would expect that to have about an equal cost for both of the WHERE clauses. I saw in the manual that there are some ways to get data distribution statistics spit out into the debug log, so I might check that if I get a chance and see if I can learn something.

Our captcha is a constant pain in the rear end, but I don’t know exactly what the problem is — some caching or something. Apologies to anyone who gets bitten by it.

As a developer from the MonetDB team I am pleasantly surprised by the interest for MonetDB/SQL. Thank you very much for this interesting comparison. We are aware that our documentation is not in shape, but we welcome all suggestions and/or remarks! We are also all ears to hearing about issues with MonetDB. If you have any, please file a bug at our SourceForge bugtracker (easily found through our website). We also appreciate questions, suggestions, etc. through our mailing lists (also hosted at SourceForge). Thanks.

Fabian, the MonetDB team is doing a good job. I know how much work good documentation is!

What about storing the data in mysql but then loading it into Sphinx and querying that? I once used Sphinx for full table scans and it was much faster than doing it in myisam tables (mostly because multiple joins were involved and things would lock up like mad in a write heavy environment though).

I suppose scanning without indexing any textual data is kind of abusing a full-text search engine but I think that could be an interesting avenue to explore.

Guillaume, that’s actually one of Sphinx’s strengths, and I’m aware of a number of implementations of that type of thing. Sphinx is more than just full-text search.

I use both the Enterprise version and the community edition. I have not noticed that ICE would be crippled in any way other than what they state: it doesn’t support INSERT, UPDATE or DELETE, and it doesn’t parallelize data loading. I’ve never noted that the ICE loader would be slower otherwise; though any time you have to change the delimiter defaults or do any other data type conversion (as you’d have to do with a comma-delimited input file), it does become slower. I haven’t analyzed whether that’s caused by ICE or the MySQL upper layers. In my opinion, both are “crippled” by lack of ALTER TABLE. A columnar engine could be expected to easily support adding or dropping columns, but unfortunately Infobright does not (yet, anyway, though my guess would be they’re working on that).

Your guess regarding the knowledge grid and aggregation functions match my understanding and experience of how it works. In many cases, data layout naturally ends up, or can be semi-trivially designed to end up so that common queries can exclude most of the database by examining just the “knowledge grid”, thus saving significant amounts of I/O (in addition to all disk I/O also being compressed). The effect is even more dramatic when the queries have joins in them; taking the engine behavior and knowledge grid structure into consideration in the schema (typically by ADDING a column or two, rather than trying minimize their number as would be the case with row-based engines), ad-hoc joins can be extremely quick (not as quick as avoiding them, though). The more you have rows, and the more the join/where columns have distinct values with some sort of pattern to their distribution, the bigger the benefit — ie, it has a pretty excellent scale factor for many workloads.

Interesting. So using a tab-delimited file should load more quickly, you think? I wouldn’t mind testing that.

Baron,

Hopefully you will find customers who are interested in LucidDB (http://www.luciddb.com). It is another open-source RDBMS that is specialized for data warehouse queries. John spoke about it at the Percona Performance conference.

A feature that I always want to have is some form of MVCC so that I can do long-running queries while continuously updating the database from replication. I get that from InnoDB+MySQL minus the support for fast DW queries. I don’t get that from MyISAM+MySQL. IIRC LucidDB supports 1 writer with N readers via explicit snapshots.

I think InfoBright is amazing technology but I am not a fan of the names for it (knowledge grid, etc). Using an index that stores the min,max per column for each block of a database file (data packs or data pack nodes in Infobright) is also used by Netezza (they call it zone maps) and Oracle (not sure what they call it). Although Infobright does more this storing aggregated values per data pack. Monty also spoke at the MySQL Conference about this and called it ‘XDB indexes’. I hope a standard name emerges for this.

Mark, I figured it was better to play along with the silly names. How about “block” and “block meta-data” for standard names.

Mark, LucidDB does seem very interesting but right now it’s very Java-centric. If you’re using a different language you have to do something along the lines of writing java middleware to pass your queries along to LucidDB and pass the results back.

I have high hopes for the move to the http protocol though. Once that’s done if it has the performance characteristics I think it does I would consider writing a client for it.

Java centric == JDBC from http://www.luciddb.org/roadmap.html

When I said Java-centric I did indeed mean because you have to access the db through JDBC but also because there seems to be effort being put in to make sure LucidDB integrates nicely with other Java projects such as Pentaho’s Mondrian. That’s a very good thing but doesn’t help me much personally since introducing a Java dependency is something I want to avoid.

The great thing about open source though is that if it doesn’t exist and you have enough time you can hack it in yourself. Perhaps integrating a Thrift interface would help decouple the project from Java and make it easier to write client libraries to access the db. Something to add to my todo list I guess.

(Sorry for derailing the topic)

Baron,

Did you just run query once or did you run several times ? As I remember Infobright builds knowledge grid based on the queries which are being ran.

I ran them once, then ran them three times and averaged the three runs. I set global query_cache_size=0 first (ok, so it wasn’t out of the box after all).

Peter,

From my understanding “knowledge grid” is built for JOINs, but not for single table queries.

But anyway it is good to run several times 🙂

The data size is too small (fits in the ram) for the column layout to shine.

Regarding LucidDB clients for other languages: someone recently contributed a .net client, and the same person is working on getting the Tungsten MySQL->JDBC bridge working, which would give us a C/C++ client solution (and hopefully any other language which already works with a MySQL client, i.e. all of them).

Additional options for specific languages are here:

http://pub.eigenbase.org/wiki/LucidDbNonJavaClients

Why you don’t put the version used of each software? that’s is very important!! there are differences between infobright 3.1.1 and 3.2 (ICE vs IEE), MySQL 4.1 vs 5.0 vs 5.1, for example.

Because saying the version number crosses the line from a quick test to a lengthy test? 😉 But seriously,

./mysqld Ver 5.1.14-log for pc-linux-gnu on i686 (build number (revision)=IB_3.2_GA_5316(ice))

./mysqld-ubuntu Ver 5.0.75-0ubuntu10.2 for debian-linux-gnu on i486 ((Ubuntu))

MonetDB doesn’t seem to have such a simple way to find out, but dpkg says:

ii monetdb5-server 5.14.2-20090924 MonetDB database server version 5

By the way, in reply to an earlier comment/question about Infobright, I get

Baron, the “simple” way to find out MonetDB’s version is either by looking at merovingian’s logfile (the server issues a startup notice with its version), or by running mserver5 –version.

Thanks for the tip! That is what I was looking for, but I was out of my familiar terrain.

What’s Infobright’s sync behaviour? If it forces sync after each transaction (as is the default setting in InnoDB), then that could be rather slow on an Amazon machine if the transactions are small or implicit; Amazon’s service doesn’t deal with high IO rates too well.

LOAD DATA INFILE is one transaction. Infobright is transactional even over LOAD DATA, eg that needs to be committed, either automatically or explicitly, and can be rolled back as well. Easy to do when there can be only one client writing… Anyway, sync behavior isn’t really relevant in an ADMBS workload loading and querying thousands, if not millions of rows at a time. I did some followup testing as well, and that 2000 rows/sec figure seems about right after all — it’s the 200 columns that do it. Loading speed is in proportion to number of columns, and I’ve been dealing with 10-50 column tables myself..

More in http://www.fishpool.org/post/2009/10/03/Some-scaling-observations-on-Infobright

How does its performance compare to Hyperdex, Aerospike or RocksDB?

Hello Bason,

I have been comparing MySQL with MonetDB. As mentioned here, queries that took minutes in MySQL got executed in a matter of few seconds in Monet.

However, I found a real blockade with joins.

I have 2 tables – each with 150 columns. Among these (150+150) columns, around 60 are CHARACTER LARGE OBJECT type. Both the tables are populated with around 50,000 rows – with data in all the 150 columns. The average length of data in a CLOB type column is 9,000 (varying from 2 characters to 20,000 characters). The primary key of both the tables have same values and the join is always based on the primary key. The rows are by default inserted in ascending order on the primary key.

When I ran an inner join query on these two tables with about 5 criteria and with limit 1000, Monet processed this query in 5 seconds, which is completely impressive compared to MySQL’s (19 seconds).

But when I ran the same query with same criteria and limits using a left or right joins, Monet took around 5 minutes, which is clearly way behind MySQL’s (just 22 seconds).

I went through the logs using trace statement, but the traces of inner and left joins are more or less the same, except that time for each action is far higher in left join trace.

Also, the time taken for the same join query execution varies by 2 or 3 seconds when run at several time intervals.

Having read a lot about Monet’s speed compared to traditional relation row based DBs, I could feel that I am missing something, but couldn’t figure out what.

Can you please tell me why there is such a huge difference in such query execution time and how I can prevent it?

Much grateful to have any help. Thanks a lot in advance.

P.S.: I am running Monet on Macbook Pro – 2.3 GHz Core i7 – Quad core processor with 8 GB RAM.