In a previous post, Using DBdeployer to manage MySQL, Percona Server, and MariaDB sandboxes, we covered how we use DBdeployer within the Support Team to easily create MySQL environments for testing purposes. Here, I will expand on what Peter wrote in Installing MySQL with Docker, to create environments with more than one node. In particular, we will set up one master node, two slaves replicating from it, and a node using Orchestrator.

Before we start, let me say that this project should be used only for testing or proofs of concept, and that we don’t suggest running it in production. Additionally, although Percona provides support for these tools individually, this particular project is an in-house development and is used here for demonstration purposes only.

Docker Compose is a tool that lets us define and run many containers with one simple command:

```
shell> docker-compose up
```

All the definitions it needs live in a YAML file (docker-compose.yml): container names, images, and the networks to create. The online documentation is a good place to get acquainted with it, since a full walkthrough is out of scope for this blog post. However, let us know if you’d like to hear more about it in future blogs. Additionally, one can use a special .env file to define variables (or constants) for the YAML file to reference.
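To make the role of the two files more concrete, here is a minimal sketch that writes a hypothetical .env and docker-compose.yml pair into a directory. The service name, the variable names (PS_TAG, NODE_PREFIX, ROOT_PW), their values, and the network name are all assumptions made for illustration; they are not the contents of the project's actual files. The only behavior relied on is that Docker Compose substitutes `${VAR}` references with values from a .env file in the same directory.

```shell
#!/usr/bin/env bash
# Sketch only: writes an illustrative .env / docker-compose.yml pair.
# All names and values below are assumptions, not the project's real configuration.
write_compose_example() {
  local dir="$1"
  mkdir -p "$dir" || return 1

  # .env: key=value pairs that Compose substitutes into the YAML file.
  cat > "$dir/.env" <<'EOF'
PS_TAG=5.7
NODE_PREFIX=demo
ROOT_PW=secret
EOF

  # docker-compose.yml: a single MySQL-compatible container on a bridge network.
  cat > "$dir/docker-compose.yml" <<'EOF'
version: "3"
services:
  master:
    image: percona/percona-server:${PS_TAG}
    container_name: ${NODE_PREFIX}_master
    environment:
      MYSQL_ROOT_PASSWORD: ${ROOT_PW}
    networks:
      - demo_network
networks:
  demo_network:
    driver: bridge
EOF
}

# Usage (requires Docker and Docker Compose):
#   write_compose_example ./compose-demo && cd ./compose-demo && docker-compose up -d
```

Keeping the version tag and name prefix in .env means the YAML file can stay untouched when you want to test a different version.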

Orchestrator is a tool for managing MySQL replication and providing high availability for it. Again, refer to the online documentation (or this blog post from Tibor, Orchestrator: MySQL Replication Topology Manager) if you need more insight into the tool, since we won’t discuss that here.

If you haven’t yet heard about it, this is a great chance for you to set up a test environment in which you can check it out in more depth, easily! All you need is to have Docker and Docker Compose installed.

In the Support Team, we need to be flexible enough to cater to our customers’ diverse set of deployed versions, without having to spend much time setting up the testing environments needed. Getting the test environments up and running fast is not only desirable but needed.

All the files needed to run this are in this GitHub project. To clone it:

```
shell> git clone https://github.com/guriandoro/docker.git
shell> cd docker/
```

First, we need to run the MySQL replication environment. For this we can use the following commands:

```
shell> cd replication/master-slave/
shell> ./make.sh up
Creating network "global_docker_network" with driver "bridge"
Creating replication_agustin.286589_master ... done
Creating replication_agustin.286589_slave_1 ... done
Creating replication_agustin.286589_slave_2 ... done
---> Waiting for master node to be up...
............mysqld is alive
---> Creating repl user in master
---> Setting up slaves
---> Waiting for slave01 to be up...
.mysqld is alive
---> Waiting for slave02 to be up...
.mysqld is alive

Use the following commands to access BASH, MySQL, docker inspect and logs -f on each node:
run_bash_replication_agustin.286589_master
run_mysql_replication_agustin.286589_master
run_inspect_replication_agustin.286589_master
run_logs_replication_agustin.286589_master
[...output trimmed...]
               Name                          Command             State    Ports
------------------------------------------------------------------------------------
replication_agustin.286589_master    /docker-entrypoint.sh mysqld   Up      3306/tcp
replication_agustin.286589_slave_1   /docker-entrypoint.sh mysqld   Up      3306/tcp
replication_agustin.286589_slave_2   /docker-entrypoint.sh mysqld   Up      3306/tcp
```

There are several interesting things to note here. The first is that the script dynamically creates some wrappers for us so that accessing the containers is easy. For instance, to access the MySQL client on the master node, just execute ./run_mysql_replication_agustin.286589_master; or, to access BASH on the first slave, use ./run_bash_replication_agustin.286589_slave_1. The script uses your OS user name and the current working directory to come up with a unique string for the container names (to avoid collisions with other running containers). Additionally, we get wrappers for inspecting container information (docker inspect …) and checking logs (docker logs -f …), which can come in handy for debugging issues.

The second thing to note is that at the end, the script provides the output for docker-compose ps which will show us each container that was created, their state, and port redirections if any.
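As a rough illustration of the naming and wrapper ideas described above, the sketch below derives a "user.hex" tag from a user name and a directory, and writes a run_* wrapper for a container. It is not the code in make.sh: the function names are hypothetical, the use of a checksum of the path is an assumption (the real script may derive the string differently), and the exact commands inside the real wrappers may differ too.

```shell
#!/usr/bin/env bash
# Sketch only: the real make.sh may derive names and wrappers differently.

# Build "<user>.<6 hex chars>" from a user name and a directory path.
# Assumption: a checksum of the path is used here purely for illustration.
unique_tag() {
  local user="$1" dir="$2" crc
  # cksum prints "<crc> <byte count>" when reading from stdin.
  crc=$(printf '%s' "$dir" | cksum | cut -d' ' -f1)
  printf '%s.%06x\n' "$user" $(( crc & 0xffffff ))
}

# Write an executable run_<kind>_<container> wrapper into the current directory.
make_wrapper() {
  local kind="$1" container="$2" cmd
  case "$kind" in
    bash)    cmd="docker exec -it $container bash" ;;
    mysql)   cmd="docker exec -it $container mysql" ;;
    inspect) cmd="docker inspect $container" ;;
    logs)    cmd="docker logs -f $container" ;;
    *)       echo "unknown wrapper kind: $kind" >&2; return 1 ;;
  esac
  # The wrapper forwards any extra arguments to the underlying docker command.
  printf '#!/bin/sh\nexec %s "$@"\n' "$cmd" > "run_${kind}_${container}"
  chmod +x "run_${kind}_${container}"
}

# Usage:
#   tag=$(unique_tag "$(id -un)" "$PWD")
#   make_wrapper logs "replication_${tag}_master"
```

Because the tag depends on the working directory, two checkouts of the project by the same user would get different container names under this scheme.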

Now we have the three MySQL containers running: one master and two slaves pointing to it. Note down the master node name, since we will need it when we start Orchestrator. In this case, it is replication_agustin.286589_master, as seen at the end of the make.sh output.
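If you prefer not to copy the name by hand, something like the following sketch can pick it out of the list of running containers. The find_master helper is hypothetical, and it assumes the naming seen above, where the master container's name ends in "_master".

```shell
#!/usr/bin/env bash
# Sketch only: relies on the "<prefix>..._master" naming seen in the output above.

# Read container names on stdin and print the first one that ends in "_master"
# and (optionally) starts with the given prefix.
find_master() {
  awk -v p="${1:-}" '(p == "" || index($0, p) == 1) && /_master$/ { print; exit }'
}

# Usage (requires Docker):
#   master=$(docker ps --format '{{.Names}}' | find_master replication_)
#   echo "$master"
```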

To deploy the Orchestrator container, run the following commands:

```
shell> cd ../../orchestrator/single-node/
shell> ./make.sh up replication_agustin.286589_master
Creating orchestrator_agustin.8b5818_orchestrator ... done
---> Waiting for MYSQL master node to be up...
.mysqld is alive
---> Setting up Orchestrator on MySQL master node
---> Running Orchestrator discovery on MySQL master node
replication_agustin.286589_master:3306

Use the following commands to access BASH, MySQL, docker inspect and logs -f on each node:
run_bash_orchestrator_agustin.8b5818_orchestrator
run_inspect_orchestrator_agustin.8b5818_orchestrator
run_logs_orchestrator_agustin.8b5818_orchestrator

                  Name                               Command             State            Ports
------------------------------------------------------------------------------------------------------
orchestrator_agustin.8b5818_orchestrator   /bin/sh -c /entrypoint.sh   Up      0.0.0.0:32887->3000/tcp
```

In this case, we have a port redirection enabled, so host port 32887 is mapped to the Orchestrator container’s port 3000. This is important so that we can access the Orchestrator web UI. We decided the easiest way to handle this is to let Docker choose a random (free) port on the host. If you want, you can easily change this in the docker-compose.yml file.
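Since the host port is random, it can be handy to look it up from a script instead of reading it off the table. The sketch below parses one line of `docker port` output; the helper names host_port and ui_url are hypothetical and only meant as an illustration.

```shell
#!/usr/bin/env bash
# Sketch only: helper names are illustrative.

# Extract the host port from `docker port` output such as "0.0.0.0:32887".
host_port() {
  # Keep only the first line, then strip everything up to the last colon.
  printf '%s\n' "$1" | head -n 1 | sed 's/.*://'
}

# Build the URL of the web UI for a given host port.
ui_url() {
  printf 'http://localhost:%s\n' "$1"
}

# Usage (requires Docker and the running Orchestrator container):
#   port=$(host_port "$(docker port orchestrator_agustin.8b5818_orchestrator 3000/tcp)")
#   ui_url "$port"
```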

If you are using a remote server, you can use SSH to forward your local port 3000 to the mapped port on that server, like:

```
ssh -L 3000:localhost:32887 username@host
```

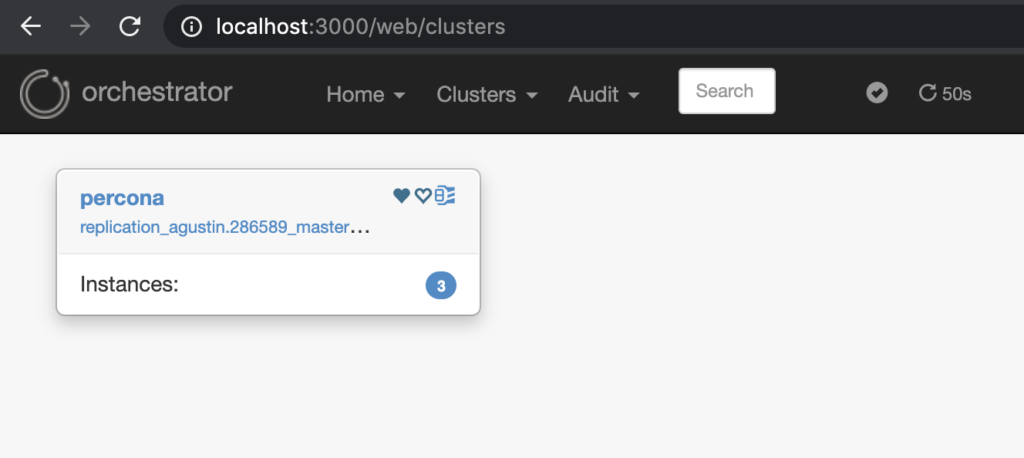

After this, you’ll be able to access localhost:3000 in your browser (or localhost:32887 if deploying this locally), and should see something like the following:

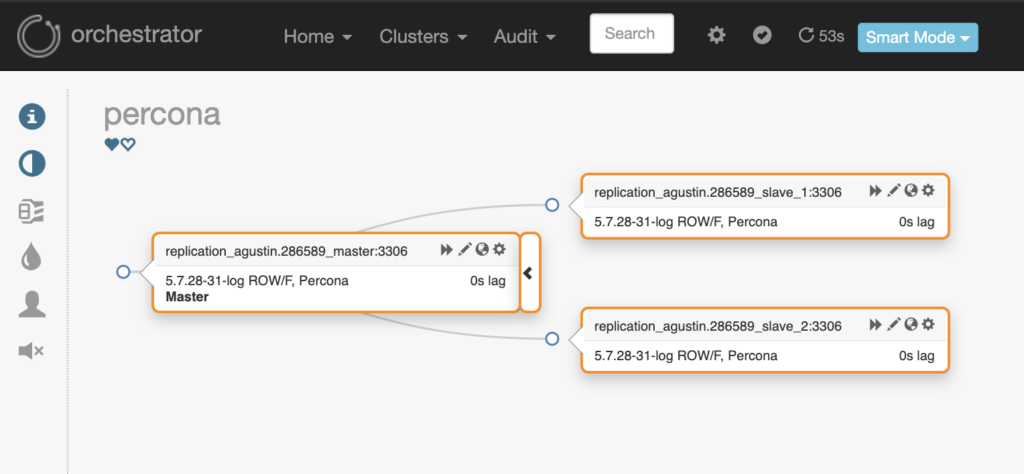

If you click on the cluster name (in our case it will be “percona” by default):

To see the same from the CLI, we can run the following command. Note how we use the wrapper for accessing bash within the Orchestrator container.

```
shell> ./run_bash_orchestrator_agustin.8b5818_orchestrator
bash-4.4# /usr/local/orchestrator/resources/bin/orchestrator-client -c topology -alias percona
replication_agustin.286589_master:3306   [0s,ok,5.7.28-31-log,rw,ROW,>>,P-GTID]
+ replication_agustin.286589_slave_1:3306 [0s,ok,5.7.28-31-log,rw,ROW,>>,P-GTID]
+ replication_agustin.286589_slave_2:3306 [0s,ok,5.7.28-31-log,rw,ROW,>>,P-GTID]
bash-4.4# exit
```
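If you want to use this output in a script, it is easy to reduce it to one line per instance. The sketch below is a hypothetical helper (topology_lag) that prints each instance followed by the first bracketed field, which I am assuming here to be the replication lag, based on how the output above reads.

```shell
#!/usr/bin/env bash
# Sketch only: assumes the first field inside "[...]" is the replication lag.

# Read `orchestrator-client -c topology` output on stdin and
# print "<host:port> <first bracketed field>" for every instance line.
topology_lag() {
  # Drop the leading "+ " tree markers, then split on "[", "]" and ",".
  sed 's/^[+ ]*//' | awk -F'[][,]' 'NF > 1 { split($1, h, " "); print h[1], $2 }'
}

# Usage (inside the Orchestrator container):
#   /usr/local/orchestrator/resources/bin/orchestrator-client -c topology -alias percona | topology_lag
```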

When you are done testing, you should call the scripts with the down argument.

```
shell> ./make.sh down
Stopping containers and cleaning up...
Stopping orchestrator_agustin.8b5818_orchestrator ... done
Removing orchestrator_agustin.8b5818_orchestrator ... done
Removing network global_docker_network
ERROR: error while removing network: network global_docker_network id 33d0b75a941c9bff3937edee72ceac41c7f0df60d395942e3f49a4de3a99071e has active endpoints
Deleting run_* scripts...
shell> cd ../../replication/master-slave/
shell> ./make.sh down
Stopping containers and cleaning up...
Stopping replication_agustin.286589_slave_2 ... done
Stopping replication_agustin.286589_slave_1 ... done
Stopping replication_agustin.286589_master  ... done
Removing replication_agustin.286589_slave_2 ... done
Removing replication_agustin.286589_slave_1 ... done
Removing replication_agustin.286589_master  ... done
Removing network global_docker_network
Deleting run_* scripts...
```

The error shown in the first make.sh run is expected: both projects share one network so that the containers can see each other transparently, and the MySQL containers are still attached to it when the Orchestrator project tries to remove it. You can disregard the error as long as you see exactly that message, and only in the first run.
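To check by hand whether the shared network is still in use, you can list the containers attached to it before removing anything. The sketch below shows one way to do that; network_is_idle is a hypothetical helper and is not part of make.sh.

```shell
#!/usr/bin/env bash
# Sketch only: network_is_idle is an illustrative helper, not part of make.sh.

# Succeeds when the given list of attached container names is empty
# (ignoring whitespace), i.e. when the network has no active endpoints left.
network_is_idle() {
  [ -z "$(printf '%s' "$1" | tr -d '[:space:]')" ]
}

# Usage (requires Docker):
#   attached=$(docker network inspect -f '{{range .Containers}}{{.Name}} {{end}}' global_docker_network)
#   if network_is_idle "$attached"; then
#     docker network rm global_docker_network
#   else
#     echo "still attached: $attached"
#   fi
```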

Orchestrator has several ways to run in a highly available mode. You can read more about it here. We’ll present one of them, using Raft and SQLite (as documented here).

The first step is to, again, have a working MySQL setup:

```
shell> ./make.sh up
[...output trimmed...]
               Name                          Command             State    Ports
------------------------------------------------------------------------------------
replication_agustin.286589_master    /docker-entrypoint.sh mysqld   Up      3306/tcp
replication_agustin.286589_slave_1   /docker-entrypoint.sh mysqld   Up      3306/tcp
replication_agustin.286589_slave_2   /docker-entrypoint.sh mysqld   Up      3306/tcp
```

Then, we can start our Orchestrator HA setup (in the same way as before, only using another Docker Compose project):

```
shell> cd ../../orchestrator/raft-3-nodes/
shell> ./make.sh up replication_agustin.286589_master
[...output trimmed...]
                   Name                                Command             State            Ports
--------------------------------------------------------------------------------------------------------
orchestrator_agustin.5af896_orchestrator_1   /bin/sh -c /entrypoint.sh   Up      0.0.0.0:32888->3000/tcp
orchestrator_agustin.5af896_orchestrator_2   /bin/sh -c /entrypoint.sh   Up      0.0.0.0:32889->3000/tcp
orchestrator_agustin.5af896_orchestrator_3   /bin/sh -c /entrypoint.sh   Up      0.0.0.0:32890->3000/tcp
```

Note how we now have three Orchestrator containers, each with a port redirection to the host. The leader will be node1: orchestrator_agustin.5af896_orchestrator_1. Again, we can use the CLI to check the topology:

```
shell> ./run_bash_orchestrator_agustin.5af896_orchestrator_1
bash-4.4# /usr/local/orchestrator/resources/bin/orchestrator-client -c topology -alias percona
replication_agustin.286589_master:3306   [0s,ok,5.7.28-31-log,rw,ROW,>>,P-GTID]
+ replication_agustin.286589_slave_1:3306 [0s,ok,5.7.28-31-log,rw,ROW,>>,P-GTID]
+ replication_agustin.286589_slave_2:3306 [0s,ok,5.7.28-31-log,rw,ROW,>>,P-GTID]
bash-4.4# exit
```
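If you want to confirm which of the three nodes is currently the Raft leader, one option is to ask each node over HTTP. The sketch below assumes, as I understand the Orchestrator documentation, that only the leader answers /api/leader-check with HTTP 200; pick_leader is a hypothetical helper, and the ports are the ones from the docker-compose ps output above.

```shell
#!/usr/bin/env bash
# Sketch only: assumes that only the Raft leader answers /api/leader-check with HTTP 200.

# Read "<host_port> <http_code>" pairs on stdin and print the port that answered 200.
pick_leader() {
  awk '$2 == 200 { print $1; exit }'
}

# Usage (requires curl and the three running Orchestrator containers):
#   for p in 32888 32889 32890; do
#     code=$(curl -s -o /dev/null -w '%{http_code}' "http://localhost:$p/api/leader-check")
#     printf '%s %s\n' "$p" "$code"
#   done | pick_leader
```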

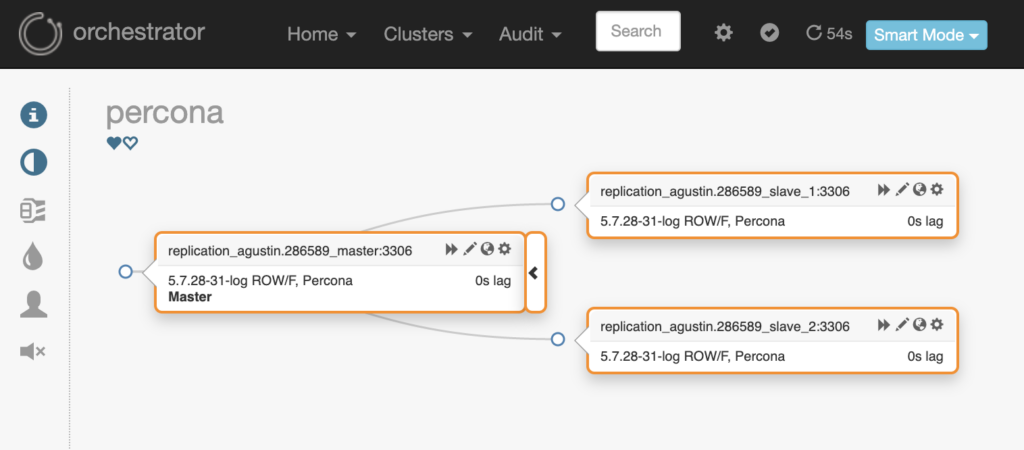

Or do it via the web UI (in my case, after creating the SSH tunnel to port 3000, as shown above):

Since we have Orchestrator up and running, let’s try to do a manual slave promotion:

After a few seconds, the web UI showed the final status (sometimes you need to wait a bit, or even reload the page while bypassing the browser cache).
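A promotion can also be requested from the CLI. The sketch below only prints an orchestrator-client call for a planned master switch to a chosen replica (graceful-master-takeover); this may not be exactly the same action as the one performed in the web UI above, and takeover_command is a hypothetical helper. Run the printed command from inside an Orchestrator container if you want to try it.

```shell
#!/usr/bin/env bash
# Sketch only: prints the command instead of running it, so it can be reviewed first.

# Print an orchestrator-client call that asks for a planned master switch
# to the given replica within the given cluster alias.
takeover_command() {
  local cluster="$1" replica="$2"
  printf 'orchestrator-client -c graceful-master-takeover -alias %s -d %s\n' "$cluster" "$replica"
}

# Usage:
#   takeover_command percona replication_agustin.286589_slave_1:3306
```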

To remove all containers and created scripts, run the following command:

```
shell> ./make.sh down
Stopping containers and cleaning up...
Stopping orchestrator_agustin.5af896_orchestrator_3 ... done
Stopping orchestrator_agustin.5af896_orchestrator_2 ... done
Stopping orchestrator_agustin.5af896_orchestrator_1 ... done
Removing orchestrator_agustin.5af896_orchestrator_3 ... done
Removing orchestrator_agustin.5af896_orchestrator_2 ... done
Removing orchestrator_agustin.5af896_orchestrator_1 ... done
Removing network global_docker_network
ERROR: error while removing network: network global_docker_network id 2ff6470ad512b513b4e2ffbf32049b3f18b79c768ad48da25654b917f533e0c9 has active endpoints
Deleting run_* scripts...
shell> cd ../../replication/master-slave/
shell> ./make.sh down
Stopping containers and cleaning up...
Stopping replication_agustin.286589_slave_1 ... done
Stopping replication_agustin.286589_slave_2 ... done
Stopping replication_agustin.286589_master  ... done
Removing replication_agustin.286589_slave_1 ... done
Removing replication_agustin.286589_slave_2 ... done
Removing replication_agustin.286589_master  ... done
Removing network global_docker_network
Deleting run_* scripts...
```

We have seen how to create three MySQL nodes and use Orchestrator with both a single node and a three-node HA setup. If you want to make any changes to the configurations provided by default, you can find all configuration files under the cnf_files/ subdirectory, in each of the projects’ directories. Enjoy testing!

Shlomi’s recent blog post on deploying testing instances: Orchestrator: what’s new in CI, testing & development

Great Job Agus!

Why can’t this be used in prod? What are the recommended steps to create something similar in prod? Thanks

I have customized docker-entrypoint.sh to run docker swarm for autodiscovery and Orchestrator tool in this post I don’t understand so much

Hi Kip,

It is not recommended to use in production because we haven’t devised it for that. We are lacking not only a QA process, but also don’t have things like data persistence in consideration. We use these for testing purposes, and treat them as disposable. If you want to tune them to your liking, you are free to do so, but you are also on your own 🙂

Bang,

I’m not sure what you don’t understand, or if what you sent is a question. If you need help with anything, please let us know in more detail. Note that you should be using the forums for these kinds of questions: https://forums.percona.com/. You can link back to this post if needed, for additional reference.