In one of my previous posts, I started a series on data compression options with MySQL. The first post focused on the more traditional compression options like InnoDB Barracuda page compression and MyISAM packing. In this second part, I’ll discuss a newer compression option available since MySQL 5.7: InnoDB transparent page compression with punch holes. First, I’ll describe the transparent page compression method and how it works. Then I’ll present results similar to those in the first post.

Before we can discuss transparent page compression, we must understand how InnoDB accesses its data pages. To access an InnoDB page, you need to know the tablespace (the file) and the offset of the page within the tablespace file. The offset is the tough part with data compression. If you just compress pages and concatenate them one after the other, the offsets are no longer at known intervals. InnoDB Barracuda page compression solves the problem by asking the DBA to guess the compression ratio of the pages through the compressed block size setting. For example, you have to tell InnoDB to use a disk block size of 8KB if you think the compression ratio will be around 2. Transparent page compression uses another approach: sparse files.
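To make the offset problem concrete, here is a small Python sketch (my own illustration, not InnoDB code; the function names are hypothetical). With fixed-size slots, locating a page is a single multiplication, whether the slot is a 16KB logical page or an 8KB compressed block. If variable-size compressed pages were simply concatenated, every offset would depend on the sizes of all the pages before it.

```python
from itertools import accumulate


def page_offset(page_no: int, slot_size: int) -> int:
    """Byte offset of a page when every page occupies a fixed-size slot.

    slot_size is 16384 for a regular 16KB tablespace, or the compressed
    block size (e.g. 8192) with Barracuda table compression.
    """
    return page_no * slot_size


def offsets_if_concatenated(compressed_sizes: list[int]) -> list[int]:
    """Offsets if variable-size compressed pages were written back to back.

    Each offset is the sum of all previous sizes, so finding page N would
    require knowing (or storing) the compressed sizes of pages 0..N-1.
    """
    return [0, *accumulate(compressed_sizes)][:-1]


if __name__ == "__main__":
    print(page_offset(3, 16384))  # 49152
    print(page_offset(3, 8192))   # 24576
    print(offsets_if_concatenated([6144, 9000, 4096]))  # [0, 6144, 15144]
```

Transparent page compression keeps the first formula with the full 16KB slot, whatever the compressed size, and relies on the filesystem to not allocate the unused part of each slot.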

A sparse file is a file with holes in it. Even though a sparse file may be very large, if there are a lot of holes in it, it may end up using a small amount of storage. On almost every Linux system, the /var/log/lastlog file is sparse:

```
yves@ThinkPad-P51:/var/log$ ls -lah lastlog
-rw-rw-r-- 1 root utmp 18M jan 5 16:09 lastlog
yves@ThinkPad-P51:/var/log$ du -hs lastlog
56K lastlog
```

While the ls command reports an apparent size of 18MB, the du command tells us the file actually uses only 56KB. Most of the space in the file is unallocated. When you access a sparse file, the filesystem has to map the logical offsets seen by the application to the blocks that are actually allocated on disk; the holes have no physical storage at all. A logical offset is therefore no longer directly tied to a physical position on the device.
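You can reproduce the ls versus du gap with a few lines of Python. This is a minimal sketch under the assumption of a Linux filesystem that supports sparse files (ext4, XFS, tmpfs); the function names are hypothetical. It seeks past the end of an empty file before writing, so everything before the payload is a hole that reads back as zeros.

```python
import os
import tempfile


def make_sparse_file(path: str, apparent_size: int, payload: bytes) -> None:
    """Create a file of apparent_size bytes with payload written at its end.

    The bytes before the payload are never written. On filesystems that
    support sparse files, that range stays unallocated (a hole).
    """
    with open(path, "wb") as f:
        f.seek(apparent_size - len(payload))
        f.write(payload)


def apparent_and_allocated(path: str) -> tuple[int, int]:
    """Return (apparent size, allocated size) in bytes, i.e. ls vs du."""
    st = os.stat(path)
    # On Linux, st_blocks is counted in 512-byte units.
    return st.st_size, st.st_blocks * 512


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "sparse.bin")
        make_sparse_file(path, 18 * 1024 * 1024, b"x" * 4096)
        apparent, allocated = apparent_and_allocated(path)
        print(f"apparent: {apparent} bytes, allocated: {allocated} bytes")
```

On a filesystem without sparse file support, the two numbers would simply be close to each other.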

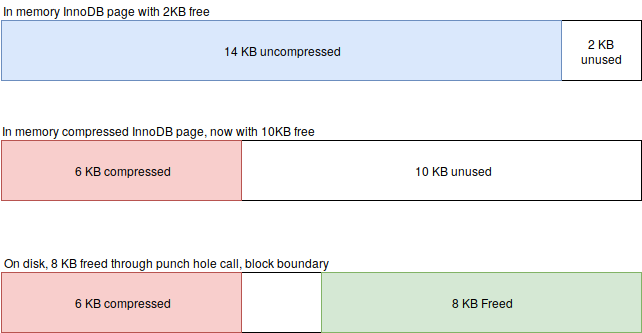

Now that we understand a bit about what sparse files are, let’s talk about the punch hole aspect. After writing something to disk, you can use the fallocate call to free up, or “punch”, part of it. The punched portion becomes a hole in the file, and the filesystem can later reuse the freed blocks to store something else. Let’s follow a simplified view of the steps required to write a transparently compressed InnoDB page.

In figure 1, an in-memory 16KB InnoDB page with 14KB of data is about to be written to disk. As part of the write process, the data is compressed to 6KB and the page is written to disk. Once it is written, InnoDB uses the fallocate call to release the 10KB of unused space. Since only full filesystem blocks can be released, only 8KB is really freed. The remaining unreleased space (2KB) is just zeroed. The freed space will be reused, either by the same file or by another one. For simplicity, let’s assume the space is reused by the same InnoDB file.
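The arithmetic of figure 1 can be written down as a small helper. This is a sketch, not InnoDB’s code: it assumes 4KB filesystem blocks (a common default, not stated above) and that the compressed data sits at the start of the page’s 16KB slot. The helper name is hypothetical; the Linux call mentioned in the docstring, with the real `FALLOC_FL_PUNCH_HOLE` and `FALLOC_FL_KEEP_SIZE` flags, is what a punch of the freed range would look like.

```python
def punch_hole_plan(page_size: int, compressed_size: int,
                    fs_block: int = 4096) -> tuple[int, int, int]:
    """Return (allocated, freed, zeroed) bytes for one compressed page.

    Only whole filesystem blocks can be deallocated, so the kept region is
    rounded up to the next block boundary; the tail of that last block is
    only zero-filled. On Linux, the release would look like
    fallocate(fd, FALLOC_FL_PUNCH_HOLE | FALLOC_FL_KEEP_SIZE,
              page_start + allocated, freed).
    """
    if not 0 < compressed_size <= page_size:
        raise ValueError("compressed_size must be in (0, page_size]")
    # Ceiling division to whole filesystem blocks, capped at the page size.
    allocated = min(-(-compressed_size // fs_block) * fs_block, page_size)
    freed = page_size - allocated
    zeroed = allocated - compressed_size
    return allocated, freed, zeroed


if __name__ == "__main__":
    # Figure 1: a 16KB page compressed to 6KB.
    print(punch_hole_plan(16 * 1024, 6 * 1024))  # (8192, 8192, 2048)
```

A page that does not compress at all keeps its full 16KB and nothing is punched.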

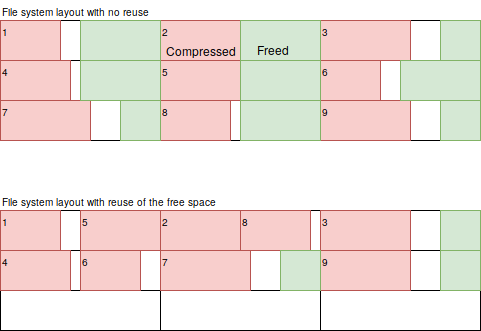

If there is no immediate reuse, a portion of the InnoDB file will look like the top file layout of figure 2. The pages (numbers) are still laid out sequentially, but there are holes in between. As the filesystem fills up, it will start to reuse the freed space, so eventually the file layout will look like the bottom one. Notice that in the bottom layout, the pages are no longer in sequential order. This has consequences: the notion of sequential disk access is gone. The most stunning example is a simple file copy on a spinning device. While copying a 1GB regular file may take only 30 seconds, copying a 1GB sparse file can take much longer, up to 30 minutes in the worst cases. The impact on physical backup tools, like Percona XtraBackup, is thus significant. Physical backups are normally much faster than logical ones (e.g., mysqldump), but with sparse files, this may no longer hold.
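Why a copy can go from seconds to tens of minutes is easier to see with a back-of-envelope model. The sketch below is my own simplification: it counts the contiguous runs (extents) met when reading a file in logical order and charges one seek per run. It assumes 120 random IOPS (a 7200 rpm disk) and a hypothetical 100MB/s sequential rate, and it ignores the write side of the copy.

```python
import random


def count_extents(physical_blocks: list[int]) -> int:
    """Count contiguous runs when a file's blocks are read in logical order.

    physical_blocks[i] is the physical block holding logical block i. Every
    time the next block is not physically adjacent, the disk must seek.
    """
    if not physical_blocks:
        return 0
    runs = 1
    for prev, cur in zip(physical_blocks, physical_blocks[1:]):
        if cur != prev + 1:
            runs += 1
    return runs


def copy_seconds(physical_blocks: list[int], block_size: int,
                 iops: float = 120.0, seq_mb_s: float = 100.0) -> float:
    """Rough read time: one seek per extent plus raw transfer time."""
    seeks = count_extents(physical_blocks)
    transfer = len(physical_blocks) * block_size / (seq_mb_s * 1e6)
    return seeks / iops + transfer


if __name__ == "__main__":
    n = (1 << 30) // 4096  # 1GB worth of 4KB blocks
    sequential = list(range(n))
    scattered = sequential[:]
    random.Random(42).shuffle(scattered)  # worst case: every block reused elsewhere
    print(f"sequential: {copy_seconds(sequential, 4096):.0f} s")
    print(f"scattered:  {copy_seconds(scattered, 4096):.0f} s")
```

In the fully scattered case, the seek term dominates and lands in the same order of magnitude as the worst case mentioned above.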

The use of sparse files also has consequences for the design of a MySQL database server. The added random operations increase the importance of using SSD/Flash based storage. Also, some settings must be considered from a different perspective:

There are likely to be other affected settings.

Just to refresh memories, I am using two datasets for the basic benchmarks. The first, Wikipedia, consists of about 1B rows of Wikipedia access logs. It is moderately compressible. The second dataset, o1543, comes from the defunct Percona Cloud Tools project. It has only 77M rows, but they are much wider, with 134 columns. The o1543 dataset is highly compressible.

On these two datasets, the following steps were executed:

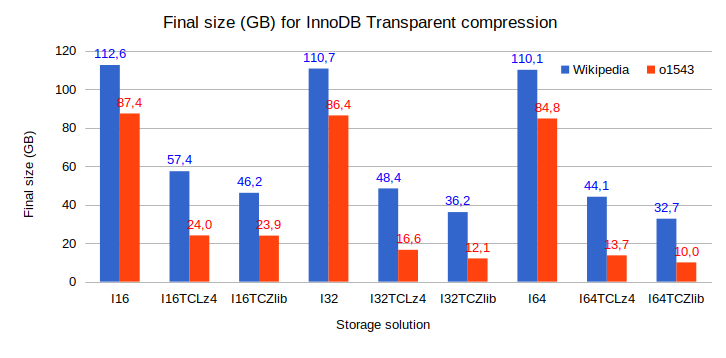

One of the most critical metrics with compression is the final dataset size, shown in figure 3. The ability to use larger InnoDB pages is a big deal with transparent page compression. Larger pages allow more repetitive patterns to be present within a page, which improves the compression ratio. Results for page sizes of 16KB, 32KB and 64KB are shown. The uncompressed results serve as references, while the transparent compression (TC) results, using Lz4 and Zlib, are the actual compressed datasets. First, we see that larger page sizes barely affect the size of the uncompressed dataset (I16, I32 and I64). Since the datasets were inserted in primary key order, the only possible impact is the filling factor of the pages. When InnoDB fills a page in PK order, even when innodb_fill_factor is set to 100, it always leaves 1KB free per 16KB. Since the amount of free space scales with the page size, the final size doesn’t change much.
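The reason the uncompressed size barely moves can be checked with a simplified model: if 1KB out of every 16KB is left free, the usable fraction of a page is 15/16 regardless of its size. The sketch below ignores page headers and record overhead, and the function names are hypothetical.

```python
def usable_bytes(page_size: int) -> int:
    """Bytes filled per page on PK-order inserts, modeling 1KB free per 16KB."""
    return page_size - page_size // 16


def on_disk_bytes(data_bytes: int, page_size: int) -> int:
    """Size of the pages needed to hold data_bytes of rows (no compression)."""
    pages = -(-data_bytes // usable_bytes(page_size))  # ceiling division
    return pages * page_size


if __name__ == "__main__":
    for size in (16384, 32768, 65536):
        print(size, on_disk_bytes(1 << 30, size))
```

For 1GiB of rows, the three page sizes end up within a single page of each other, which matches the nearly identical I16, I32 and I64 sizes.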

Larger page sizes have a significant impact on the compression ratio. The most drastic example is the o1543 dataset with Zlib compression. While the compression ratio with 16KB pages was already decent, at 3.65, it grows to an amazing 8.7 (I16/I64TCZlib) with 64KB pages. Larger page sizes also have a positive impact on the compression ratio of the Wikipedia dataset: the original compression ratio with Zlib and 16KB pages is 2.4, and it grows to 3.4 with 64KB pages. Datasets compressed with Lz4 behave similarly to the Zlib ones, but their compression ratios are slightly lower.

Overall, the I64TCZlib result for the Wikipedia dataset is the most compressed form we have so far. For the o1543 dataset, the MyISAMPacked size is still slightly smaller, but packed MyISAM tables are read-only.

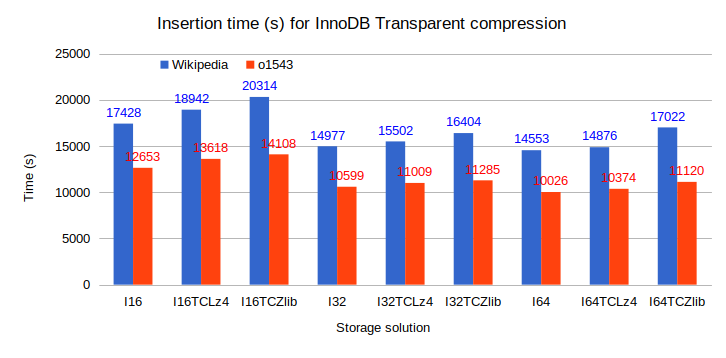

We normally expect compression to add overhead, but here the insertion speed improves with larger page sizes (figure 4). This is likely because we are using spinning disks: they have a high latency, so doing larger IO operations helps. The time overhead of transparent page compression hovers between 10 and 17%. That’s much less than the 60% overhead we observed for Barracuda table compression with the Wikipedia dataset in the previous post (InnoDBCmp8k/InnoDB). We can conclude that insert rates, when inserts are in PK order, are not much affected by transparent page compression. If you are mostly inserting data, it is a nice win.
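The benefit of larger IOs on a high-latency device follows from a simple model where each IO pays a fixed positioning latency plus a transfer time. The 8ms latency and 150MB/s transfer rate below are hypothetical spinning-disk figures, not measurements from these benchmarks.

```python
def effective_mb_s(io_bytes: int, latency_ms: float = 8.0,
                   transfer_mb_s: float = 150.0) -> float:
    """Throughput of back-to-back random IOs that each pay a fixed latency."""
    seconds_per_io = latency_ms / 1000 + io_bytes / (transfer_mb_s * 1e6)
    return io_bytes / seconds_per_io / 1e6


if __name__ == "__main__":
    for kb in (16, 32, 64):
        print(f"{kb}KB IOs: {effective_mb_s(kb * 1024):.1f} MB/s")
```

Under these assumptions, quadrupling the IO size from 16KB to 64KB nearly quadruples the effective throughput, because the latency is paid once per IO.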

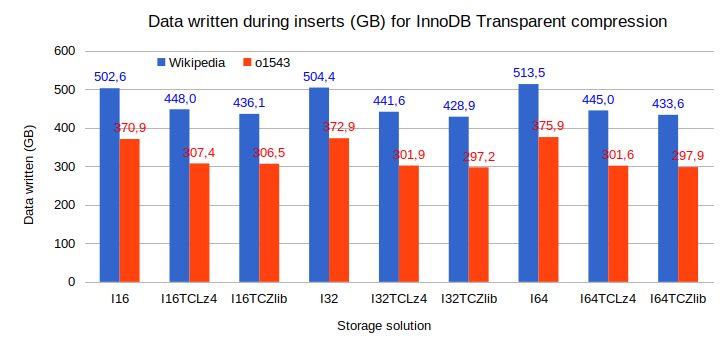

The amount of data written is not much affected by transparent compression or by larger page sizes (figure 5). That’s reasonable, as many of the writes are not compressed; only the final write to the tablespace is. The writes to the doublewrite buffer, the writes to the InnoDB log files and the tablespace pre-allocation are all uncompressed. The differences we see are essentially the same as the ones for the final sizes. Only the uncompressed results do not fit that view, but these are rather small deviations.

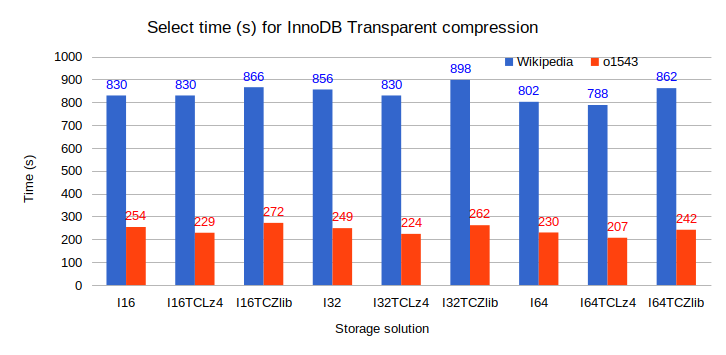

The range select benchmarks are really a means of testing the decompression overhead. As you can see in figure 6, the time variations are not large. For the Wikipedia dataset, the fastest range select is I64TCLz4, which completed in 788 seconds. That’s almost two minutes slower than the fastest result using InnoDB Barracuda compression (block_size=4KB). How can we explain such results? If the freed space is reused, transparent compression turns sequential operations into random ones, and the time should increase. Without space reuse, the storage layer will merge many small reads into a sequential one and then discard the holes. In effect, the disk reads the same amount of data, compressed or not. The only difference comes from decompression: Lz4 is extremely fast while Zlib is slower.
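The read-merging argument can be illustrated with a toy coalescing function. This is a simplified model of the behavior described above, not how the Linux block layer actually decides to merge requests; `max_gap` is a hypothetical threshold. When the holes between data extents are small enough to be read through, consecutive compressed pages turn into one large request, and the bytes read are close to those of the uncompressed pages.

```python
def merge_reads(extents: list[tuple[int, int]],
                max_gap: int) -> list[tuple[int, int]]:
    """Coalesce (offset, length) reads whose gap is at most max_gap bytes.

    A merged request also reads the hole bytes between the data extents;
    those bytes are simply discarded after the read.
    """
    merged: list[tuple[int, int]] = []  # (start, end) pairs
    for off, length in sorted(extents):
        end = off + length
        if merged and off - merged[-1][1] <= max_gap:
            start, prev_end = merged[-1]
            merged[-1] = (start, max(prev_end, end))
        else:
            merged.append((off, end))
    return [(start, end - start) for start, end in merged]


if __name__ == "__main__":
    page = 16 * 1024
    # Four consecutive 16KB pages, each compressed to 4KB at the start of its slot.
    data = [(i * page, 4096) for i in range(4)]
    print(merge_reads(data, max_gap=0))          # four separate 4KB reads
    print(merge_reads(data, max_gap=16 * 1024))  # [(0, 53248)]
```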

Going back to the Wikipedia dataset, it took exactly the same time, 830s, for I16, I16TCLz4 and I32TCLz4. That seems to indicate there was no space reuse. Using the XFS tool xfs_bmap on a TC-compressed file, I listed the blocks used. Here is the command I used and the first lines of the output (with blocks of 512 bytes):

```
root@LabPS57kvm_1:/tmp# xfs_bmap /var/lib/mysql/test/query_class_metrics.ibd | more
/var/lib/mysql/test/query_class_metrics.ibd:
        0: [0..31]: 1013168..1013199
        1: [32..39]: 1014544..1014551
        2: [40..63]: hole
        3: [64..71]: 1016976..1016983
        4: [72..95]: hole
        5: [96..103]: 1017008..1017015
        6: [104..127]: hole
        7: [128..135]: 1016880..1016887
        8: [136..159]: hole
        9: [160..167]: 1016912..1016919
        10: [168..191]: hole
...
```

We have the list:

So the layout indeed looks like the no-reuse case (top layout) of figure 2. When InnoDB extends the tablespace, it of course proceeds by entire pages. The filesystem tries, as much as possible, to allocate contiguous blocks. Initially, the tablespace grows one page at a time, but it soon grows by extents of 64 pages. Space reuse will start only when there are no more contiguous areas large enough to satisfy the allocation requests. Until then, the filesystem still performs mostly sequential operations. The performance characteristics will thus change once the freed blocks start to be reused. On a smaller server, I continued to insert data well past the point where the filesystem would have been full without the holes. The insertion rate fell by about half, but the read performance appeared unchanged.
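Reading xfs_bmap output page by page is tedious, so here is a small parser as a sketch. It assumes 16KB InnoDB pages (the default `innodb_page_size`) and the 512-byte units shown in the output; the function names are hypothetical. On the excerpt above, it shows page 0 fully allocated while each following page keeps only 4KB of data followed by a 12KB hole.

```python
import re

SECTOR = 512  # xfs_bmap reports ranges in 512-byte blocks

# Matches lines like "3: [64..71]: 1016976..1016983" or "2: [40..63]: hole".
EXTENT_RE = re.compile(r"^\s*\d+:\s*\[(\d+)\.\.(\d+)\]:\s*(hole|(\d+)\.\.(\d+))")

# First lines of the xfs_bmap output shown above.
SAMPLE = """\
/var/lib/mysql/test/query_class_metrics.ibd:
        0: [0..31]: 1013168..1013199
        1: [32..39]: 1014544..1014551
        2: [40..63]: hole
        3: [64..71]: 1016976..1016983
        4: [72..95]: hole
        5: [96..103]: 1017008..1017015
        6: [104..127]: hole
        7: [128..135]: 1016880..1016887
        8: [136..159]: hole
        9: [160..167]: 1016912..1016919
        10: [168..191]: hole
"""


def parse_bmap(text):
    """Return (logical_start, logical_end, physical_start or None) tuples.

    Offsets are in 512-byte sectors; None marks a hole. Lines that are not
    extents (the file name header, "...") are ignored.
    """
    extents = []
    for line in text.splitlines():
        m = EXTENT_RE.match(line)
        if m:
            phys = None if m.group(3) == "hole" else int(m.group(4))
            extents.append((int(m.group(1)), int(m.group(2)), phys))
    return extents


def allocated_per_page(extents, page_size=16384):
    """Bytes actually allocated on disk for each logical InnoDB page."""
    if not extents:
        return []
    spp = page_size // SECTOR  # sectors per page
    n_pages = max(end for _, end, _ in extents) // spp + 1
    allocated = [0] * n_pages
    for start, end, phys in extents:
        if phys is None:
            continue  # holes consume no disk space
        s = start
        while s <= end:  # split the extent at page boundaries
            page = s // spp
            chunk_end = min(end, (page + 1) * spp - 1)
            allocated[page] += (chunk_end - s + 1) * SECTOR
            s = chunk_end + 1
    return allocated


if __name__ == "__main__":
    print(allocated_per_page(parse_bmap(SAMPLE)))
    # [16384, 4096, 4096, 4096, 4096, 4096]
```

Feeding it the full output of `xfs_bmap` on a tablespace gives a quick view of how well each page compressed.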

The times of the range selects for the o1543 dataset are more predictable. In all cases, larger pages increase performance. That makes sense: InnoDB needs fewer IOPS. With Lz4, InnoDB spends less time decompressing the pages than it would spend reading the complete uncompressed pages. The opposite is true for Zlib. The Lz4 results are the fastest, Zlib the slowest, and the uncompressed results are in between.

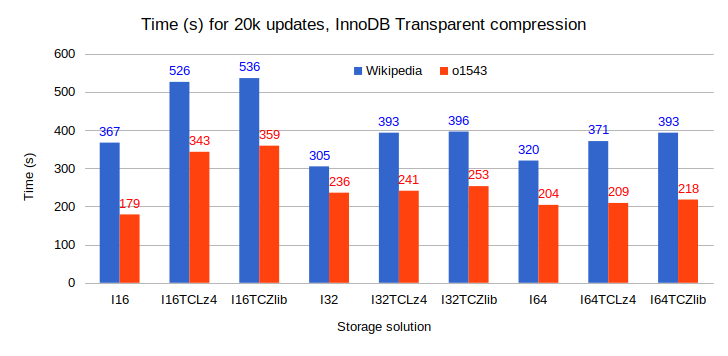

Intuitively, I was expecting larger pages to slow down the updates. Similarly, I was expecting Lz4 compressed pages to be slower than uncompressed pages, but faster than the ones compressed with Zlib. Figure 7 above shows the times to perform approximately 20k single row updates for both datasets. We performed the updates to the Wikipedia dataset in small separate transactions, while we used a single large update statement for the o1543 dataset.

While the compression algorithm assumption appears to hold, the one about page sizes is plainly wrong. The storage consists of spinning disks, so the latency of random IO dominates, and the important factor becomes the number of levels in the b-tree of the table. In the root node of the b-tree and in all intermediate nodes, bigger pages mean more pointers to the next level. More pointers cause a bigger fan-out (the ratio of nodes between levels) and thus fewer levels. Bigger pages also mean fewer leaf pages, which in turn require fewer upper-level node pages.

Let’s dive a bit more into this topic. The Wikipedia dataset table has an int unsigned primary key. InnoDB always leaves 1KB free in a page and, along with the primary key, each entry in a node (non-leaf) page has an extra 9 bytes for the pointer to the next-level page. Let’s do some math:

Of course, our calculations are an approximation. With a 16KB page size, there are three levels above the leaves, for a total of 6253 pages and a size of 98MB. It thus takes 6253 IOPS to warm up the buffer pool with all the node pages. A SATA 7200 rpm disk delivers at best 120 IOPS (one per rotation), so that’s about 52 seconds. Now, let’s redo the same calculations with a page size of 32KB:

Using 32KB pages, we have one level less and only 1487 node pages, for a combined size of 47MB. To warm up the buffer pool, we have to load at least the node pages, an operation requiring only about a quarter of the IOPS needed with 16KB pages. That’s where most of the performance gains come from: the reduced number of IOPS more than compensates for the longer time to read a larger page. Again, in this setup, we used spinning disks.
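The level computation can be sketched in Python using the parameters from the text: a 4-byte key plus a 9-byte pointer per node entry, 1KB per 16KB left free, and 120 IOPS. The exact leaf page counts behind 6253 and 1487 are not given, so the example uses a hypothetical 100GB of leaf pages. It reproduces the shape of the result (three node levels with 16KB pages, two with 32KB, and roughly a quarter of the node pages), not the exact counts.

```python
def node_levels(leaf_pages: int, page_size: int,
                key_bytes: int = 4, ptr_bytes: int = 9) -> tuple[int, list[int]]:
    """Return (fan-out, pages per non-leaf level from the bottom up).

    Models a 4-byte int unsigned key plus a 9-byte child pointer per node
    entry, with 1KB per 16KB of each page left free.
    """
    usable = page_size - page_size // 16
    fanout = usable // (key_bytes + ptr_bytes)
    levels = []
    pages = leaf_pages
    while pages > 1:
        pages = -(-pages // fanout)  # ceiling division: pages one level up
        levels.append(pages)
    return fanout, levels


def warmup_seconds(node_pages: int, iops: float = 120.0) -> float:
    """Time to read every node page with one random IO each."""
    return node_pages / iops


if __name__ == "__main__":
    leaf_bytes = 100 * 2**30  # hypothetical 100GB of leaf pages
    for page_size in (16384, 32768):
        fanout, levels = node_levels(leaf_bytes // page_size, page_size)
        total = sum(levels)
        print(page_size, fanout, levels, total, round(warmup_seconds(total), 1))
```

With 16KB pages, each node holds about 1181 entries; doubling the page size doubles the fan-out and halves the number of leaves, which is enough to remove a whole level here.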

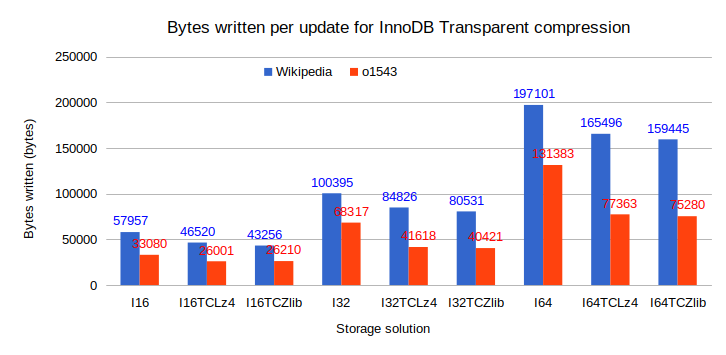

Now, the last set of results concerns the number of bytes written per update statement (figure 8). There is a big price to pay for larger InnoDB pages: the write amplification is huge. The number of bytes written scales roughly with the page size. The worst case is the I64 result, with about 192KB written for a single row update of an integer field (Wikipedia). If your database workload includes a large number of small single row updates, you should avoid 64KB InnoDB pages on expensive flash devices, as you’ll wear them out rapidly.
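To put “wear them out rapidly” in numbers, here is a back-of-envelope sketch. The 192KB per update comes from figure 8; the 1PB endurance rating (TBW) and the 1000 updates per second are hypothetical values chosen only for illustration.

```python
def write_amplification(bytes_written: int, bytes_changed: int) -> float:
    """Ratio of bytes physically written to bytes logically modified."""
    return bytes_written / bytes_changed


def endurance_days(tbw_bytes: float, updates_per_s: float,
                   bytes_per_update: float) -> float:
    """Days until a drive's rated write endurance (TBW) is consumed."""
    return tbw_bytes / (updates_per_s * bytes_per_update * 86400)


if __name__ == "__main__":
    # ~192KB written to change a 4-byte integer with 64KB pages.
    print(write_amplification(192 * 1024, 4))  # 49152.0
    # Hypothetical drive rated for 1PB written, 1000 single-row updates/s.
    print(f"{endurance_days(1e15, 1000, 192 * 1024):.0f} days")
```

Under these assumptions, the drive’s rated endurance would be consumed in about two months.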

When is it a good idea to use transparent compression? When should you use a larger InnoDB page size? One valid use case is a database storing large quantities of operational metrics, like the o1543 dataset. The compression ratio will be fantastic and the performance penalty limited, at least until the filesystem starts reusing the holes.

If you collect data from a large number of devices and are struggling with TBs of highly compressible data, transparent compression might be an interesting option. The only issue I see, but it is a major one, is how to back up large sparse files. InnoDB transparent page compression with punch holes is an interesting solution but, unless I am missing something, it has a somewhat limited scope. There are other compression options with similar compression ratios and fewer drawbacks.
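A backup or copy tool must at least avoid filling the holes, otherwise a restored tablespace grows back to its uncompressed size. The sketch below shows the generic technique, similar in spirit to `cp --sparse=always`: it walks the data regions with `lseek(SEEK_DATA/SEEK_HOLE)` and only writes those. It is not how Percona XtraBackup works, and the function names are hypothetical. It also does nothing about the random IO problem, since the data regions are still read wherever the filesystem placed them.

```python
import errno
import os
import tempfile


def _write_all(fd: int, data: bytes) -> None:
    view = memoryview(data)
    while view:
        written = os.write(fd, view)
        view = view[written:]


def sparse_copy(src: str, dst: str, chunk: int = 1 << 20) -> None:
    """Copy src to dst, writing only data regions so holes stay holes.

    Filesystems without hole reporting present the whole file as data, in
    which case this degrades to a plain copy.
    """
    size = os.stat(src).st_size
    fin = os.open(src, os.O_RDONLY)
    fout = os.open(dst, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o644)
    try:
        offset = 0
        while offset < size:
            try:
                data_start = os.lseek(fin, offset, os.SEEK_DATA)
            except OSError as e:
                if e.errno == errno.ENXIO:  # only a hole remains
                    break
                raise
            data_end = os.lseek(fin, data_start, os.SEEK_HOLE)
            os.lseek(fin, data_start, os.SEEK_SET)
            os.lseek(fout, data_start, os.SEEK_SET)  # skipping ahead leaves a hole
            remaining = data_end - data_start
            while remaining > 0:
                buf = os.read(fin, min(chunk, remaining))
                if not buf:
                    break
                _write_all(fout, buf)
                remaining -= len(buf)
            offset = data_end
        os.ftruncate(fout, size)  # restore the apparent size (trailing hole)
    finally:
        os.close(fin)
        os.close(fout)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        src = os.path.join(tmp, "src.ibd")
        dst = os.path.join(tmp, "copy.ibd")
        with open(src, "wb") as f:
            f.write(b"page0" * 100)
            f.flush()
            f.truncate(8 * 1024 * 1024)  # 8MB apparent size, mostly hole
        sparse_copy(src, dst)
        for p in (src, dst):
            st = os.stat(p)
            print(os.path.basename(p), st.st_size, st.st_blocks * 512)
```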

In this post, we explored a feature available since MySQL 5.7: InnoDB transparent compression with punch holes. Performance-wise, it is an interesting solution that offers excellent compression ratios, especially when larger page sizes are used. However, the transparent compression with punch holes technique suffers from its foundation: sparse files. Backing up very large sparse files is a slow and IO-intensive process. Instead of performing large sequential IO operations, the backup process will require millions of small random IO operations.

So far, we have discussed the traditional approaches to compression in MySQL (previous post) and InnoDB transparent page compression. The next post of the series on data compression with MySQL will introduce the ZFS filesystem. ZFS externalizes the compression to the filesystem in a way that is pretty similar to InnoDB transparent page compression, but the ZFS b-tree file structure removes the inconvenience of sparse files.

Stay tuned, more results are coming.

Transparent page compression was designed to be used with SSDs. The MariaDB version was even designed with FusionIO. Why do you insist on testing it on HDDs, especially when SSDs/NVMe are the norm?

Very large datasets, where compression is required, are still stored on HDDs more often than you would think, but I do agree SSDs/NVMe are more the norm. The main issue was the environment I was using for these benchmarks, and HDDs highlight the storage impacts more. I do mention you should run TC on SSDs.

As Vadim once said here:

https://www.percona.com/blog/2017/11/20/innodb-page-compression/

“Having considered all this, it is hard to recommend that you use InnoDB page compression for serious production. Well, unless you power your database servers with NVMe storage.”

I agree with that. That’s why InnoDB Barracuda compression, RocksDB and ZFS are better solutions. I am not even sure xtrabackup would take care of the punched holes! If you restore, the space would increase to its original uncompressed size!

https://jira.mariadb.org/browse/MDEV-13023

@Yves Trudeau, I’m going to use Percona Server 8 with some kind of compression and am currently testing transparent page compression, which doesn’t look bad. Is it really still the case, as in Arnaud’s comment, that you shouldn’t use it in production? I’m using HDDs because of the large amount of data.

Or is it better to think about ZFS? I was looking for your post on it that was mentioned here. 😉

Thank you for this great post.

The main issue with transparent page compression is the filesystem. If you can experiment with a slave for some time and understand and feel the pain of sparse files, that would be best. Personally, I do like ZFS a lot, but it requires a bit more tuning. With HDDs, a ZFS L2ARC could be very interesting performance-wise for a rather low cost.

We used TokuDB in the past, which isn’t an option for our new installation. It didn’t feel very stable.

InnoDB transparent page compression seems to be a very straightforward thing. Yes, sparse files are a bit “weird”. But actually, this article confirmed it’s the right approach. At least I hope so. 😉

Setting aside copying sparse files and backup solutions, and talking just about the DB itself, is transparent page compression such a bad thing (with HDDs and XFS)?

I’m wondering whether one should even consider the compressed row format instead, which I believe is what you covered in your other post about compression.

I haven’t tested transparent page compression on HDD for more than a few hours. For sure, you should disable all the assumptions about spinners like innodb_read_ahead and innodb_flush_neighbors. Other than that, I don’t see any reason for problems. For sure it pushes the filesystems into less known territories…

Hey Yves, I did some tests with zfs, too. No special configuration. zfs “RAID 10” with lz4 vs software raid 10 with transparent page compression. Especially with empty cache, the zfs installation is way faster. And even with cached data it usually wins. I like the idea, that the filesystem handles the compression instead of the database. Thanks for your input.