Parallel replication has been around for a few years now but is still not that commonly used. I had a customer where the master had a very large write workload. The slave could not keep up so I recommended to use parallel slave threads. But how can I measure if it really helps and is working?

At my customer the slave_parallel_workers was 0. But how big should I increase it, maybe to 1? Maybe to 10? There is a blog post about how can we see how many threads are actually used, which is a great help.

We changed the following variables on the slave:

|

1 2 3 |

slave_parallel_type = LOGICAL_CLOCK; slave_parallel_workers = 40; slave_preserve_commit_order = ON; |

40 threads sounds a lot, right? Of course, this is workload specific: if the transactions are independent it might be useful.

Let’s have a look, how many threads are working:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 |

mysql> SELECT performance_schema.events_transactions_summary_by_thread_by_event_name.THREAD_ID AS THREAD_ID , performance_schema.events_transactions_summary_by_thread_by_event_name.COUNT_STAR AS COUNT_STAR FROM performance_schema.events_transactions_summary_by_thread_by_event_name WHERE performance_schema.events_transactions_summary_by_thread_by_event_name.THREAD_ID IN (SELECT performance_schema.replication_applier_status_by_worker.THREAD_ID FROM performance_schema.replication_applier_status_by_worker); +-----------+------------+ | THREAD_ID | COUNT_STAR | +-----------+------------+ | 25882 | 442481 | | 25883 | 433200 | | 25884 | 426460 | | 25885 | 419772 | | 25886 | 413751 | | 25887 | 407511 | | 25888 | 401592 | | 25889 | 395169 | | 25890 | 388861 | | 25891 | 380657 | | 25892 | 371923 | | 25893 | 362482 | | 25894 | 351601 | | 25895 | 339282 | | 25896 | 325148 | | 25897 | 310051 | | 25898 | 292187 | | 25899 | 272990 | | 25900 | 252843 | | 25901 | 232424 | +-----------+------------+ |

You can see all the threads are working. Which is great.

But did this really speed up the replication? Could the slave write more in the same period of time?

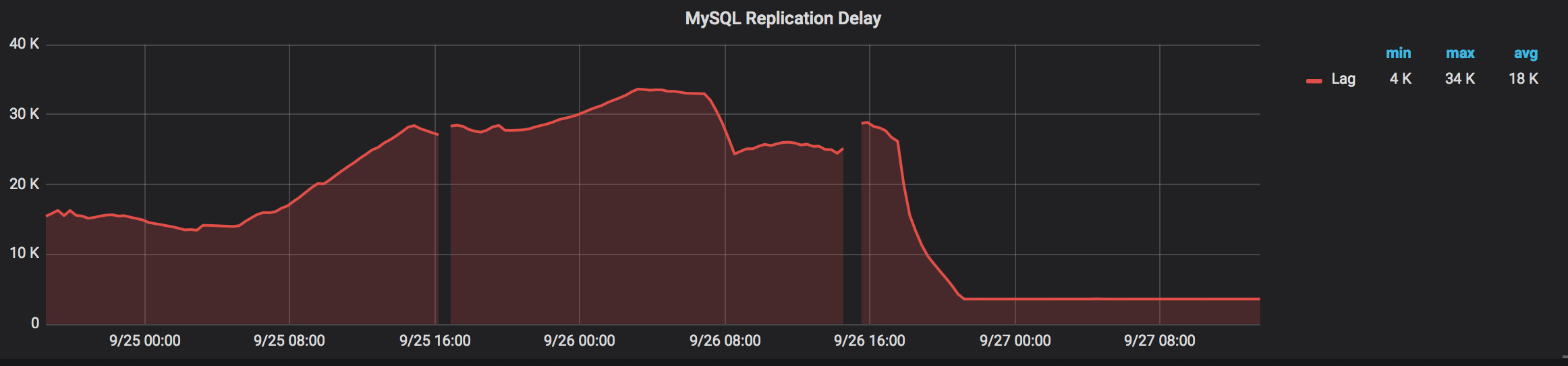

Let’s see the replication lag:

As we can see, lag goes down quite quickly. Is this because the increased thread numbers? Or because the job which generated the many inserts finished and there are no more writes coming? (The replication delay did not go to 0 because this slave is deliberately delayed by an hour.)

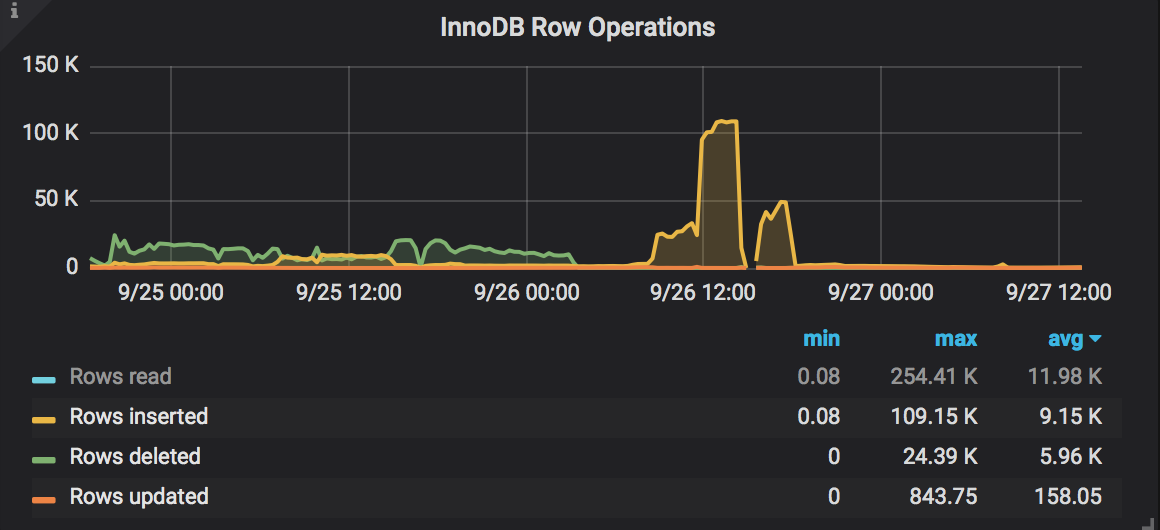

Luckily in PMM we have other graphs as well that can help us. Like this one showing InnoDB row operations:

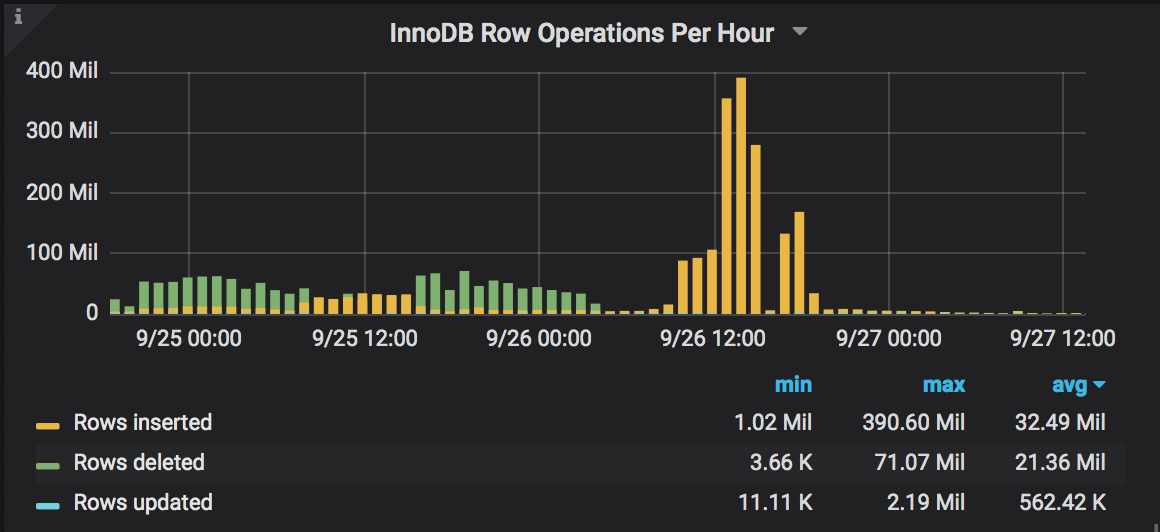

That looks promising: the slave now inserts many more rows than usual. But how much rows were inserted, actually? Let’s create a new graph to see how many rows were inserted per hour. In PMM we already have all this information, we just have to create a new graph using the following query:

|

1 |

increase(mysql_global_status_innodb_row_ops_total{instance="$host",operation!="read"}[1h]) |

We can see a huge jump in the number of inserted rows per hour, it went from ~50Mil to 200-400Mil per hours. We can say that increasing the number of slave_parallel_workers really helped.

In this case, parallel replication was extremely useful and we could confirm that using PMM and Performance Schema. If you tune the slave_parallel_workers check the graphs. You can show the impact to your boss. 🙂

Hi Tibor,

Setting parallel workers is usually not sufficient because they can only parallelize across different db’s. If you want to reduce lag for load on a single db you will need to adjust group_commit_sync_delay.

Hi dharshanr,

That is not completely true. As in this blog post you can see as well I was changing the slave_parallel_type to LOGICAL_CLOCK, which means:

“Transactions that are part of the same binary log group commit on a master are applied in parallel on a slave. The dependencies between transactions are tracked based on their timestamps to provide additional parallelization where possible. When this value is set, the binlog_transaction_dependency_tracking system variable can be used on the master to specify that write sets are used for parallelization in place of timestamps, if a write set is available for the transaction and gives improved results compared to timestamps.”

Source: https://dev.mysql.com/doc/refman/8.0/en/replication-options-slave.html#sysvar_slave_parallel_type

So this is not based on databases which makes it very useful and if you even writing the same database or same table it might can be applied in parallel.

group_commit_sync_delay is also a useful parameter but you have to be careful because it can slow down commits on the master.

Thanks.

Hi, and what about MariaDB?

Hi, It looks like MariaDB uses and older version of Performance Schema which does not have the

performance_schema.events_transactions_summary_by_thread_by_event_nametable, so this will not work on MariaDB. There is a table calledevents_statements_summary_by_thread_by_event_namewhich might can be used but that would require some testing.