In this blog post, we’ll look at the performance of SST data transfer using encryption.

In my previous post, we reviewed SST data transfer in an unsecured environment. Now let’s take a closer look at a setup with encrypted network connections between the donor and joiner nodes.

The base setup is the same as last time:

- Database server: Percona XtraDB Cluster 5.7 on the donor node
- Database: sysbench database, 100 tables with 4M rows each (~122GB total)
- Network: donor and joiner hosts connected by a dedicated 10Gbit LAN
- Hardware: donor and joiner hosts with 28 cores + HT, 256GB RAM, Samsung SSD 850, Ubuntu 16.04

The encryption-specific details of our testing:

- Cryptography libraries: openssl-1.0.2, openssl-1.1.0, libgcrypt-1.6.5 (for xbstream encryption)
- CPU hardware acceleration for AES (AES-NI): enabled/disabled
- Cipher suites: aes (default), aes128, aes256, chacha20 (openssl-1.1.0)

Several notes regarding the above aspects:

- Cryptography libraries. Almost every current Linux distribution ships openssl-1.0.2, the previous stable version of the OpenSSL library. The latest stable version (1.1.0) has various performance and scalability fixes, and also supports new ciphers that may notably improve throughput. However, it’s problematic to upgrade from 1.0.2 to 1.1.0, or even to find openssl-1.1.0 packages for existing distributions, because replacing OpenSSL triggers an update of a significant number of dependent packages. So to use openssl-1.1.0, you will most likely need to build it from source. The same applies to socat: it takes some effort to build socat against openssl-1.1.0.

- AES-NI. AES-NI (Advanced Encryption Standard New Instructions) is an extension to the x86 instruction set for Intel and AMD CPUs. Its purpose is to speed up encryption and decryption with the Advanced Encryption Standard (AES), for example with the AES128/AES256 ciphers. If your CPU supports AES-NI, there should be an option in the BIOS to enable or disable it. On Linux, you can check /proc/cpuinfo for an “aes” flag. If it’s present, AES-NI is available and exposed to the OS. You can also check what acceleration ratio to expect from it:

      # AES_NI disabled with OPENSSL_ia32cap
      OPENSSL_ia32cap="~0x200000200000000" openssl speed -elapsed -evp aes-128-gcm
      ...
      The 'numbers' are in 1000s of bytes per second processed.
      type             16 bytes     64 bytes    256 bytes   1024 bytes   8192 bytes
      aes-128-gcm      57535.13k    65924.18k   164094.81k   175759.36k   178757.63k

      # AES_NI enabled
      openssl speed -elapsed -evp aes-128-gcm
      The 'numbers' are in 1000s of bytes per second processed.
      type             16 bytes     64 bytes    256 bytes   1024 bytes   8192 bytes
      aes-128-gcm     254276.67k   620945.00k   826301.78k   906044.07k   923740.84k

We are interested in the very last column (8192-byte blocks): about 178MB/s without AES-NI vs. about 923MB/s with AES-NI, roughly a 5x difference.
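As a minimal sketch of the two checks described above, the snippet below looks for the “aes” flag in /proc/cpuinfo and computes the ratio between the two 8192-byte throughput figures. The function names `has_aes_flag` and `speedup` are made up for this illustration and are not part of any tool used in the tests.

```shell
#!/bin/sh
# Succeeds if the given cpuinfo file lists "aes" as a whole-word CPU flag.
# Defaults to /proc/cpuinfo (x86 Linux); the helper name is hypothetical.
has_aes_flag() {
    cpuinfo="${1:-/proc/cpuinfo}"
    grep -E '^flags' "$cpuinfo" 2>/dev/null | grep -qw aes
}

# Prints fast/slow rounded to one decimal place (both in the same units).
speedup() {
    awk -v slow="$1" -v fast="$2" 'BEGIN { printf "%.1f\n", fast / slow }'
}

if has_aes_flag; then
    echo "AES-NI is exposed to the OS"
else
    echo "no aes flag found (AES-NI disabled, or not an x86 Linux host)"
fi

# 8192-byte column of the two openssl speed runs quoted in this post.
speedup 178757.63 923740.84
```

The ratio only describes single-threaded `openssl speed` throughput, so treat it as an upper bound on what the cipher itself can gain, not as a prediction of SST time.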

- Ciphers. In our testing for network encryption with socat + openssl 1.0.2/1.1.0, we used the following cipher suites:

  - DEFAULT: the suite used if you don’t specify a cipher or cipher string for the OpenSSL connection
  - AES128: suites with aes128 ciphers only
  - AES256: suites with aes256 ciphers only

  Additionally, for openssl-1.1.0, there is an extra cipher suite:

  - CHACHA20: cipher suites using the ChaCha20 algorithm

  In the case of xtrabackup, where internal encryption is based on libgcrypt, we used the AES128/AES256 ciphers from that library.
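To see what a suite name actually expands to on a given host, `openssl ciphers -v` can list the matching ciphers. The sketch below also shows how a suite name could be turned into the `,cipher=...` fragment used with socat. The helper names `sockopt_for_suite` and `list_suite` are assumptions made for this example.

```shell
#!/bin/sh
# Prints the socat option fragment for a suite name.
# DEFAULT (or empty) means "do not pass a cipher string at all".
sockopt_for_suite() {
    case "$1" in
        ""|DEFAULT) printf '' ;;
        *)          printf ',cipher=%s' "$1" ;;
    esac
}

# Lists the ciphers that the local OpenSSL build matches for a cipher string.
list_suite() {
    if command -v openssl >/dev/null 2>&1; then
        openssl ciphers -v "$1"
    else
        echo "openssl not found" >&2
        return 1
    fi
}

for suite in DEFAULT AES128 AES256 CHACHA20; do
    printf '%-9s sockopt="%s"\n' "$suite" "$(sockopt_for_suite "$suite")"
done

# Show the first few ciphers matched by AES128 on this host.
list_suite AES128 | head -n 5
```

The list printed by `openssl ciphers` depends on the OpenSSL version installed, so the same suite name may select different ciphers on different hosts.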

- SST methods. Streaming database files from the donor to the joiner with the rsync protocol over an OpenSSL-encrypted connection:

      (donor) rsync | socat+ssl     socat+ssl | rsync (daemon mode) (joiner)

The current approach of wsrep_sst_rsync.sh doesn’t allow you to use the rsync SST method with SSL. However, there is a project that tries to address the lack of SSL support for the rsync method. The idea is to create a secure connection with socat, and then use that connection as a tunnel for rsync between the joiner and donor hosts. In my testing, I used a similar approach.

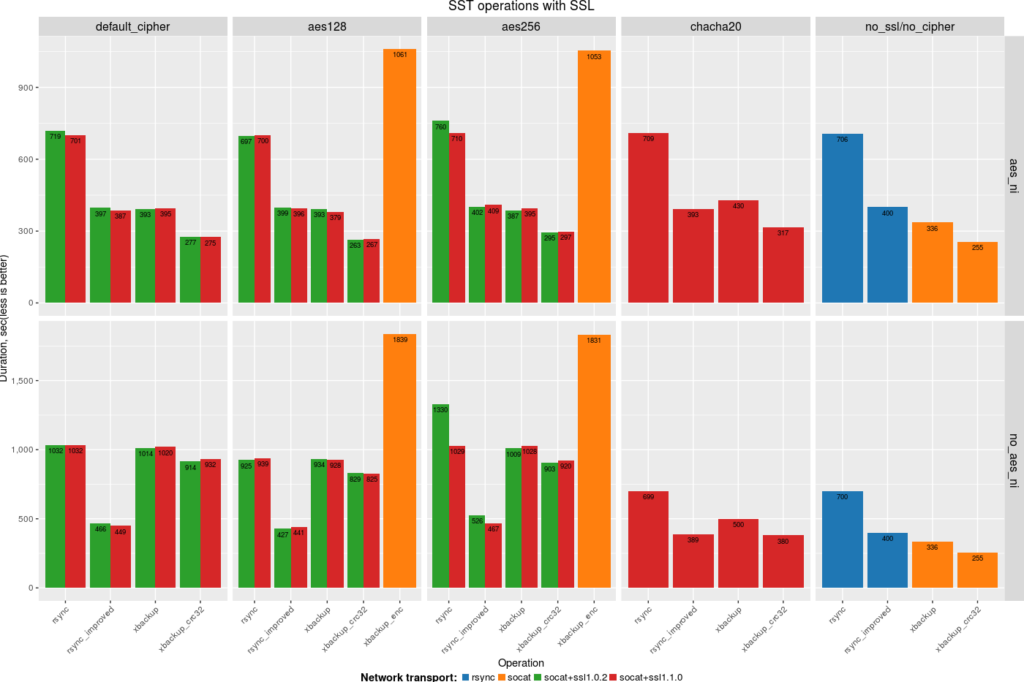

Also note that the chart below has results for two variants of rsync: “rsync” (the current approach) and “rsync_improved” (the improved one). I explained the difference between them in my previous post.
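The tunnel idea can be sketched as a dry run that only prints the commands each side would start. This is an illustration and not the script used for the measurements: the host name, ports, certificate paths, rsync module name and function names are all hypothetical, while the socat address types and options (OPENSSL-LISTEN, OPENSSL, TCP-LISTEN, cert, cafile, cipher, fork) are standard socat features.

```shell
#!/bin/sh
# All values below are placeholders for illustration only.
JOINER_HOST="${JOINER_HOST:-joiner.example}"
TUNNEL_PORT="${TUNNEL_PORT:-4444}"    # encrypted port on the joiner
LOCAL_PORT="${LOCAL_PORT:-8873}"      # plain local port on the donor
RSYNC_PORT="${RSYNC_PORT:-873}"       # rsync daemon port on the joiner
CIPHER="${CIPHER:-AES128}"
CERT="${CERT:-/etc/ssl/sst/node.pem}" # certificate + key in one file
CA="${CA:-/etc/ssl/sst/ca.pem}"
DATADIR="${DATADIR:-/var/lib/mysql}"
MODULE="${MODULE:-sst}"               # rsync daemon module name

# Joiner: terminate SSL and hand the plain stream to the local rsync daemon.
joiner_tunnel_cmd() {
    echo "socat OPENSSL-LISTEN:${TUNNEL_PORT},reuseaddr,fork,cert=${CERT},cafile=${CA},cipher=${CIPHER} TCP:127.0.0.1:${RSYNC_PORT}"
}

# Donor: accept plain local connections and forward them over SSL.
donor_tunnel_cmd() {
    echo "socat TCP-LISTEN:${LOCAL_PORT},bind=127.0.0.1,reuseaddr,fork OPENSSL:${JOINER_HOST}:${TUNNEL_PORT},cert=${CERT},cafile=${CA},cipher=${CIPHER}"
}

# Donor: rsync talks to the local end of the tunnel, not to the joiner.
donor_rsync_cmd() {
    echo "rsync -a ${DATADIR}/ rsync://127.0.0.1:${LOCAL_PORT}/${MODULE}/"
}

echo "joiner> $(joiner_tunnel_cmd)"
echo "donor>  $(donor_tunnel_cmd)"
echo "donor>  $(donor_rsync_cmd)"
```

With the `fork` option socat handles every incoming connection in a child process, so several rsync clients can share one tunnel endpoint.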

- Backing up data on the donor side and streaming it to the joiner in xbstream format over an OpenSSL-encrypted connection:

      (donor) xtrabackup | socat+ssl     socat+ssl | xbstream (joiner)

In my testing for streaming over encrypted connections, I used the --parallel=4 option for xtrabackup. In my previous post, I showed that this is an important factor in getting the best time. There is also a way to pass the name of the cipher that socat will use for the OpenSSL connection in the wsrep_sst_xtrabackup-v2.sh script, with the sockopt option. For instance:

    [sst]
    inno-backup-opts="--parallel=4"
    sockopt=",cipher=AES128"

- Backing up data on the donor side, encrypting it internally (with libgcrypt), streaming it to the joiner in xbstream format, and afterwards decrypting the files on the joiner:

      (donor) xtrabackup | socat     socat | xbstream ; xtrabackup decrypt (joiner)

The xtrabackup tool has a feature to encrypt data while performing a backup. That encryption is based on the libgcrypt library, and it’s possible to use the AES128 or AES256 ciphers. For encryption, it’s necessary to generate a key and then provide it to xtrabackup so that it can encrypt on the fly. You can also specify the number of threads that encrypt data, along with the chunk size, to tune the encryption process.

The current version of xtrabackup supports an efficient way to read, compress and encrypt data in parallel, and then write or stream it. On the receiving side, however, we can’t decompress or decrypt the stream on the fly. The stream must first be received and written to disk with the xbstream tool, and only after that can you use xtrabackup with the --decrypt/--decompress modes to unpack the data. Having to save the stream to disk for later processing, instead of processing it on the fly, has a notable impact on the transfer time from the donor to the joiner. We plan to fix that issue, so that encryption + compression + streaming of data with xtrabackup no longer requires writing the stream to disk on the receiver side.

For my testing, in the case of xtrabackup with internal encryption, I didn’t use SSL encryption for socat.
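A rough sketch of this method is shown below as a dry run: it generates a key of the right length and prints the donor and joiner commands instead of running them. The xtrabackup options used here (`--encrypt`, `--encrypt-key-file`, `--encrypt-threads`, `--encrypt-chunk-size`, `--decrypt`, `--parallel`) are documented Percona XtraBackup options, but the paths, port, host name, thread counts and function names are assumptions for this example and not the exact values from my tests.

```shell
#!/bin/sh
# Placeholder values for illustration only.
JOINER_HOST="${JOINER_HOST:-joiner.example}"
PORT="${PORT:-4444}"
KEYFILE="${KEYFILE:-/etc/mysql/sst.key}"
STAGE_DIR="${STAGE_DIR:-/var/lib/mysql/.sst}"

# 24 random bytes encode to a 32-character base64 string (no padding).
gen_key() {
    if command -v openssl >/dev/null 2>&1; then
        openssl rand -base64 24
    else
        head -c 24 /dev/urandom | base64
    fi
}

# Donor: back up, encrypt in several threads, and stream without SSL.
donor_cmd() {
    echo "xtrabackup --backup --stream=xbstream --parallel=4 --encrypt=AES256 --encrypt-key-file=${KEYFILE} --encrypt-threads=4 --encrypt-chunk-size=65536 --target-dir=/tmp | socat -u stdio TCP:${JOINER_HOST}:${PORT}"
}

# Joiner, step 1: receive the stream and write the encrypted files to disk.
joiner_receive_cmd() {
    echo "socat -u TCP-LISTEN:${PORT},reuseaddr stdio | xbstream -x -C ${STAGE_DIR}"
}

# Joiner, step 2: read the files back, decrypt them, and write them again.
joiner_decrypt_cmd() {
    echo "xtrabackup --decrypt=AES256 --encrypt-key-file=${KEYFILE} --parallel=4 --target-dir=${STAGE_DIR}"
}

echo "donor>  $(donor_cmd)"
echo "joiner> $(joiner_receive_cmd)"
echo "joiner> $(joiner_decrypt_cmd)"
```

The same key has to be present on both hosts, for example by writing the output of `gen_key` to the key file without a trailing newline. The second joiner step is the extra pass over the data that the stream-to-disk limitation forces.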

Results:

Observations:

- Transferring data with rsync is very inefficient, and the improved version is 2-2.5 times faster. Also note that in the “no-aes-ni” case, the rsync_improved method has the best time for the default/aes128/aes256 ciphers. The reason is that it runs both the data transfer (we spawn an rsync process for each file) and the encryption/decryption (socat forks an extra process for each stream) in parallel. This approach compensates for the absence of hardware acceleration by using several CPU cores. In all other cases, we use only one CPU for streaming data and for encryption/decryption.

- xtrabackup (with hardware-optimized crc32) shows the best time in all cases, except for the default/aes128/aes256 ciphers in “no-aes-ni” mode (where rsync_improved showed the best time). However, I would like to remind you that SST with rsync is a blocking operation, and during the data transfer the donor node becomes READ-ONLY. xtrabackup, on the other hand, uses backup locks and allows any operations on the donor node during SST.

- On boxes without hardware acceleration (no-aes-ni mode), the chacha20 cipher allows you to perform the data transfer 2-3 times faster. It’s a very good replacement for the “aes” ciphers on such boxes. However, the problem with that cipher is that it is available only in openssl-1.1.0. To use it, you will need a custom build of OpenSSL and socat on many distros.

- Regarding xtrabackup with internal encryption (xtrabackup_enc): reading, encrypting and streaming data is quite fast, especially with the latest libgcrypt library (1.7.x). The problem is decryption. As I’ve explained above, right now we need to receive the stream and save the encrypted data to storage first, and then perform the extra step of reading, decrypting and saving the data back. That extra part consumes 2/3 of the total time. Improving the xbstream tool to perform stream decryption/decompression on the fly would allow you to get very good results.
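The “one rsync process per file” idea from the first observation can be approximated with `find` and `xargs -P`. This is a sketch of the general technique and not the rsync_improved code itself. The `TRANSFER` variable and the `parallel_transfer` function are hypothetical names, and the default rsync flags are an assumption.

```shell
#!/bin/sh
# Per-file transfer command; word splitting on this variable is intentional.
TRANSFER="${TRANSFER:-rsync -a --relative}"

# Runs one transfer process per file under src, up to "jobs" at a time.
# Usage: parallel_transfer <src_dir> <destination> [jobs]
parallel_transfer() {
    src="$1"
    dest="$2"
    jobs="${3:-4}"
    (
        cd "$src" || exit 1
        # -print0 / -0 keep file names with spaces intact;
        # -I{} makes xargs start one command per file.
        find . -type f -print0 |
            xargs -0 -P "$jobs" -I{} $TRANSFER {} "$dest"
    )
}
```

A call such as `parallel_transfer /var/lib/mysql rsync://127.0.0.1:8873/sst/ 8` (destination and job count are placeholders) would push up to eight files at once through a local tunnel endpoint. When the tunnel forks a process per connection, encryption is spread across cores as well.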

Testing Details

For testing purposes, I’ve created a script, “sst-bench.sh”, that covers all the methods used in this post. You can use it to measure all the above SST methods in your environment. To run the script, first adjust several environment variables at the beginning of the script: joiner IP, datadir locations on the joiner and donor hosts, etc. After that, put the script on the “donor” and “joiner” hosts and run it as follows:

    #joiner_host> sst_bench.sh --mode=joiner --sst-mode=<tar|xbackup|rsync> --cipher=<DEFAULT|AES128|AES256|CHACHA20> --ssl=<0|1> --aesni=<0|1>
    #donor_host>  sst_bench.sh --mode=donor --sst-mode=<tar|xbackup|rsync|rsync_improved> --cipher=<DEFAULT|AES128|AES256|CHACHA20> --ssl=<0|1> --aesni=<0|1>
