In this blog post, we’ll evaluate the performance of an SST data transfer without encryption.

A State Snapshot Transfer (SST) operation is an important part of Percona XtraDB Cluster. It’s used to provision the joining node with all the necessary data. There are three SST methods available: mysqldump, rsync, and xtrabackup. The most advanced one – xtrabackup – is the default method for SST in Percona XtraDB Cluster.

We decided to evaluate the current state of xtrabackup, focusing on the process of transferring data between the donor and joiner nodes to find out if there is any room for improvements or optimizations.

Since the security of the network connections used in a Percona XtraDB Cluster deployment is one of the most important factors that affect SST performance, we will evaluate SST operations in two setups: without network encryption, and in a secure environment.

In this post, we will take a look at the setup without network encryption.

Setup:

In our test, we will measure the amount of time it takes to stream all necessary data from the donor to the joiner with the help of one of SST’s methods.

Before testing, I measured read/write bandwidth limits of the attached SSD drives (with the help of sysbench/fileio): they are ~530-540MB/sec. That means that the best theoretical time to transfer all of our database files (122GB) is ~230sec.
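The ~230sec estimate can be reproduced with a quick calculation. The sketch below is only a back-of-the-envelope check: it assumes 1GB = 1024MB, and the function name `best_transfer_time` is made up for illustration. With decimal units (1GB = 1000MB) the result is a few seconds lower, so both conventions land near ~230sec.

```shell
# Lower bound on transfer time when storage bandwidth is the only limit.
# Assumption: 1 GB = 1024 MB. The function name is hypothetical.
best_transfer_time() {
  # $1 = data size in GB, $2 = storage bandwidth in MB/sec
  awk -v gb="$1" -v mbps="$2" 'BEGIN { printf "%d\n", (gb * 1024) / mbps }'
}

# 122GB of database files at the low, middle and high end of the measured range
best_transfer_time 122 530
best_transfer_time 122 535
best_transfer_time 122 540
```

Any SST method that gets close to this number is effectively limited by the drives rather than by the streaming tool.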

Schematic view of SST methods:

```
(donor) tar | socat                  socat | tar (joiner)
(donor) rsync                        rsync(daemon mode) (joiner)
(donor) xtrabackup | socat           socat | xbstream (joiner)
```
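To make the schematic more concrete, here is a rough dry-run sketch of what the tar and xtrabackup pipelines could look like on each side. It is not the exact command line used in our tests: the host address, port, data directory and the function names `donor_cmd`/`joiner_cmd` are placeholders chosen for illustration, and the script only prints the pipelines instead of running them.

```shell
# Dry-run sketch: prints the pipelines instead of executing them.
# JOINER_HOST, PORT and DATADIR are hypothetical placeholders.
JOINER_HOST="192.0.2.10"
PORT="4444"
DATADIR="/var/lib/mysql"

# Donor side: produce a stream and push it to the joiner over TCP.
donor_cmd() {
  case "$1" in
    tar)
      echo "tar -C $DATADIR -cf - . | socat -u STDIO TCP:$JOINER_HOST:$PORT" ;;
    xtrabackup)
      echo "xtrabackup --backup --stream=xbstream --target-dir=/tmp | socat -u STDIO TCP:$JOINER_HOST:$PORT" ;;
    *)
      echo "unknown method: $1" >&2; return 1 ;;
  esac
}

# Joiner side: listen on the port and unpack the incoming stream.
joiner_cmd() {
  case "$1" in
    tar)
      echo "socat -u TCP-LISTEN:$PORT,reuseaddr STDIO | tar -C $DATADIR -xf -" ;;
    xtrabackup)
      echo "socat -u TCP-LISTEN:$PORT,reuseaddr STDIO | xbstream -x -C $DATADIR" ;;
    *)
      echo "unknown method: $1" >&2; return 1 ;;
  esac
}

# The joiner has to be listening before the donor starts streaming.
joiner_cmd tar
donor_cmd tar
```

Wrapping the donor-side pipeline in `time` gives the streaming time for that method.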

At the end of this post, you will find the command lines used for testing each SST method.

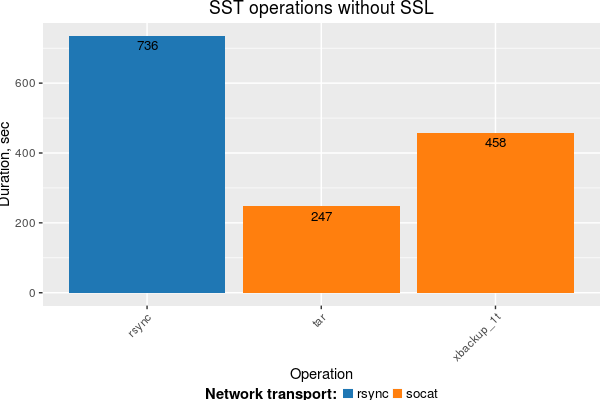

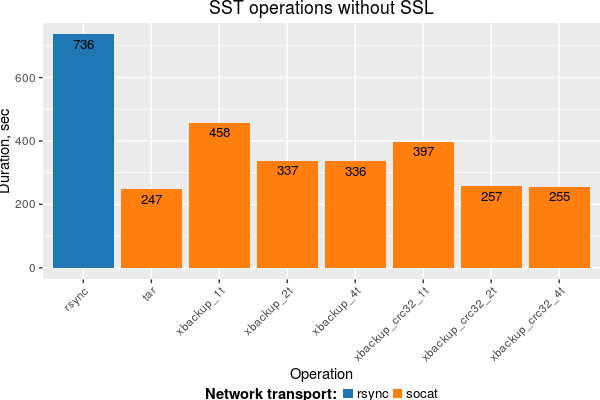

Streaming our database files with tar took the least time, and the result is very close to the best possible time (~230sec). xtrabackup is slower (~2x), as is rsync (~3x).

From profiling xtrabackup, we can clearly see two things:

Issue 1

xtrabackup can process data in parallel; however, by default it uses only a single thread. Our tests showed that increasing the number of parallel threads to 2 or 4 with the --parallel option improves IO utilization and reduces streaming time. You can pass this option to xtrabackup by adding the following to the [sst] section of my.cnf:

```
[sst]
inno-backup-opts="--parallel=4"
```

Issue 2

By default xtrabackup uses software-based crc32 functions from the libz library. Replacing this function with a hardware-optimized one allows a notable reduction in CPU usage and a speedup in data transfer. This fix will be included in the next release of xtrabackup.

We ran more tests for xtrabackup with the --parallel option and the hardware-optimized crc32, and the results confirm our analysis. Streaming time for xtrabackup is now very close to the baseline and to the storage limits.

Testing details

For the purposes of testing, I’ve created a script “sst-bench.sh” that covers all the methods used in this post. You can use it to measure all of the above SST methods in your environment. To run the script, you have to adjust several environment variables at the beginning, such as the joiner IP, the datadir locations on the joiner and donor hosts, etc. After that, copy the script to the “donor” and “joiner” hosts and run it as follows:

```
#joiner_host> sst_bench.sh --mode=joiner --sst-mode=<tar|xbackup|rsync>
#donor_host> sst_bench.sh --mode=donor --sst-mode=<tar|xbackup|rsync|rsync_improved>
```

Thanks for the info. We were already using extra memory on the apply side and your suggestion seems to be worthwhile to help on the donor side, however, our bottleneck appears to be the socat transfer for a 3TB database. Any thoughts on how this can be made parallel as well?

John,

There are a few aspects here:

– With the help of the bbcp tool, you can measure how long it would take in the ‘ideal’ case to transfer 3TB of data in your environment. That tool can transfer data in parallel very efficiently:

time bbcp -P 2 -w 8M -s8 -N io 'tar -c -O' :'tar -C -xf -'

– For socat, you may try to adjust the data transfer block size (-b) and rcvbuf/sndbuf.

– Ensure that the storage/fs on the joiner and donor sides is tuned properly.

– In general, with the current design xtrabackup may read/write/(de|en)crypt/(de)compress in parallel, but streaming is serialized, so even if you parallelize the network streaming (socat or something else), xtrabackup will still output only one stream. However, I would note that with the new crc32 algorithm and the “--parallel” option, the overhead from this serialization is quite low, so the difference in time between transferring ‘pure’ data with bbcp and with xtrabackup should not be significant.
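As an illustration of the socat suggestion above, here is a dry-run sketch of where the block size and socket buffer settings would go. The values are hypothetical starting points for experimentation rather than tested recommendations, and the host, port, directory and function names are placeholders; the script only prints the pipelines.

```shell
# Dry-run sketch: prints tuned socat pipelines instead of executing them.
# All values are hypothetical starting points, not recommendations.
JOINER_HOST="192.0.2.10"   # placeholder address
PORT="4444"                # placeholder port
DATADIR="/var/lib/mysql"   # placeholder data directory
BLOCK_SIZE="1048576"       # socat -b: data transfer block size in bytes
SOCK_BUF="4194304"         # sndbuf/rcvbuf: socket buffer size in bytes

# Joiner: larger receive buffer on the listening socket.
tuned_joiner_cmd() {
  echo "socat -b$BLOCK_SIZE -u TCP-LISTEN:$PORT,reuseaddr,rcvbuf=$SOCK_BUF STDIO | xbstream -x -C $DATADIR"
}

# Donor: larger send buffer on the outgoing connection.
tuned_donor_cmd() {
  echo "xtrabackup --backup --parallel=4 --stream=xbstream --target-dir=/tmp | socat -b$BLOCK_SIZE -u STDIO TCP:$JOINER_HOST:$PORT,sndbuf=$SOCK_BUF"
}

tuned_joiner_cmd
tuned_donor_cmd
```

It is worth changing one parameter at a time and comparing against the bbcp measurement to see whether the network leg is really the bottleneck.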