In this blog post, we’ll look at how to set up ProxySQL for high availability.

Over the last few months, we’ve had many opportunities to present and discuss a very powerful tool that will be used more and more in architectures supporting MySQL: ProxySQL.

ProxySQL is becoming more flexible, solid, performant and widely used every day (see http://www.proxysql.com/ and the more recent http://www.proxysql.com/compare).

The tool is a winner when compared to similar ones, and we should all have a clear(er) idea of how to integrate it in our architectures in order to achieve the best results.

The first thing to keep in mind is that ProxySQL doesn’t natively support any high availability solution. We can set up a MySQL cluster and achieve four or even five nines of HA. But if we include ProxySQL as it is, as a single block, our HA setup has a single point of failure (SPOF) that can drag us down in the case of a crash.

To solve this, the most common solution is setting up ProxySQL as part of a tile architecture, where Application/ProxySQL are deployed together.

This is a good solution for some cases, and it certainly reduces network hops, but it might be less than practical when our architecture has a very high number of tiles (hundreds of application servers, which is not so unusual nowadays).

In that case, managing ProxySQL is challenging. But more problematically, ProxySQL must perform several checks on the destination servers (MySQL). If we have 400 instances of ProxySQL, we end up keeping our databases busy just performing the checks.
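
To put a rough number on that, here is a back-of-the-envelope sketch. The function name and the 1500 ms check interval are illustrative assumptions, not values read from a real ProxySQL configuration; substitute your own monitor intervals.

```shell
# Rough estimate of how many monitor checks per second each MySQL backend
# receives when every ProxySQL instance runs the same periodic check.
# Both arguments are assumptions you supply; nothing is read from ProxySQL.
checks_per_second() {
    instances=$1      # number of ProxySQL instances (e.g. one per tile)
    interval_ms=$2    # interval between checks on one instance, in ms
    awk -v n="$instances" -v i="$interval_ms" \
        'BEGIN { printf "%.1f\n", n * 1000 / i }'
}

# 400 tiles, each checking every backend every 1500 ms (illustrative value)
checks_per_second 400 1500   # prints 266.7
```

With the same assumed interval, three centralized ProxySQL nodes would generate only about two checks per second per backend.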

In short, this is not a smart move.

Another possible approach is to have two layers of ProxySQL, one close to the application and another in the middle to connect to the database.

I personally don’t like this approach for many reasons. The most relevant reasons are that this approach creates additional complexity in the management of the platform, and it adds network hops.

So what can be done?

I love the KISS principle because I am lazy and don’t want to reinvent the wheel someone else has already invented. I also like it when my customers don’t need to depend on me or any other colleagues after I am done and gone. They must be able to manage, understand and fix their environment by themselves.

To keep things simple, here is my checklist:

What can I use for the remaining cases?

The answer comes from combining existing solutions and existing blocks: Keepalived + ProxySQL + MySQL.

For an explanation of Keepalived, visit http://www.keepalived.org/.

Short description

“Keepalived is a routing software written in C. The main goal of this project is to provide simple and robust facilities for load balancing and high-availability to Linux system and Linux-based infrastructures. The load balancing framework relies on well-known and widely used Linux Virtual Server (IPVS) kernel module providing Layer4 load balancing. Keepalived implements a set of checkers to dynamically and adaptively maintain and manage load-balanced server pool according to their health. On the other hand, high-availability is achieved by VRRP protocol. VRRP is a fundamental brick for router failover. Also, Keepalived implements a set of hooks to the VRRP finite state machine providing low-level and high-speed protocol interactions. Keepalived frameworks can be used independently or all together to provide resilient infrastructures.”

Bingo! This is exactly what we need for our ProxySQL setup.

Below, I will explain how to set up:

All we want to do is to prevent ProxySQL from becoming a SPOF. And while doing that, we need to reduce network hops as much as possible (keeping the solution SIMPLE).

Another important concept to keep in mind is that ProxySQL (re)start takes place in less than a second. This means that if it crashes, assuming it can be restarted by the angel process, doing that is much more efficient than any kind of failover mechanism. As such, your solution plan should keep in mind the ~1 second time of ProxySQL restart as a baseline.

Ready? Let’s go.

Choose three machines to host the combination of Keepalived and ProxySQL.

In the following example, I will use three machines for ProxySQL and Keepalived, and three hosting Percona XtraDB Cluster. You can place Keepalived+ProxySQL wherever you like (even on the same box as Percona XtraDB Cluster).

For the following examples, we will have:

    PXC
    node1 192.168.0.5   galera1h1n5
    node2 192.168.0.21  galera2h2n21
    node3 192.168.0.231 galera1h3n31

    ProxySQL-Keepalived
    test1 192.168.0.11
    test2 192.168.0.12
    test3 192.168.0.235

    VIP 192.168.0.88 /89/90

To check, I will use this table (please create it in your MySQL server):

    DROP TABLE IF EXISTS test.`testtable2`;
    CREATE TABLE test.`testtable2` (
      `autoInc` bigint(11) NOT NULL AUTO_INCREMENT,
      `a` varchar(100) COLLATE utf8_bin NOT NULL,
      `b` varchar(100) COLLATE utf8_bin NOT NULL,
      `host` varchar(100) COLLATE utf8_bin NOT NULL,
      `userhost` varchar(100) COLLATE utf8_bin NOT NULL,
      PRIMARY KEY (`autoInc`)
    ) ENGINE=InnoDB ROW_FORMAT=DYNAMIC;

And this bash TEST command to use later:

    while true; do
        # Timestamp taken on the client side, with microseconds
        export mydate=$(date +'%Y-%m-%d %H:%M:%S.%6N')
        mysql --defaults-extra-file=./my.cnf -h 192.168.0.88 -P 3311 --skip-column-names -b -e "BEGIN;set @userHost='a';select concat(user,'_', host) into @userHost from information_schema.processlist where user = 'load_RW' limit 1;insert into test.testtable2 values(NULL,'$mydate',SYSDATE(6),@@hostname,@userHost);commit;select * from test.testtable2 order by 1 DESC limit 1"
        sleep 1
    done

Once you have your ProxySQL up (run the same configuration on all ProxySQL nodes, it is much simpler), connect to the Admin interface and execute the following:

    DELETE FROM mysql_replication_hostgroups WHERE writer_hostgroup=500 ;
    DELETE FROM mysql_servers WHERE hostgroup_id IN (500,501);

    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.0.5',500,3306,1000000000);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.0.5',501,3306,100);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.0.21',500,3306,1000000);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.0.21',501,3306,1000000000);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.0.231',500,3306,100);
    INSERT INTO mysql_servers (hostname,hostgroup_id,port,weight) VALUES ('192.168.0.231',501,3306,1000000000);
    LOAD MYSQL SERVERS TO RUNTIME; SAVE MYSQL SERVERS TO DISK;

    DELETE FROM mysql_users WHERE username='load_RW';
    INSERT INTO mysql_users (username,password,active,default_hostgroup,default_schema,transaction_persistent) VALUES ('load_RW','test',1,500,'test',1);
    LOAD MYSQL USERS TO RUNTIME;SAVE MYSQL USERS TO DISK;

    DELETE FROM mysql_query_rules WHERE rule_id IN (200,201);
    INSERT INTO mysql_query_rules (rule_id,username,destination_hostgroup,active,retries,match_digest,apply) VALUES(200,'load_RW',500,1,3,'^SELECT.*FOR UPDATE',1);
    INSERT INTO mysql_query_rules (rule_id,username,destination_hostgroup,active,retries,match_digest,apply) VALUES(201,'load_RW',501,1,3,'^SELECT ',1);

    LOAD MYSQL QUERY RULES TO RUNTIME;SAVE MYSQL QUERY RULES TO DISK;

Create a my.cnf file in your default dir with:

    [mysql]
    user=load_RW
    password=test

First, set up the Keepalived configuration file (/etc/keepalived/keepalived.conf):

    global_defs {
      # Keepalived process identifier
      lvs_id proxy_HA
    }
    # Script used to check if Proxy is running
    vrrp_script check_proxy {
      script "killall -0 proxysql"
      interval 2
      weight 2
    }
    # Virtual interface
    # The priority specifies the order in which the assigned interface to take over in a failover
    vrrp_instance VI_01 {
      state MASTER
      interface em1
      virtual_router_id 51
      priority <calculate on the WEIGHT for each node>

      # The virtual ip address shared between the two loadbalancers
      virtual_ipaddress {
        192.168.0.88 dev em1
      }
      track_script {
        check_proxy
      }
    }

Given the above, and since I want TEST1 to have the highest priority, we will set the priorities as follows:

    test1 = 101
    test2 = 100
    test3 = 99

Modify the config in each node with the above values and (re)start Keepalived.
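
To see why these values produce the intended failover order, it helps to compute the effective priority of each node. As I understand Keepalived's documented behavior, a positive `weight` on a tracked script is added to the instance priority while the script succeeds. The sketch below is a toy model of that rule, not Keepalived code, and the function name is made up for illustration.

```shell
# Toy model: effective VRRP priority of a node, assuming a positive
# script weight is added only while the check script succeeds.
effective_priority() {
    base=$1        # "priority" from vrrp_instance
    weight=$2      # "weight" from vrrp_script (positive here)
    check_ok=$3    # 1 if "killall -0 proxysql" succeeds, 0 otherwise
    if [ "$check_ok" -eq 1 ]; then
        echo $(( base + weight ))
    else
        echo "$base"
    fi
}

# All ProxySQL instances healthy: test1 has the highest value.
echo "test1=$(effective_priority 101 2 1) test2=$(effective_priority 100 2 1) test3=$(effective_priority 99 2 1)"
# ProxySQL on test1 is down: test1 drops below test2.
echo "test1=$(effective_priority 101 2 0) test2=$(effective_priority 100 2 1) test3=$(effective_priority 99 2 1)"
```

Under this model, the gap between adjacent base priorities (1) is smaller than the script weight (2), which is what lets a healthy lower-priority node overtake a node whose ProxySQL has died.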

If all is set correctly, you will see the following in the system log of the TEST1 machine:

    Jan 10 17:56:56 mysqlt1 systemd: Started LVS and VRRP High Availability Monitor.
    Jan 10 17:56:56 mysqlt1 Keepalived_healthcheckers[6183]: Configuration is using : 6436 Bytes
    Jan 10 17:56:56 mysqlt1 Keepalived_healthcheckers[6183]: Using LinkWatch kernel netlink reflector...
    Jan 10 17:56:56 mysqlt1 Keepalived_vrrp[6184]: Configuration is using : 63090 Bytes
    Jan 10 17:56:56 mysqlt1 Keepalived_vrrp[6184]: Using LinkWatch kernel netlink reflector...
    Jan 10 17:56:56 mysqlt1 Keepalived_vrrp[6184]: VRRP sockpool: [ifindex(2), proto(112), unicast(0), fd(10,11)]
    Jan 10 17:56:56 mysqlt1 Keepalived_vrrp[6184]: VRRP_Script(check_proxy) succeeded
    Jan 10 17:56:57 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Transition to MASTER STATE
    Jan 10 17:56:57 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Received lower prio advert, forcing new election
    Jan 10 17:56:57 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Received higher prio advert
    Jan 10 17:56:57 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Entering BACKUP STATE
    Jan 10 17:56:58 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) forcing a new MASTER election
    ...
    Jan 10 17:57:00 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Transition to MASTER STATE
    Jan 10 17:57:01 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Entering MASTER STATE <-- MASTER
    Jan 10 17:57:01 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) setting protocol VIPs.
    Jan 10 17:57:01 mysqlt1 Keepalived_healthcheckers[6183]: Netlink reflector reports IP 192.168.0.88 added
    Jan 10 17:57:01 mysqlt1 avahi-daemon[937]: Registering new address record for 192.168.0.88 on em1.IPv4.
    Jan 10 17:57:01 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Sending gratuitous ARPs on em1 for 192.168.0.88

In the other two machines:

    Jan 10 17:56:59 mysqlt2 Keepalived_vrrp[13107]: VRRP_Instance(VI_01) Entering BACKUP STATE <---

Which means the node is there as a Backup. 😀

Now it’s time to test our connection to our ProxySQL pool. From an application node, or just from your laptop, open three terminals. In each one:

    watch -n 1 'mysql -h <IP OF THE REAL PROXY (test1|test2|test3)> -P 3310 -uadmin -padmin -t -e "select * from stats_mysql_connection_pool where hostgroup in (500,501,9500,9501) order by hostgroup,srv_host ;" -e " select srv_host,command,avg(time_ms), count(ThreadID) from stats_mysql_processlist group by srv_host,command;" -e "select * from stats_mysql_commands_counters where Total_Time_us > 0;"'

Unless you are already sending queries to the proxies, they are doing nothing. Time to start the bash test loop shown above. If everything is working correctly, you will see the bash command reporting this:

    +----+----------------------------+----------------------------+-------------+----------------------------+
    | 49 | 2017-01-10 18:12:07.739152 | 2017-01-10 18:12:07.733282 | galera1h1n5 | load_RW_192.168.0.11:33273 |
    +----+----------------------------+----------------------------+-------------+----------------------------+
      ID   execution time in the bash   exec time inside mysql       node hostname user and where the connection is coming from

The three terminals running the watch command will show that ONLY the ProxySQL on TEST1 is currently getting/serving requests, because it is the one holding the VIP:

    +-----------+---------------+----------+--------+----------+----------+--------+---------+---------+-----------------+-----------------+------------+
    | hostgroup | srv_host      | srv_port | status | ConnUsed | ConnFree | ConnOK | ConnERR | Queries | Bytes_data_sent | Bytes_data_recv | Latency_ms |
    +-----------+---------------+----------+--------+----------+----------+--------+---------+---------+-----------------+-----------------+------------+
    | 500       | 192.168.0.21  | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0       | 0               | 0               | 629        |
    | 500       | 192.168.0.231 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0       | 0               | 0               | 510        |
    | 500       | 192.168.0.5   | 3306     | ONLINE | 0        | 0        | 3      | 0       | 18      | 882             | 303             | 502        |
    | 501       | 192.168.0.21  | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0       | 0               | 0               | 629        |
    | 501       | 192.168.0.231 | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0       | 0               | 0               | 510        |
    | 501       | 192.168.0.5   | 3306     | ONLINE | 0        | 0        | 0      | 0       | 0       | 0               | 0               | 502        |
    +-----------+---------------+----------+--------+----------+----------+--------+---------+---------+-----------------+-----------------+------------+
    +---------+---------------+-----------+-----------+-----------+---------+---------+----------+----------+-----------+-----------+--------+--------+---------+----------+
    | Command | Total_Time_us | Total_cnt | cnt_100us | cnt_500us | cnt_1ms | cnt_5ms | cnt_10ms | cnt_50ms | cnt_100ms | cnt_500ms | cnt_1s | cnt_5s | cnt_10s | cnt_INFs |
    +---------+---------------+-----------+-----------+-----------+---------+---------+----------+----------+-----------+-----------+--------+--------+---------+----------+
    | BEGIN   | 9051          | 3         | 0         | 0         | 0       | 3       | 0        | 0        | 0         | 0         | 0      | 0      | 0       | 0        |
    | COMMIT  | 47853         | 3         | 0         | 0         | 0       | 0       | 0        | 3        | 0         | 0         | 0      | 0      | 0       | 0        |
    | INSERT  | 3032          | 3         | 0         | 0         | 1       | 2       | 0        | 0        | 0         | 0         | 0      | 0      | 0       | 0        |
    | SELECT  | 8216          | 9         | 3         | 0         | 3       | 3       | 0        | 0        | 0         | 0         | 0      | 0      | 0       | 0        |
    | SET     | 2154          | 3         | 0         | 0         | 3       | 0       | 0        | 0        | 0         | 0         | 0      | 0      | 0       | 0        |
    +---------+---------------+-----------+-----------+-----------+---------+---------+----------+----------+-----------+-----------+--------+--------+---------+----------+

This is all as expected. Time to see if the failover-failback works along the chain.

Let us kill the ProxySQL on TEST1 while the test bash command is running.

    killall -9 proxysql

Here is what you will get:

    +----+----------------------------+----------------------------+-------------+----------------------------+
    | 91 | 2017-01-10 18:19:06.188233 | 2017-01-10 18:19:06.183327 | galera1h1n5 | load_RW_192.168.0.11:33964 |
    +----+----------------------------+----------------------------+-------------+----------------------------+
    ERROR 2003 (HY000): Can't connect to MySQL server on '192.168.0.88' (111)
    +----+----------------------------+----------------------------+-------------+----------------------------+
    | 94 | 2017-01-10 18:19:08.250093 | 2017-01-10 18:19:11.250927 | galera1h1n5 | load_RW_192.168.0.12:39635 | <-- note
    +----+----------------------------+----------------------------+-------------+----------------------------+

The source changed, but not the Percona XtraDB Cluster node. If you check the system log for TEST1:

    Jan 10 18:19:06 mysqlt1 Keepalived_vrrp[6184]: VRRP_Script(check_proxy) failed
    Jan 10 18:19:07 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Received higher prio advert
    Jan 10 18:19:07 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) Entering BACKUP STATE
    Jan 10 18:19:07 mysqlt1 Keepalived_vrrp[6184]: VRRP_Instance(VI_01) removing protocol VIPs.
    Jan 10 18:19:07 mysqlt1 Keepalived_healthcheckers[6183]: Netlink reflector reports IP 192.168.0.88 removed

While on TEST2:

    Jan 10 18:19:08 mysqlt2 Keepalived_vrrp[13107]: VRRP_Instance(VI_01) Transition to MASTER STATE
    Jan 10 18:19:09 mysqlt2 Keepalived_vrrp[13107]: VRRP_Instance(VI_01) Entering MASTER STATE
    Jan 10 18:19:09 mysqlt2 Keepalived_vrrp[13107]: VRRP_Instance(VI_01) setting protocol VIPs.
    Jan 10 18:19:09 mysqlt2 Keepalived_healthcheckers[13106]: Netlink reflector reports IP 192.168.0.88 added
    Jan 10 18:19:09 mysqlt2 Keepalived_vrrp[13107]: VRRP_Instance(VI_01) Sending gratuitous ARPs on em1 for 192.168.0.88

Simple, and elegant. No need to re-invent the wheel to get a smooth working process.

The total recovery time for the ProxySQL crash is about 5.06 seconds, taking the widest window: from the last application timestamp before the crash (2017-01-10 18:19:06.188233) to the first timestamp recorded inside Percona XtraDB Cluster after recovery (2017-01-10 18:19:11.250927).

This is the worst-case scenario, keeping in mind that we run the ProxySQL check every two seconds (so the real recovery window is at most 5-2=3 seconds).
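
The arithmetic behind that figure can be reproduced with a small helper. This is only a sketch: the function name is hypothetical, and it assumes both timestamps fall on the same day.

```shell
# Elapsed seconds between two same-day timestamps of the form HH:MM:SS.ffffff.
elapsed_seconds() {
    awk -v a="$1" -v b="$2" '
        # p is a local array used to hold the split fields
        function secs(t,    p) { split(t, p, ":"); return p[1] * 3600 + p[2] * 60 + p[3] }
        BEGIN { printf "%.2f\n", secs(b) - secs(a) }'
}

# Last application timestamp before the crash vs. first MySQL timestamp after it
elapsed_seconds 18:19:06.188233 18:19:11.250927   # prints 5.06
```

Subtracting the two-second check interval from that result gives the roughly three-second window mentioned above.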

OK, what about fail-back?

Let us restart the proxysql service:

    /etc/init.d/proxysql start    # or the systemctl equivalent

Here’s the output:

    +-----+----------------------------+----------------------------+-------------+----------------------------+
    | 403 | 2017-01-10 18:29:34.550304 | 2017-01-10 18:29:34.545970 | galera1h1n5 | load_RW_192.168.0.12:40330 |
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    | 406 | 2017-01-10 18:29:35.597984 | 2017-01-10 18:29:38.599496 | galera1h1n5 | load_RW_192.168.0.11:34640 |
    +-----+----------------------------+----------------------------+-------------+----------------------------+

The worst recovery time is 4.04 seconds, of which 2 seconds are a delay due to the check interval.

Of course, the test runs once per second and performs a single operation. As such, the visible impact is minimal (no error on fail-back), while the measured recovery time looks longer than it really is.

Let’s check another thing: is the failover working as expected? Test1 -> 2 -> 3? Let’s kill 1 and 2 and see:

    Kill Test1 :
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    | 448 | 2017-01-10 18:35:43.092878 | 2017-01-10 18:35:43.086484 | galera1h1n5 | load_RW_192.168.0.11:35240 |
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    | 451 | 2017-01-10 18:35:47.188307 | 2017-01-10 18:35:50.191465 | galera1h1n5 | load_RW_192.168.0.12:40935 |
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    ...
    Kill Test2
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    | 463 | 2017-01-10 18:35:54.379280 | 2017-01-10 18:35:54.373331 | galera1h1n5 | load_RW_192.168.0.12:40948 |
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    +-----+----------------------------+----------------------------+-------------+-----------------------------+
    | 466 | 2017-01-10 18:36:08.603754 | 2017-01-10 18:36:09.602075 | galera1h1n5 | load_RW_192.168.0.235:33268 |
    +-----+----------------------------+----------------------------+-------------+-----------------------------+

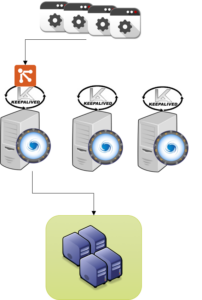

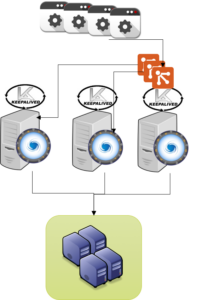

This image is where you should be at the end:

The last server is Test3. In this case, I killed one server immediately after the other. Keepalived took a bit longer to fail over, but it still did so correctly, following the planned chain.

Fail-back is smooth as usual:

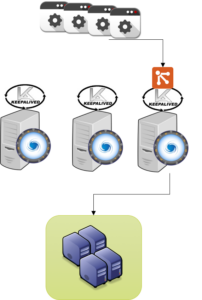

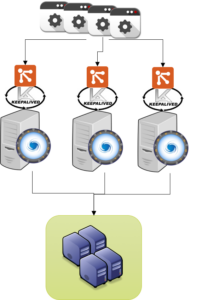

The case above is simple. But as a caveat, I can only access one ProxySQL at a time. This might or might not be what you want. In any case, it might be nice to be able to choose the ProxySQL. With Keepalived, you can. We can set up any number of VIPs and associate them with each test box.

The result will be that each server hosting ProxySQL will also host a VIP, and will eventually be able to fail-over to any of the other two servers.

Failing-over/back is fully managed by Keepalived, checking as we did before if ProxySQL is running. An example of the configuration of one node can be seen below:

    global_defs {
      # Keepalived process identifier
      lvs_id proxy_HA
    }
    # Script used to check if Proxy is running
    vrrp_script check_proxy {
      script "killall -0 proxysql"
      interval 2
      weight 3
    }

    # Virtual interface 1
    # The priority specifies the order in which the assigned interface to take over in a failover
    vrrp_instance VI_01 {
      state MASTER
      interface em1
      virtual_router_id 51
      priority 102

      # The virtual ip address shared between the two loadbalancers
      virtual_ipaddress {
        192.168.0.88 dev em1
      }
      track_script {
        check_proxy
      }
    }

    # Virtual interface 2
    # The priority specifies the order in which the assigned interface to take over in a failover
    vrrp_instance VI_02 {
      state MASTER
      interface em1
      virtual_router_id 52
      priority 100

      # The virtual ip address shared between the two loadbalancers
      virtual_ipaddress {
        192.168.0.89 dev em1
      }
      track_script {
        check_proxy
      }
    }

    # Virtual interface 3
    # The priority specifies the order in which the assigned interface to take over in a failover
    vrrp_instance VI_03 {
      state MASTER
      interface em1
      virtual_router_id 53
      priority 99

      # The virtual ip address shared between the two loadbalancers
      virtual_ipaddress {
        192.168.0.90 dev em1
      }
      track_script {
        check_proxy
      }
    }

The tricky part, in this case, is to play with the PRIORITY for each VIP and each server, such that you will NOT assign the same IP twice.
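
One way to reason about this is to write the priorities down as a matrix of VIPs by nodes. The sketch below uses a hypothetical rotation scheme of my own for illustration; these are not the values from the configuration above. Each VIP prefers a different node, and the largest gap between priorities (2) stays below the script weight (3).

```shell
# Hypothetical priority scheme for 3 VIPs on 3 nodes (indices 0..2).
# Each VIP prefers a different node; the fallback order rotates.
vip_priority() {
    v=$1    # VIP index: 0 -> 192.168.0.88, 1 -> .89, 2 -> .90
    n=$2    # node index: 0 -> test1, 1 -> test2, 2 -> test3
    echo $(( 102 - ( (n - v + 3) % 3 ) ))
}

# Print one row per VIP with the priority to configure on each node.
for vip in 0 1 2; do
    line="VIP$(( vip + 1 )):"
    for node in 0 1 2; do
        line="$line test$(( node + 1 ))=$(vip_priority "$vip" "$node")"
    done
    echo "$line"
done
```

With this layout, every row and every column contains each priority exactly once, so under normal conditions no two nodes claim the same VIP and each node holds exactly one.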

Performing a check with the test bash, we have:

    Test 1 crash
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    | 422 | 2017-01-11 18:30:14.411668 | 2017-01-11 18:30:14.344009 | galera1h1n5 | load_RW_192.168.0.11:55962 |
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    ERROR 2003 (HY000): Can't connect to MySQL server on '192.168.0.88' (111)
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    | 426 | 2017-01-11 18:30:18.531279 | 2017-01-11 18:30:21.473536 | galera1h1n5 | load_RW_192.168.0.12:49728 | <-- new server
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    ....
    Test 2 crash
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    | 450 | 2017-01-11 18:30:27.885213 | 2017-01-11 18:30:27.819432 | galera1h1n5 | load_RW_192.168.0.12:49745 |
    +-----+----------------------------+----------------------------+-------------+----------------------------+
    ERROR 2003 (HY000): Can't connect to MySQL server on '192.168.0.88' (111)
    +-----+----------------------------+----------------------------+-------------+-----------------------------+
    | 454 | 2017-01-11 18:30:30.971708 | 2017-01-11 18:30:37.916263 | galera1h1n5 | load_RW_192.168.0.235:33336 | <-- new server
    +-----+----------------------------+----------------------------+-------------+-----------------------------+

The final state of IPs on Test3:

    enp0s8: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
        link/ether 08:00:27:c2:16:3f brd ff:ff:ff:ff:ff:ff
        inet 192.168.0.235/24 brd 192.168.0.255 scope global enp0s8 <-- Real IP
           valid_lft forever preferred_lft forever
        inet 192.168.0.90/32 scope global enp0s8 <--- VIP 3
           valid_lft forever preferred_lft forever
        inet 192.168.0.89/32 scope global enp0s8 <--- VIP 2
           valid_lft forever preferred_lft forever
        inet 192.168.0.88/32 scope global enp0s8 <--- VIP 1
           valid_lft forever preferred_lft forever
        inet6 fe80::a00:27ff:fec2:163f/64 scope link
           valid_lft forever preferred_lft forever

And this is the image:

Recovery times:

    test 1 crash = 7.06 sec (worst-case scenario)
    test 2 crash = 10.03 sec (worst-case scenario)

In this example, I used a test that checks the process. But a check can be anything reporting 0|1. The limit is defined only by what you need.
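
As a minimal sketch of that idea, the wrapper below turns any probe command into a 0|1 result. The function name and the commented-out probes are illustrative assumptions (in particular, the credentials file path is a placeholder), so adapt them to your environment.

```shell
# Wrap any probe command so it reports success (0) or failure (1).
# The probe itself is whatever command you pass as arguments.
proxysql_check() {
    if "$@" >/dev/null 2>&1; then
        return 0    # healthy: exit status 0 means the check passed
    fi
    return 1        # any probe failure is reported as 1
}

# Example probes (commented out so the sketch has no side effects):
# proxysql_check killall -0 proxysql
# proxysql_check mysql --defaults-extra-file=/path/to/check.cnf -h 127.0.0.1 -P 3311 -e 'SELECT 1'
```

The second probe goes one step further than the process check: it only succeeds if ProxySQL accepts a connection and answers a query, which catches a process that is alive but not serving traffic.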

Failover times can be significantly shorter if you reduce the check interval and count only the time taken to move the VIP. I preferred to show the worst-case scenario, using an application with a one-second interval. This is a pessimistic view of what normally happens with real traffic.

I was looking for a simple, simple, simple way to add HA to ProxySQL – something that can be easily integrated with automation, and that is actually also well-established and maintained. In my opinion, using Keepalived is a good solution because it matches all the above expectations.

Implementing a set of ProxySQL nodes, and having Keepalived manage the failover between them, is pretty easy. But you can expand the usage (and the complexity) if you need to, counting on tools that are already part of the Linux stack. There is no need to re-invent the wheel with a crazy mechanism.

If you want to have fun doing crazy things, at least start with something that helps you to go beyond the basics. For instance, I was also playing a bit with Keepalived and a virtual server, creating a set of redundant ProxySQL with load balancers and . . . but that is another story (blog).

😀

Great MySQL and ProxySQL to all!

Hi Marco,

Nice write-up and experimentation. I understand the idea of keeping ProxySQL simple while improving its availability. But I still do not understand how multiple VIPs, as in your last case, actually work for applications accessing ProxySQL. Won’t an application point to just one of the VIPs?

Thank you.

Best regards,

Okky

If the solution dictated the use of ProxySQL as part of a tile architecture, what would you recommend as an orchestration tool to keep the ProxySQL config+users in sync?

Hi,

How do we address Split brains with keepalived in “A simple solution based on a single VIP” setup?

Great article Marco. May I suggest removing the weight from check_proxy script? e.g.

    vrrp_script check_proxy {
      script "killall -0 proxysql"
      interval 2
    }

Not sure if there is another reason for having that, but I found Keepalived’s behaviour a bit more straightforward this way. It will simply fail over if the script is not successful; otherwise it will subtract the script weight you specify from the priority of the vrrp_instance and decide whether to fail over based on that.

Hello

How would you replicate the creation of new users across the three ProxySQL instances?

@Lina, you have to implement ProxySQL Cluster. More details here: https://www.percona.com/blog/2018/06/11/proxysql-experimental-feature-native-clustering/