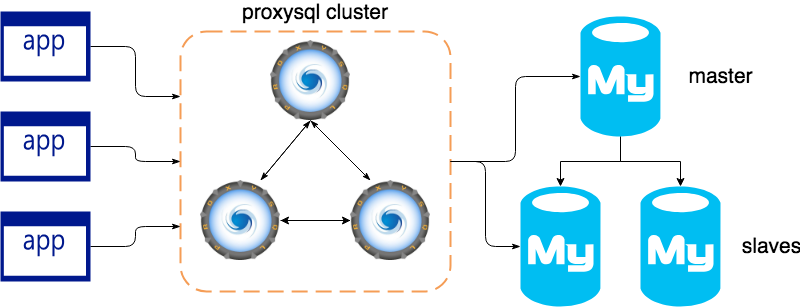

ProxySQL 1.4.2 introduced native clustering, allowing several ProxySQL instances to communicate with and share configuration updates with each other. In this blog post, I’ll review this new feature and how we can start working with 3 nodes.

Before I continue, let’s review two common ways of installing ProxySQL.

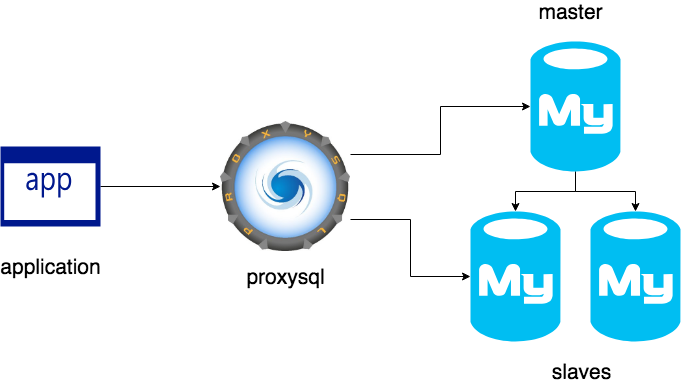

This is the most common installation, where ProxySQL sits between the application servers and the database. It is simple but provides no high availability: if ProxySQL goes down, you lose all connectivity to the database.

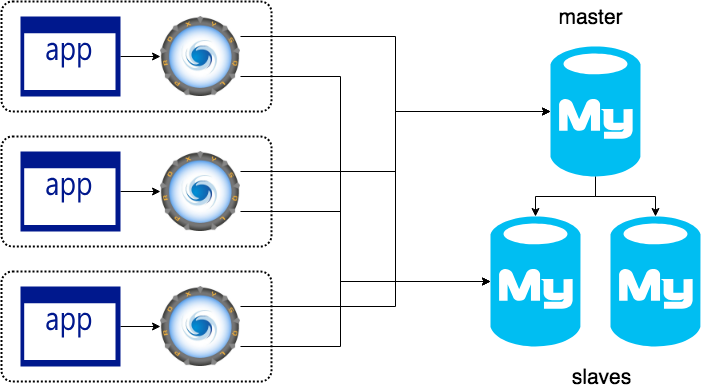

Another common setup is to install ProxySQL onto each application server. This is good because the loss of one ProxySQL/App server will not bring down the entire application.

For more information about these two installation options, see the post “Where Do I Put ProxySQL?”

Sometimes our application and databases grow fast. Maybe you need to add a load balancer, for example, and in that moment you start thinking … “What could I do to configure and maintain all these ProxySQL nodes without mistakes?”

To do that, there are many tools like Ansible, Puppet, and Chef, but you will need to write and maintain scripts for those tasks. That is a lot for one person to administer.

Now, there is a native solution, built into ProxySQL, to create and administer a cluster in an easy way.

At the moment this feature is EXPERIMENTAL and subject to change. Think very carefully before installing it in production; in fact, I strongly recommend you wait. However, if you would like to start testing this feature, you need to install ProxySQL 1.4.2 or later.

This clustering feature is really useful if you have installed one ProxySQL per application instance, because all the changes in one of the ProxySQL nodes will be propagated to all the other ProxySQL nodes. You can also configure a “master-slave” style setup with ProxySQL clustering.

There are only 4 tables where you can make changes and propagate the configuration: mysql_query_rules, mysql_servers, mysql_users, and proxysql_servers.

It’s easy. When you run an INSERT, DELETE, or UPDATE on any of these tables and then run the command LOAD … TO RUNTIME, ProxySQL creates a new checksum of the table’s data and increments the version number in the table runtime_checksums_values. Below we can see an example.

    admin ((none))>SELECT name, version, FROM_UNIXTIME(epoch), checksum FROM runtime_checksums_values ORDER BY name;
    +-------------------+---------+----------------------+--------------------+
    | name              | version | FROM_UNIXTIME(epoch) | checksum           |
    +-------------------+---------+----------------------+--------------------+
    | admin_variables   | 0       | 1970-01-01 00:00:00  |                    |
    | mysql_query_rules | 1       | 2018-04-26 15:58:23  | 0x0000000000000000 |
    | mysql_servers     | 1       | 2018-04-26 15:58:23  | 0x0000000000000000 |
    | mysql_users       | 4       | 2018-04-26 18:36:12  | 0x2F35CAB62143AE41 |
    | mysql_variables   | 0       | 1970-01-01 00:00:00  |                    |
    | proxysql_servers  | 1       | 2018-04-26 15:58:23  | 0x0000000000000000 |
    +-------------------+---------+----------------------+--------------------+
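To make the version-and-checksum bookkeeping concrete, here is a minimal Python sketch of the idea. It is a toy model, not ProxySQL’s code: the `RuntimeTable` class and its `load_to_runtime` method are hypothetical names, and SHA-256 is only a stand-in for whatever hash ProxySQL uses internally.

```python
import hashlib
import time


class RuntimeTable:
    """Toy model of one row in runtime_checksums_values (hypothetical class)."""

    def __init__(self, name):
        self.name = name
        self.version = 0    # bumped on every load to runtime
        self.epoch = 0      # Unix time of the last load
        self.checksum = ""  # empty until the table is first loaded

    def load_to_runtime(self, rows):
        # Hash the rows in a stable order so identical data always
        # yields the same checksum, regardless of insertion order.
        digest = hashlib.sha256()  # stand-in hash, an assumption of this sketch
        for row in sorted(rows):
            digest.update(repr(row).encode("utf-8"))
        self.checksum = "0x" + digest.hexdigest()[:16].upper()
        self.version += 1
        self.epoch = int(time.time())


users = RuntimeTable("mysql_users")
users.load_to_runtime([("user1", "crazyPassword")])
first = users.checksum
users.load_to_runtime([("user1", "crazyPassword"), ("user2", "passwordCrazy")])
print(users.version, users.checksum != first)
```

Each load bumps the version, and the checksum only changes when the data does, which is what lets other nodes tell a real change from a no-op reload.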

Internally, every node monitors and communicates with all the other ProxySQL nodes. When a node detects that both the checksum and the version of a table have changed on a peer, it fetches a copy of the modified table, applies the same changes locally, loads the new configuration to RUNTIME so that it takes effect for the connected applications, and automatically saves it to DISK for persistence.
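The decision a node makes on each check can be sketched roughly as follows. This is a simplified model inferred from the log messages later in this post, not ProxySQL’s actual logic: `TableState` and `PeerWatcher` are hypothetical names, the rule "the peer’s version must be ahead of ours" is an assumption, and `diffs_before_sync` mirrors the `admin-cluster_*_diffs_before_sync` variables set in step 2.

```python
from dataclasses import dataclass


@dataclass
class TableState:
    version: int
    checksum: str


class PeerWatcher:
    """Tracks one table on one peer (hypothetical helper, simplified)."""

    def __init__(self, diffs_before_sync=3):
        self.diffs_before_sync = diffs_before_sync
        self.diff_check = 0  # consecutive checks where the peer differed

    def should_sync(self, local, peer):
        if peer.checksum == local.checksum:
            self.diff_check = 0  # in sync: reset the counter
            return False
        self.diff_check += 1
        # Assumption: only pull from a peer whose version is ahead of ours,
        # and only after the difference has been seen enough times.
        return (peer.version > local.version
                and self.diff_check >= self.diffs_before_sync)


local = TableState(version=1, checksum="0x0000000000000000")
peer = TableState(version=2, checksum="0x2AF43564C9985EC7")
watcher = PeerWatcher(diffs_before_sync=3)
decisions = [watcher.should_sync(local, peer) for _ in range(3)]
print(decisions)  # [False, False, True]
```

Waiting for several differing checks in a row keeps a node from pulling a table that a peer is still in the middle of changing.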

The following setup creates a “synchronous cluster” so any changes to these 4 tables on any ProxySQL server will be replicated to all other ProxySQL nodes. Be careful!

1) To start, we need at least 2 nodes. Download and install ProxySQL 1.4.2 or higher on each node and start from a clean installation.

2) On all nodes, we need to update the following global variables. These changes will set the username and password used by each node’s internal communication to cluster1/clusterpass. These must be the same on all nodes in this cluster.

    update global_variables set variable_value='admin:admin;cluster1:clusterpass' where variable_name='admin-admin_credentials';
    update global_variables set variable_value='cluster1' where variable_name='admin-cluster_username';
    update global_variables set variable_value='clusterpass' where variable_name='admin-cluster_password';

    update global_variables set variable_value=200 where variable_name='admin-cluster_check_interval_ms';
    update global_variables set variable_value=100 where variable_name='admin-cluster_check_status_frequency';

    update global_variables set variable_value='true' where variable_name='admin-cluster_mysql_query_rules_save_to_disk';
    update global_variables set variable_value='true' where variable_name='admin-cluster_mysql_servers_save_to_disk';
    update global_variables set variable_value='true' where variable_name='admin-cluster_mysql_users_save_to_disk';
    update global_variables set variable_value='true' where variable_name='admin-cluster_proxysql_servers_save_to_disk';

    update global_variables set variable_value=3 where variable_name='admin-cluster_mysql_query_rules_diffs_before_sync';
    update global_variables set variable_value=3 where variable_name='admin-cluster_mysql_servers_diffs_before_sync';
    update global_variables set variable_value=3 where variable_name='admin-cluster_mysql_users_diffs_before_sync';
    update global_variables set variable_value=3 where variable_name='admin-cluster_proxysql_servers_diffs_before_sync';

    load admin variables to RUNTIME;
    save admin variables to disk;
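As a rough guide to what these numbers mean in practice, the short Python calculation below estimates how long a change takes to be picked up. It assumes that one checksum comparison happens per check interval and that a difference must be seen on `diffs_before_sync` consecutive checks; treat the result as a ballpark, not a guarantee.

```python
# Values taken from the UPDATE statements above.
cluster_check_interval_ms = 200
diffs_before_sync = 3

# Assumption: one checksum comparison per check interval, and a change
# must be observed on this many consecutive checks before a node syncs.
min_delay_ms = cluster_check_interval_ms * diffs_before_sync
print(f"a peer change is pulled after roughly {min_delay_ms} ms")
```

Lowering either value should make propagation faster at the cost of more frequent checks between nodes.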

3) On every node, add the IPs of all the ProxySQL nodes in the cluster:

    INSERT INTO proxysql_servers (hostname,port,weight,comment) VALUES ('10.138.180.183',6032,100,'PRIMARY');
    INSERT INTO proxysql_servers (hostname,port,weight,comment) VALUES ('10.138.244.108',6032,99,'SECONDARY');
    INSERT INTO proxysql_servers (hostname,port,weight,comment) VALUES ('10.138.244.244',6032,98,'SECONDARY');

    LOAD PROXYSQL SERVERS TO RUNTIME;
    SAVE PROXYSQL SERVERS TO DISK;
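Because the same statements have to be run on every node, it can help to generate them from one shared list rather than typing them by hand. The Python sketch below does that; `proxysql_servers_sql` is a hypothetical helper written for this post, and it only builds strings for trusted, hand-written input (it does no escaping).

```python
def proxysql_servers_sql(nodes, port=6032):
    """Build the statements for step 3 from one shared node list.

    `nodes` is a list of (hostname, weight, comment) tuples.
    Values are interpolated directly, so use trusted input only.
    """
    statements = [
        "INSERT INTO proxysql_servers (hostname,port,weight,comment) "
        f"VALUES ('{host}',{port},{weight},'{comment}');"
        for host, weight, comment in nodes
    ]
    statements.append("LOAD PROXYSQL SERVERS TO RUNTIME;")
    statements.append("SAVE PROXYSQL SERVERS TO DISK;")
    return statements


nodes = [
    ("10.138.180.183", 100, "PRIMARY"),
    ("10.138.244.108", 99, "SECONDARY"),
    ("10.138.244.244", 98, "SECONDARY"),
]
for statement in proxysql_servers_sql(nodes):
    print(statement)
```

The printed statements match the ones above and can be pasted into the admin interface of each node.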

At this point, all nodes are synced.

In the next example from the log file, we can see when node1 detected node2.

    [root@proxysql1 ~]# tail /var/lib/proxysql/proxysql.log
    ...
    2018-05-10 11:19:51 [INFO] Cluster: Fetching ProxySQL Servers from peer 10.138.244.108:6032 started
    2018-05-10 11:19:51 [INFO] Cluster: Fetching ProxySQL Servers from peer 10.138.244.108:6032 completed
    2018-05-10 11:19:51 [INFO] Cluster: Loading to runtime ProxySQL Servers from peer 10.138.244.108:6032
    2018-05-10 11:19:51 [INFO] Destroyed Cluster Node Entry for host 10.138.148.242:6032
    2018-05-10 11:19:51 [INFO] Cluster: Saving to disk ProxySQL Servers from peer 10.138.244.108:6032
    2018-05-10 11:19:52 [INFO] Cluster: detected a new checksum for proxysql_servers from peer 10.138.180.183:6032, version 6, epoch 1525951191, checksum 0x3D819A34C06EF4EA . Not syncing yet ...
    2018-05-10 11:19:52 [INFO] Cluster: checksum for proxysql_servers from peer 10.138.180.183:6032 matches with local checksum 0x3D819A34C06EF4EA , we won't sync.
    2018-05-10 11:19:52 [INFO] Cluster: closing thread for peer 10.138.148.242:6032
    2018-05-10 11:19:52 [INFO] Cluster: detected a new checksum for proxysql_servers from peer 10.138.244.244:6032, version 4, epoch 1525951163, checksum 0x3D819A34C06EF4EA . Not syncing yet ...
    2018-05-10 11:19:52 [INFO] Cluster: checksum for proxysql_servers from peer 10.138.244.244:6032 matches with local checksum 0x3D819A34C06EF4EA , we won't sync
    ...
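If you want to follow these events without reading the log by eye, the "detected a new checksum" lines are regular enough to parse. The Python sketch below is one way to do it; the regular expression is based only on the lines shown above, so it is an assumption that other ProxySQL versions keep the same wording, and `parse_new_checksum` is a hypothetical helper.

```python
import re

# Matches the "detected a new checksum" lines shown in the log above.
NEW_CHECKSUM = re.compile(
    r"Cluster: detected a new checksum for (?P<table>\w+) "
    r"from peer (?P<peer>[\d.]+:\d+), version (?P<version>\d+), "
    r"epoch (?P<epoch>\d+), checksum (?P<checksum>0x[0-9A-F]+)"
)


def parse_new_checksum(line):
    """Return the fields of a checksum-detection line, or None."""
    match = NEW_CHECKSUM.search(line)
    if match is None:
        return None
    fields = match.groupdict()
    fields["version"] = int(fields["version"])
    fields["epoch"] = int(fields["epoch"])
    return fields


line = ("2018-05-10 11:19:52 [INFO] Cluster: detected a new checksum for "
        "proxysql_servers from peer 10.138.180.183:6032, version 6, "
        "epoch 1525951191, checksum 0x3D819A34C06EF4EA . Not syncing yet ...")
print(parse_new_checksum(line))
```

Lines that do not match, such as the "Fetching" or "Saving to disk" messages, simply return `None`.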

Another example is adding users to the mysql_users table. Remember that these users enable MySQL connections between the application (frontend) and MySQL (backend).

We will add a new username and password on any server; in my test I’ll use node2:

    admin proxysql2 ((none))>INSERT INTO mysql_users(username,password) VALUES ('user1','crazyPassword');
    Query OK, 1 row affected (0.00 sec)

    admin proxysql2 ((none))>LOAD MYSQL USERS TO RUNTIME;
    Query OK, 0 rows affected (0.00 sec)

In the log file from node3, we can see the update immediately:

    [root@proxysql3 ~]# tail /var/lib/proxysql/proxysql.log
    ...
    2018-05-10 11:30:57 [INFO] Cluster: detected a new checksum for mysql_users from peer 10.138.244.108:6032, version 2, epoch 1525951873, checksum 0x2AF43564C9985EC7 . Not syncing yet ...
    2018-05-10 11:30:57 [INFO] Cluster: detected a peer 10.138.244.108:6032 with mysql_users version 2, epoch 1525951873, diff_check 3. Own version: 1, epoch: 1525950968. Proceeding with remote sync
    2018-05-10 11:30:57 [INFO] Cluster: detected a peer 10.138.244.108:6032 with mysql_users version 2, epoch 1525951873, diff_check 4. Own version: 1, epoch: 1525950968. Proceeding with remote sync
    2018-05-10 11:30:57 [INFO] Cluster: detected peer 10.138.244.108:6032 with mysql_users version 2, epoch 1525951873
    2018-05-10 11:30:57 [INFO] Cluster: Fetching MySQL Users from peer 10.138.244.108:6032 started
    2018-05-10 11:30:57 [INFO] Cluster: Fetching MySQL Users from peer 10.138.244.108:6032 completed
    2018-05-10 11:30:57 [INFO] Cluster: Loading to runtime MySQL Users from peer 10.138.244.108:6032
    2018-05-10 11:30:57 [INFO] Cluster: Saving to disk MySQL Query Rules from peer 10.138.244.108:6032
    2018-05-10 11:30:57 [INFO] Cluster: detected a new checksum for mysql_users from peer 10.138.244.244:6032, version 2, epoch 1525951857, checksum 0x2AF43564C9985EC7 . Not syncing yet ...
    2018-05-10 11:30:57 [INFO] Cluster: checksum for mysql_users from peer 10.138.244.244:6032 matches with local checksum 0x2AF43564C9985EC7 , we won't sync.
    2018-05-10 11:30:57 [INFO] Cluster: detected a new checksum for mysql_users from peer 10.138.180.183:6032, version 2, epoch 1525951886, checksum 0x2AF43564C9985EC7 . Not syncing yet ...
    2018-05-10 11:30:57 [INFO] Cluster: checksum for mysql_users from peer 10.138.180.183:6032 matches with local checksum 0x2AF43564C9985EC7 , we won't sync.
    ...

In this example, we will find out what happens if one node is down or has a network glitch or some other issue. I’ll stop ProxySQL node3:

    [root@proxysql3 ~]# service proxysql stop
    Shutting down ProxySQL: DONE!

On ProxySQL node1, we can check that node3 is unreachable:

    [root@proxysql1 ~]# tailf /var/lib/proxysql/proxysql.log
    2018-05-10 11:57:33 ProxySQL_Cluster.cpp:180:ProxySQL_Cluster_Monitor_thread(): [WARNING] Cluster: unable to connect to peer 10.138.244.244:6032 . Error: Can't connect to MySQL server on '10.138.244.244' (107)
    2018-05-10 11:57:33 ProxySQL_Cluster.cpp:180:ProxySQL_Cluster_Monitor_thread(): [WARNING] Cluster: unable to connect to peer 10.138.244.244:6032 . Error: Can't connect to MySQL server on '10.138.244.244' (107)
    2018-05-10 11:57:33 ProxySQL_Cluster.cpp:180:ProxySQL_Cluster_Monitor_thread(): [WARNING] Cluster: unable to connect to peer 10.138.244.244:6032 . Error: Can't connect to MySQL server on '10.138.244.244' (107)

Another check can be run on any ProxySQL node, for example node2:

    admin proxysql2 ((none))>SELECT hostname, checksum, FROM_UNIXTIME(changed_at) changed_at, FROM_UNIXTIME(updated_at) updated_at FROM stats_proxysql_servers_checksums WHERE name='proxysql_servers' ORDER BY hostname;
    +----------------+--------------------+---------------------+---------------------+
    | hostname       | checksum           | changed_at          | updated_at          |
    +----------------+--------------------+---------------------+---------------------+
    | 10.138.180.183 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:39 | 2018-05-10 12:01:59 |
    | 10.138.244.108 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:38 | 2018-05-10 12:01:59 |
    | 10.138.244.244 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:39 | 2018-05-10 11:56:59 |
    +----------------+--------------------+---------------------+---------------------+
    3 rows in set (0.00 sec)

In the previous result, we can see that node3 (10.138.244.244) is not being updated: its updated_at value lags behind the other nodes. This means that node3 is down or unreachable because of a network glitch.
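The same comparison is easy to automate. The Python sketch below flags any node whose updated_at lags behind the freshest node; `stale_peers` is a hypothetical helper, the rows are copied from the result above, and the 60-second threshold is an arbitrary choice for illustration.

```python
from datetime import datetime, timedelta


def stale_peers(rows, max_lag=timedelta(seconds=60)):
    """Return hostnames whose updated_at lags the freshest peer.

    `rows` holds (hostname, updated_at) pairs as returned by the query
    above; `max_lag` is an arbitrary threshold chosen for this sketch.
    """
    fmt = "%Y-%m-%d %H:%M:%S"
    parsed = [(host, datetime.strptime(ts, fmt)) for host, ts in rows]
    newest = max(ts for _, ts in parsed)
    return [host for host, ts in parsed if newest - ts > max_lag]


rows = [
    ("10.138.180.183", "2018-05-10 12:01:59"),
    ("10.138.244.108", "2018-05-10 12:01:59"),
    ("10.138.244.244", "2018-05-10 11:56:59"),
]
print(stale_peers(rows))  # ['10.138.244.244']
```

Comparing against the freshest node rather than the local clock avoids false alarms when the machine running the check has a skewed clock.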

At this point, any change to any of the tables mysql_query_rules, mysql_servers, mysql_users, and proxysql_servers will be replicated only between nodes 1 and 2.

In this next example, while node3 is offline, we will add another user to the mysql_users table.

    admin proxysql2 ((none))>INSERT INTO mysql_users(username,password) VALUES ('user2','passwordCrazy');
    Query OK, 1 row affected (0.00 sec)

    admin proxysql2 ((none))>LOAD MYSQL USERS TO RUNTIME;
    Query OK, 0 rows affected (0.00 sec)

That change was propagated to node1:

    [root@proxysql1 ~]# tail /var/lib/proxysql/proxysql.log
    ...
    2018-05-10 12:12:36 [INFO] Cluster: detected a peer 10.138.244.108:6032 with mysql_users version 3, epoch 1525954343, diff_check 4. Own version: 2, epoch: 1525951886. Proceeding with remote sync
    2018-05-10 12:12:36 [INFO] Cluster: detected peer 10.138.244.108:6032 with mysql_users version 3, epoch 1525954343
    2018-05-10 12:12:36 [INFO] Cluster: Fetching MySQL Users from peer 10.138.244.108:6032 started
    2018-05-10 12:12:36 [INFO] Cluster: Fetching MySQL Users from peer 10.138.244.108:6032 completed
    2018-05-10 12:12:36 [INFO] Cluster: Loading to runtime MySQL Users from peer 10.138.244.108:6032
    2018-05-10 12:12:36 [INFO] Cluster: Saving to disk MySQL Query Rules from peer 10.138.244.108:6032
    ...

We can still see that node3 is out of sync; its last update was about 25 minutes ago.

    admin proxysql2 ((none))>SELECT hostname, checksum, FROM_UNIXTIME(changed_at) changed_at, FROM_UNIXTIME(updated_at) updated_at FROM stats_proxysql_servers_checksums WHERE name='mysql_users' ORDER BY hostname;
    +----------------+--------------------+---------------------+---------------------+
    | hostname       | checksum           | changed_at          | updated_at          |
    +----------------+--------------------+---------------------+---------------------+
    | 10.138.180.183 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:39 | 2018-05-10 12:21:35 |
    | 10.138.244.108 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:38 | 2018-05-10 12:21:35 |
    | 10.138.244.244 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:39 | 2018-05-10 12:21:35 |
    +----------------+--------------------+---------------------+---------------------+
    3 rows in set (0.00 sec)

Let’s start node3 and check whether the sync works. node3 should connect to the other nodes and fetch the latest changes.

    [root@proxysql3 ~]# tail /var/lib/proxysql/proxysql.log
    ...
    2018-05-10 12:30:02 [INFO] Cluster: detected a peer 10.138.244.108:6032 with mysql_users version 3, epoch 1525954343, diff_check 3. Own version: 1, epoch: 1525955402. Proceeding with remote sync
    2018-05-10 12:30:02 [INFO] Cluster: detected a peer 10.138.180.183:6032 with mysql_users version 3, epoch 1525954356, diff_check 3. Own version: 1, epoch: 1525955402. Proceeding with remote sync
    …
    2018-05-10 12:30:03 [INFO] Cluster: detected peer 10.138.180.183:6032 with mysql_users version 3, epoch 1525954356
    2018-05-10 12:30:03 [INFO] Cluster: Fetching MySQL Users from peer 10.138.180.183:6032 started
    2018-05-10 12:30:03 [INFO] Cluster: Fetching MySQL Users from peer 10.138.180.183:6032 completed
    2018-05-10 12:30:03 [INFO] Cluster: Loading to runtime MySQL Users from peer 10.138.180.183:6032
    2018-05-10 12:30:03 [INFO] Cluster: Saving to disk MySQL Query Rules from peer 10.138.180.183:6032

Looking at the status from the checksum table, we can see node3 is now up to date.

    admin proxysql2 ((none))>SELECT hostname, checksum, FROM_UNIXTIME(changed_at) changed_at, FROM_UNIXTIME(updated_at) updated_at FROM stats_proxysql_servers_checksums WHERE name='mysql_users' ORDER BY hostname;
    +----------------+--------------------+---------------------+---------------------+
    | hostname       | checksum           | changed_at          | updated_at          |
    +----------------+--------------------+---------------------+---------------------+
    | 10.138.180.183 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:39 | 2018-05-10 12:21:35 |
    | 10.138.244.108 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:38 | 2018-05-10 12:21:35 |
    | 10.138.244.244 | 0x3D819A34C06EF4EA | 2018-05-10 11:19:39 | 2018-05-10 12:21:35 |
    +----------------+--------------------+---------------------+---------------------+
    3 rows in set (0.00 sec)

    admin proxysql2 ((none))>SELECT hostname, checksum, FROM_UNIXTIME(changed_at) changed_at, FROM_UNIXTIME(updated_at) updated_at FROM stats_proxysql_servers_checksums WHERE name='mysql_users' ORDER BY hostname;
    +----------------+--------------------+---------------------+---------------------+
    | hostname       | checksum           | changed_at          | updated_at          |
    +----------------+--------------------+---------------------+---------------------+
    | 10.138.180.183 | 0x3928F574AFFF4C65 | 2018-05-10 12:12:24 | 2018-05-10 12:31:58 |
    | 10.138.244.108 | 0x3928F574AFFF4C65 | 2018-05-10 12:12:23 | 2018-05-10 12:31:58 |
    | 10.138.244.244 | 0x3928F574AFFF4C65 | 2018-05-10 12:30:19 | 2018-05-10 12:31:58 |
    +----------------+--------------------+---------------------+---------------------+
    3 rows in set (0.00 sec)
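A quick way to confirm convergence is to check that every node reports the same checksum for a table. The Python sketch below does this for the last result above; `cluster_in_sync` is a hypothetical helper, and the rows are copied from that result.

```python
def cluster_in_sync(rows):
    """True when every node reports the same checksum for a table.

    `rows` holds (hostname, checksum) pairs for a single table name.
    """
    checksums = {checksum for _, checksum in rows}
    return len(checksums) == 1


rows = [
    ("10.138.180.183", "0x3928F574AFFF4C65"),
    ("10.138.244.108", "0x3928F574AFFF4C65"),
    ("10.138.244.244", "0x3928F574AFFF4C65"),
]
print(cluster_in_sync(rows))  # True
```

Matching checksums alone do not prove that a node is reachable, since an offline node keeps its last known checksum, so this check is best combined with a look at updated_at.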

Now we have 3 ProxySQL nodes up to date. This example didn’t add any MySQL servers, hostgroups, etc., because the functionality is the same. The post is intended as an introduction to this new feature and how you can create and test a ProxySQL cluster.

Just remember that this is still an experimental feature and is subject to change with newer versions of ProxySQL.

This feature is really useful if you run more than one ProxySQL instance for the same application. It makes the nodes easy for a single person to configure and maintain, and new nodes are easy to create and attach.

Hope you find this post helpful!

http://www.proxysql.com/blog/proxysql-cluster

http://www.proxysql.com/blog/proxysql-cluster-part2

http://www.proxysql.com/blog/proxysql-cluster-part3-mysql-servers

https://github.com/sysown/proxysql/wiki/ProxySQL-Cluster
