This is a followup to my previous post Apache Spark with Air ontime performance data.

To recap an interesting point in that post: when using 48 cores on the server, the result was worse than with 12 cores. I wanted to understand why, so I started digging. My primary suspicion was that Java (I never trust Java) was not good at dealing with 100GB of memory.

There are a few links pointing to the potential issues with a huge HEAP:

http://stackoverflow.com/questions/214362/java-very-large-heap-sizes

https://blog.codecentric.de/en/2014/02/35gb-heap-less-32gb-java-jvm-memory-oddities/

Following the last article’s advice, I ran four instances of Spark’s slaves. This is an old technique to better utilize resources, as often (as is well known from old MySQL times) one instance doesn’t scale well.

I added the following to the config:

```shell
export SPARK_WORKER_INSTANCES=4
export SPARK_WORKER_CORES=12
export SPARK_WORKER_MEMORY=25g
```
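The per-worker values are just the server totals divided by the number of instances: 48 cores and roughly 100GB split four ways. Below is a minimal sketch of that arithmetic, assuming the settings live in Spark's `conf/spark-env.sh`. The `TOTAL_CORES`, `TOTAL_MEMORY_GB` and `INSTANCES` variables are hypothetical helpers introduced for illustration; they are not Spark settings.

```shell
# Hypothetical helper variables (not Spark settings): totals for the server in this post.
TOTAL_CORES=48
TOTAL_MEMORY_GB=100
INSTANCES=4

# Give each worker instance an equal share (shell integer division).
export SPARK_WORKER_INSTANCES=$INSTANCES
export SPARK_WORKER_CORES=$((TOTAL_CORES / INSTANCES))
export SPARK_WORKER_MEMORY="$((TOTAL_MEMORY_GB / INSTANCES))g"

echo "$SPARK_WORKER_INSTANCES workers, $SPARK_WORKER_CORES cores and $SPARK_WORKER_MEMORY each"
```

With these totals the script yields the same 4 x 12 cores x 25g layout as the config above. Changing `INSTANCES` is a quick way to try other splits, as long as the totals divide evenly.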

The full description of the test can be found in my previous post, Apache Spark with Air ontime performance data.

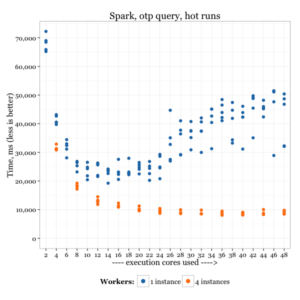

The results:

Although the results for four instances still don’t scale much after using 12 cores, at least there is no extra penalty for using more.

It could be that the dataset is just not big enough to show the setup’s full potential.

I think there is a clear indication that with the 25GB HEAP size, Java performs much better than with 100GB – at least with Oracle’s JDK (there are comments that a third-party commercial JDK may handle this better).

This is something to keep in mind when running Java-based software (like Apache Spark) on high-end servers.