Say you have a cluster with 3 nodes using Percona XtraDB Cluster (PXC) 5.6 and one asynchronous replica connected to node1. If asynchronous replication is using GTIDs, moving the replica so that it is connected to node2 is trivial, right? Actually replication can easily break for reasons that may not be obvious at first sight.

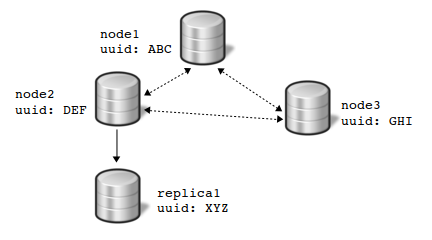

Let’s assume we have the following setup with 3 PXC nodes and one asynchronous replica:

Regarding MySQL GTIDs, a Galera cluster behaves like a distributed master: transactions coming from any node will use the same auto-generated uuid. This auto-generated uuid is related to the Galera uuid; it is neither ABC, nor DEF, nor GHI, the server uuids of the individual nodes.

Transactions executed for instance on node1 but not replicated to all nodes with Galera replication will generate a GTID using the uuid of the node (ABC). This can happen for writes on MyISAM tables if wsrep_replicate_myisam is not enabled.

Such local transactions bring the same potential issues as errant transactions do for a regular master-slave setup using GTID-based replication: if node3 has a local transaction, when you connect replica1 to it, replication may break instantly.

So do not assume that moving replica1 from node2 to node3 is a safe operation if you don’t check errant transactions on node3 first.

And if you find errant transactions that you don’t want to get replicated to replica1, there is only one good fix: insert a corresponding empty transaction on replica1.
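As a minimal sketch of that fix, the helper below builds the statements you would run on replica1 to record an errant GTID as executed without applying any change. The function name `empty_transaction_sql` is hypothetical, and the GTID in the usage line is only an illustration reusing the node1 value shown later in this post; check the generated statements before running them on a real replica.

```python
import re

# A single GTID: a uuid as source_id, a colon, then one transaction number.
GTID_RE = re.compile(
    r"^[0-9a-fA-F]{8}(-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}:[1-9][0-9]*$"
)


def empty_transaction_sql(gtid):
    """Return the statements that commit an empty transaction under `gtid`."""
    if not GTID_RE.match(gtid):
        raise ValueError("expected <source_id:trx_number>, got %r" % gtid)
    return [
        "SET GTID_NEXT = '%s';" % gtid,  # next transaction takes this GTID
        "BEGIN;",
        "COMMIT;",                       # nothing in between: an empty transaction
        "SET GTID_NEXT = 'AUTOMATIC';",  # back to normal GTID generation
    ]


# Illustrative usage: print the statements for one errant GTID.
print("\n".join(empty_transaction_sql("03c236a0-f860-11e3-9b80-9cebe8067a3f:1")))
```

Once the empty transaction is committed, the GTID is part of gtid_executed on replica1, so the replica will not ask for it when it connects to the new node.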

Both kinds of GTIDs are using the same format: <source_id:trx_number>.

For Galera, <source_id> is generated when the cluster is bootstrapped. This <source_id> is shared by all nodes.

For MySQL, <source_id> is the server uuid. So it is easy to identify from which server a transaction originates.

Knowing the Galera GTID of a transaction will give you no clue about the corresponding MySQL GTID of the same transaction, and vice versa. You should simply consider them as separate identifiers.

What can be surprising is that writing to node1 will generate a MySQL GTID where <source_id> is not the server uuid:

```
node1> select @@server_uuid;
+--------------------------------------+
| @@server_uuid                        |
+--------------------------------------+
| 03c236a0-f860-11e3-9b80-9cebe8067a3f |
+--------------------------------------+

node1> select @@global.gtid_executed;
+------------------------------------------+
| @@global.gtid_executed                   |
+------------------------------------------+
| b1f3f33a-0789-ee1c-43f3-f8373e12f1ea:1   |
+------------------------------------------+
```

Even more surprising is that if you write to node2, you will see a single GTID set as if both transactions had been executed on the same server:

```
node2> select @@global.gtid_executed;
+------------------------------------------+
| @@global.gtid_executed                   |
+------------------------------------------+
| b1f3f33a-0789-ee1c-43f3-f8373e12f1ea:2   |
+------------------------------------------+
```

Actually this is reasonable: the cluster acts as a distributed master regarding MySQL replication, so it makes sense that all nodes share the same <source_id>.

And by the way, if you are puzzled about how this ‘anonymous’ <source_id> is generated, look at this:

```
mysql> show global status like 'wsrep_local_state_uuid';
+------------------------+--------------------------------------+
| Variable_name          | Value                                |
+------------------------+--------------------------------------+
| wsrep_local_state_uuid | 4e0c0cc5-f876-11e3-bc0c-07c8c1ed0e15 |
+------------------------+--------------------------------------+

node1> select @@global.gtid_executed;
+------------------------------------------+
| @@global.gtid_executed                   |
+------------------------------------------+
| b1f3f33a-0789-ee1c-43f3-f8373e12f1ea:1   |
+------------------------------------------+
```

If you ‘sum’ the two uuids (the Galera wsrep_local_state_uuid and the MySQL <source_id>), you will get ffffffff-ffff-ffff-ffff-ffffffffffff.
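Put differently, every bit of one uuid is flipped in the other. The short check below works only on the two values shown above; the helper name `complement_uuid` is hypothetical, and it illustrates the observed relationship rather than how the server computes the id internally.

```python
import uuid


def complement_uuid(u):
    """Flip every bit of a 128-bit uuid given as a string."""
    return uuid.UUID(int=uuid.UUID(u).int ^ ((1 << 128) - 1))


galera_uuid = "4e0c0cc5-f876-11e3-bc0c-07c8c1ed0e15"      # wsrep_local_state_uuid
mysql_source_id = "b1f3f33a-0789-ee1c-43f3-f8373e12f1ea"  # from gtid_executed

# The 'sum' of both values is 128 bits all set to 1, i.e. ffffffff-ffff-...
total = uuid.UUID(galera_uuid).int + uuid.UUID(mysql_source_id).int
print(uuid.UUID(int=total))

# Equivalently, the MySQL source_id is the bitwise complement of the Galera uuid.
print(str(complement_uuid(galera_uuid)) == mysql_source_id)
```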

Now the question is: given that any transaction executed on any node of the cluster is replicated to all nodes, how can a local transaction (a transaction only found on one node) appear?

The most common reason is probably a write on a MyISAM table if wsrep_replicate_myisam is not enabled, simply because writes on MyISAM tables are not replicated by Galera by default:

```
# Node1
mysql> insert into myisam_table (id) values (1);
mysql> select @@global.gtid_executed;
+----------------------------------------------------------------------------------+
| @@global.gtid_executed                                                           |
+----------------------------------------------------------------------------------+
| 03c236a0-f860-11e3-9b80-9cebe8067a3f:1, b1f3f33a-0789-ee1c-43f3-f8373e12f1ea:1-8 |
+----------------------------------------------------------------------------------+

# Node2
mysql> select @@global.gtid_executed;
+------------------------------------------+
| @@global.gtid_executed                   |
+------------------------------------------+
| b1f3f33a-0789-ee1c-43f3-f8373e12f1ea:1-8 |
+------------------------------------------+
```

As you can see the GTID of the local transaction on node1 uses the uuid of node1, which makes it easy to spot.
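That check is easy to script. The sketch below assumes you have already fetched @@global.gtid_executed and @@server_uuid from a node as plain strings; the function names `split_gtid_set` and `local_transactions` are hypothetical. It simply looks for a <source_id> equal to the node's own server uuid.

```python
def split_gtid_set(gtid_set):
    """Map each source_id of a gtid_executed string to its interval string."""
    result = {}
    # The mysql client may print one source_id per line, so drop newlines first.
    for part in gtid_set.replace("\n", "").split(","):
        part = part.strip()
        if not part:
            continue
        source_id, _, intervals = part.partition(":")
        result[source_id.lower()] = intervals
    return result


def local_transactions(gtid_executed, server_uuid):
    """Return the intervals logged under the node's own uuid, or None."""
    return split_gtid_set(gtid_executed).get(server_uuid.lower())


# Values taken from the node1 output above.
node1_executed = (
    "03c236a0-f860-11e3-9b80-9cebe8067a3f:1, "
    "b1f3f33a-0789-ee1c-43f3-f8373e12f1ea:1-8"
)
print(local_transactions(node1_executed, "03c236a0-f860-11e3-9b80-9cebe8067a3f"))
```

A result other than `None` means the node has transactions that the rest of the cluster does not know about.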

Are there other statements that can create a local transaction? Actually we found that this is also true for FLUSH PRIVILEGES, which is tracked in the binlogs but not replicated by Galera. See the bug report.

As you probably know, these local transactions can hurt when you connect an async replica to a node that has one: the replication protocol used by GTID-based replication will make sure that the local transaction is fetched from the binlogs and executed on the async replica. But of course, if the transaction is no longer in the binlogs, that triggers a replication error!
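To anticipate that error before repointing the replica, you can compare the candidate node's gtid_purged with the replica's gtid_executed: any purged GTID that the replica has not executed can no longer be served from the binlogs. The sketch below assumes both values were fetched as strings; the function names are hypothetical, and the scenario in the usage lines (node1's local transaction already purged) is made up for illustration. It expands intervals into Python sets, which is fine for a quick check but not meant for huge GTID ranges.

```python
def parse_gtid_set(text):
    """Parse 'uuid:1-3:5, uuid2:7' into {uuid: set of transaction numbers}."""
    result = {}
    for part in text.replace("\n", "").split(","):
        part = part.strip()
        if not part:
            continue
        source_id, *intervals = part.split(":")
        numbers = result.setdefault(source_id.lower(), set())
        for interval in intervals:
            first, _, last = interval.partition("-")
            numbers.update(range(int(first), int(last or first) + 1))
    return result


def missing_purged(source_purged, replica_executed):
    """Return purged GTIDs that the replica has not executed yet."""
    purged = parse_gtid_set(source_purged)
    executed = parse_gtid_set(replica_executed)
    missing = {}
    for source_id, numbers in purged.items():
        gap = numbers - executed.get(source_id, set())
        if gap:
            missing[source_id] = sorted(gap)
    return missing


# Hypothetical scenario: the local transaction on node1 was purged from its binlogs.
purged_on_node1 = "03c236a0-f860-11e3-9b80-9cebe8067a3f:1"
executed_on_replica = "b1f3f33a-0789-ee1c-43f3-f8373e12f1ea:1-8"
print(missing_purged(purged_on_node1, executed_on_replica))
```

An empty result means every purged transaction is already on the replica; anything else is a GTID the replica would request and the node could not deliver.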

I will repeat here what I always say about MySQL GTIDs: it is a great feature, but replication works a bit differently from regular position-based replication. So make sure you understand the main differences; otherwise it is quite easy to get confused.