Today’s blog post diving into the waters of the MySQL buffer pool is a cross-post from Groupon’s engineering blog, and is Part 1 of 2. Thank you to Kyle Oppenheim at Groupon for contributing to this project and post. We’ll be posting Part 2 on Thursday. I’ll be at the Percona Live MySQL Conference and Expo next week in Santa Clara, California so look for me there – I’d love to connect and talk more about MySQL buffer pools or anything else that’s on your mind!

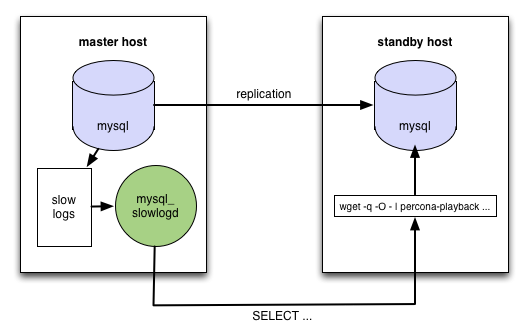

There are numerous solutions for MySQL high availability. Many rely on MySQL’s asynchronous replication to maintain a warm standby server which is flipped into service if the active master server has an issue. At Groupon, our standard MySQL database configuration follows this active/passive pattern. There is a virtual IP that points to the active server of a pair. The passive server has mysqld running and replicating from the active master. In theory, failing over is the simple matter of moving the virtual IP. In practice, it’s slightly more complicated. To manage this process, we use a tool co-developed with Percona.

“Warm standby server”? Did you catch that? What does that mean? In most of these high-availability solutions it means that mysqld is running on the standby server and that replication is not lagging. Unfortunately, that’s often not sufficient for failover during peak traffic. The standby server does not process query traffic except for replication. The buffer pool and adaptive hash index on the standby server will not have recently accessed pages. When it starts handling queries after failover, the lower cache hit rates can lead to outages. Specifically, at Groupon, our servers would be heavily I/O bound after failover as the buffer pool pages were loaded from disk.

Working with Groupon, we have developed a solution to keep the standby server’s caches hot. (See my Fosdem 2013 slides for information about discarded designs and benchmarks.)

First, we set long_query_time to 0 in order to log every query. (See part two for handling massive slow log volume.) The slow logs are served, via HTTP, by mysql_slowlogd. This daemon is similar to running tail -f slow.log, except that it knows how to follow the log stream across log rotation events. On the standby server, the logs are replayed with Percona Playback by streaming the slow log from the active server.

|

1 |

wget -q -O - http://master_server:3307/slow | percona-playback --mysql-host 127.0.0.1 --mysql-username playback --mysql-password PaSSwOrd --mysql-schema schema_name --query-log-stdin --dispatcher-plugin thread-pool --thread-pool-threads-count 100 --session-init-query "set innodb_fake_changes=1" > /var/log/playback.log 2>&1 & |

Our awesome development team added a few features to Percona Playback to make it work better for this use case. You will need version 0.6 or later to get these features. Be aware that playback output is really verbose, in production, most likely you want it to redirect to /dev/null, and only have a log file for debugging purposes.

We benchmarked with slow query logs captured from our production systems. We restored a production database backup to our test database so that our test database was consistent before applying the captured query traffic. This is an important step because update statements that match no rows or insert statements that have duplicate key errors may be faster than an actual database write.

The slow logs were split into chunks, each containing roughly 1M queries. We warmed the cold database with the first chunk and replayed the second chunk after the warmup.

The y axis is logarithmic, so the difference between the IO usage is 2 orders of magnitude. All graphs looked like this (we did 39 measurements), the next graph shows chunk 4’s workload warmed up with chunk 3.

The result is similar for every single graph, each chunk warmed up the buffer pool for the next one.

As an additional experiment we tried replaying the same chunk again. We expected everything to be cached if we warmed the cache with the exact same data. All the graphs from such self-warming experiments look like this one. The green part of the graph lines up with the blue part.

Check back Thursday for Part 2!

Nice post, Petya. Looking forward to read the second part

What is the performance impact from writing every query to disk? I see the daemon does sampling which I was about to ask about while reading the article.

‘@Joshua, writing every query to disk can be very expensive. At Groupon, we write slow logs to a different disk array on our large databases. So this wasn’t as scary as it may be in your situation. We are also using the slow log rate limiting feature in Percona Server — https://www.percona.com/doc/percona-server/5.5/diagnostics/slow_extended_55.html.

Rotating the large slow logs requires some special consideration. We will cover that in part 2.

Hi Peter,

Congratulation to the nice post and good luck for the show on next week!

nice post.

waiting for part 2.

Peter,

e are building a dev system that does something similar, and I have had a lot of success writing sql queries (taken through Proxy at this stage) to a fifo buffer, and reading via a small seperate app from that, passing down the line to a RabbitMQ server. This appears to have minimal impact on the actual DB system, and gets around the IO issues on the server box.

may help… may not…

Peter C

Really interesting post Peter – can’t say I understood all of it!

What’s the link to part two? Thanks