Suppose you have turned on innodb_file_per_table (which means that each table has its own tablespace), and you have to drop tables in a background every hour or every day. If its once every day then you can probably schedule the table dropping process to run during off-peak hours. But I have seen cases where the tables had to be dropped more frequently (like every other hour), or when there was no such thing as off-peak hours, in such cases you need consistent performance. But dropping tables is known to cause stalls that exhibit themselves as reduced QPS during the table drops or stalls. Percona Server since version 5.1 has a feature know as “Lazy Drop Table” that alleviates the problem to a great extent but does not get rid of it completely. In the new releases of MySQL (versions >= 5.5.23) work has been done on reducing the stalls caused by DROP TABLE, these have been done in the form of fixes for bug 51325 and bug 64284.

So what is this blog post about? In this blog post I am going to give a high level picture of how Lazy Drop Table works and how the new DROP Table works in MySQL >= 5.5.23. After the explanation I will share the results of benchmarks that I ran to compare both the implementations. Note that whatever we are discussing here holds for when you are using innodb_file_per_table.

Before going into showing how Lazy Drop Table and the new DROP Table in MySQL >= 5.5.23 work, I would like to mention quickly, that how drop table in MySQL < 5.5.23 used to work. When dropping the table, a mutex on bufferpool would be held for the entire operation and then two scans of the LRU list would be done invalidating pages that belong to the table being dropped. Obviously this is going to take time which depends on the number of pages in the buffer pool at that time, and the longer this takes, the more stalls you have because, importantly, the bufferpool mutex is held for the entire duration. Now let’s keep this old behavior of DROP TABLE as a baseline, and let’s continue to look at the implementations.

The main function that is responsible for cleaning the bufferpool in the event of drop table is buf_LRU_mark_space_was_deleted(space_id), here space_id is the id of the tablespace corresponding to the table being dropped. Following are the steps performed in this function:

The main function that is responsible for cleaning the bufferpool is buf_LRU_flush_or_remove_pages(space_id, BUF_REMOVE_FLUSH_NO_WRITE). Here space_id is the id of the tablespace corresponding to the table being dropped and BUF_REMOVE_FLUSH_NO_WRITE is a flag that is passed to this function meaning that only the entries in the flush_list should be removed. Following are the steps performed in this function:

Ok, now that I have shared a high level picture of both the implementations let’s take a look at the most important and major differences. You can see that the most important and the major differences in the two implementations is the use of buffer pool mutex in Oracle MySQL, this mutex is not used by “Lazy Drop Table” implementation. While the MySQL’s version of drop table relies on taking the buffer pool mutex and a mutex on the flush list, “Lazy Drop Table” implementation relies on taking a mutex on the LRU list (which is a list of pages ordered by their last access time) and a shared reader-writer lock on the Adaptive Hash Index latch. The buffer pool mutex is a major point of contention as it is taken at a lot of different places, while the affect of LRU list mutex is cheaper in comparison. However, a lock on the AHI latch can cause contention for writes, since its a shared lock, so while read queries can still access AHI, write queries would have to wait till the latch is unlocked.

Now about the improvements in Oracle MySQL’s implementation. The good thing is that there is no scan of the LRU list, and pages belonging to the tablespace being dropped are left alone in the LRU list and are evicted by the normal LRU process when these pages age towards the tail of the LRU. The other good thing is that in Oracle MySQL’s implementation the buffer pool mutex and flush list mutex are released after every 1024 pages being evicted. Both these things are an improvement over the previous implementation of DROP TABLE, but do they make enough difference. I feel that the buffer pool mutex and flush list mutex is still going to cause stalls. Well only benchmarks can give us the true picture.

I have tried to be realistic with the benchmarks. I have not tried to create-and-drop tables as fast as I can in the background, as that is not a realistic benchmark. In real life workload we will not be doing create/drop as fast as possible. Neither would I be looking at QPS of the entire benchmark as a single number, as what most people are often concerned about is uniform performance over a period of time. I would also like to point out one thing about benchmarks – we have been always advising people to look beyond average performance numbers because they almost never really matter in production, it is not a question if average performance is bad but what stalls and pileups you have.

Ok now some details about the benchmark.

First of all before running the benchmark, 30 tables were created and populated with 1000 rows, the tables all had a very simple structure:

|

1 |

CREATE TABLE t_x(i int(11) not null auto_increment primary key) |

And during the benchmark, these 30 tables were then dropped at 30 second intervals in the background, while in the foreground sysbench was run for 15 minutes with 8 threads which would execute read/write queries against a 20M rows table:

|

1 |

sysbench --test=oltp --oltp-table-size=20000000 --mysql-table-engine=innodb --mysql-socket=/tmp/mysql_sandbox5524.sock --mysql-db=test --oltp-table-name=sbtest_20m --max-time=900 --num-threads=8 --max-requests=0 run |

Note that the tables that were being dropped had been initialized before the sysbench run, so that the table creation time does not skew the results of the benchmark. During the entire run of the benchmark, all the queries were captured in the slow log by setting long_query_time to 0. Then for analysis purposes the queries in the slow log were grouped by their timestamp, giving us a QPS no. for every second of the benchmark run. Finally, average QPS was taken per every five seconds.

The size of the dataset used is 4.6G, while the buffer pool size is 6G. The value of innodb_max_dirty_pages_pct was set at 90, while the InnoDB log files were sized at 4G. The configuration and dataset sizes were chosen, so that the dataset completely fits in memory, and to prevent checkpointing from skewing the benchmark results. Prior, to running the benchmark, I also made sure that the buffer pool was completely warmed up with the dataset that sysbench will be using entirely in memory. Furthermore I filled up the bufferpool enough to make sure that the server represents one that is in active use. The buffer pool was filled up with 315K pages out of which ~100K were dirty.

Ok now some details about the hardware. The host has a CPU with two cores, 8G of memory and a software RAID5 array consisting of 4 disks.

The version of MySQL used for benchmarking pre 5.5.23 DROP TABLE implementation is MySQL 5.5.15, the version of MySQL used for benchmarking the new DROP TABLE implementation is MySQL 5.5.24, and the version of Percona Server used for benchmarking Lazy Drop Table is Percona Server 5.5.24 (innodb_lazy_drop_table=1)

Now so much for the details of the benchmark, lets see the graphs.

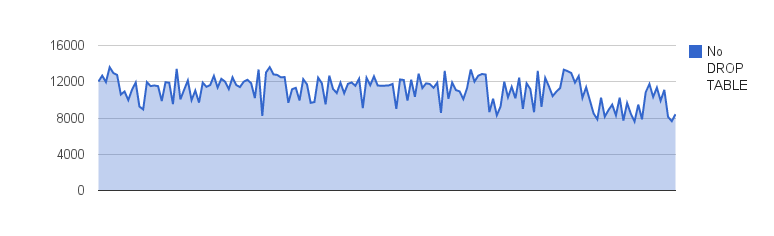

First of all comes the benchmark results showing the QPS over 5 second periods when “NO” DROP TABLE is running in the background:

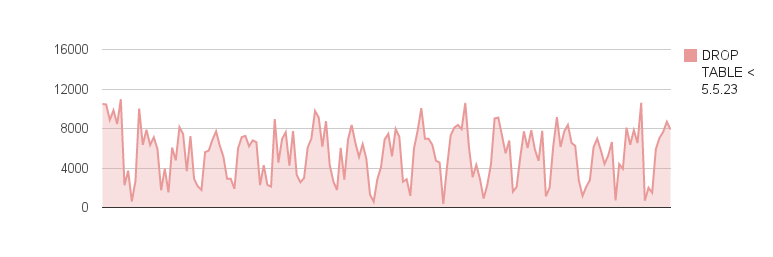

Next comes the benchmark results showing the QPS over 5 second periods with the old DROP TABLE implementation (pre MySQL 5.5.23) and DROP TABLE running in the background:

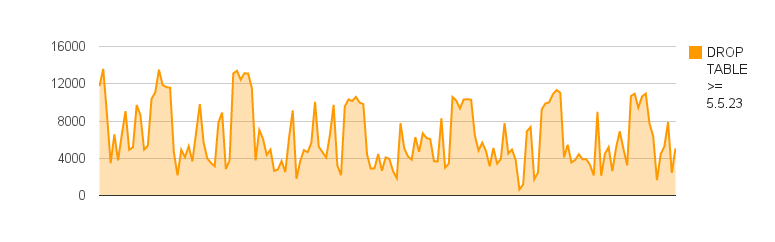

Next comes the benchmark results showing the QPS over 5 second periods with the new DROP TABLE implementation (MysQL version >= 5.5.23) and DROP TABLE running in the background:

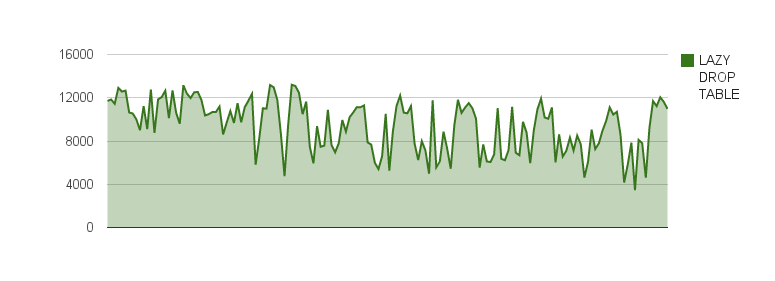

Finally comes the benchmark results showing the QPS over 5 second periods with the Lazy Drop Table implementation in Percona Server and DROP TABLE running in the background:

So we can compare the graphs above, and see that there are dips in QPS whenever table is dropped, MySQL 5.5.23 does show an improvement over MySQL version prior to 5.5.23, as the dips are not as regular and as low as in the old implementation of drop table, but the “Lazy Drop Table” implementation wins here. The dips are there in the case of “Lazy Drop Table” but the dips in QPS are not as low or as pronounced as compared to MySQL.

The new DROP TABLE related fixes in MySQL >= 5.5.23 have certainly reduced the performance hit, and the performance dips are not as pronounced as in older versions of MySQL. However, Percona Server with “Lazy Drop Table” still fares a lot better than MySQL. The dips are there, but not as low as in MySQL, though there is still room for improvement. I certainly like the idea of not scanning the LRU list at all during the DROP TABLE, and I think this is something that could be implemented in “Lazy Drop Table” to further improve the overall performance during background DROP TABLE operations.

Ovais, please confirm that you were using at least four buffer pools for this test.

One key purpose of multiple buffer pools is to reduce contention on the buffer pool mutex and four to eight is likely to be a sensible value for a server with a buffer pool in the six gigabyte range. Possibly more where buffer pool mutex contention is believed to be a serious issue for the workload. In general, tests that have not verified no benefit from multiple buffer pools and which use only one should be viewed with caution. The actual optimal value should be determined with tests, four to eight is just a range that is likely to be reasonable.

Nice to see that you took some care to load the buffer pool and flush list. Many people may have missed doing that.

Apparently not using multiple buffer pools is one of the most commonly seen gaps in the settings described in non-Oracle reports, which are often in-memory workloads where the mutex can be very significant. While the difference will not always be important, it generally causes me to use considerable caution when evaluating the results. Others should not take this reservation as an assertion that it will make a substantial difference to the results in this report, I have not specifically evaluated that, merely noted that I use care when I see it not mentioned or not in use.

This is just my opinion. For an official view of Oracle, consult a PR person.

James Day, MySQL Senior Principal Support Engineer, Oracle

It’s hard for me to see where the DROP TABLE really happened. Perhaps you can annotate the graphs with vertical lines to indicate these events.

This is very interesting, and I’ve always wondered why DROP TABLE doesn’t just mark pages (which completely belong to said table) as “good for recycling” in the pool without placing so many locks.

I am using Percona Server 5.1 extensively, and am surprised to learn of this feature. I wasn’t aware of it, as my DROP TABLE operations perform badly. Anything to set up in advance for it to work?

Scanning the LRU for DROP TABLE is inefficient when pages from other tables are on the LRU. AFAIK, the only table in use for this benchmark was the dropped table. I don’t think this is realistic and the results don’t surprise me.

The overhead for lazy drop table is a function of the length of the LRU. The overhead for new drop table is a function of the length of the flush list. Each have additional overheads because work is done for each page encountered from the dropped table.

For this test the ratio of LRU to flush list lengths is 3:1 and all pages on each are from the dropped table. I doubt this reflects the typical drop table and it minimizes the overhead from scanning the LRU. Your test makes lazy drop table look better than it will in production.

For servers I support the ratio is usually 10:1 and the dropped table is never the majority of pages in the flush list.

Repeating the test with a larger LRU, multiple tables in the buffer pool and on a modern server (2-sockets, NUMA) would be interesting to me.

I also think the base case (no drop table) should be reported for each binary.

‘@James,

I have use a single buffer pool instance for the test. All the versions of MySQL used in the test had InnoDB configured with a single buffer pool instance, so its all even in that respect. Yes multiple buffer pool instances provide general scalability improvements, and reduce contention, but the contention on the buffer pool mutex is still going to be there for the buffer pool which is having its’ pages scanned. You might also compare this benchmark to a case where you have a 24G bufferpool configured with 4 buffer pool instances, in that case the contention is going to be similar to what is shown in the tests here. I also do not think that having too many buffer pool instances is a realistic approach, but that I think would be a topic for a separate blog post 🙂

‘@Baron Schwartz,

Ok as soon as I get time I will change the graphs and add vertical lines corresponding to where the table drops occurred.

‘@Shlomi,

You have to setup a config variable for the “lazy drop table” feature to work. The variable to set is “innodb_lazy_drop_table”: https://www.percona.com/docs/wiki/percona-server:features:misc_system_variables#innodb_lazy_drop_table

Its a dynamic variable as well, so you can turn this feature on without having to restart mysqld:

SET GLOBAL innodb_lazy_drop_table=1;

‘@Mark,

I think I might not have explained properly the tables involved in the benchmark and how they were dropped. So first of all there is this table “sbtest_20m” which was populated with 20M rows using sysbench, and there are 30 other tables each with 1000 rows that have been created prior to the benchmark. Now when the benchmark is done, each one of those 30 tables is dropped by a background process at 30 second intervals, and in the foreground we have sysbench (8 threads) running queries against the table “sbtest_20m”. So what this means is that we have pages from many tables both in the LRU list and in the flush list, and the vast majority of the pages in the LRU list or the flush list belong to the table “sbtest_20m” and not to the tables being dropped.

Am I clear now with how the tests were run? Do you still think the benchmark is not realistic?

Sounds better although I think the LRU:flush_list ratio should still be made smaller than 3:1. Shutdown takes too long for huge buffer pools with 30% dirty pages.

You probably motivated Dimitri to run a round of tests and figure things out. So we will learn more.

Mark,

You are right that this many dirty pages would slow down the shutdown, but we could always decrease the value of innodb_max_dirty_pages_pct to reduce the no. of dirty pages or may be use a feature available in Percona Server https://www.percona.com/doc/percona-server/5.5/scalability/innodb_io_55.html#innodb_checkpoint_age_target.

To me LRU_list to flush_list ratio of 3:1 looked just the right ratio to me that would be somewhere in between a mixed workload and a write heavy workload. I do know many MySQL users who are write heavy. But yes I should probably do another benchmark with a bigger iron and a 5:1 LRU_list to flush_list ratio.

I hope I motivated Dimitri enough 🙂

Ovais, lets consider what happens with a single buffer pool first. If it is locked, no accesses work. So you expect to see a drop to close to zero throughput while the lock is in place. Compare this to the graph you have provided for 5.5.23, which shows regular drops to low throughput.

Now consider what happens when there are four buffer pools. When one is locked, accesses to the other three continue as normal. This reduces the peak drop from 100% to 25%. Now consider ten pools, the maximum drop during a lock of one is ten percent.

Lets consider two of the comparisons you have specified:

1. ‘While the MySQL’s version of drop table relies on taking the buffer pool mutex and a mutex on the flush list, “Lazy Drop Table” implementation relies on taking a mutex on the LRU list’

2. ‘Now about the improvements in Oracle MySQL’s implementation. … The other good thing is that in Oracle MySQL’s implementation the buffer pool mutex and flush list mutex are released after every 1024 pages being evicted. Both these things are an improvement over the previous implementation of DROP TABLE, but do they make enough difference. I feel that the buffer pool mutex and flush list mutex is still going to cause stalls.’

I find your reply to my question to be quite refreshing and commend you for being willing to make your post a transparent example.

Views are my own, not those of Oracle. If you want an official view of Oracle, consult a PR person.

James Day, MySQL Senior Principal Support Engineer, Oracle

Ovais,

Are we sure that the buffer pool mutex is the bottleneck? Have you collected ‘show engine innodb mutex’ output to verify which mutex is the hottest one?

If it is the buffer pool mutex then I believe we already have a solution for this in MySQL 5.6. We have introduced an array of rw-locks to control access to the buffer pool pages. For workloads like the one you described i.e.: in-memory workload with no LRU eviction happening, access to a page in buffer pool won’t need acquisition of buffer pool mutex in MySQL 5.6.

regards,

inaam

I think the most important metric is the max stall during DROP TABLE. Prior to getting any fixes from Oracle or Percona it was pretty bad. When I measured performance for my variation of the DROP TABLE improvement, I only measured throughput. I should have measured max stall duration too. Perhaps you can learn from my mistake. Without a fix our servers stall for ~1 second during DROP TABLE and the impact from that is visible.

‘@James,

I still think that configuring multiple bufferpool instances would not have been relevant to this test specifically. Yes you are right multiple bufferpools will reduce contention, but the affect is going to be equal in both Percona Server and in MySQL, every bufferpool instance is going to have its own LRU_list and its own flush_list, so whatever affect multiple bufferpool instances would have would even out, because the LRU_list mutex that would be taken in the case of Percona Server, would be taken for every bufferpool instance separately, similarly in the case of MySQL server the bufferpool mutex would be taken separately. But the comparison would still show the same pattern, because the LRU_list mutex is still going to be cheaper 🙂

‘@Mark,

Since I have the QPS numbers for every second, so the lowest QPS for the longest consecutive seconds, should tell us about max stalls. Let me tell that to you for MySQL and Percona Server versions:

– MySQL 5.5.15: longest stall was for 6 seconds

– MySQL 5.5.24: longest stall was for 2 seconds

– Percona Server 5.5.24 (lazy_drop_table=1): longest stall was for 1 second

Ovais,

I wonder if you have query log kept from each of the cases so we can analyze to see how long are the stalls in each of the cases (how long selects from sysbench are taking)

‘@Inaam,

I did not collect ‘show engine innodb mutex’, however, I am sure about the bufferpool mutex, because the only difference in the benchmark of NO_DROP_TABLE and the benchmark of DROP_TABLE is the additional table drops in the background, all the other queries are exactly the same.

Bufferpool mutex is taken at key places in the InnoDB code:

– to allocate a new block in the bufferpool

– when a page is made young

– when a page is accessed

– when a page is read to the bufferpool

– when pages are flushed from the bufferpool

– when page needs to be freed

– when readahead is done

– when insert buffer merges happen

and at other places.

So its definitely going to cause contention for queries that need to get access to a page, or when bufferpool flushing happens in the background 10_seconds loop.

The fixes you mention that are part of MySQL 5.6, should certainly decrease a lot of contention issues. MySQL 5.6 certainly is one of the most awaited releases (at least for me) 🙂

‘@Peter,

Yes I do have the slow query logs from the benchmark sessions.

Hi Ovais,

Right from a user’s perspective without worrying about mutexes :), just a few observations from your blog post , and some questions:

I believe the scenario you have covered in the post is a rare occurrence in real time systems for most applications (as per my experience), and the fact that it relies on innodb_file_per_table, which is in itself not recommended for innodb performance (again, last time I read the MySQL manual, that was the case). Also, there are some other MySQL features that require rather immediate attention like sub query performance, so I wonder if there are any changes being carried out in Percona server to address those high priority issues. This post from Feb 2008 mentions something about that, but we are in 2012, and yet no such luck.

http://www.mysqlperformanceblog.com/2008/02/06/mysql-60-subquery-optimizations-are-published/

Lastly, I could not find a Windows binary for any of the Percona servers/tools in the download section. Nothing in the FAQ section mentioned the reason for that either. Would be nice if you can explain why there is no Windows binary release, while MariaDB and MySQL consistently provide such with each of their releases?

Our needs are very different. I care a lot more about DROP TABLE stalls than subquery optimizations. DROP TABLE is done as part of online-schema change. But you are in luck, a lot of work has been done and more is in progress for subquery optimization. Thanks go to the MariaDB project and the official MySQL team at Oracle.

‘@Mark,

Quick question: “Our needs” means the customers needs or that of the Percona/MySQL developers?

Would you also care to explain the absence of Windows binaries for Percona server?

Thanks.

By my needs I speak of features I need when I use MySQL at work. I don’t work for Percona. My employer is a Percona customer, as in we pay them and we use some of their software.

I am sure Percona would be more active with Windows if they had enough customers willing to pay them to work on it.

Samnan,

The DROP TABLE stalls are a serious problem for a very large number of the largest and most important MySQL users. You might not see it, but you probably use several websites or services that have fought a hard battle with it.

I assume these results also apply to TRUNCATE TABLE, anything that differs with DROP / TRUNCATE PARTITION that would not allow it to apply as well?

‘@Mike,

Well TRUNCATE TABLE, is basically, drop and re-create of the table, so this applies to it as well. Similarly it applies to DROP/TRUNCATE PARTITION. In fact dropping a partitioned table with 100 partitions is even more scary, as that implies 100 scans to drop each and every partition separately.

Thanks Ovais.. I’m glad you did your tests with the default single buffer pool, from my experience (mobile gaming), I’ve found the benefit of increasing the number of multiple buffer pools from X -> Y to be very much work load and hardware config dependent (even from 1 to 4)… certainly increasing the number can reduce contention if the buffer pool mutex is at issue, but I’ve also found it can make an already taxed system more volatile. I wouldn’t necessarily set a pool per GB as a default.

‘@Mike,

Yes choosing the number of bufferpool instances depends a lot on workload and the amount of memory available, I would also not necessarily set a pool per GB.

And I did not use multiple bufferpool instances as I only had a 6G bufferpool, and for those who have multiple bufferpool instances 6G could very well be the size of a single bufferpool instance. Also, the impact of having multiple bufferpool instances would be equal for both the implementations since both rely on the structures that are created for each of the bufferpool instance separately.

mike, as Ovais wrote, it’s more workload-affected than something you want to set based only on total buffer pool size or core count, though there have been assorted formulas suggested that use one or both of those. I’d start at 4 and go up or down from there depending on how the measured contention looks, assuming at least a fair number of concurrently running threads.

Ovais, you’ve written that the MySQL Server implementation has the buffer pool mutex as a bottleneck, while the XtraDB one doesn’t have it as a bottleneck. The expected performance impact of multiple buffer pools for the one blocking on the per-buffer-pool mutex is very different from the one not blocking on it.

As a comparative exercise, tell me why you think that this benchmark shows the non-thread pool read-only test slowing after 2048 connections: http://blog.montyprogram.com/mariadb-5-5-performance-on-windows/ . A quick look at the settings should tell you the answer; it’s one of the common easy tuning wins that I expect you’ll see almost immediately when you look at the settings.

I still think that the XtraDB implementation will be faster than the one in 5.5 for the workload you’re using, with the reservation Mark noted. But I doubt it’ll be as great when the server is better tuned. I agree with what you originally wrote here: “Well only benchmarks can give us the true picture”. Simply try 4 pools and see if it makes much difference or not to your test. Yes or no and I’ll thank you because then I’ll have some idea of whether your test actually means something other than possible misconfiguration. I think you’ve done too much good work to just leave that unknown.

Are you able to redraw the graphs so that they all have the same scale (i.e. 0-14,000 for all graphs)?

‘@Alfie,

I have adjusted the scale of the graphs so that they all have the same scale.

‘@James,

Thank you for your detailed reply, I will certainly rerun the benchmark with multiple buffer pool instances, and post my results.

There is a bug in DROP TABLE on MySQL. Because of it my production servers hanged.

https://bugs.launchpad.net/percona-server/+bug/1026926

Now I’m using innodb_lazy_drop_table.