Sysbench has three distributions for random numbers: uniform, special and gaussian. I mostly use uniform and special, and I feel that neither fully reflects my needs when I run benchmarks. Uniform is stupidly simple: for a table with 1 million rows, each row gets an equal number of hits. This barely reflects a real system, and it also does not allow testing a caching solution effectively, since every row is equally likely to be put into the cache or evicted from it. That’s why there is the special distribution, which is better but goes to the other extreme: it is skewed toward a very small percentage of rows, which makes it good for testing a cache but makes it hard to emulate a high IO load.

That’s why I was looking for alternatives, and the Zipfian distribution seems like a decent one. This distribution has a parameter θ (theta), which defines how skewed the distribution is. The physical meaning of this parameter, applied to database tables, is the following: if row 1 is accessed N times, then row 2 is accessed 2^θ times less often, row 3 is accessed 3^θ times less often, …, and row X is accessed X^θ times less often, that is, N/(X^θ) times.

Say θ=1.1. If row 1 is accessed 1,000,000 times, then row 2 is accessed 1,000,000/(2^1.1) = 466,516 times, row 3 is accessed 1,000,000/(3^1.1) = 298,652 times, …, and row id=10000 is accessed 1,000,000/(10,000^1.1) ≈ 39 times.
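The arithmetic above is easy to reproduce. Here is a minimal Python sketch; the function name `relative_hits` and its parameters are made up for illustration and are not part of sysbench:

```python
def relative_hits(row: int, theta: float, first_row_hits: float = 1_000_000) -> float:
    """Expected hits for `row` when row 1 receives `first_row_hits`.

    Applies the rule from the text: row X is accessed X^theta times
    less often than row 1. (Hypothetical helper, not a sysbench API.)
    """
    return first_row_hits / row ** theta


# Print the expected hit counts for the rows used in the example, theta = 1.1
for row in (1, 2, 3, 10_000):
    print(f"row {row}: {relative_hits(row, 1.1):,.1f} hits")
```

Changing `theta` in the loop shows how quickly the tail flattens or steepens.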

Obviously, with θ=0 we get the uniform distribution: each row is accessed an equal number of times (for row X: 1/(X^0) = 1).
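Written as a probability, the same rule looks like this. The symbols M (number of rows in the table) and k (row id) are notation introduced here for illustration; dividing by the sum of all weights is just the normalisation that makes the probabilities add up to 1:

```latex
P(\text{row} = k) = \frac{k^{-\theta}}{\sum_{i=1}^{M} i^{-\theta}}, \qquad k = 1, \dots, M

% Special case \theta = 0: every weight equals 1, so
P(\text{row} = k) = \frac{1}{\sum_{i=1}^{M} 1} = \frac{1}{M}
```

So with θ=0 every row has the same probability 1/M, which is exactly the uniform case.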

There is research showing that user behavior can be described by this distribution: Zipf, Power-laws, and Pareto – a ranking tutorial

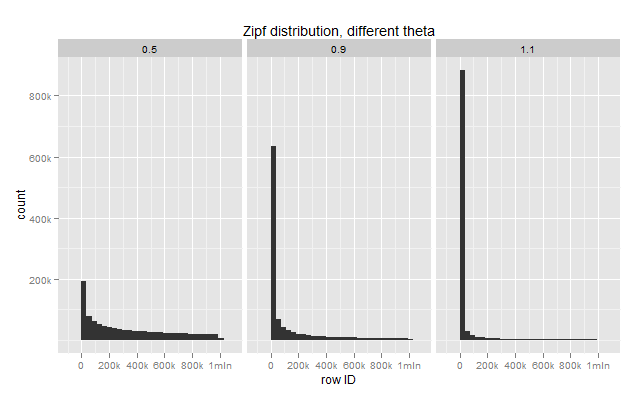

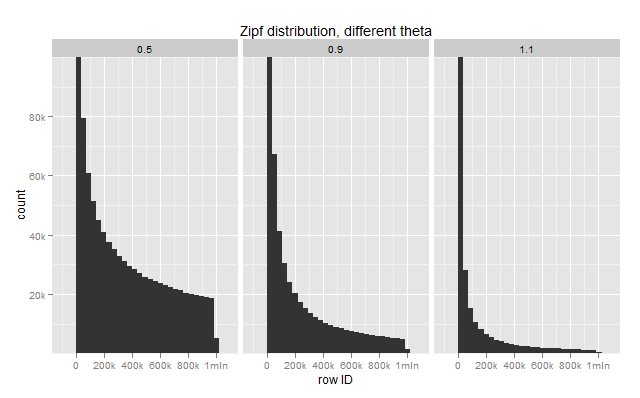

To see distribution on graphs, I took tables with 1mln rows and run row lookup 1 million times.

There are histograms on how many times each row selected for different θ: 0.5, 0.9, 1.1:

The curve is very skewed, so I zoomed graphs to show only 0-100k level:
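A similar experiment can be approximated outside sysbench. The sketch below is an assumption-laden illustration, not the code from my sysbench tree: it draws row ids with a plain inverse-CDF lookup over the weights 1/k^θ, and the names `make_zipf_sampler` and `count_hits` are hypothetical.

```python
import bisect
import itertools
import random
from collections import Counter


def make_zipf_sampler(n_rows, theta, rng=None):
    """Return a function that draws row ids 1..n_rows with weight 1/k**theta.

    Illustrative inverse-CDF sampler; sysbench itself may use a
    different algorithm.
    """
    rng = rng or random.Random()
    # Running sum of the unnormalised weights 1/k**theta for k = 1..n_rows
    cumulative = list(
        itertools.accumulate(1.0 / k ** theta for k in range(1, n_rows + 1))
    )
    total = cumulative[-1]

    def sample():
        # Find the first row whose cumulative weight exceeds the random draw
        idx = bisect.bisect_right(cumulative, rng.random() * total)
        # Guard against a floating-point draw that rounds up to `total`
        return min(idx, n_rows - 1) + 1

    return sample


def count_hits(n_rows, theta, lookups, seed=None):
    """Run `lookups` simulated row lookups and count hits per row id."""
    sample = make_zipf_sampler(n_rows, theta, random.Random(seed))
    return Counter(sample() for _ in range(lookups))


if __name__ == "__main__":
    # Smaller than the 1 million x 1 million run in the post, to keep it quick
    for theta in (0.5, 0.9, 1.1):
        hits = count_hits(n_rows=100_000, theta=theta, lookups=100_000, seed=42)
        print(f"theta={theta}: top rows {hits.most_common(3)}")
```

The per-row counts in the returned `Counter` are what a histogram like the ones above would be built from.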

I implemented Zipf for sysbench. Right now it is in a separate tree ( https://code.launchpad.net/~vadim-tk/sysbench/zipf-distribution ), and you are welcome to try it if it sounds interesting.

I am going to run a couple of upcoming benchmarks with this distribution.

Nice. Another feature that would be nice to have in Sysbench is the ability to handle broken connections gracefully and reconnect. Testing Percona XtraDB Cluster with HAProxy is a pain now, as the test is aborted once one of the nodes goes down. I’d much rather see it count these as “errors”, similar to how it deals with deadlocks, then reconnect and continue.

Hi Vadim,

That’s very interesting, thanks for the article.

Can I also ask what software/scripts you use for the graphs in your various articles?

Regards,

Romain.

Romain,

I use R-project + ggplot2
