We have raised the topic of InnoDB flushing problems several times. Some links:

InnoDB Flushing theory and solutions

MySQL 5.5.8 in search of stability

So far this has not been a frequently recurring problem. However, in my recent experiments I observe it with a very simple sysbench workload on hardware that can be considered typical nowadays.

Hardware: HP ProLiant DL380 G6, with 72GB of RAM and RAID10 on 8 disks.

I took the sysbench multi-table workload with 20 tables of 10,000,000 rows each. The total database size is ~58GB.

MySQL version: 5.5.16

The initial benchmark uses InnoDB configured for this hardware:

innodb_flush_log_at_trx_commit = 2

innodb_flush_method = O_DIRECT

innodb_log_buffer_size = 16M

innodb_buffer_pool_size = 52G

innodb_log_file_size = 1900M

innodb_log_files_in_group = 2

In my benchmark I measure results every 10 seconds, and when we graph them, it looks like this:

Basically there are two stages: MySQL handles traffic, and MySQL is stuck. As you can see, the stalls are very severe.

There are 4-minute periods when MySQL is not able to process queries.

Why does this happen? Referring to the previous InnoDB Flushing theory and solutions post, InnoDB during this time is in “sync” flushing mode. It allows itself to get into this state because we have a lot of memory, and we can make changes in memory much faster than on disk. InnoDB is not able to catch up with flushing the changed data.

With the wide adoption of MySQL 5.5 and servers with large memory configurations, I expect we will see this problem more and more often on production systems.

How to diagnose it?

This is a question I am asked: how do we know that the stall we see is related to InnoDB flushing and the “sync” state?

Vanilla MySQL does not provide much in the way of diagnostics here, but there are a couple of numbers to look at.

If you run “SHOW ENGINE INNODB STATUS”, look at the following lines:

Log flushed up to 135550126707

Last checkpoint at 132524978607

...

Pending writes: LRU 0, flush list 105, single page 0

First, a Pending writes “flush list” value > 0 says that InnoDB is doing a lot of flushing, and if this number keeps growing, it means InnoDB is trying to flush more than your IO system can handle.

Second, you need to do a little math. (“Log flushed up to” - “Last checkpoint at”) is our checkpoint age. In our case it is 3025148100 bytes, or 2885M. Our total log size is 3800M (2 files of 1900M each). InnoDB usually takes the 75% mark as the limit for the “sync” stage (3800M * 0.75 = 2850M). Here the checkpoint age exceeds the 75% sync mark, which is a signal that InnoDB is operating in “sync” flushing mode.

So the formula is: if (“Log flushed up to” - “Last checkpoint at”) > “total log size” * 0.75, then InnoDB is in “sync” mode.
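To make the check above repeatable, here is a minimal Python sketch that pulls the two numbers out of the status text and applies the formula. The function names (`checkpoint_age`, `in_sync_flushing`) are my own for illustration, the 0.75 factor is the approximate mark quoted in this post rather than an exact InnoDB constant, and the script assumes you have already saved the output of SHOW ENGINE INNODB STATUS as plain text.

```python
import re

# Approximate "sync" mark quoted in this post; an assumption, not an exact InnoDB constant.
SYNC_FRACTION = 0.75


def checkpoint_age(status_text):
    """Return ("Log flushed up to" - "Last checkpoint at") in bytes."""
    flushed = re.search(r"Log flushed up to\s+(\d+)", status_text)
    checkpoint = re.search(r"Last checkpoint at\s+(\d+)", status_text)
    if flushed is None or checkpoint is None:
        raise ValueError("log positions not found in status text")
    return int(flushed.group(1)) - int(checkpoint.group(1))


def in_sync_flushing(status_text, log_file_size_bytes, log_files_in_group):
    """Apply the rule of thumb: checkpoint age > total log size * 0.75."""
    total_log_size = log_file_size_bytes * log_files_in_group
    return checkpoint_age(status_text) > total_log_size * SYNC_FRACTION


if __name__ == "__main__":
    # The two lines quoted in this post.
    sample = """
Log flushed up to   135550126707
Last checkpoint at  132524978607
"""
    mib = 1024 * 1024
    age = checkpoint_age(sample)
    print("checkpoint age: %d bytes (%dM)" % (age, age // mib))
    # innodb_log_file_size = 1900M, innodb_log_files_in_group = 2
    print("sync flushing suspected:", in_sync_flushing(sample, 1900 * mib, 2))
```

With the numbers from this benchmark, the script reports a checkpoint age of 2885M against a 2850M mark, so it flags the server as being in “sync” mode.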

What to do?

I wish I could say you should use Percona Server with XtraDB.

If we were using SSD as storage, then I would recommend it. Vanilla MySQL performs equally badly on SSD and HDD, while for SSD in Percona Server we have “innodb_adaptive_flushing_method = keep_average”.

Unfortunately, on spinning disks (as in this case), Percona Server may not show a significant improvement. I am going to follow up with results on Percona Server.

So the first recommendation you may hear in this case from Oracle/MySQL engineers is to decrease “innodb_max_dirty_pages_pct”. This way InnoDB will try to keep fewer dirty pages in the buffer pool, and hopefully it will spend less time in “sync” flushing.

Let’s see what we can get if we set

innodb_max_dirty_pages_pct=30

Although the maximal throughput decreased and the stall period is somewhat shorter, I cannot say this is helpful.

The second action you may try is to decrease innodb_log_file_size. How may this help? InnoDB flushing may kick in sooner and not allow a lot of modified pages to accumulate in the buffer pool.
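To see why a smaller log makes flushing kick in sooner, here is a small Python sketch of how the 75% mark moves with innodb_log_file_size. The helper name `sync_threshold_mib` is hypothetical, and apart from 1900M the sizes are illustrative only; they are not the values used in this benchmark.

```python
def sync_threshold_mib(log_file_size_mib, log_files_in_group=2, fraction=0.75):
    """Checkpoint age (in M) at which the 75% rule of thumb says "sync" flushing starts."""
    return log_file_size_mib * log_files_in_group * fraction


if __name__ == "__main__":
    # 1900M is the setting from this post; 512M and 128M are made-up examples.
    for size in (1900, 512, 128):
        print("innodb_log_file_size=%dM -> sync mark at about %.0fM"
              % (size, sync_threshold_mib(size)))
```

The smaller the mark, the less unflushed change InnoDB can accumulate before it is forced to flush, which is the trade-off this setting controls.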

Let’s try it:

Well, the stability and the absolute value of throughput are far from perfect, but at least we do not have 4-minute stalls.

Will it help if we decrease innodb_buffer_pool_size (effectively killing the idea that more memory is better)? That way, too, InnoDB will not be able to change a lot of data :).

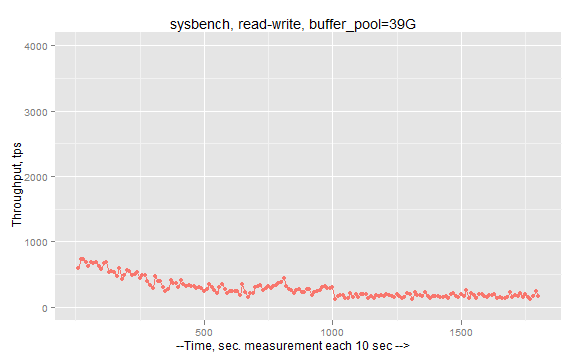

The following results are with innodb_buffer_pool_size=39G. Here I keep the same Y scale as in the first graph to show the impact of this action on performance.

Finally we got a somewhat stable result without stalls, but at the cost of losing about 20x in throughput.

These facts suggest to me that the existing InnoDB flushing algorithm is not suitable for modern hardware with a lot of memory. I hope there is work in progress for MySQL 5.6.

You can find the scripts and raw results there.