I mentioned problems with InnoDB flushing in a previous post. Before getting to ideas on a solution, let’s define some terms and take a look into theory.

The two most important parameters for InnoDB performance are innodb_buffer_pool_size and innodb_log_file_size. InnoDB works with data in memory, and all changes to data are performed in memory. In order to survive a crash or system failure, InnoDB is logging changes into InnoDB transaction logs. The size of the InnoDB transaction log defines how many changed blocks we can have in memory for a given period of time. The obvious question is: Why can’t we simply have a gigantic InnoDB transaction log?

The answer is that the size of the transaction log affects recovery time after a crash. The bigger the log, the longer the recovery time.

Okay, so we have innodb_log_file_size. Let’s imagine it as some distance:

Our current state is checkpoint_age, which is the age of the oldest modified non-flushed page. Checkpoint_age is located somewhere between 0 and innodb_log_file_size. Point 0 means there are no modified pages. Checkpoint_age can’t grow past innodb_log_file_size, as that would mean we would not be able to recover after a crash.

In fact, InnoDB has two protection points: “async” and “sync”. When checkpoint_age reaches “async”, InnoDB tries to flush as many pages as possible, while still allowing other queries. You can see the effect from this on the graph in my previous post, when throughput drops down to the floor. The “sync” stage is even worse. When we reach “sync”, InnoDB blocks other queries while trying to flush pages and return checkpoint_age to a point before “async”. This is done to prevent checkpoint_age from exceeding innodb_log_file_size. These are both abnormal operational stages for InnoDB and should be avoided at all cost. In current versions of InnoDB, the “sync” point is at about 7/8 of innodb_log_file_size, and the “async” point is at about 6/8 = 3/4 of innodb_log_file_size.

So, there is one critically important balancing act: On the one hand you want “checkpoint_age” as large as possible, as it defines performance. But, on the other hand, you should not reach the “async” point.

Our idea is to define another point T (target), which is before “async”, in order to have a gap for flexibility, and we will try at all cost to keep checkpoint_age from going past T. We assume that if we can keep “checkpoint_age” in the range 0 – T, we will achieve stable throughput.

Now, what factors affect checkpoint_age? When we execute queries that change pages (i.e., INSERT/UPDATE/DELETE), we perform writes to the log, we change pages, and checkpoint_age is growing. When we perform flushing of changed pages, checkpoint_age is going down.

So that means the main way we have to keep checkpoint_age within point T is to change the number of pages being flushed per second. That way, we can keep checkpoint_age down.

If this doesn’t help–and checkpoint_age keeps growing beyone T toward “async”–we have a second control mechanism: We can add a delay into INSERT/UPDATE/DELETE operations. This way we prevent checkpoint_age from growing and reaching “async”.

To summarize, the idea of our algorithm is : We keep checkpoint_age within point T by increasing or decreasing the number of pages flushed per second. If checkpoint_age continues to grow, we add “throttling” to prevent it. The throttling depends on the position of checkpoint_age — the closer to “async”, the bigger the throttling needed.

As mentioned in the previous post, the current implementation of flushing neighbors is problematic. When we want to flush N pages in order to control checkpoint_age, we expect that flushing pages will have an effect. In the current implementation, however, we may ask to flush 8 pages, and really only 1 of the pages flushed was useful (see previous post). So, we propose a fix for that: If we want to flush page P, we also flush only real neighbors, i.e.: "......DDDDPDDDD....". In this way, not so many additional pages will be flushed, and it will be real sequential I/O.

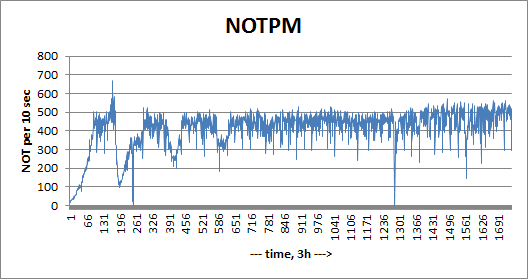

We have some initial results which will follow, and they are promising. Here is graph we have showing our current experimental implementation. (If you want to look at the source code, it is here.)

You can see some jumps after the initial warmup stage, but the later throughput is tending toward a stable line.

Vadim, this is very interesting, thanks for sharing! In what version of Percona are you planning to include this patch?

Intresting. Baron mentioned in the previous post, that this patch will be good for the cloud. What does the same benchmark show on a percona server instance running on EC2 using EBS? On EBS, you can’t really distinguish random io from sequential (you share the real block device with many users, so sequential io will become random there), which kind of defeats the whole purpose of a bunch of optimizations in today’s database engines based on the assumption that they are running on rotating disks, where sequential and random io is different. Does the patch make a difference there? Of course, this may not be true for other cloud providers.

This matches what I said previously: There can be no hard boundaries. Instead the throttling is inversely proportional to the remaining room before reaching T.

Pavel,

Current implementation is against Percona Server 5.1.55, so we may support 5.1 tree.

And it will be ported to 5.5

How are you implementing the delay? Is it per commit, per statement, or per block dirty (or something else entirely).

I can think of Pros and Cons to each approach, kind of curious to see how you did it.

Patrick,

I did plan that details for next post.

For delays we insert sleep() in place where transaction writes into transactional log.

That way we delay growth of LSN and checkpoint age.

In another post you discussed things that Postgres and MySQL can learn from each other. This looks a lot like the checkpoint algorithm from Postgres 8.3, controlled by checkpoint_completion_target. It is good to see this in MySQL as well, their spread checkpointing ideas make a lot of sense, to me at last.

There’s a similar oracle parameter that tries to control how aggressive the database is on flushing FAST_START_MTTR_TARGET. If I remember correctly though the oracle parameter is actually trying to force checkpoints in order to keep your recovery time down, rather than for fear of rolling back onto an active redo log. I think its actually obsolete in 11g though, not sure what the replacement (if any) is.

I don’t actually know what the internals of the Oracle checkpoint logic are; presumptively it gets more aggressive as redo fills up and the number of inactive log files declines, but I’m not sure of the details of the implementation.

I will say I’ve rarely seen an Oracle database crap out on me because of Checkpointing. I’ve seen them bog way, way down, but they don’t generally have stop events b/c checkpoint too old (at least in my experience).

Running out of redo is another matter; I’ve definitely run my share of Oracle instances out of redo, with predictably bad consequences.

Kristian, PostgreSQL doesn’t have a checkpoint age target, but rather a completion target. The two systems are different in so many ways that it is hard to compare them. PG has no fixed-size log, so it doesn’t have a hard deadline (except for filling up the disks with logs). It does periodic checkpoints, not continuously moving checkpoints. It uses buffers completely differently. Its checkpoint spread algorithm is basically “I have to do a checkpoint every X seconds or Y bytes of log files, so let me aim to complete this one by the time Z percent of that time has passed.” It is actually quite a bit simpler than InnoDB’s algorithm, which isn’t a bad thing in itself, but most importantly, Greg Smith’s benchmarks show that PostgreSQL is significantly prone to checkpoint stalls, so I think PostgreSQL needs a more sophisticated flushing and checkpointing algorithm too, although it might not need to resemble anything we’re doing here (again, extremely different systems). I have registered a “checkpoint blues” BoF session at the MySQL and Beyond Conference, and I hope we get a good discussion going. http://en.oreilly.com/mysql2011/public/schedule/detail/18569

Vadim – very interesting post. I’ll be interested to watch how this develops.

In the meantime, any rules of thumb for the ratio between innodb_buffer_pool_size and innodb_log_file_size? I think I have probably been setting my innodb_log_file_size too small.

Thanks

Nathan,

There is no single rule.

You may read http://www.mysqlperformanceblog.com/2008/11/21/how-to-calculate-a-good-innodb-log-file-size/

to get some ideas.

Nathan,

There is no single rule.

You may read http://www.mysqlperformanceblog.com/2008/11/21/how-to-calculate-a-good-innodb-log-file-size/

to get some ideas.

Vadim – very interesting post. I’ll be interested to watch how this develops.

In the meantime, any rules of thumb for the ratio between innodb_buffer_pool_size and innodb_log_file_size? I think I have probably been setting my innodb_log_file_size too small.

Thanks

Kristian, PostgreSQL doesn’t have a checkpoint age target, but rather a completion target. The two systems are different in so many ways that it is hard to compare them. PG has no fixed-size log, so it doesn’t have a hard deadline (except for filling up the disks with logs). It does periodic checkpoints, not continuously moving checkpoints. It uses buffers completely differently. Its checkpoint spread algorithm is basically “I have to do a checkpoint every X seconds or Y bytes of log files, so let me aim to complete this one by the time Z percent of that time has passed.” It is actually quite a bit simpler than InnoDB’s algorithm, which isn’t a bad thing in itself, but most importantly, Greg Smith’s benchmarks show that PostgreSQL is significantly prone to checkpoint stalls, so I think PostgreSQL needs a more sophisticated flushing and checkpointing algorithm too, although it might not need to resemble anything we’re doing here (again, extremely different systems). I have registered a “checkpoint blues” BoF session at the MySQL and Beyond Conference, and I hope we get a good discussion going. http://en.oreilly.com/mysql2011/public/schedule/detail/18569

There’s a similar oracle parameter that tries to control how aggressive the database is on flushing FAST_START_MTTR_TARGET. If I remember correctly though the oracle parameter is actually trying to force checkpoints in order to keep your recovery time down, rather than for fear of rolling back onto an active redo log. I think its actually obsolete in 11g though, not sure what the replacement (if any) is.

I don’t actually know what the internals of the Oracle checkpoint logic are; presumptively it gets more aggressive as redo fills up and the number of inactive log files declines, but I’m not sure of the details of the implementation.

I will say I’ve rarely seen an Oracle database crap out on me because of Checkpointing. I’ve seen them bog way, way down, but they don’t generally have stop events b/c checkpoint too old (at least in my experience).

Running out of redo is another matter; I’ve definitely run my share of Oracle instances out of redo, with predictably bad consequences.

In another post you discussed things that Postgres and MySQL can learn from each other. This looks a lot like the checkpoint algorithm from Postgres 8.3, controlled by checkpoint_completion_target. It is good to see this in MySQL as well, their spread checkpointing ideas make a lot of sense, to me at last.

Patrick,

I did plan that details for next post.

For delays we insert sleep() in place where transaction writes into transactional log.

That way we delay growth of LSN and checkpoint age.

How are you implementing the delay? Is it per commit, per statement, or per block dirty (or something else entirely).

I can think of Pros and Cons to each approach, kind of curious to see how you did it.

Pavel,

Current implementation is against Percona Server 5.1.55, so we may support 5.1 tree.

And it will be ported to 5.5

This matches what I said previously: There can be no hard boundaries. Instead the throttling is inversely proportional to the remaining room before reaching T.

Intresting. Baron mentioned in the previous post, that this patch will be good for the cloud. What does the same benchmark show on a percona server instance running on EC2 using EBS? On EBS, you can’t really distinguish random io from sequential (you share the real block device with many users, so sequential io will become random there), which kind of defeats the whole purpose of a bunch of optimizations in today’s database engines based on the assumption that they are running on rotating disks, where sequential and random io is different. Does the patch make a difference there? Of course, this may not be true for other cloud providers.

Vadim, this is very interesting, thanks for sharing! In what version of Percona are you planning to include this patch?

Linux kernel also faces a similar issue with dirty pages — with both background and actual synchronous flushing (where the application which triggered is made to flush). The earlier mode naturally didn’t get many friends. So there is a new dynamic writeback throttling for linux — http://kernelnewbies.org/LinuxChanges#head-92a945b289279844f033269faefda0cb148673ff . Worth a good read. May be we can have something similar for mysql and may be easier to implement (don’t have to worry about disk bandwidth etc). The innodb thread scheduling can perhaps take care of these ? A thread with lesser tickets (has entered more into innodb kernel than others) gets more penalised in terms of sleep (as mentioned in the blog)