In my recent benchmarks, such as this one about the Virident TachIon card, I used different values for innodb_buffer_pool_size, like 13GB, 52GB, and 144GB, for testing the tpcc-mysql database with size 100G. This was needed in order to test different memory/dataset size ratios. But why is it important, and how does it affect how InnoDB works internally? Let me show some details.

Internally, InnoDB uses two lists for flushing (writing pages from the Innodb buffer pool memory to disk): the LRU list and the flush list. You can see a tracking of these lists in SHOW ENGINE INNODB STATUS:

|

1 2 3 |

... Pending writes: LRU 0, flush list 129, single page 0 ... |

It is important to understand which list is being used for flushing, because that defines what MySQL InnoDB tuning should apply.

What is important about this? If your workload is mostly bound by the LRU list, then innodb_adaptive_flushing, innodb_max_dirty_pages_pct, innodb_io_capacity, and innodb_log_file_size do not matter, and changing these settings won’t help.

Ironically, these settings are most important for tuning MySQL 5.5 to get stable performance (as you may see from Dimitri’s post, http://dimitrik.free.fr/blog/archives/2010/12/mysql-performance-analyzing-perconas-tpcclike-workload-on-mysql-55.html). But you can’t really detect what kind of flushing is used. There is a variable in SHOW STATUS, innodb_buffer_pool_pages_flushed, but it shows the sum of flushing from both the LRU list and the flush list.

In Percona Server 5.5, I added the SHOW STATUS variable innodb_buffer_pool_pages_LRU_flushed, which shows only pages flushed by the LRU list.

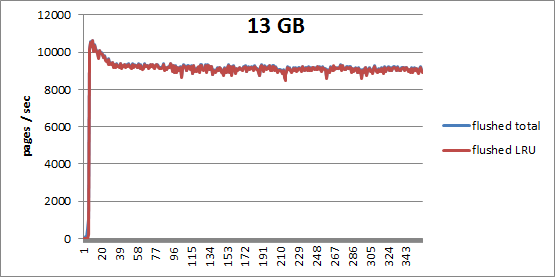

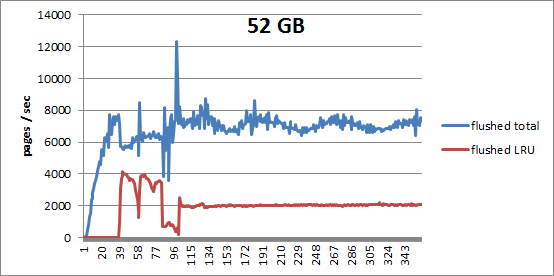

So, let’s see what flushing we have using 13GB and 52GB for innodb_buffer_pool_size settings in Percona Server 5.5, using the Cisco UCS C250 server and the Virident tachIOn PCI-E Flash card.

As you can see, in the 13G case (where memory to data ratio is about 1/10), all flushing comes from the LRU list,

while with 52G, the LRU list does not play into it much.

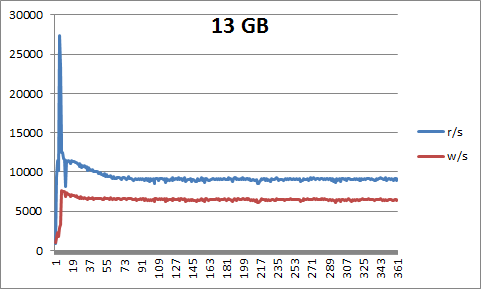

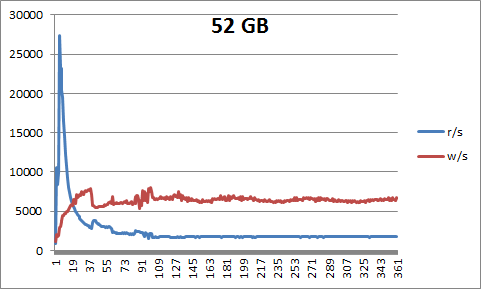

Here are also graphs of reads and writes per second for both cases:

(BTW, I am really impressed at what a stable level of reads and writes per second the Virident tachIOn card provides.)

And here is a graph with the percentage of dirty pages for each case:

As you can see, with 13GB we are under the limit of innodb_max_dirty_pages_pct=75, and

with 52GB we are reaching the 75% maximum for dirty pages.

How can you detect in regular MySQL that your flushing comes from the LRU list? You could periodically check SHOW ENGINE INNODB STATUS, and see something like

|

1 |

Pending writes: LRU N, flush list 0, single page 0 |

where N>0, and flush list is 0.

A second way to detect it is when the number of dirty pages is less than innodb_max_dirty_pages_pct and checkpoint age is less than 3/4 of innodb_log_file_size * innodb_log_files_in_group. If you have these conditions and observe intensive writes, InnoDB is using LRU list flushing.

So why is it important to know what the mix of InnoDB flushing types is in your workload? There are a couple reasons:

(Post edited by Fred Linhoss)

Yes, LRU flushing is one of the challenges for performance improvement within InnoDB. And things may yet become worse here as you may also observe some periodic users threads locks during such a flushing.. – Mark partially resolved this issue within a facebook patch. Hope to see a real improvement here with MySQL 5.6, but let’s see..

Rgds,

-Dimitri

Instrumentation in official InnoDB for understanding this behavior is inadequate. Percona makes diagnosis easier. Apps that are LRU list bound might suffer from mutex contention and other inefficiencies from buf_flush_free_margin. This is much more likely to be a problem on pure flash/ssd servers that can sustain more than 10k IOPs.

The code that does LRU list flushing isn’t efficient and it is done by threads that run user queries. It is not done by a background thread. So it will be a source of intermittent stalls. I have begin to evaluate a fix for this (http://bazaar.launchpad.net/~mysqlatfacebook/mysqlatfacebook/5.1/revision/3615) and this is one area in which XtraDB can improve on official InnoDB.

Mark,

Thank you, I will try Percona Server in LRU_list flushing mode with your patch.

My results with it are mixed. I need more time that is being spent on other problems. I think it can help when the host has flash/SSD and the dirty page write rate is not too high (or other problems will hit). But I can’t say at this point that it fixes a problem.

Vadim,

What is not clear from this post is why does LRU flushes happen to begin with ? If I’m below innodb_max_dirty_pages_pct in the buffer pool when there are some clean pages which can be simply discarded without flushing them ?

Is my understanding what Innodb wants to find a free page in the tail of LRU and if this does not happen it will flush and wait instead of going further or can you explain more ?

In general I think waiting on such flushes from user threads is poor design as this is not a activity needed to serve request. It would be much better if some background threads can ensure there is no need for user threads to do flushes in most of circumstances.

We also might wish to extend out Innodb profiling in slow query log to include lru_flush_wait_time as this may be IO bound wait which is currently invisible.

Peter,

You are correct in the regard that InnoDB

1) looking into free list and if there is any, it gets it from there

2) in loop tries to free pages from tail of LRU_list. But InnoDB does it in iterations with

quite complex logic inside each iteration, so I can’t follow this logic.

However there is one variable we may look after, for diagnostic:

Innodb_buffer_pool_wait_free

This variables shows how many times InnoDB could not find free block free_list or get free block from tail of LRU_list.

Again, the logic to find pages that can be freed from the tail of LRU_list seems very complicated, and probably can be re-factored and optimized.

Vadim. Interesting.

So are you saying LRU flushes would only happen if Innodb_buffer_pool_wait_free is not zero ? I very rarely see this growing to any significant value.

Also when you’re saying “free” here are we actually looking at free block or clean block ? There are typically only few really free blocks maintained in buffer pool.

Peter, Vadim,

Agree the InnoDB LRU flushing logic is a little involved. Here is a rough higher level picture without going into subtle details:

1) if a block is available in the free list grab it.

2) else scan around 10% or the LRU list to find a clean block

3) if a clean block is found grab it

4) else trigger LRU flush and increment Innodb_buffer_pool_wait_free

5) after the LRU flush is finished try again

6) if able to find a block grab it otherwise repeat the process scanning deeper into the LRU list

There are some other areas to take care of like having an additional LRU for compressed pages with uncompressed frames etc.

And Innodb_buffer_pool_wait_free is not indicative of total number of LRU flushes. It tracks flushes that are triggered above. There are other places in the code which will trigger an LRU flush as well.

And yes, as of now, the LRU flush happens in the user thread. I agree that this may not be the most optimal way of doing the things and that this design needs to be revisited.

Inaam,

Thank you for explanation, I appreciate it. It really helps to understand what happens on that stage.