Preamble: On performance, workload and scalability:

MySQL has always been focused on OLTP workloads. In fact, both Percona Server and MySQL 5.5.7rc have numerous performance improvements which benefit workloads that have high concurrency. Typical OLTP workloads feature numerous clients (perhaps hundreds or thousands) each reading and writing small chunks of data. The recent improvements to MySQL make it scale better for this workload when more resources (such as additional CPUs) are added. By scaling better I mean that it is able to take advantage of all available resources in order to handle the workload. One reason that it works best for this workload is that a single query executes in a single thread. MySQL never takes advantage of more than a single CPU when aggregating data and fetching rows from the buffer pool, with respect to a single query, but it can run many queries at once.

There are workloads other than OLTP and the recent optimizations to MySQL still leave a lot of low hanging fruit where these are concerned. This is particularly true for OLAP workloads. While I’m not going to diverge into a discussion of how OLAP varies from OLTP, it suffices to say that a typical OLAP workload features a low number of concurrent queries which each examine large amounts of data. Since a single query is single threaded in MySQL, the new optimizations don’t really help with this workload.

The following tests assume a workload consisting of a small number of concurrent queries (or only one) to demonstrate how much improvement could be made to MySQL so that is could better utilize all available resources (that is, scale better) when running small numbers of queries which examine a lot of data.

What is Shard-Query?

Shard-Query was initially conceived as a utility to add parallelism to horizontally partitioned data sets by running queries against each host in parallel, with the added feature of supporting aggregation. Then I hit upon the idea of taking SQL constructs like IN and BETWEEN and making these queries execute in parallel on a each host. If you have a sharded data set, then this gives you the opportunity for additional parallelism for each query. If you have only a single server, but it has enough resources to answer queries in parallel, then it can be used to add parallelism to queries which use IN or BETWEEN clauses. This added parallelism can have significant performance advantages as demonstrated in this blog post.

Many database servers can add this parallelism natively, but most of those are not open source. In the future, Shard-Query can be extended to other database servers such as PostgreSQL or Firebird fairly easily.

What machine did I use?

I used MySQL 5.5.7rc on a powerful Cisco UCS server with 12 real cores and 384GB of ram. The amount of ram is significantly larger than my already hefty 55GB testing data set, so this means that if MySQL could fully utilize all cores for my workload, then this test would be CPU bound.

What data did I use?

I loaded 55GB of the same data used in this earlier post into a partitioned InnoDB table.

Partitioning example:

|

1 2 3 4 5 6 |

/*!50500 PARTITION BY RANGE COLUMNS(FlightDate) (PARTITION p2 VALUES LESS THAN ('1988-01-01') ENGINE = InnoDB, PARTITION p3 VALUES LESS THAN ('1988-02-01') ENGINE = InnoDB, ... PARTITION p253 VALUES LESS THAN ('2008-12-01') ENGINE = InnoDB, PARTITION p254 VALUES LESS THAN (MAXVALUE) ENGINE = InnoDB) */ |

What queries did I use?

I used a version of the queries in that same blog post. The original queries tend to filter on the Year column. I partitioned the table into months using the FlightDate column using the improved MySQL 5.5 partitioning options which work directly on columns without the need to use TO_DAYS. To accommodate my partitioning schema I modified the queries to use the FlightDate column instead of the Year column. See the “full disclosure” section at the end for the complete SQL.

These tests were done using ‘run_query.php’, which is the example application which comes with Shard-Query. As the name implies, it takes a list of queries in a file (or stdin) and a config file. It runs the SQL via ShardQuery and it prints the results.

Test #1

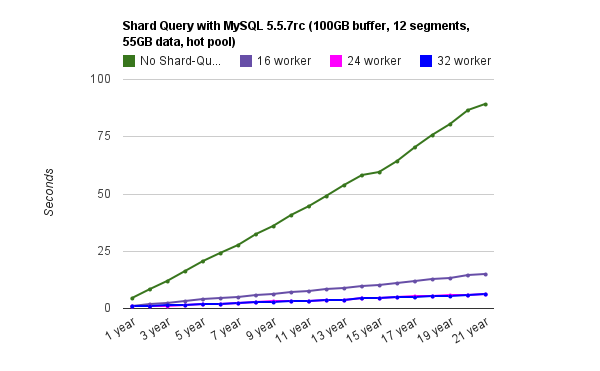

This set of queries tests the most basic aggregation (count(*)) on a range of records. This table is partitioned by month which means that MySQL can use partition pruning to reduce the amount of data which must be examined. With this in mind, I modified Vadim’s queries to use the FlightDate column in the WHERE clause instead of Year.

Each iteration reads an additional year of data. That is, the first query reads one year, and the last query reads 21 years of collected flight data.

For example (the final query):

|

1 2 3 |

select count(*) from ontime.ontime where FlightDate BETWEEN '1988-01-01' and '2008-12-31'; |

The reason that Shard-Query performs better is that it turns the OLAP query into something more like OLTP. Instead of getting one big chunk of data in one query, it runs many smaller queries each requesting significantly less data. On the other hand, MySQL 5.5.7 does not do this on its own. This is the low hanging fruit I was talking about. Even though the data is partitioned, MySQL will examine each partition serially. In the end, this means that things get slower as the query has to examine larger volumes of data.

Regarding the performance of Shard-Query, this machine has 12 real cores and 12 virtual cores, so we don’t see any advantage after increasing the number of workers past 24. The query becomes CPU bound at that point. If I needed more performance I could divide the data between two or more shards, or if possible, I could add more CPU cores. Regardless, even with a single server Shard-Query will perform much better than regular MySQL as the volume of data grows. Remember that this workload fits entirely in the buffer pool so adding CPUs will help only until we run out of memory bandwidth.

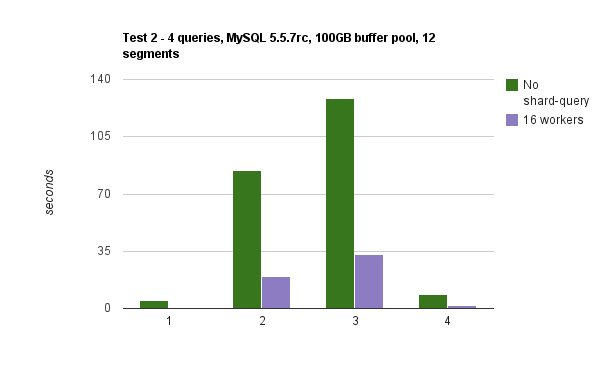

Test #2

My second test involved the next four queries on Vadim’s list. The purpose of this test is to demonstrate that Shard-Query works with GROUP BY and other constructs.

|

1 2 3 4 5 6 7 |

Q1 - SELECT count(*) from ontime.ontime where FlightDate between '1988-01-01' and '1988-12-31'; Q2 - SELECT DayOfWeek, count(*) AS c FROM ontime WHERE FlightDate BETWEEN '2000-01-01' AND '2008-12-31' GROUP BY DayOfWeek ORDER BY c DESC; Q3 - SELECT Origin, count(*) AS c FROM ontime WHERE DepDelay>10 AND FlightDate BETWEEN '2000-01-01' AND '2008-12-31' GROUP BY Origin ORDER BY c DESC LIMIT 10; Q4 - SELECT carrier, count(*) FROM ontime WHERE DepDelay>10 AND FlightDate between '2007-01-01' and '2007-12-31' GROUP BY carrier ORDER BY 2 DESC; |

The remainder of Vadim’s queries use subqueries in the FROM clause, which Shard-Query does not yet add parallelism to. I plan to add support for those queries though, and I’ll post a follow-up when I do.

— Full Disclosure —

This is a text file containing the information.

I’d love to see more of this kind of work, because it fits my typical use cases quite well. I’d dearly love to see it work on non partitioned tables as well, although I’m aware that’s a more difficult problem.

There are plenty of corner cases that sound like they might be low hanging fruit:

select count(*) from something where value = “dog” or value=”cat” or value=”pony”

Could conceivable be done with 3 parallel index dives.

It does support IN, so value IN(‘dog’,’cat’,’pony’) will work as well.

I have yet to support UNION and UNION ALL statements which I will be adding support for at the same time as subqueries in the from clause. Once I add that capability You could rewrite the OR clause to use UNION.

It will work fine without the partitioning as long as your system has enough resources to answer queries in parallel. I mostly used them to increase the speed of loading the data.

I’ve checked support for UNION, UNION ALL, and subqueries in the FROM clause into SVN.

If you check it out, please report any bugs you encounter so that I can improve the tool.

If you can not rewrite your queries to use one of these other constructs, then I might add support for disjunctions in the WHERE clause at a later date. However, the usual way to rewrite such a query is to use UNION, and so I think it suffices here.

Just make sure to monitor your gearman worker count to scale your parallelism.

Hi,

This is a simple test, so there is no real management of the Gearman workers. This is something that I’ve been thinking about recently. I’ve been thinking of structuring the database into three different types of nodes:

storage nodes – One or more MySQL MMM pairs, each with one or more replicas for massive read scale-out, many high frequency cores and extensive local disk bandwidth and extensive memory for buffer pool

worker node(s) – One more more boxes with many lower frequency cores and limited local disk bandwidth and memory

directory node – MySQL MMM pair with shard directory and “shared tables”, significant ram and network bandwidth, many cores, limited local disk bandwidth

Depending on the workload, it is likely possible to forgo the worker nodes, and just use gearman workers on the other nodes.

There should probably be a gearmand running on at least the directory and each worker server. Shard-Query doesn’t currently retry failed queries, and simply returns the remainder of the results without including the broken query (if possible). Eventually, it should retry on a list of VIPS for the storage node, in order to gracefully handle node failure without having to restart the entire distributed query.

Justin,

Got it. You may consider using mysqlnd’s async functionality as well… might remove some structuring complication.

You can see the approximate performance of using mysqlnd by using the ‘fetch’ method instead of store. The problem with using mysqlnd for async is that collecting the results from each server is still single threaded. If you have 32 queries each running independently, each can insert into the “worker” table independently, increasing parallelism.

This also, btw, allows an important optimization for union, but one I have not implemented yet. Right now, the de-duplication is done with a SELECT DISTINCT … over the worker table, after each worker has completed. In the future, there will be a PRIMARY KEY which covers all the attributes projected by the query. Each worker will insert with INSERT IGNORE … resulting in increased parallelism for the de-duplication.

I should clarify that all of the queries will of course run in parallel, but collecting the results for each of the queries is serialized using mysqlnd. Shard-Query (using the store method) executes the queries in parallel, and they insert in parallel. The ‘fetch’ method, on the other hand, runs the queries in parallel, but processes the results from each query serially. It is consistently slower than the ‘store’ method, but the fetch method is more convenient in some cases for easier processing of the results from each query.

That’s correct, result sets are fetched from mysqlnd serially, which definitely is slower, but the parallel query execution speed is a benefit. I’m not familiar with your gearman implementation, but doesn’t fetching data/response from each gearman worker also have to happen in series?

Hi,

When using the ‘fetch’ method, a TEMPORARY table is used, and each query registers results which are inserted into the TEMPORARY table serially.

When using the ‘store’ method, a CONCRETE table is used, which is visible to multiple sessions. The table is created with CREATE TABLE IF NOT EXISTS, so the first query to register results will create the table, and the rest will use it for storage. This allows every worker to insert in parallel, which is more efficient.

The workers using the store method call the callback after they have completed inserting. Once all the workers have called their callback, then the result is aggregated over the rows in the table, and then the table is dropped.

This allows the possibility for some leftover tables if something bad happens during query processing, but this is still a work-in-progress.

Though I suppose mysqlnd could be used in the same fashion.

It might also make it easier to manage the number of concurrent workers for a single query.

I’ll definitely think about it.

Hi Vadim,

Just a penny. In http://www.mysqlperformanceblog.com/2009/10/02/analyzing-air-traffic-performance-with-infobright-and-monetdb/ and http://homepages.cwi.nl/~mk/ontimeReport MonetDB was evaluated. I re-ran the queries posed here

on the latest version using a no-shard version. Talking about low hanging fruit… ;).

I get a 6.5 sec cold and 1.sec response on a no-shard

for a 21 year interval on first query

Q1 0.5 sec (0.8 sec cold)

Q2 2.7 sec (3 sec cold)

Q3 4.1 sec (5.7 sec cold)

Q4 1.4 sec (1.5 sec cold)

Hi Justin,

how hard is it / would it be to integrate cluster-db / shard-query with mondrian for doing olap cubes?

Also, how hard would it be to modify shard-query to work with sqlite3 db’s vs. mysql. I’m thinking of using individual sqlite3 dbs running on a variety of platforms and doing queries against sqlite3 using cluster-db..

The load/performance for doing sqlite would be ok if i partition the tables often enough, since queries would be read-only, and the benefit would be to be able to run on platforms like tablets etc. along with standard machines. This could handle scaleout issues much easier (i think) than using a central infinidb with mondrian, since load could grow with machines much more easily. That’s one of the great things i see with your work here with shard-query.

Hi Matt,

I’m working on a MySQL proxy interface to shard-query. Once this is completed, then you will be able to point Mondrian at it like any other MySQL data source. Combine scale-out with Shard-Query with aggregate tables from Flexviews and you can crunch numbers like the dickens, as they say. 🙂

It would not be incredibly difficult to change the database engine which Shard-Query uses.

Shard-Query extends the MySQLDAL class that is based on the SimpleDAL interface in the include/ directory. You would need to create a class (like include/mysql.php) which implements the interface and provides functions for the necessary calls. There are probably some rough edges in this stuff, but I would be happy to help in any way. I’d certainly appreciate the input on how to make it more “pluggable”.

I’d love to see how this compares to Oracle’s parallel query. In theory having the parallelism inside the RDBMS implementation should give better results because all concurrent queries are (or at least could be) aware of each other and the planner can dynamically decide how to partition the parallel query.

So as Jan 2013, is this still happening with MySQL? or it has an optimizer now?

BTW, great article, thanks for sharing the results.

Marcos,

MySQL still doesn’t have any parallel query capabilities.

I’m giving a talk on Shard-Query later this week at FOSDEM in Belgium and again at PLMCE in April.

Shard-Query now automatically uses partitions for parallelism and has just about complete coverage of the SELECT and most other DML statements as well as DDL and the issues and suggestions made in previous posts are addressed.

–Justin