This is my last post in the series on FlashCache testing with the cache placed on an Intel SSD card.

This time I am using a tpcc-like workload with 1000 warehouses (about 100GB of data) on a Dell PowerEdge R900 with 32GB of RAM, 22GB of which is allocated to the buffer pool. I put 70GB on the FlashCache partition, simply to test the case where the data does not fit into the cache partition.

Please note that in this configuration the benchmark is very write-intensive, and it is not going to be easy for FlashCache: in the background it has to write blocks to the RAID anyway, so the final write rate is limited by the RAID. All performance benefits will therefore come from read hits.

The full report and results are available on the benchmark wiki:

https://www.percona.com/docs/wiki/benchmark:flashcache:tpcc:start

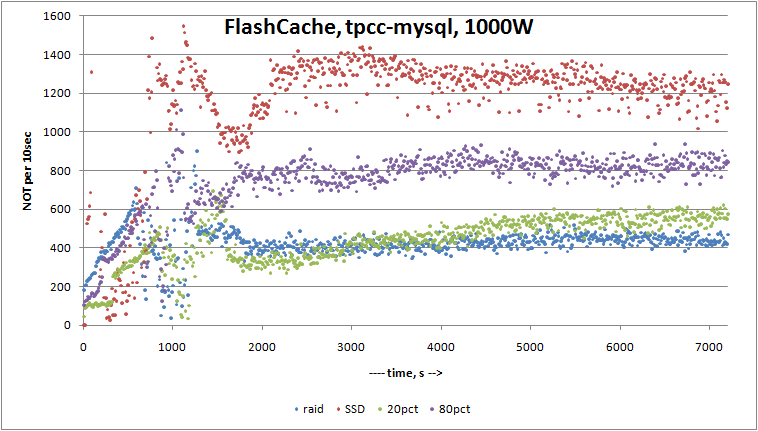

Short version of results are on graph:

In summary, the final results are:

- RAID: 2556.592 TpmC
- Intel SSD X25-M: 7084.483 TpmC
- FlashCache with 20% dirty pages: 2632.892 TpmC
- FlashCache with 80% dirty pages: 4468.883 TpmC

So with 20% dirty pages the benefit is really miserable, which is quite explainable (see the note above about the write-intensive workload), but the graph also suggests that 2 hours was probably not enough to warm up FlashCache fully.

And this is an interesting problem I see with FlashCache. Warming it up by simply copying files does not work (you need O_DIRECT with the proper block size), so in this case you rely only on InnoDB, and it takes 30+ minutes to fill FlashCache. The solution would be PRELOAD TABLE / INDEX, which is on our roadmap for XtraDB.
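The post only says that warming the cache needs O_DIRECT reads with the proper block size. A minimal Python sketch of such a pre-read follows. It assumes Linux (where `os.O_DIRECT` exists), that the files sit on a filesystem mounted on top of the FlashCache device, that a read miss populates the cache, and that a 4096-byte request matches the cache block size; the function name `warm_file` is hypothetical and this is not a FlashCache tool. An anonymous `mmap` serves as the read buffer because it is page-aligned, and O_DIRECT requires an aligned buffer.

```python
import mmap
import os
import sys


def warm_file(path, block_size=4096, direct=True):
    """Read `path` sequentially in `block_size` chunks; return bytes read.

    Assumption: block_size (4096 by default) matches the FlashCache block size.
    With direct=True the reads bypass the page cache via O_DIRECT (Linux only).
    """
    flags = os.O_RDONLY
    if direct:
        flags |= os.O_DIRECT  # skip the page cache so requests reach the device
    fd = os.open(path, flags)
    buf = mmap.mmap(-1, block_size)  # anonymous mapping: page-aligned buffer
    total = 0
    try:
        while True:
            n = os.readv(fd, [buf])
            total += n
            if n < block_size:  # short read on a regular file means end of file
                break
    finally:
        buf.close()
        os.close(fd)
    return total


if __name__ == "__main__":
    # Hypothetical usage: pass the InnoDB data files to pre-read as arguments.
    for p in sys.argv[1:]:
        print(p, warm_file(p))
```

Reading the files through the page cache (as `cp` does) is exactly what this avoids; whether it fully warms the cache for a given FlashCache setup is an assumption to verify.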

With 80% dirty pages the performance gain is 1.7x, which is pretty decent given that you can get it for $500 (the price of an Intel X25-M card), at least for this particular workload; your experience may vary!
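The gains above are ratios of the summary TpmC numbers against the RAID baseline. This small Python snippet just recomputes them from the figures in the post. The 80% dirty-page run works out to about 1.75x, which the text gives as 1.7x.

```python
# TpmC figures copied from the summary list above.
RESULTS_TPMC = {
    "RAID": 2556.592,
    "Intel SSD X25-M": 7084.483,
    "FlashCache, 20% dirty pages": 2632.892,
    "FlashCache, 80% dirty pages": 4468.883,
}


def speedup_vs_raid(results):
    """Return each configuration's throughput relative to the RAID baseline."""
    base = results["RAID"]
    return {name: tpmc / base for name, tpmc in results.items()}


if __name__ == "__main__":
    for name, ratio in speedup_vs_raid(RESULTS_TPMC).items():
        print(f"{name:30s} {ratio:.2f}x")
```

This prints 1.00x for RAID, 2.77x for the X25-M, 1.03x for FlashCache with 20% dirty pages and 1.75x with 80% dirty pages.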

At this stage I consider FlashCache pretty stable and ready for evaluation on real workloads (kudos to the Facebook team, they provided a really stable release).

I actually ran a pretty nasty test: I turned off power to the SSD drive in the middle of a tpcc-mysql run (just the SSD power, not the whole server). Unsurprisingly, FlashCache complained about failed writes, and after that I restarted the whole system. I expected the database to be trashed, but after the restart FlashCache was able to attach the previous cache, and MySQL was able to start and finish crash recovery. I am impressed.

In my next round I plan to run similar benchmarks on a FusionIO card.

P.S. If the CentOS team reads this post: please change the default IO scheduler from CFQ to Deadline. Seriously, CFQ does so much damage to performance on servers with IO-intensive workloads that switching should be the first action after a CentOS installation. And I doubt there is much usage of CentOS on desktop systems anyway.

Forgot to switch a newly installed Varnish machine full of SSDs to deadline, and performance was astoundingly bad with this workload. I captured a CPU usage graph; it is pretty easy to pick out the before and after: http://twitpic.com/1qqtjz

Are you certain about switching the default scheduler for CentOS to deadline? I’ve heard it is not a silver bullet, and after googling I found this page: http://www.redhat.com/magazine/008jun05/features/schedulers/

It looks like the deadline IO scheduler slows down read/write transactions while improving sequential read performance.

Vadim,

The numbers look kind of low to me; probably all cases were very much CPU-bound, even the X25-M one. I think it would be more realistic to use something like FusionIO as a cache; otherwise your system would not be balanced for peak price/performance. I get your point, though, that even a very simple $500 flash drive can be handy as a cache to supplement an 8-drive RAID array.

FractalizeR,

Sure, it is not a silver bullet, but on systems that allow many outstanding IO requests (RAID10, SSD), CFQ just dies compared to Deadline in OLTP workloads.

See for example http://www.mysqlperformanceblog.com/2009/01/30/linux-schedulers-in-tpcc-like-benchmark/

We also have a bunch of customer cases where a performance problem was healed by just one command:

`echo deadline > /sys/block/sda/queue/scheduler`
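To check or change more than one disk, a small Python helper can work with the same sysfs file. This sketch assumes a Linux kernel that lists the available schedulers in `/sys/block/<dev>/queue/scheduler` with the active one in brackets (e.g. `noop anticipatory deadline [cfq]`); the helper names are hypothetical. Writing the file needs root and, like the `echo` command, the change does not survive a reboot.

```python
from pathlib import Path

SYS_BLOCK = Path("/sys/block")


def active_scheduler(sysfs_text):
    """Return the bracketed entry, e.g. 'noop deadline [cfq]' -> 'cfq'."""
    for token in sysfs_text.split():
        if token.startswith("[") and token.endswith("]"):
            return token[1:-1]
    raise ValueError(f"no active scheduler in {sysfs_text!r}")


def available_schedulers(sysfs_text):
    """List every scheduler name in the file, with brackets removed."""
    return [token.strip("[]") for token in sysfs_text.split()]


def set_scheduler(device, name):
    """Same effect as: echo <name> > /sys/block/<device>/queue/scheduler (root only)."""
    path = SYS_BLOCK / device / "queue" / "scheduler"
    if name not in available_schedulers(path.read_text()):
        raise ValueError(f"{name!r} is not available for {device}")
    path.write_text(name)


if __name__ == "__main__":
    # Print the active scheduler of every block device that exposes one.
    if SYS_BLOCK.is_dir():
        for dev in sorted(SYS_BLOCK.iterdir()):
            try:
                print(dev.name, active_scheduler((dev / "queue" / "scheduler").read_text()))
            except (OSError, ValueError):
                pass
```

For example, `set_scheduler("sda", "deadline")` run as root mirrors the one-liner above.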

So, can deadline actually slow down some systems or not? I mean, you recommended that the CentOS guys change the default scheduler to it. Will that really be good for ALL installations, or only for some? Changing the default OS IO scheduler is a big decision 😉 I am also just trying to figure out whether I should do that on my servers, although they are not IO-intensive.

FractalizeR,

As I understand it, Ubuntu uses deadline by default and nothing bad has happened 🙂

So I do not see a big problem with switching CentOS distributions to it as well.

Aha, now I see 😉 Thanks 😉

Vadim,

– In your test did you use a BBU for the RAID 10? Any BBU for the SSD?

– If I put my data on an SSD, does it make much difference whether I use a BBU or not?

– Any reason why you recommend deadline instead of noop?

Andy,

There is a BBU on the RAID, but no BBU on the SSD; it is directly attached and has its write cache enabled.

I did not test the SSD with a BBU, so I can’t provide good feedback there.

Disabling the write cache on the SSD really hurts write performance a lot.

The reason I recommend deadline is that it shows almost the same results as noop on my RAID system, but noop really makes sense only if your IO subsystem can manage IO requests properly (the Perc6/i does that); otherwise it is better to delegate this work to the OS (the deadline scheduler).

This is interesting. Just yesterday I noticed that I/O is really bottlenecking our system, and I am looking into a pair of Intel SSDs, the SLC version, since our dedicated server host offers them at a decent (monthly) price. I was going to use one of them for our Sphinx indexes and have been debating whether to use the second one for FlashCache or to house MySQL directly. Our data set is about 30GB, but we currently have only 12GB of memory on the machine. It looks like using the second drive as a cache might be the way to go. Was the setup difficult on CentOS? More reading for me 🙂

Vadim,

Thanks for the benchmark!

If we write something like 1TB of data to FlashCache, once the SSD is full, does write performance drop below 300 IOPS, since no TRIM is available in FlashCache?

Thanks!

Tom

Tom,

Yes, I think write performance will be problematic with a full SSD, but I did not test that specifically.

Vadim,

Thanks for the input!

Do you think it only makes sense to use an expensive SSD or flash drive, such as the X25-E or a FusionIO drive, with FlashCache, because of the SSD write performance limitation?

Would an MLC-based SSD be problematic as a FlashCache server cache once the cache becomes full? In other words, in a real production environment, should we always spend big to get an SLC SSD?

Thanks,

Tom

Tom,

It is hard to say. If you have a very write-intensive workload, FlashCache may not work as expected in any case.

For FusionIO you may look into their DirectCache product instead of FlashCache.

Virident tachIOn cards (only SLC for now) show acceptable performance with a full disk, so they may work better with FlashCache.

What is there to TRIM during this test? There are lots of old block versions to collect, but the flash/ssd device will already know that. Are any files being deleted?

Is the tpcc-mysql workload uniform (all rows or disk pages equally likely to be used)? If yes, then flashcache mostly acts like a huge write cache and might not be worth the extra cost. But many workloads are highly skewed in production and for that case flashcache can make a big difference.
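Mark's point about uniform versus skewed access can be illustrated with a toy simulation. The Python sketch below makes loud simplifying assumptions: a plain LRU cache holding 70% of the pages (roughly the 70GB cache versus 100GB data ratio in the post), a uniform access pattern, and a hypothetical 80/20 skew where 80% of requests go to 20% of the pages. FlashCache's own replacement policy is not modeled, and the numbers say nothing about tpcc-mysql itself.

```python
import random
from collections import OrderedDict


def lru_hit_rate(accesses, capacity, warmup=0):
    """Hit rate of an LRU cache over `accesses`, ignoring the first `warmup` requests."""
    cache = OrderedDict()
    hits = counted = 0
    for i, page in enumerate(accesses):
        hit = page in cache
        if hit:
            cache.move_to_end(page)  # mark as most recently used
        else:
            cache[page] = True
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict the least recently used page
        if i >= warmup:
            counted += 1
            hits += hit
    return hits / counted


def uniform_accesses(n_pages, n, rng):
    """Every page is equally likely to be requested."""
    return [rng.randrange(n_pages) for _ in range(n)]


def skewed_accesses(n_pages, n, rng, hot_fraction=0.2, hot_share=0.8):
    """hot_share of requests go to the first hot_fraction of pages (hypothetical skew)."""
    hot = int(n_pages * hot_fraction)
    return [
        rng.randrange(hot) if rng.random() < hot_share else rng.randrange(hot, n_pages)
        for _ in range(n)
    ]


def compare(n_pages=1000, capacity=700, n=100_000, warmup=10_000, seed=42):
    """Return (uniform hit rate, skewed hit rate) for the same cache size."""
    rng = random.Random(seed)
    uniform = lru_hit_rate(uniform_accesses(n_pages, n, rng), capacity, warmup)
    skewed = lru_hit_rate(skewed_accesses(n_pages, n, rng), capacity, warmup)
    return uniform, skewed


if __name__ == "__main__":
    u, s = compare()
    print(f"uniform: {u:.2f}  skewed: {s:.2f}")
```

With uniform access the hit rate settles near the cache-to-data ratio (about 0.7), while the skewed pattern gets noticeably more hits from the same cache, which is the situation where a read cache pays off most.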

Mark,

TRIM probably won’t help there.

What I mean is that we can expect general performance degradation due to the fact that the card will be completely filled.
