Peter took a look at Redis some time ago, and now, with the impending 1.2 release and a slew of new features, I thought it was time to look again.

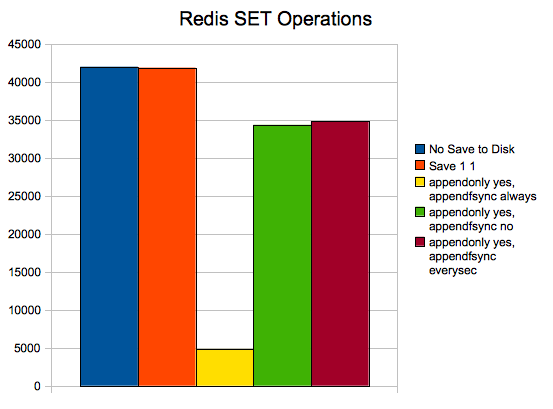

One of the more interesting features in 1.2 is the ability to operate in “append-only file persistence mode”, meaning Redis has graduated from a semi-persistent to a fully persistent system! Using the included redis-benchmark tool, I ran the following command in five modes:

```
./redis-benchmark
```

1 – In-Memory

I set “save 900000000 900000000” so nothing would be written to disk during the tests.

2 – Semi-Persistent

I set “save 1 1” so that changes would be flushed to disk every second (assuming there was at least one change the previous second).

3 – Fully Persistent

I set appendonly yes and appendfsync always, which calls an fsync for every operation.

4 – Semi-Persistent

I set appendonly yes and appendfsync no, which is about as persistent as MyISAM. An fsync is never explicitly called; instead, we rely on the OS to flush the data to disk.

5 – Semi-Persistent

I set appendonly yes and appendfsync everysec, which explicitly calls an fsync every second.
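To make the three appendfsync settings in modes 3 to 5 concrete, here is a minimal Python sketch of an append-only log that applies each policy. It is a toy model, not Redis's C implementation: the `AppendOnlyLog` class and its methods are hypothetical, and the `everysec` check simply runs on each append, which may differ from how Redis schedules its own fsyncs.

```python
import os
import tempfile
import time


class AppendOnlyLog:
    """Toy append-only log mirroring the three appendfsync policies.

    Hypothetical class for illustration; Redis implements this in C.
    """

    POLICIES = ("always", "everysec", "no")

    def __init__(self, path: str, policy: str = "everysec"):
        if policy not in self.POLICIES:
            raise ValueError(f"unknown appendfsync policy: {policy!r}")
        self.policy = policy
        self.fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_APPEND, 0o644)
        self.last_fsync = time.monotonic()
        self.fsync_calls = 0

    def append(self, command: bytes) -> None:
        # os.write hands the bytes to the OS page cache; they are not yet on disk.
        os.write(self.fd, command)
        if self.policy == "always":
            # Durable before the client is answered, at the cost of one fsync per write.
            self._fsync()
        elif self.policy == "everysec":
            # At most about one second of acknowledged writes is at risk.
            if time.monotonic() - self.last_fsync >= 1.0:
                self._fsync()
        # "no": never fsync explicitly; the OS decides when to write back.

    def _fsync(self) -> None:
        os.fsync(self.fd)
        self.fsync_calls += 1
        self.last_fsync = time.monotonic()

    def close(self) -> None:
        os.close(self.fd)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        for policy in AppendOnlyLog.POLICIES:
            log = AppendOnlyLog(os.path.join(d, policy + ".aof"), policy)
            for i in range(200):
                log.append(b"SET key %d\r\n" % i)
            print(policy, "fsync calls:", log.fsync_calls)
            log.close()
```

With `always`, every append pays for a full fsync. With `everysec`, a tight loop of 200 small appends usually finishes well within a second and triggers no fsync at all. With `no`, the count stays at zero and durability depends entirely on when the OS writes back its page cache.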

(By default, redis-benchmark invokes 50 parallel clients, with a keep alive of 1, using a 3-byte payload.)
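The two save settings used for modes 1 and 2 rely on Redis's save-point rule: a `save <seconds> <changes>` line triggers a snapshot to dump.rdb once at least `<changes>` writes have accumulated and at least `<seconds>` seconds have passed since the last save. The function below is a hypothetical sketch of that check (using `>=` at the boundaries is an assumption), showing why the mode 1 setting never fires during a short benchmark.

```python
from typing import Iterable, Tuple

# One "save <seconds> <changes>" line from redis.conf.
SavePoint = Tuple[int, int]


def snapshot_due(save_points: Iterable[SavePoint],
                 seconds_since_last_save: float,
                 changes_since_last_save: int) -> bool:
    """Return True if any configured save point is satisfied.

    Hypothetical helper: a snapshot is due when at least `changes` writes have
    happened AND at least `seconds` seconds have elapsed since the last save.
    Using >= for both comparisons is an assumption about the boundary case.
    """
    return any(
        changes_since_last_save >= changes and seconds_since_last_save >= seconds
        for seconds, changes in save_points
    )


if __name__ == "__main__":
    # Mode 1: "save 900000000 900000000" cannot fire during a short benchmark.
    print(snapshot_due([(900_000_000, 900_000_000)], 60, 1_000_000))  # False
    # Mode 2: "save 1 1" fires once a second has passed with at least one write.
    print(snapshot_due([(1, 1)], 1.0, 1))  # True
    print(snapshot_due([(1, 1)], 1.0, 0))  # False
```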

I am quite surprised at the performance penalty here for full durability.

Aside from the numbers, there are a couple of other interesting things I noticed during the benchmarking (note that the full benchmark suite was run, not just SET):

– In case 2, the dump.rdb file was 40k, while in cases 3, 4, and 5, appendonly.aof was 1.9M. If you’re going to use appendonly in production, be sure to account for the additional storage requirements.
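A toy model helps explain the size gap: a snapshot stores each key's current value once, while an append-only log records every write command, including overwrites of the same key. The sketch below assumes a workload that rewrites a small, fixed set of keys many times, with a 3-byte value echoing the benchmark's default payload. The `simulate` function is hypothetical, and its byte counts are rough textual approximations, not the real dump.rdb or appendonly.aof encodings.

```python
from typing import Tuple


def simulate(num_writes: int, num_keys: int, value: bytes = b"xxx") -> Tuple[int, int]:
    """Return (log_bytes, snapshot_bytes) for a toy write workload.

    Hypothetical model: byte counts approximate a textual command log and a
    key/value dump; they are not the real appendonly.aof or dump.rdb formats.
    """
    log_bytes = 0
    state = {}
    for i in range(num_writes):
        key = b"key:%d" % (i % num_keys)
        # The append-only log records every command, including overwrites.
        log_bytes += len(b"SET %s %s\r\n" % (key, value))
        state[key] = value
    # The snapshot stores only the latest value of each key, once.
    snapshot_bytes = sum(len(k) + len(v) for k, v in state.items())
    return log_bytes, snapshot_bytes


if __name__ == "__main__":
    print(simulate(10_000, 100))  # (159000, 890)
    print(simulate(20_000, 100))  # (318000, 890)
```

Doubling the number of writes doubles the log but leaves the snapshot unchanged, which is consistent with the 40k versus 1.9M difference observed here. Real append-only files can also be compacted by rewriting them, which this toy ignores.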

– In every case where appendonly was enabled, I received the following error many times as the test was completing (note that this did not occur during the SET operations):

Error writing to client: Broken pipe

To be fair, this is RC, not GA.

* All data easily fit into memory during these tests.

* The FusionIO used was: 160 GB SLC ioDrive

* XFS was the filesystem

* redis version was a git clone at Thu Dec 10 10:41:50 PST 200


Hello Ryan,

Thanks for these tests! A few remarks:

– I think fsync set to “always” is so slow because Redis is single-threaded and fsync() is slow: it has to wait for the syscall to finish before it can return to serving other clients.

– Broken pipe should be harmless; it’s just a matter of how redis-benchmark works and timing issues. It should be possible to see the error even without persistence with more than 50 clients, even now that 1.2 supports epoll.

– It’s strange that on Linux against loopback you get only 40,000/s; it should be at least 100,000/s on an entry-level server.

Thanks again! I’m looking forward to other tests, given that the title “Round 1” makes me hope for a second round 😉

Cheers,

Salvatore

The numbers you got seem quite low indeed. Just yesterday I ran redis-benchmark on a Slicehost 1GB instance and got 30,000 SET/s with save 60 10000 and 24,000 SET/s with appendonly yes, appendfsync everysec.

Ryan,

Why did you test it on FusionIO? This does not look like a very good use case for it. Whether Redis is periodically dumping its memory or writing the append-only log, it does sequential writes, and SSDs in general are not cost-efficient in such cases. Spindles with a BBU cache in the RAID controller should do as well or even better (the 5,000 fsyncs/sec you seem to be getting is not impressive).
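A rate of 5,000 fsyncs/sec works out to about 200 µs per fsync. Since a single-threaded server running appendfsync always must finish each fsync before serving the next write, that per-fsync latency roughly caps write throughput. The following Python sketch measures the write-plus-fsync rate of whatever filesystem a given directory lives on, so the same test could be pointed at a FusionIO mount or a RAID volume with a BBU cache. The `measure_fsync_rate` helper is hypothetical and its payload is an arbitrary choice.

```python
import os
import tempfile
import time


def measure_fsync_rate(directory: str, iterations: int = 1000,
                       payload: bytes = b"SET foo bar\r\n") -> float:
    """Append `payload` and fsync after each write; return fsyncs per second.

    For a single-threaded server that fsyncs on every write (appendfsync always),
    this rate is roughly an upper bound on write throughput.
    """
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        for _ in range(iterations):
            os.write(fd, payload)  # small sequential append
            os.fsync(fd)           # block until the device reports it durable
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
        os.remove(path)
    return iterations / elapsed


if __name__ == "__main__":
    # Point this at the device under test (e.g. the FusionIO or RAID mount).
    rate = measure_fsync_rate(tempfile.gettempdir(), iterations=200)
    print(f"{rate:,.0f} fsyncs/sec, about {1e6 / rate:.1f} us per fsync")
```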

Also, I’m wondering whether Redis has “group commit” implemented in append-only mode: will it do a single fsync for several operations if multiple transactions are pending?
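Group commit, as described in the question, means paying for one fsync per batch of pending writes instead of one per write. This thread does not establish whether Redis 1.2 does this, so the following is a generic Python sketch of the technique, not Redis code. `GroupCommitLog`, `submit`, and `flush` are hypothetical names, and the event loop is modeled as rounds in which every connected client sends one command.

```python
import os
import tempfile
from typing import List


class GroupCommitLog:
    """Hypothetical group-commit log: one fsync per batch of pending writes.

    Models a single-threaded event loop in which commands received during one
    loop iteration are buffered, then written and fsynced together before any
    of those clients is answered.
    """

    def __init__(self, path: str):
        self.fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_APPEND, 0o644)
        self.pending: List[bytes] = []
        self.fsync_calls = 0

    def submit(self, command: bytes) -> None:
        # Buffered only: not durable yet, so the client must not be answered.
        self.pending.append(command)

    def flush(self) -> int:
        """Write the whole batch, fsync once, and return how many commands committed."""
        if not self.pending:
            return 0
        data = b"".join(self.pending)
        while data:  # os.write may write fewer bytes than requested
            written = os.write(self.fd, data)
            data = data[written:]
        os.fsync(self.fd)  # a single fsync covers every command in the batch
        self.fsync_calls += 1
        committed = len(self.pending)
        self.pending.clear()
        return committed  # these clients can now be sent their replies

    def close(self) -> None:
        self.flush()
        os.close(self.fd)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        log = GroupCommitLog(os.path.join(d, "group.aof"))
        committed = 0
        for loop_iteration in range(10):
            for client in range(50):  # 50 clients, redis-benchmark's default
                log.submit(b"SET key:%d %d\r\n" % (client, loop_iteration))
            committed += log.flush()
        log.close()
        print(committed, "commands,", log.fsync_calls, "fsyncs")  # 500 commands, 10 fsyncs
```

With 50 clients per round, 500 commands cost 10 fsyncs instead of 500. The trade-off is that each client waits until its whole batch is on disk before getting a reply, so per-request latency grows to roughly one fsync plus the time to write the batch.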

@peter

My hope was that, by using FusionIO, there would be a less pronounced penalty for appendonly yes / appendfsync always. This (obviously) turned out not to be the case.

@diego

I’ll do some testing later with “save 60 10000” and other parameters; the primary goal of this run was to look at the cost of appendonly yes / appendfsync always. And the 30,000 SET/s I got with fsync everysec isn’t *much* of an improvement over your 24k, but that was somewhat expected since, as Peter says, SSDs “are not cost efficient” for sequential writes.

@antirez

I thought the 40k on loopback was a bit low, but my real interest was the new appendonly yes/fsync always. There are more benchmarks I’m thinking about doing, I’m hoping “Round 2” will be next week!

— Ryan Lowe

Ryan,

This is interesting as it highlights a common misconception about what SSDs are fast for. SSDs (and I include FusionIO here) are fast for random reads and writes. For sequential reads, a single Intel SSD will have speed similar to a good hard drive (to some extent due to SATA interface limits), and FusionIO similar to a good RAID with a few hard drives. Writes are more expensive for SSDs, so for sequential writes standard hard drives are often the winners. It gets most interesting with small sequential writes followed by fsync() calls: the RAID controller has DRAM with a BBU, which has very low write latency, while SSDs, as far as I can see, write directly to flash, which is fast but slower than DRAM. I would expect fsync() speed to become very similar in the end, as nothing prevents FusionIO and others from adding DRAM backed by a battery or capacitors, but SSDs do not get an advantage over legacy hard drives here.

Hello,

I think SSDs will make a difference for Redis once we have Virtual Memory implemented, since Redis will likely access the swap file randomly.

Antirez,

Right. As soon as Redis gets disk-based storage, SSDs will make a big difference.