I guess the first reaction to a new storage engine is "show me the benefits". So here is a benchmark I ran on one of our servers: a Dell 2950 with 8 CPU cores, RAID10 on 6 disks with BBU, and 32GB of RAM, running CentOS 5.2. This is quite a typical server that we recommend for running MySQL. Importantly, I used the Noop IO scheduler instead of the default CFQ.

Disclaimer: Please note that you may not get similar benefits on less powerful servers, as the most important fixes in XtraDB are related to multi-core and multi-disk utilization. Results may also be different if the load is CPU bound.

I compared three MySQL 5.1.30 trees: MySQL 5.1.30 with standard InnoDB, MySQL 5.1.30 with InnoDB-plugin-1.0.2, and MySQL 5.1.30 with XtraDB (all plugins statically compiled into MySQL).

For the benchmarks I used scripts that emulate a TPCC load, with a data size of 40W (40 warehouses, about 4GB) and 20 client connections. Please note that I used innodb_buffer_pool_size = 2G and innodb_flush_method=O_DIRECT to emulate an IO bound load.

InnoDB parameters:

    innodb_additional_mem_pool_size = 16M
    innodb_buffer_pool_size = 2G
    innodb_data_file_path = ibdata1:10M:autoextend
    innodb_file_io_threads = 4
    innodb_thread_concurrency = 16
    innodb_flush_log_at_trx_commit = 1
    innodb_log_buffer_size = 8M
    innodb_log_file_size = 256M
    innodb_log_files_in_group = 3
    innodb_max_dirty_pages_pct = 90
    innodb_flush_method=O_DIRECT
    innodb_file_per_table = 1

And for XtraDB I additionally used:

    innodb_io_capacity = 10000
    innodb_adaptive_checkpoint = 1
    innodb_write_io_threads = 16
    innodb_read_io_threads = 16
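When switching between the three builds it is easy to lose track of which settings a given config file actually carries. Here is a minimal sketch of a helper that reads one variable back from an option file. The function name `cnf_value` is hypothetical and not part of MySQL or XtraDB. It assumes a plain `name = value` layout and takes the last occurrence if a variable appears more than once. It ignores option-file sections and included files.

```shell
#!/bin/sh
# Hypothetical helper: print the value assigned to a variable in a
# my.cnf-style file. Assumes "name = value" lines; if the variable
# appears more than once, the last occurrence is printed.
cnf_value() {
    # $1 = variable name, $2 = path to the option file
    sed -n "s/^[[:space:]]*$1[[:space:]]*=[[:space:]]*//p" "$2" | tail -n 1
}
```

For example, `cnf_value innodb_io_capacity /etc/my.cnf` would print the configured value, or nothing if the variable is not set in that file (the path is only an example).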

So what is the result?

The result is in NOTPM (New Order Transactions Per Minute); more is better. As you can see, XtraDB is about 1.5x better than InnoDB in standard 5.1.30, and better by an even wider margin than InnoDB-plugin-1.0.2.

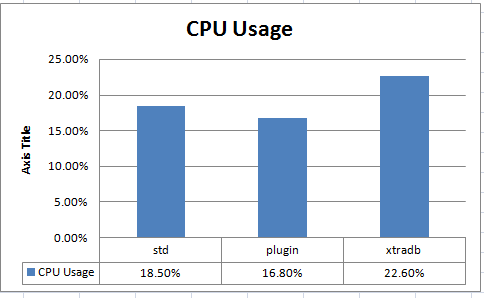

And here is the CPU utilization for all tested engines:

As you can see, XtraDB also utilizes the CPUs better.

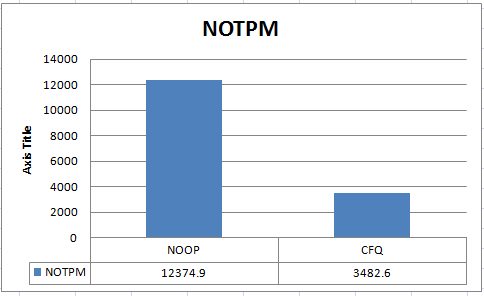

Finally, let me show you why I chose the NOOP IO scheduler instead of CFQ. Here are the results for XtraDB with both:

A 4x difference is simply huge. This is important to remember, as Linux 2.6.18+ kernels (which are used on CentOS / RedHat 5.2) come with the CFQ scheduler as the default.

So

    echo 'noop' > /sys/block/sda/queue/scheduler

should be one of the first things to do on a new server (of course, you also need to change the kernel startup parameter to make it automatic after reboot).
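Before changing anything, it helps to see which scheduler each device is currently using. The kernel marks the active scheduler in square brackets in the `scheduler` file, for example `noop anticipatory deadline [cfq]`. Here is a minimal sketch with two helper functions whose names are my own, not part of any tool. For the reboot part, the boot parameter on kernels of this generation is, as far as I know, `elevator=noop` on the kernel line, but please check that against your distribution's documentation.

```shell
#!/bin/sh
# Print the active I/O scheduler given a scheduler file such as
# /sys/block/sda/queue/scheduler. The active entry is the one the
# kernel wraps in square brackets.
active_scheduler() {
    sed -n 's/.*\[\([^]]*\)\].*/\1/p' "$1"
}

# Print "<device>: <active scheduler>" for every block device that
# exposes a readable scheduler file.
list_schedulers() {
    for f in /sys/block/*/queue/scheduler; do
        [ -r "$f" ] || continue
        dev=${f#/sys/block/}
        dev=${dev%%/*}
        printf '%s: %s\n' "$dev" "$(active_scheduler "$f")"
    done
}
```

Running `list_schedulers` before and after the `echo` above shows whether the change took effect on the device you expected.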

Good news! That is a huge benefit – just changing the plugin… 🙂

Great,

have you seen similar performance improvements (concerning the noop scheduler) for the standard InnoDB?

Is there a specific reason for having 3 logfiles in the group instead of the default 2?

Interesting new data about the scheduler performance. I believe deadline scheduler was always the way to go for typical MySQL workload.

Have you tested it against the noop scheduler?

Very interesting data, and your hardware sounds quite like the hardware we’re just setting up for a new db server.

What filesystem and RAID-related settings have you used in the test setup? Are you using hardware RAID, or is it software only?

Rick

Looks very well !

Any chance to do same benchmark (same hardware) with FreeBSD 7.1 ?

Good stuff, a note on noop, depending on the hardware I have seen mixed results with this. For instance certain drives/controllers do better with noop, and others worse… i have even seen boosts in performance using deadline. It all seems to be very hardware dependent.

Hi,

One thing I wanted to add if you’re looking for similar gains with MySQL 5.0 you can use Percona Patches https://www.percona.com/percona-lab.html.

You can see these benchmark results here https://www.percona.com/files/presentations/percona-patches-opensql-2008.pdf

Matt,

Indeed, schedulers are workload specific and hardware specific, and we will write more about it. In this case we simply showed that we had to change the scheduler from the default to get a very significant performance increase.

The problem with schedulers at the OS level is that they do not know a lot about the underlying hardware, often assuming it is a single drive you're dealing with and optimizing for seeks etc. If it is a RAID with a large number of hard drives, or an SSD, the assumptions IO schedulers operate under are just wrong. NOOP reduces extra kernel load and just lets the hardware do its magic. That does not always make it the best choice, though; the gain can also be a side effect.

Another couple of notes here

1) Even though Vadim called it an IO bound benchmark, that is not completely the case. With the data just 2x the memory size, this is a mixed benchmark which loads both the CPU and the IO subsystem intensively, so it depends on IO performance but also on contention issues. It would be interesting to see results for completely CPU bound and completely IO bound benchmarks too. I would guess that at this data size TPC-C is write-dominated… which is different from what a lot of people have in reality.

2) The "emulated" IO workload, created by a small buffer pool size and innodb_flush_method=O_DIRECT, is close to but not always the same as a real IO workload, because there is the OS cache, which is bypassed for data but not for logs. When the logs can be completely cached in memory, this can improve log write performance a bit.

I’ve been using deadline on my servers, and found it to have significant benefits (along the same order as noop in the above benchmark). Why noop instead of deadline?

Just a note if you want to use TPCC scripts you can get them from your perconatools project on Launchpad, https://launchpad.net/perconatools

Nils,

No, there is no specific requirement to have 3 InnoDB log files.

rtfm,

We have no plans to run FreeBSD 7.1 on this hardware anytime soon.

Vadim, benchmarks of XtraDB on FreeBSD 7.0 or 7.1 would be very, very, very interesting 🙂

Can you publish any conditions which can motivate your team to do this?

mkr,

Basically we need time :). Besides that, we just have no FreeBSD on board, so again we need time to install it. That is not very convenient for us, as the current servers are in a semi-production stage, and re-installing them back and forth is not a good idea.

If someone could give us an equivalent Dell 2950 server with FreeBSD installed, we could run it there.

What’s the y-axis in the last graph?

If you’re saying noop is 4x faster it probably depends on the RAID controller doing its own IO scheduling and CFQ confusing it…

In our benchmarks noop always loses (obviously).

Though on SSD it was faster.

Vadim, I can make a donation (paypal or webmoney) which will help you buy one more HDD, so you'll be able to boot FreeBSD on the same server to run these and future benchmarks. These benchmarks (and Percona MySQL/plugins builds) are important for the FreeBSD community, I hope %)

Vadim.

I would be willing to donate some money (via paypal) to purchase a second hard drive to run these benchmarks on AmigaOS.

They are important to our community 😛

Kevin,

Y-axis is NOTPM – New Order Transaction Per Minute, more is better.

Sure, you are right, best scheduler depends on hardware. MegaRAID on Dell boxes seems quite smart here.

What controller did you test this on? Just curious

mrk,

regarding benchmarks: I think we need 6 disks there, one is not enough :). Also, the server really lives in a data center and goes into production really soon. But point taken, we will try to organize things, and I appreciate your interest.

Please send me your contact details at vadim @ thisblogdomain.

Kevin,

Controller LSI MegaRAID (PERC6)

What about deadline vs cfq? Can we get more specifics about the Dell 2950? BIOS, PERC firmware version etc, disk model?

Very nice work. BTW, can you release official XtraDB binaries for Solaris 10 and OpenSolaris 2008.11? The move toward ZFS is inevitable and native Linux ZFS is probably 2 years away, and there will be more and more people running opensolaris on x86_64 boxes.

TS,

We will do that as soon as we see interest from customers, so far we saw OpenSolaris only in non-production environment.

I’d be very curious to see if anyone has performed any tests (w/ xtradb and noop scheduler) on machines utilizing the 3ware 9000 series controllers? The results are quite incredible and make me a bit trigger happy to try this on a few of our production db boxes.

Thanks,

I too would like to see Percona w/ XtraDB binaries for Solaris 10 🙂

Brad,

You already contacted us, I guess, so you can easily make it happen 🙂

Yeah, 64-bit Solaris/OpenSolaris binaries would be great 🙂