Following my previous posts on the py-tpcc benchmark for MongoDB, "Evaluating the Python TPCC MongoDB Benchmark" and "Evaluating MongoDB Under Python TPCC 1000W Workload", and the recent release of Percona Server for MongoDB 4.4, I wanted to compare 4.2 and 4.4 in similar scenarios.

For the client and server, I will use identical bare metal servers, connected via a 10Gb network.

The node specification:

    # Percona Toolkit System Summary Report ######################
            Date | 2020-09-14 16:52:46 UTC (local TZ: EDT -0400)
        Hostname | node3
          System | Supermicro; SYS-2028TP-HC0TR; v0123456789 (Other)
        Platform | Linux
         Release | Ubuntu 20.04.1 LTS (focal)
          Kernel | 5.4.0-42-generic
    Architecture | CPU = 64-bit, OS = 64-bit
    # Processor ##################################################
      Processors | physical = 2, cores = 28, virtual = 56, hyperthreading = yes
          Models | 56xIntel(R) Xeon(R) CPU E5-2683 v3 @ 2.00GHz
          Caches | 56x35840 KB
    # Memory #####################################################
           Total | 251.8G
      Swappiness | 0
     DirtyPolicy | 80, 5
     DirtyStatus | 0, 0

The drive I used for the storage in this benchmark is a Samsung SM863 SATA SSD.

For MongoDB I used:

The script to start Percona Server for MongoDB in Docker with memory limits:

    > bash startserver.sh 4.4 1
    === script startserver.sh ===
    docker run -d --name db$2 -m 50g \
        -v /mnt/data/psmdb$2-$1:/data/db \
        --net=host \
        percona/percona-server-mongodb:$1 --replSet "rs$2" --port $(( 27016 + $2 )) \
        --logpath /data/db/server1.log --slowms=10000 --wiredTigerCacheSizeGB=25

    sleep 10

    mongo mongodb://127.0.0.1:$(( 27016 + $2 )) --eval "rs.initiate( { _id : 'rs$2', members: [ { _id: 0, host: '172.16.0.3:$(( 27016 + $2 ))' } ] })"
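The script derives both the port and the replica set name from its second argument (the instance number). As a minimal sketch of that arithmetic, the helpers below compute the port and a connection string for a given instance. The function names `instance_port` and `instance_uri` are hypothetical and not part of the original script; only the base port 27016 and the `rs<N>` naming are taken from it.

```shell
#!/usr/bin/env bash
# Hypothetical helpers mirroring the port arithmetic in startserver.sh.
# BASE_PORT comes from the script; the function names are illustrative only.
BASE_PORT=27016

# Print the port used by instance number $1 (27016 + N).
instance_port() {
    echo $(( BASE_PORT + $1 ))
}

# Print a connection string for instance $1 on host $2 (default 127.0.0.1),
# naming the replica set rs<N> as the script does.
instance_uri() {
    local host=${2:-127.0.0.1}
    echo "mongodb://${host}:$(instance_port "$1")/?replicaSet=rs$1"
}

instance_port 1   # prints 27017
instance_uri 1    # prints mongodb://127.0.0.1:27017/?replicaSet=rs1
```

With instance number 1, as in the invocation above, the server listens on the default MongoDB port 27017.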

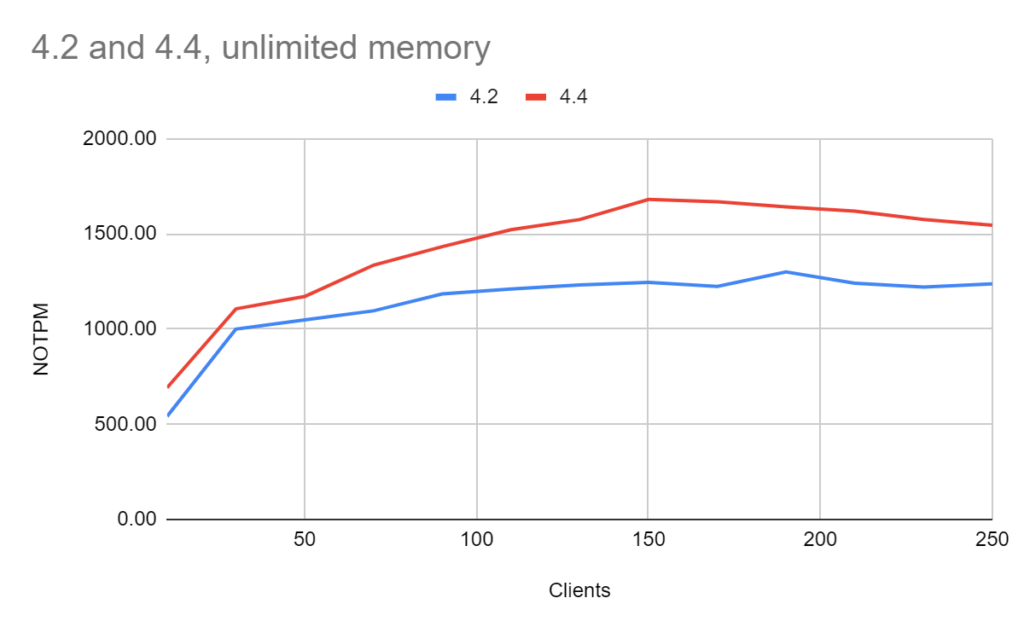

The results with unlimited memory, in New Order Transactions per Minute (NOTPM); more is better:

| Clients | 4.2 | 4.4 |
|---------|---------|---------|
| 10 | 541.31 | 691.89 |
| 30 | 999.89 | 1105.88 |
| 50 | 1048.50 | 1171.35 |
| 70 | 1095.72 | 1335.90 |
| 90 | 1184.38 | 1433.09 |
| 110 | 1210.18 | 1521.56 |
| 130 | 1231.38 | 1575.23 |
| 150 | 1245.31 | 1680.81 |
| 170 | 1224.13 | 1668.33 |
| 190 | 1300.11 | 1641.45 |
| 210 | 1240.86 | 1619.58 |
| 230 | 1220.89 | 1575.57 |
| 250 | 1237.86 | 1545.01 |
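To put the gap between the two versions in relative terms, a small helper can compute the percentage gain of 4.4 over 4.2 for any row of the table. This is only a sketch: the function name `pct_gain` is hypothetical, and it relies on `awk` for floating-point math because bash arithmetic is integer-only.

```shell
#!/usr/bin/env bash
# pct_gain BASE NEW: print how much NEW exceeds BASE, in percent (one decimal).
# Hypothetical helper; awk does the floating-point arithmetic.
pct_gain() {
    awk -v base="$1" -v new="$2" 'BEGIN { printf "%.1f\n", (new - base) / base * 100 }'
}

pct_gain 541.31 691.89     # 10 clients: prints 27.8
pct_gain 1245.31 1680.81   # 150 clients: prints 35.0
```

For the rows shown, 4.4 is ahead by roughly 28% at 10 clients and 35% at 150 clients.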

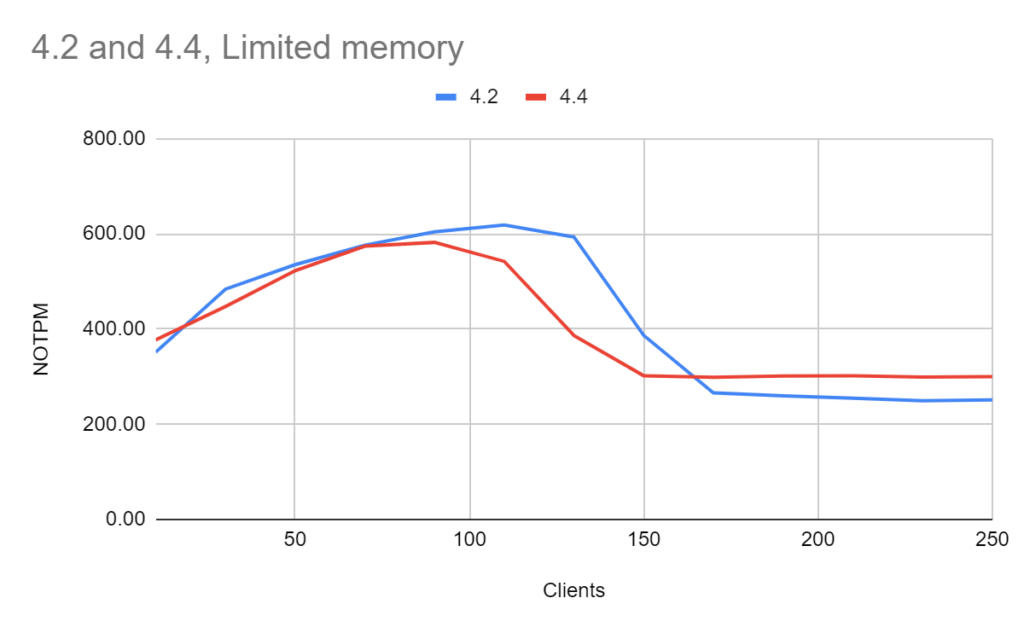

The results with limited memory (the 50GB container limit and 25GB WiredTiger cache from the script above), in New Order Transactions per Minute (NOTPM); more is better:

| Clients | 4.2 | 4.4 |
|---------|--------|--------|
| 10 | 351.45 | 377.29 |
| 30 | 483.88 | 447.22 |
| 50 | 535.34 | 522.59 |
| 70 | 576.30 | 574.14 |
| 90 | 604.49 | 582.10 |
| 110 | 618.59 | 542.11 |
| 130 | 593.31 | 386.33 |
| 150 | 386.67 | 301.75 |
| 170 | 265.91 | 298.80 |
| 190 | 259.56 | 301.38 |
| 210 | 254.57 | 301.88 |
| 230 | 249.47 | 299.15 |
| 250 | 251.03 | 300.00 |
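In this table throughput rises, peaks, and then collapses, so it helps to locate the concurrency level where each version peaks. The sketch below reads "clients NOTPM" pairs on standard input and prints the row with the highest NOTPM. The function name `peak_clients` is hypothetical, and the input is a subset of the 4.2 column above.

```shell
#!/usr/bin/env bash
# peak_clients: read "clients notpm" pairs on stdin and print the pair
# with the highest NOTPM. Hypothetical helper, not part of py-tpcc.
peak_clients() {
    awk 'NR == 1 || $2 + 0 > max { max = $2 + 0; clients = $1; notpm = $2 }
         END { print clients, notpm }'
}

# A few rows from the 4.2 column of the table above.
printf '%s\n' "90 604.49" "110 618.59" "130 593.31" "150 386.67" | peak_clients
# prints: 110 618.59
```

Run over the full columns, this gives a peak at 110 clients for 4.2 (618.59 NOTPM) and at 90 clients for 4.4 (582.10 NOTPM).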

I had planned to run more benchmarks on 4.4 vs 4.2, but some interesting behavior in 4.4 distracted me while I tried to understand the issue. I share the details in the post "MongoDB 4.4 Performance Regression: Overwhelmed by Memory".

Besides that, in my tests 4.4 outperformed 4.2 in the case of unlimited memory. I still want to look at the variation in throughput during the benchmark, so we are working on a py-tpcc version that reports data with 1-second resolution. I also want to re-evaluate how 4.4 performs in a long-running benchmark, as the current benchmark lasts only 900 seconds.

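In the case with limited memory, 4.4 performed about the same as or worse than 4.2 from 30 to 150 clients, with the largest gap between 110 and 150 clients. At 170 clients and above, 4.4 held around 300 NOTPM while 4.2 dropped to roughly 250 to 265 NOTPM.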

Neither version handled the increased number of clients well under limited memory: from 170 clients on, both delivered lower throughput than with only 10 clients, and 4.4 already did so at 150 clients.
