Problem Statement

Problem StatementHaving backups of binary logs is fairly normal these days. The more recent binary logs are copied offsite, the better RPO (Recovery Point Objective) can be achieved. I was asked multiple times recently if something could be done to “stream” the binary logs to S3 as close to real-time as possible. Unfortunately, there is no readily available solution that would do that. Here, I show what can be done and also show a proof of concept implementation, which is not suitable for production use.

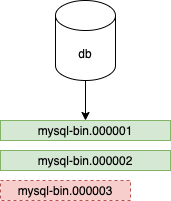

In this example, the instance has two binary log files (mysql-bin.000001 and mysql-bin.000002) already closed and mysql-bin.000003 being written. A trivial solution for backing up these binary log files would be to back up just the closed ones (the one that is not written). The default size of the binary log file is 1 GB. This means with this solution we would have a 1 GB binlog not backed up in the worst-case scenario. On average, we would have 500M binary logs not backed up. These numbers can be made better by lowering the max_binlog_size parameter, but that will lead to a slew of files and frequent rotation.

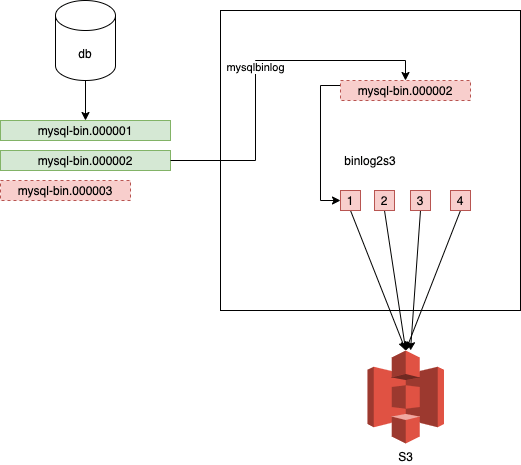

It is possible to upload files to S3 in chunks using multipart uploads. With this the file can be uploaded in chunks, the minimum chunk size is 5 MB. This means that a binlog can be read by another process while it’s written, and uploaded to S3 in 5 MB chunks. That’s definitely better than the 500 MB of the file copying or setting the max_binlog_size to 5M. Another thing that our backup solution could do is stream the binary logs to a remote location before it uploads them to S3.

The chunks are assembled together again on S3 when the multipart upload is finished.

The files produced by the mysqlbinlog command can be read and if we have 5M, they can be uploaded to S3. The last chunk of a multipart upload can be less than 5M. With this, it’s guaranteed that the file can be uploaded. The file is read in chunks while it’s written.

The flow of the proof of concept implementation is the following.

In this example, I have a server with two binlogs:

|

1 2 3 4 5 6 7 8 |

mysql> show binary logs; +------------------+-----------+-----------+ | Log_name | File_size | Encrypted | +------------------+-----------+-----------+ | mysql-bin.000001 | 105775625 | No | | mysql-bin.000002 | 85147151 | No | +------------------+-----------+-----------+ 2 rows in set (0.00 sec) |

The max_binlog_size is 100M for the sake of convenience.

|

1 2 3 4 5 |

$ binlog2s3 --binary /usr/local/bin/mysqlbinlog --hostname db1.172.17.17.12.nip.io --port 3306 --username repl --password repl --start-file mysql-bin.000001 --tempdir /Users/pboros/tmpdir --bucket_name pboros-binlogtest Waiting for binlog files to appear 2021-07-01 17:45:41.672730 Creating multipart uploader for mysql-bin.000001 2021-07-01 17:45:42.460344 Uploading part 1 for mysql-bin.000001 size 5242880 2021-07-01 17:45:51.465913 Uploading part 2 for mysql-bin.000001 size 5242880 |

The temporary directory has the binary logs:

|

1 2 3 4 5 6 |

$ ls -la total 372896 drwxr-xr-x 4 pboros staff 128 Jul 1 17:45 . drwxr-xr-x+ 73 pboros staff 2336 Jun 30 18:04 .. -rw-r----- 1 pboros staff 105256799 Jul 1 17:45 mysql-bin.000001 -rw-r----- 1 pboros staff 85663391 Jul 1 17:45 mysql-bin.000002 |

In this case, streaming the binary logs from the beginning is much faster than uploading them to S3 (because I am streaming from a virtual machine locally, and I am uploading to S3 on a home internet connection).

Soon enough the binlog will be uploaded:

|

1 2 3 4 5 6 7 |

2021-07-01 17:48:23.865630 Uploading part 19 for mysql-bin.000001 size 5242880 2021-07-01 17:48:33.350739 Uploading part 20 for mysql-bin.000001 size 5242880 2021-07-01 17:48:41.708166 Uploading part 21 for mysql-bin.000001 size 399199 2021-07-01 17:48:42.160303 Finishing multipart upload for mysql-bin.000001 2021-07-01 17:48:42.407308 Creating multipart uploader for mysql-bin.000002 2021-07-01 17:48:43.521756 Uploading part 1 for mysql-bin.000002 size 5242880 2021-07-01 17:48:52.517424 Uploading part 2 for mysql-bin.000002 size 5242880 |

Part 17 will be bigger because it has less than a 5M buffer from the time when there were new binary logs, and when new data became available. It could read an additional 5M on top of that.

|

1 2 3 4 5 6 7 |

$ ls -la total 593496 drwxr-xr-x 5 pboros staff 160 Jul 1 17:52 . drwxr-xr-x+ 73 pboros staff 2336 Jun 30 18:04 .. -rw-r----- 1 pboros staff 105267370 Jul 1 17:52 mysql-bin.000002 -rw-r----- 1 pboros staff 105255295 Jul 1 17:52 mysql-bin.000003 -rw-r----- 1 pboros staff 66061395 Jul 1 17:52 mysql-bin.000004 |

|

1 2 3 |

$ aws s3 ls s3://pboros-binlogtest/ 2021-07-01 17:45:43 105256799 mysql-bin.000001 2021-07-01 17:48:43 105267370 mysql-bin.000002 |

The uploaded parts are accessible with the S3 API (and they can be assembled to binlogs):

|

1 |

$ aws s3api list-multipart-uploads --bucket pboros-binlogtest |

The S3 bucket can have a policy to auto-delete not finished multipart uploads periodically (for example unfinished multipart uploads that are older than 7 days).

The proof of concept code is available at https://github.com/pboros/binlog2s3.

Resources

RELATED POSTS

Nice to see your post Peter. From China Guangjing Chen

Is this code working https://github.com/pboros/binlog2s3. ?, for me it always shows Waiting for binlog files to appear even though there are new log files avaialble