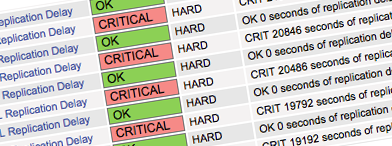

Working day to day with Percona Remote DBA customers, we have been facing an issue from time to time when MySQL replication lag is flapping between 0 and XXXXX constantly – i.e. Seconds_Behind_Master is 0 for a few secs, then it’s like 6287 or 25341, again 0 and so on. I would like to note the 5 different scenarios and symptoms of this – some are obvious and some are not really.

Working day to day with Percona Remote DBA customers, we have been facing an issue from time to time when MySQL replication lag is flapping between 0 and XXXXX constantly – i.e. Seconds_Behind_Master is 0 for a few secs, then it’s like 6287 or 25341, again 0 and so on. I would like to note the 5 different scenarios and symptoms of this – some are obvious and some are not really.

Symptoms: MySQL error log on a slave shows the slave thread is connecting/disconnecting from a master constantly.

Solution: check whether all nodes in the replication have unique server-ids.

Scenario: you stop MySQL on the first master, then you stop the second one. Afterwards, you perform some migration or maintenance. Suddenly, you realize that it would be better to change server-ids to match the end part of new IP addresses. You bring everything back online and noticed the strange behaviour like some portion of binlogs cycle between masters due to log_slave_updates enabled. But how come if new server-ids are different? It’s really could be true when the some data were written to the second master prior its shutdown when the first one was already off and both nodes started recognizing cycled data as not their own, thus applying them and passing down the replication as sender’s server-id does not match server-id written in the binlogs data itself prior shutdown. So we have got an infinite loop.

Solution: simply reset the slave position on one of the masters a new, so finally the “ghost” binlogs will stop cycling.

Symptoms: according to the “SHOW SLAVE STATUS” output, one time the slave thread queues events showing some delay, another time waits for master showing 0 lag and so on. Considering the real master position, indeed there should be XXXXX delay. Another symptoms are IO saturation and high disk IOPS number but a disk is only busy a half as shown by pt-diskstats. In my case I was observed 1500 iops on master, 10x more – 15000 iops being 60% busy on slave. You may think it could be a row-based replication (binlog_format=ROW) and constant updates on a master but still why the delay is flapping and disk IO is high but not on 100% of capacity?

Possible reason: check whether you do not have enabled sync_relay_log, sync_relay_log_info, sync_master_info which make slave to sync data to disk on every event, e.g. sync_relay_log ensures one write to the relay log per statement if autocommit is enabled, and one write per transaction otherwise. Enabling those options makes your system slow unless the disk has a battery-backed cache, which makes synchronization very fast.

Symptoms: there is a poor network link between master and slave. With saturated link the slave IO_thread will have a hard time keeping up with master. SQL thread reports zero lag while it just waits for more events stored by IO_thread. Once IO_thread is able to write more into relay log, SQL_thread will report XXX seconds behind again.

Solution: check the network throughput.

Scenario: if you open a transaction on master and then for some reason (maybe application bug or frontend problems) you commit it some time later, it will show it as replication latency on the slave. So for example, if you wait one hour before commit, it will show 3600 seconds of latency on the slave while that transaction is being executed.

Solution: use pt-heartbeat tool.

Can you explain how simply resetting the master slave position in #2 will fix the problem? What about missed statements that weren’t executed after the skip?

Rolf, I didn’t write that there are missed statements and actually, there were no such ones as was proven by running the table checksumming further, also the app was turned off. It was just my assumption of what it could be…

Roman, and it was an excellent summary. With given “solutions” it can be dangerous for those who are looking at how to fix an issue they have.

“Enabling those options makes your system slow unless the disk has a battery-backed cache, which makes synchronization very fast.” MySQL recognizes the fact that you have a battery backed cache?

‘@Rolf–

MySQL itself has no clue as to whether or not your machine has a battery-backed write cache; that all happens at the OS level and below. A RAID controller with BBWC (and write-back caching enabled – write-through caching is a performance killer) is generally going to return that a write or an fsync has completed back to the OS as soon as it hits controller memory rather than waiting for the bits to physically hit the disk. Hence, much higher throughput.

I think I’ve seen this issue in the past when the server time was set incorrectly when mysql started, and then updated after mysql had been running. The replication lag would flap between 0 and the time difference, say, 3600 if the adjustment was 1 hour.

Restarting mysql would fix the problem.

This was it for me! I noticed the systemd uptime for the mysql server was 5 hours greater than my system uptime, and the lag we saw was flapping between 0 and 18000+ seconds. I imagine the only way that discrepancy can happen would be system time being set improperly at service start time. Restarting mysql fixed the lag issue. This should be the 6th thing to check in the original post!

Could you go into more depth as to why, and how #5 occurs?

Had this kind of issue before and I’ve been wrong with sysads and network engineers thinking that there’s an ongoing network issue. it ends up that the setup of a junior dba copied the config from another slave without changing the server_id.

great article that summarized all common issues about replication lag

What if it jumps between 0 and 3600 mainly?

6 Seconds_Behind_Master: 3653

6 Seconds_Behind_Master: 3710

6 Seconds_Behind_Master: 3713

7 Seconds_Behind_Master: 3650

41 Seconds_Behind_Master: 3601

1595 Seconds_Behind_Master: 3600

77325 Seconds_Behind_Master: 0

so most are 0 or 3600 EXACTLY. Why 3600? I’m logging these every minute. One minute it is 0, next minute 3600 and next minute back to 0. Makes no sense to me.

I have seen the flapping value since 4.0 days. It would eventually go away. I never found a viable clue as to why it was happening.

#1 (dup server-Id) leads to huge number of binlogs.

#5 (eg, a big ALTER clogging replication) does not sound like a “flapping” case. Rather a sudden big number that eventually (and gradually?) goes away.