Over the years, I’ve had the opportunity to architect all sorts of configurations using Postgres as a backend. I’ve always found it very cool and satisfying to implement sophisticated business rules, often in more ways than one has fingers and toes. So, it’s not an understatement when I say that Citus is one of the more interesting technologies that I’ve come across when scaling PostgreSQL.

Citus is an extension that horizontally scales PostgreSQL across multiple machines by sharding and creating redundant copies of tables. Its query engine not only parallelizes incoming SQL queries for time series but also has the ability to create column-wise tables making it ideal for OLAP analysis duties.

Among its many capabilities, a Citus cluster can:

Pondering the case of high availability and redundancy, one replicates data by creating a replica via streaming replication.

Now let’s stretch our imagination and consider a second method of high availability, ala Citus.

The best way to describe the Citus way of doing things is to reflect how data is managed by a disk RAID array. Depending on the configuration, one can tune a hardware RAID for either performance or redundancy. The same can be said for Citus data sharding.

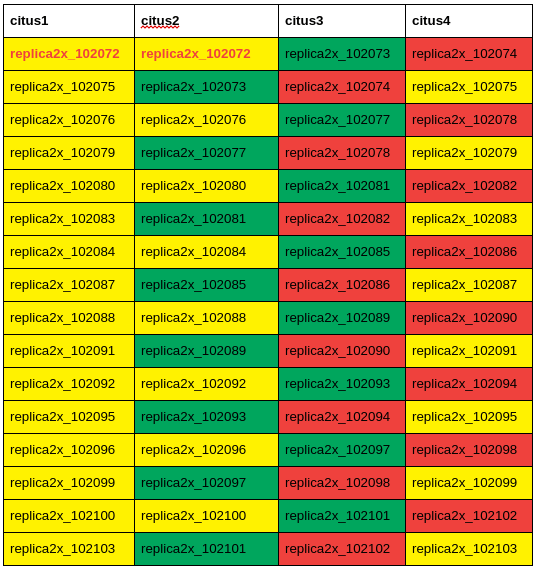

Here is an example of a table named replica2x, which has 2X redundancy across a cluster of four (4) nodes. The colors indicate duplicated shards of the table. For example, if node citus1 goes offline, the sharded table it holds still has copies on nodes citus2 and citus4. Likewise, it can be said that if node citus2 goes offline, the same data is still available on nodes 1, 3, and 4.

I’ll be upfront: I love working with Linux Containers, LXD. Much of what I will show you makes heavy use of them. You won’t need LXD to replicate this POC, of course, but I can’t say enough how flexible and versatile such an environment can be when prototyping a cluster of Postgres nodes, let alone an entire multi-data center infrastructure on a single workstation.

There are two parts to this POC;

Referring to this earlier blog, you’ll see how to get and install Citus into your environment.

The Citus cluster consists of a five (5) node cluster:

By using LXD, I created a single templated container with Citus on Ubuntu 20.04, where the various nodes were copied from this template.

|

1 2 3 4 5 6 7 |

for u in citus-coord-01 citus1 citus2 citus3 citus4 do echo "==== $u ====" lxc rm --force $u 2>/dev/null lxc cp template-ubuntu-2004-citusdb $u lxc start $u done |

And here’s the resultant cluster:

|

1 |

lxc ls -c ns4 citus |

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

+----------------+---------+----------------------+ | NAME | STATE | IPV4 | +----------------+---------+----------------------+ | citus1 | RUNNING | 10.231.38.140 (eth0) | +----------------+---------+----------------------+ | citus2 | RUNNING | 10.231.38.151 (eth0) | +----------------+---------+----------------------+ | citus3 | RUNNING | 10.231.38.171 (eth0) | +----------------+---------+----------------------+ | citus4 | RUNNING | 10.231.38.204 (eth0) | +----------------+---------+----------------------+ | citus-coord-01 | RUNNING | 10.231.38.34 (eth0) | +----------------+---------+----------------------+ |

It’s understood that on each of the five nodes:

Inspecting the nodes confirms Citus has been correctly installed:

|

1 2 3 4 5 6 7 8 9 10 |

for u in citus1 citus2 citus3 citus4 citus-coord-01 do echo "==== NODE: $u ====" lxc exec $u -- su - postgres -c 'psql db01'<<_eof_ select extname, extversion from pg_extension; _eof_ done | less -S |

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 |

==== NODE: citus1 ==== extname | extversion ----------------+------------ plpgsql | 1.0 citus_columnar | 11.1-1 citus | 11.1-1 ==== NODE: citus2 ==== extname | extversion ----------------+------------ plpgsql | 1.0 citus_columnar | 11.1-1 citus | 11.1-1 ==== NODE: citus3 ==== extname | extversion ----------------+------------ plpgsql | 1.0 citus_columnar | 11.1-1 citus | 11.1-1 ==== NODE: citus4 ==== extname | extversion ----------------+------------ plpgsql | 1.0 citus_columnar | 11.1-1 citus | 11.1-1 ==== NODE: citus-coord-01 ==== extname | extversion ----------------+------------ plpgsql | 1.0 citus_columnar | 11.1-1 citus | 11.1-1 |

Properly installed, the Citus runtime variables are now available:

|

1 2 3 |

lxc exec citus1 -- su postgres -c psql db01<<_eof_ | less -S select name,setting,unit from pg_settings where name ~ 'citus' order by 1; _eof_ |

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 |

name | setting | unit ----------------------------------------------------+-----------------+------ citus.all_modifications_commutative | off | citus.background_task_queue_interval | 5000 | ms citus.cluster_name | default | citus.coordinator_aggregation_strategy | row-gather | citus.count_distinct_error_rate | 0 | citus.cpu_priority | 0 | citus.cpu_priority_for_logical_replication_senders | inherit | citus.defer_drop_after_shard_move | on | citus.defer_drop_after_shard_split | on | citus.defer_shard_delete_interval | 15000 | ms citus.desired_percent_disk_available_after_move | 10 | citus.distributed_deadlock_detection_factor | 2 | citus.enable_binary_protocol | on | citus.enable_create_role_propagation | on | citus.enable_deadlock_prevention | on | citus.enable_local_execution | on | citus.enable_local_reference_table_foreign_keys | on | citus.enable_repartition_joins | off | citus.enable_statistics_collection | off | citus.explain_all_tasks | off | citus.explain_analyze_sort_method | execution-time | citus.limit_clause_row_fetch_count | -1 | citus.local_hostname | localhost | citus.local_shared_pool_size | 50 | citus.local_table_join_policy | auto | citus.log_remote_commands | off | citus.max_adaptive_executor_pool_size | 16 | citus.max_cached_connection_lifetime | 600000 | ms citus.max_cached_conns_per_worker | 1 | citus.max_client_connections | -1 | citus.max_high_priority_background_processes | 2 | citus.max_intermediate_result_size | 1048576 | kB citus.max_matview_size_to_auto_recreate | 1024 | MB citus.max_shared_pool_size | 100 | citus.max_worker_nodes_tracked | 2048 | citus.multi_shard_modify_mode | parallel | citus.multi_task_query_log_level | off | citus.node_connection_timeout | 30000 | ms citus.node_conninfo | sslmode=require | citus.propagate_set_commands | none | citus.recover_2pc_interval | 60000 | ms citus.remote_task_check_interval | 10 | ms citus.shard_count | 32 | citus.shard_replication_factor | 1 | citus.show_shards_for_app_name_prefixes | | citus.skip_constraint_validation | off | citus.skip_jsonb_validation_in_copy | on | citus.stat_statements_track | none | citus.task_assignment_policy | greedy | citus.task_executor_type | adaptive | citus.use_citus_managed_tables | off | citus.use_secondary_nodes | never | citus.values_materialization_threshold | 100 | citus.version | 11.1.4 | citus.worker_min_messages | notice | citus.writable_standby_coordinator | off | |

Log into the coordinator in order to declare and configure the cluster:

|

1 2 3 4 5 6 7 8 |

lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ select citus_set_coordinator_host('citus-coord-01', 5432); insert into pg_dist_node(nodename) values ('citus1') ,('citus2') ,('citus3') ,('citus4'); _eof_ |

And here’s the cluster’s organization:

|

1 2 3 4 5 6 7 8 |

db01=# select nodeid,nodename,groupid,isactive from pg_dist_node order by 1; nodeid | nodename | groupid | isactive --------+----------------+---------+---------- 1 | citus-coord-01 | 0 | t 2 | citus1 | 1 | t 3 | citus2 | 2 | t 4 | citus3 | 3 | t 5 | citus4 | 4 | t |

Table myevents is created, and the newly inserted records are evenly distributed across the cluster of nodes.

Login to the coordinator and execute the following commands. Notice that all DML and SQL statements are executed on the coordinator node:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 |

lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ -- create the table create table myevents ( device_id bigint, event_id bigserial, event_time timestamptz default now(), data jsonb not null, primary key (device_id, event_id) ); -- distribute the events among the nodes select create_distributed_table('myevents', 'device_id'); -- populate the table insert into myevents (device_id, data) select s % 100, ('{"measurement":'||random()||'}')::jsonb from generate_series(1,1000000) s; _eof_ |

Querying the coordinator:

|

1 2 3 4 5 6 7 |

lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ | less -S select * from myevents where device_id = 1 order by event_time desc, event_id desc limit 10; _eof_ |

|

1 2 3 4 5 6 7 8 9 10 11 12 |

device_id | event_id | event_time | data ----------+----------+-------------------------------+------------------------------------- 1 | 999901 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.2868659956537316} 1 | 999801 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.7931493079697731} 1 | 999701 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.4875322951757288} 1 | 999601 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.6491362745752653} 1 | 999501 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.9629266554851366} 1 | 999401 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.1185674800281864} 1 | 999301 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.5133762596297742} 1 | 999201 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.7307634886202119} 1 | 999101 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.2997471209159892} 1 | 999001 | 2023-08-16 14:51:12.618467-07 | {"measurement": 0.9692520484104021} |

This demonstrates clearly that table myevents is hash-sharded across every node member:

|

1 2 3 4 5 6 7 |

for u in citus1 citus2 citus3 citus4 do echo "==== NODE: $u ====" lxc exec $u -- su - postgres -c 'psql db01'<<_eof_ dt+ _eof_ done | less -S |

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 |

==== NODE: citus1 ==== List of relations Schema | Name | Type | Owner | Persistence | Access method | Size | Description -------+-----------------+-------+----------+-------------+---------------+---------+------------- public | myevents_102008 | table | postgres | permanent | heap | 2832 kB | public | myevents_102012 | table | postgres | permanent | heap | 2840 kB | public | myevents_102016 | table | postgres | permanent | heap | 5624 kB | public | myevents_102020 | table | postgres | permanent | heap | 2840 kB | public | myevents_102024 | table | postgres | permanent | heap | 1904 kB | public | myevents_102028 | table | postgres | permanent | heap | 5632 kB | public | myevents_102032 | table | postgres | permanent | heap | 2840 kB | public | myevents_102036 | table | postgres | permanent | heap | 6560 kB | ==== NODE: citus2 ==== List of relations Schema | Name | Type | Owner | Persistence | Access method | Size | Description -------+-----------------+-------+----------+-------------+---------------+---------+------------- public | myevents_102009 | table | postgres | permanent | heap | 4696 kB | public | myevents_102013 | table | postgres | permanent | heap | 976 kB | public | myevents_102017 | table | postgres | permanent | heap | 1904 kB | public | myevents_102021 | table | postgres | permanent | heap | 3768 kB | public | myevents_102025 | table | postgres | permanent | heap | 1904 kB | public | myevents_102029 | table | postgres | permanent | heap | 2840 kB | public | myevents_102033 | table | postgres | permanent | heap | 1904 kB | public | myevents_102037 | table | postgres | permanent | heap | 2840 kB | ==== NODE: citus3 ==== List of relations Schema | Name | Type | Owner | Persistence | Access method | Size | Description -------+-----------------+-------+----------+-------------+---------------+------------+------------- public | myevents_102010 | table | postgres | permanent | heap | 1904 kB | public | myevents_102014 | table | postgres | permanent | heap | 1904 kB | public | myevents_102018 | table | postgres | permanent | heap | 1904 kB | public | myevents_102022 | table | postgres | permanent | heap | 4696 kB | public | myevents_102026 | table | postgres | permanent | heap | 8192 bytes | public | myevents_102030 | table | postgres | permanent | heap | 2832 kB | public | myevents_102034 | table | postgres | permanent | heap | 976 kB | public | myevents_102038 | table | postgres | permanent | heap | 4696 kB | ==== NODE: citus4 ==== List of relations Schema | Name | Type | Owner | Persistence | Access method | Size | Description -------+-----------------+-------+----------+-------------+---------------+---------+------------- public | myevents_102011 | table | postgres | permanent | heap | 5632 kB | public | myevents_102015 | table | postgres | permanent | heap | 1904 kB | public | myevents_102019 | table | postgres | permanent | heap | 1904 kB | public | myevents_102023 | table | postgres | permanent | heap | 1904 kB | public | myevents_102027 | table | postgres | permanent | heap | 2840 kB | public | myevents_102031 | table | postgres | permanent | heap | 2832 kB | public | myevents_102035 | table | postgres | permanent | heap | 1904 kB | public | myevents_102039 | table | postgres | permanent | heap | 4696 kB | |

ATTENTION: Please note that you may have to edit your own queries as the values may be different for your setup.

Update shard replication factor from 1X to 2X:

|

1 2 3 4 5 |

lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ show citus.shard_replication_factor; alter system set citus.shard_replication_factor=2; select pg_reload_conf(); _eof_ |

|

1 2 3 4 |

# validate lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ show citus.shard_replication_factor; _eof_ |

|

1 2 3 |

citus.shard_replication_factor -------------------------------- 2 |

Table myevents2x is created and populated with a redundancy of 2X across the cluster:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 |

lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ | less -S -- create a new table with 2X redundancy create table myevents2x ( device_id bigint, event_id bigserial, event_time timestamptz default now(), data jsonb not null, primary key (device_id, event_id) ); -- distribute the events among the nodes select create_distributed_table('myevents2x', 'device_id'); -- confirm table has been added across the cluster select * from master_get_active_worker_nodes() order by 1; -- populate the table insert into myevents2x (device_id, data) select s % 100, ('{"measurement":'||random()||'}')::jsonb from generate_series(1,1000000) s; _eof_ |

Here’s the output:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

CREATE TABLE create_distributed_table -------------------------- (1 row) node_name | node_port -----------+----------- citus1 | 5432 citus2 | 5432 citus3 | 5432 citus4 | 5432 (4 rows) INSERT 0 1000000 |

Locate shard myevents2x_102040, which should be on nodes citus1 and citus2:

|

1 2 3 4 5 6 7 |

for u in citus1 citus2 citus3 citus4 do echo "==== NODE: $u ====" lxc exec $u -- su - postgres -c 'psql db01'<<_eof_ select tablename from pg_tables where tablename~'myevents2x' order by 1 _eof_ done | less -S |

Here’s the output:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 |

==== NODE: citus1 ==== tablename ------------------- myevents2x_102040 myevents2x_102043 myevents2x_102044 myevents2x_102047 myevents2x_102048 myevents2x_102051 myevents2x_102052 myevents2x_102055 myevents2x_102056 myevents2x_102059 myevents2x_102060 myevents2x_102063 myevents2x_102064 myevents2x_102067 myevents2x_102068 myevents2x_102071 (16 rows) ==== NODE: citus2 ==== tablename ------------------- myevents2x_102040 myevents2x_102041 myevents2x_102044 myevents2x_102045 myevents2x_102048 myevents2x_102049 myevents2x_102052 myevents2x_102053 myevents2x_102056 myevents2x_102057 myevents2x_102060 myevents2x_102061 myevents2x_102064 myevents2x_102065 myevents2x_102068 myevents2x_102069 ==== NODE: citus3 ==== tablename ------------------- myevents2x_102041 myevents2x_102042 myevents2x_102045 myevents2x_102046 myevents2x_102049 myevents2x_102050 myevents2x_102053 myevents2x_102054 myevents2x_102057 myevents2x_102058 myevents2x_102061 myevents2x_102062 myevents2x_102065 myevents2x_102066 myevents2x_102069 myevents2x_102070 ==== NODE: citus4 ==== tablename ------------------- myevents2x_102042 myevents2x_102043 myevents2x_102046 myevents2x_102047 myevents2x_102050 myevents2x_102051 myevents2x_102054 myevents2x_102055 myevents2x_102058 myevents2x_102059 myevents2x_102062 myevents2x_102063 myevents2x_102066 myevents2x_102067 myevents2x_102070 myevents2x_102071 |

Locate and return the first three records of shard myevents2x_102040 on nodes citus1 and citus2:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

lxc exec citus1 -- su - postgres -c 'psql db01'<<_eof_ | less -S qecho ==== citus1 ==== qecho select * from myevents2x_102040 order by 1,2 limit 3 select * from myevents2x_102040 order by 1,2 limit 3; c 'host=citus2 dbname=db01 user=postgres' qecho ==== citus2 ==== qecho select * from myevents2x_102040 order by 1,2 limit 3 select * from myevents2x_102040 order by 1,2 limit 3; c 'host=citus-coord-01 dbname=db01 user=postgres' qecho ==== coordinator ==== qecho select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2 select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2; _eof_ |

Here’s the output:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 |

==== citus1 ==== select * from myevents2x_102040 order by 1,2 limit 3 device_id | event_id | event_time | data -----------+----------+-------------------------------+------------------------------------- 8 | 8 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.5055415404961443} 8 | 108 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.9145586163936634} 8 | 208 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.8032392420487922} You are now connected to database "db01" as user "postgres" on host "citus2" (address "fd42:cb6a:5384:9a60:216:3eff:fe38> ==== citus2 ==== select * from myevents2x_102040 order by 1,2 limit 3 device_id | event_id | event_time | data -----------+----------+-------------------------------+------------------------------------- 8 | 8 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.5055415404961443} 8 | 108 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.9145586163936634} 8 | 208 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.8032392420487922} You are now connected to database "db01" as user "postgres" on host "citus-coord-01" (address "fd42:cb6a:5384:9a60:216:3> ==== coordinator ==== select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2 device_id | event_id | event_time | data -----------+----------+-------------------------------+------------------------------------- 8 | 8 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.5055415404961443} 8 | 108 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.9145586163936634} 8 | 208 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.8032392420487922} |

The next few steps demonstrate our ability to continuously query and return those records found in shard myevents2x_102040.

|

1 |

lxc stop citus1 |

|

1 2 3 4 5 6 |

lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ | less -S qecho "==== citus1 is shutdown ====" qecho ==== querying coordinator ==== qecho select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2 select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2; _eof_ |

Here’s the output:

|

1 2 3 4 5 6 7 8 |

"==== citus1 is shutdown ====" ==== querying coordinator ==== select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2 device_id | event_id | event_time | data -----------+----------+-------------------------------+------------------------------------- 8 | 8 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.5055415404961443} 8 | 108 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.9145586163936634} 8 | 208 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.8032392420487922} |

|

1 2 |

lxc start citus1 lxc stop citus2 |

|

1 2 3 4 5 6 |

lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ | less -S qecho "==== citus2 is shutdown ====" qecho ==== querying coordinator ==== qecho select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2 select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2; _eof_ |

Here’s the output; note that it’s exactly the same as the previous test:

|

1 2 3 4 5 6 7 8 |

"==== citus2 is shutdown ====" ==== querying coordinator ==== select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2 device_id | event_id | event_time | data -----------+----------+-------------------------------+------------------------------------- 8 | 8 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.5055415404961443} 8 | 108 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.9145586163936634} 8 | 208 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.8032392420487922} |

|

1 |

lxc start citus2 |

|

1 2 3 4 5 6 |

lxc exec citus-coord-01 -- su - postgres -c 'psql db01'<<_eof_ | less -S qecho "==== cluster restored ====" qecho ==== querying coordinator ==== qecho select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2 select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2; _eof_ |

Here’s the output, of course!

|

1 2 3 4 5 6 7 8 |

"==== cluster restored ====" ==== querying coordinator ==== select * from myevents2x where device_id=8 and event_id in (8,108,208) order by 1,2 device_id | event_id | event_time | data ----------+----------+-------------------------------+------------------------------------- 8 | 8 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.5055415404961443} 8 | 108 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.9145586163936634} 8 | 208 | 2023-08-17 07:10:51.622082-07 | {"measurement": 0.8032392420487922} |

Data redundancy is the hallmark of high availability. But with Citus, we’ve raised the bar. Can you think of a better system that can stay up without losing time initiating failovers when a node fails?

Have fun!

Percona Distribution for PostgreSQL provides the best and most critical enterprise components from the open-source community in a single distribution, designed and tested to work together.

Resources

RELATED POSTS

when any one of the worker is down, then the insert statement is throwing error.

ERROR: connection to the remote node Work1A:5432 failed with the following error: server closed the connection unexpectedly

This probably means the server terminated abnormally

before or while processing the request.