Continuing with the checkpointing topic I started a month ago with my blog post MongoDB Checkpointing Woes, this time I want to review how PostgreSQL performs in this area. After this, I will be taking a look at MySQL and MariaDB. If anything, it will be fair not only to complain about MongoDB but to review how other databases handle it, as well.

Continuing with the checkpointing topic I started a month ago with my blog post MongoDB Checkpointing Woes, this time I want to review how PostgreSQL performs in this area. After this, I will be taking a look at MySQL and MariaDB. If anything, it will be fair not only to complain about MongoDB but to review how other databases handle it, as well.

To evaluate PostgreSQL I will use a not identical but similar scenario: using sysbench-tpcc with 1000 Warehouses, and as with sysbench you can produce tpcc-like workload for PostgreSQL:

Sysbench-tpcc Supports PostgreSQL (No, Really This Time)

Tuning PostgreSQL for sysbench-tpcc

The hardware I use is:

|

1 |

System | Supermicro; SYS-F619P2-RTN; v0123456789 (Other)<br> Platform | Linux<br> Release | Ubuntu 18.04.4 LTS (bionic)<br> Kernel | 5.3.0-42-generic<br>Architecture | CPU = 64-bit, OS = 64-bit<br> Threading | NPTL 2.27<br> SELinux | No SELinux detected<br>Virtualized | No virtualization detected<br># Processor ##################################################<br> Processors | physical = 2, cores = 40, virtual = 80, hyperthreading = yes<br> Models | 80xIntel(R) Xeon(R) Gold 6230 CPU @ 2.10GHz<br> Caches | 80x28160 KB<br># Memory #####################################################<br> Total | 187.6G<br> |

With the storage on SATA SSD INTEL SSDSC2KB960G8 (Intel Enterprise-grade SSD D3-S4510).

The PostgreSQL config is:

|

1 |

shared_buffers = '140GB'<br>work_mem = '4MB'<br>random_page_cost = '1'<br>maintenance_work_mem = '2GB'<br><br>wal_level = 'replica'<br>max_wal_senders = '3'<br><br>synchronous_commit = 'on'<br>seq_page_cost = '1'<br>synchronous_commit = 'on'<br><br>checkpoint_completion_target = '0.9'<br>checkpoint_timeout = '900'<br><br>max_wal_size = '20GB'<br>min_wal_size = '12GB'<br><br>autovacuum_vacuum_scale_factor = '0.4'<br>effective_cache_size = '200GB'<br>bgwriter_lru_maxpages = '1000'<br>bgwriter_lru_multiplier = '10.0'<br>logging_collector = 'ON'<br>wal_compression = 'ON'<br>log_checkpoints = 'ON'<br>archive_mode = 'OFF'<br>full_page_writes = 'ON'<br>fsync = 'ON'<br> |

The short settings overview:

The benchmark command line is:

|

1 |

./tpcc.lua --pgsql-user=sbtest --pgsql-password=sbtest --pgsql-db=sbtest --time=3600 --threads=56 --report-interval=1 --tables=10 --scale=100 --use_fk=0 --trx_level=RC --db-driver=pgsql --report_csv=yes run <br> |

This means that the benchmark will run for 1 hour, with reporting throughput every 1 sec.

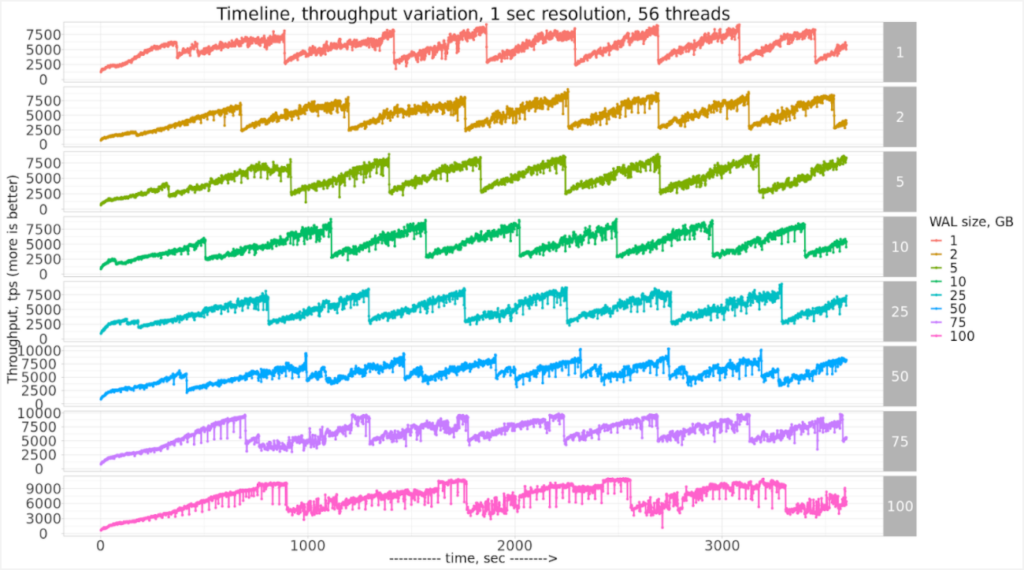

Let’s see what results I’ve got with this setup:

That’s an interesting pattern!

Although there are no drops to the floor, we see a saw-like pattern, where throughput raises to ~8000 tps and then drops to ~3000tps (that’s 2.6 times drop!).

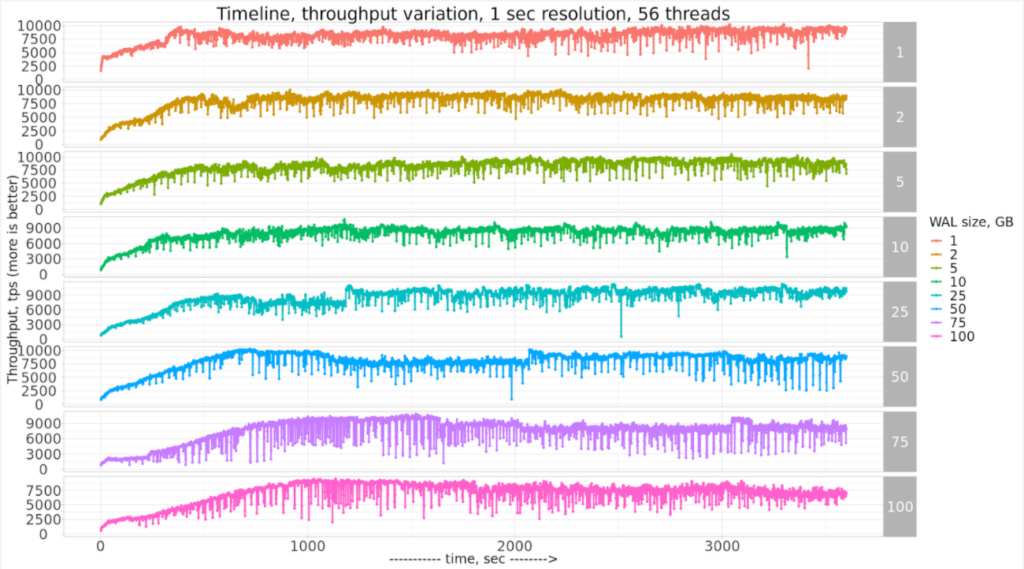

It was suggested to check how PostgreSQL would perform with full_page_writes = 'OFF' (this is not a data-safe setting and I would not recommend to use it in production!)

This seems to improve the saw-like pattern, but there are micro-drops that are concerning.

If we zoom in only to 50GB WAL size, we can see it in detail:

I would be interested to hear ideas on how PostgreSQL results in 1-sec resolution can be improved! If you are interested in the raw results and notebooks, it is available here in GitHub.

Our white paper “Why Choose PostgreSQL?” looks at the features and benefits of PostgreSQL and presents some practical usage examples. We also examine how PostgreSQL can be useful for companies looking to migrate from Oracle.

Resources

RELATED POSTS

When not disabling full page writes to the wal, consider running with sizeof(wal) ~= sizeof(shared buffer). Assuming wal compression doesn’t reduce the size of a page, then the worst case is that each page is written to the wal once so the wal should be large enough to store all pages that are in shared buffers. This might not fix the sawtooth pattern but might make it less frequent.

One thing not mentioned in the previous paragraph is that sizeof(wal) might need to be more than sizeof(shared buffers), by 2X or 3X to satisfy the condition.

It will be interesting to compare Postgres and InnoDB for similar amounts of redo relative to the buffer pool size.

Mark,

I’ve posted InnoDB results here https://www.percona.com/blog/2020/07/29/checkpointing-in-mysql-and-mariadb/