On a recent project, we were tasked with loading several billion records into MongoDB. That prompted us to dig deeper into WiredTiger's knobs and dials, which turned out to be a very interesting experience.

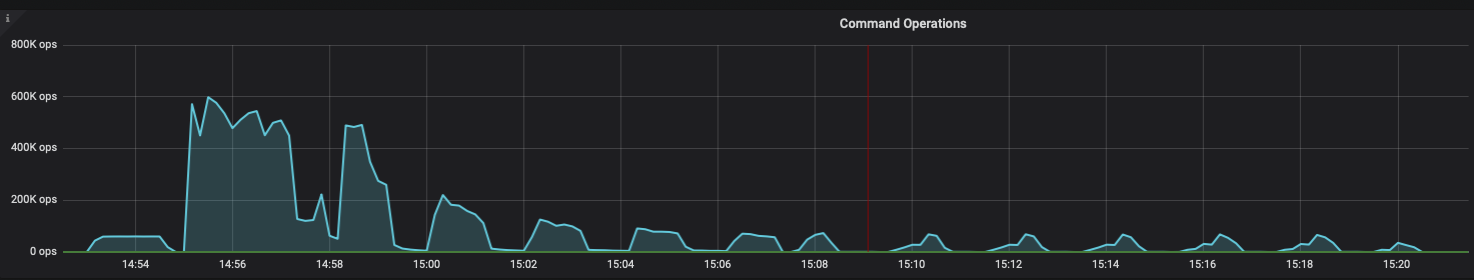

We noticed that the load started at a decent rate but slowed down considerably after some time. Looking at the metrics, we saw that WiredTiger checkpoint time kept increasing as time passed, going from only a few seconds to a few minutes (!). During checkpoints, performance basically tanked:

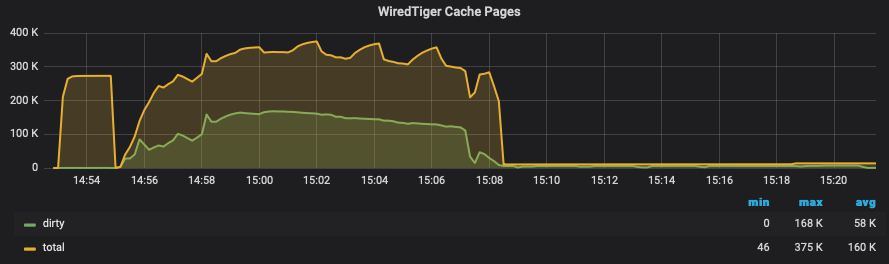

As of MongoDB 4.2, the WiredTiger engine does a full checkpoint every 60 seconds (controlled by `checkpoint=(wait=60)`). This means that all dirty pages in the WiredTiger cache have to be flushed to disk every 60 seconds. Remember that the default WiredTiger cache size is roughly 50% of available RAM, so we have to somehow limit the number of dirty pages or suffer (more on this later).
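For reference, MongoDB's documentation (3.4 and later) describes the default cache size as the larger of 50% of (RAM minus 1 GB) or 256 MB. The sketch below only encodes that rule using total RAM; the function name is our own, and it does not account for any other memory limits your deployment may apply.

```javascript
// Minimal sketch of MongoDB's documented default WiredTiger cache size
// (3.4 and later): the larger of 50% of (RAM - 1 GB) or 256 MB.
// Binary units; only total RAM is considered here.
const MB = 1024 ** 2;
const GB = 1024 ** 3;

function defaultWiredTigerCacheBytes(ramBytes) {
  return Math.max(0.5 * (ramBytes - GB), 256 * MB);
}

console.log(defaultWiredTigerCacheBytes(200 * GB) / GB); // 99.5
console.log(defaultWiredTigerCacheBytes(1 * GB) / MB);   // 256
```

On a 200 GB server this gives about 99.5 GB of cache, which is why a "100 GB cache" is a realistic figure in the examples that follow.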

As noted in Vadim's post "Checkpoint Strikes Back", full checkpoints can cause "spiky" performance. There are ways to mitigate the impact, as we will see, but not to eliminate it completely.

By the way, you might be wondering why the default WiredTiger cache is only about 50% of available RAM instead of something like 80-90%. The reason is that MongoDB takes advantage of operating system buffering. The WiredTiger cache holds only uncompressed pages, while the operating system caches the (compressed) pages as they are written to the database files. By leaving enough free memory to the operating system, we increase the chance that a page missing from the WiredTiger cache can be served from the operating system buffers instead of being read from disk.

Eviction is mainly about removing the least recently used pages from the WiredTiger cache to make room for other pages that need to be accessed soon. As in most databases, dedicated background threads perform this work. Let's look at the available parameters to tune next.

```
eviction_trigger=95,eviction_target=80
```

These parameters are expressed as a percentage of the total WiredTiger cache size and control overall cache usage, where "used" means clean plus dirty pages. Let's see an example:

Consider a server with 200 GB of RAM and the WiredTiger cache set to 100 GB. The eviction threads will try to keep memory usage at around 80 GB (eviction_target). If the pressure is too high and cache usage climbs to 95 GB (eviction_trigger), application (client) threads are throttled. How? They are asked to help the background threads with eviction before being allowed to do their own work. This relieves some of the pressure, at the expense of higher latency for clients. If even this is not enough and the cache reaches 100% of its configured size, operations stall.
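The regimes in this example can be summarized as a small function. This is a deliberately simplified model, not WiredTiger's actual eviction code: it only maps a cache usage level to the behavior described above, using the default eviction_target=80 and eviction_trigger=95. The function name and state labels are our own.

```javascript
// Simplified model of the overall cache thresholds described above.
// Percentages are of the configured WiredTiger cache; "used" = clean + dirty.
function cacheEvictionState(usedBytes, cacheBytes, target = 80, trigger = 95) {
  const usedPct = (100 * usedBytes) / cacheBytes;
  if (usedPct >= 100) return "stall";                  // cache full: operations stall
  if (usedPct >= trigger) return "application-eviction"; // clients help evict
  if (usedPct >= target) return "background-eviction";   // eviction threads at work
  return "below-target";                                  // no eviction pressure
}

const GB = 1024 ** 3;
const cache = 100 * GB; // the 100 GB cache from the example

console.log(cacheEvictionState(70 * GB, cache)); // below-target
console.log(cacheEvictionState(85 * GB, cache)); // background-eviction
console.log(cacheEvictionState(96 * GB, cache)); // application-eviction
```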

```
eviction_dirty_trigger=20,eviction_dirty_target=5
```

This pair of parameters controls the amount of dirty content in the cache. Basically, eviction threads will engage when dirty pages make up 5% or more of the total cache size. If the dirty content keeps growing and reaches 20%, application threads are again called in to help, increasing latency for clients (the same "trick" as before).

Remember that in a sharp (full) checkpoint, all dirty pages have to be flushed to disk, which will use all of your disk write capacity for as long as it takes. That explains why these parameters have "low" defaults: we want to limit the amount of work the database has to do at each checkpoint.

Again, these parameters are expressed as a percentage of the total WiredTiger cache size. The lowest we can go is 1% (floating-point values are not allowed), and 1% can still be quite a lot on a server with a lot of memory! A 256 GB cache is not uncommon these days, and 1% of that is 2.56 GB, to be flushed all at once, once per minute.

This can be too much for your disks, depending on the hardware at your disposal. The only way to reduce that amount further is to reduce the size of the WiredTiger cache, which has other consequences. It would be nice to have the option to express eviction_dirty_target in MB instead.
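To get a feel for what "too much for your disks" means, the 2.56 GB figure can be turned into a rough checkpoint duration. A minimal sketch, assuming the whole dirty budget is written at a constant disk throughput; the 200 MB/s value is hypothetical, and compression, journaling, and other concurrent I/O are ignored. Decimal units are used to match the numbers above.

```javascript
// Back-of-the-envelope arithmetic for the dirty-data thresholds.
// Decimal units (1 GB = 1e9 bytes) to match the figures in the text.
const MB = 1e6;
const GB = 1e9;

// Bytes of dirty data allowed at a given percentage of the cache.
function dirtyBytes(cacheBytes, pct) {
  return (cacheBytes * pct) / 100;
}

// Rough checkpoint duration if all dirty data is written at a steady rate.
// writeBytesPerSec is a hypothetical throughput, not a measured value.
function checkpointSeconds(dirty, writeBytesPerSec) {
  return dirty / writeBytesPerSec;
}

const dirty = dirtyBytes(256 * GB, 1);
console.log(dirty / GB);                         // 2.56
console.log(checkpointSeconds(dirty, 200 * MB)); // 12.8
```

Even at the lowest allowed setting, a disk sustaining 200 MB/s would spend more than 12 seconds on each checkpoint in this simplified model.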

```
eviction=(threads_min=4,threads_max=4)
```

By default, MongoDB allocates four background threads to perform eviction. We have the option of specifying a minimum and a maximum; however, it is not very clear how the effective number of threads is determined (I guess I need to go dig into the source code). Also, the maximum number of threads is hardcoded to 20 for some reason.

For this particular case, the default four threads weren't enough to keep up with the rate at which dirty pages were being generated, as evidenced by the Percona Monitoring and Management (PMM) graphs:

So to minimize stalls, we need to keep the number of dirty pages under control, so that each checkpoint takes a "reasonable" amount of time (let's say under 10 seconds). Keep in mind that more eviction threads also consume more I/O bandwidth and more CPU (due to compression).

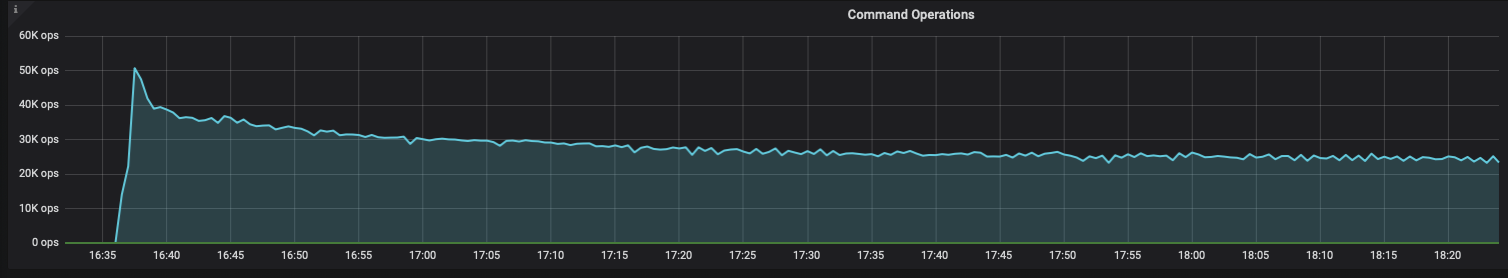

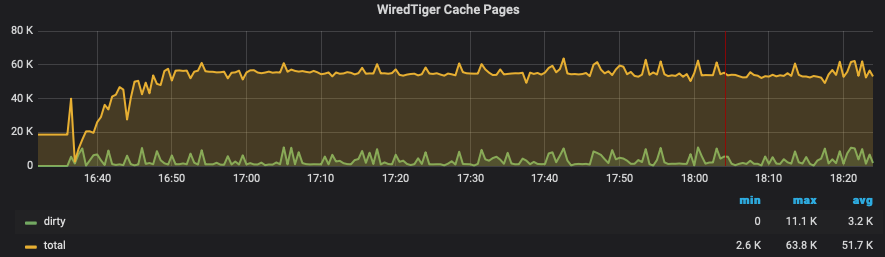

After some experiments with the available hardware, we decided to increase the number of eviction threads to the maximum of 20, reduce the dirty thresholds to the 1% to 5% range, and set a small WiredTiger cache of 1 GB, which limits dirty data to roughly 10 to 50 MB.
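The same arithmetic can be turned around to choose a dirty threshold: given a cache size, a disk write throughput, and a checkpoint time goal such as the 10 seconds mentioned earlier, find the largest whole percentage that fits. This is a sketch under the same simplifying assumptions as a constant-throughput model; the function names are our own and the throughput values are hypothetical, not measurements from this project.

```javascript
// Sketch: dirty-data budget for a cache size, and the largest integer
// eviction_dirty_target whose budget can be flushed within a time goal.
// Decimal units (1 GB = 1000 MB) to match the text.
const MB = 1e6;
const GB = 1e9;

function dirtyBudgetBytes(cacheBytes, pct) {
  return (cacheBytes * pct) / 100;
}

// Largest whole percentage (0..100) whose dirty budget can be written in
// maxSeconds at writeBytesPerSec; 0 means even 1% is too much.
function maxDirtyTargetPct(cacheBytes, writeBytesPerSec, maxSeconds) {
  const pct = Math.floor((100 * writeBytesPerSec * maxSeconds) / cacheBytes);
  return Math.min(Math.max(pct, 0), 100);
}

console.log(dirtyBudgetBytes(1 * GB, 1) / MB);           // 10
console.log(dirtyBudgetBytes(1 * GB, 5) / MB);           // 50
console.log(maxDirtyTargetPct(256 * GB, 200 * MB, 10));  // 0
console.log(maxDirtyTargetPct(100 * GB, 500 * MB, 10));  // 5
```

A result of 0 is the situation described earlier: with a large cache and modest disks, no allowed percentage is small enough, which leaves shrinking the cache as the remaining lever.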

To change the settings on the fly, we can run the following command:

```javascript
db.adminCommand({
  "setParameter": 1,
  "wiredTigerEngineRuntimeConfig": "eviction=(threads_min=20,threads_max=20),checkpoint=(wait=60),eviction_dirty_trigger=5,eviction_dirty_target=1,eviction_trigger=95,eviction_target=80"
})
```

Be aware that client threads are blocked while this command completes. In my experience it usually takes just a few seconds, but I've seen cases on very busy servers where it took minutes. To be on the safe side, plan a maintenance window to deploy this.
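Since the configuration string is long and easy to mistype, one option is to assemble it from named values. The helper below is a hypothetical sketch (buildEvictionConfig is our own name, not a MongoDB API). Its range checks reflect limits mentioned in this post (integer percentages, at most 20 eviction threads); the "target below trigger" check is our own sanity rule. Like the command above, it sets threads_min and threads_max to the same value.

```javascript
// Hypothetical helper (our own name, not a MongoDB API) that assembles the
// settings discussed in this post into one WiredTiger configuration string.
function buildEvictionConfig({
  threads = 4,
  checkpointWait = 60,
  dirtyTrigger = 20,
  dirtyTarget = 5,
  trigger = 95,
  target = 80,
} = {}) {
  // Limits from the post: at most 20 eviction threads, integer percentages.
  if (!Number.isInteger(threads) || threads < 1 || threads > 20) {
    throw new RangeError("threads must be an integer from 1 to 20");
  }
  for (const pct of [dirtyTrigger, dirtyTarget, trigger, target]) {
    if (!Number.isInteger(pct) || pct < 1 || pct > 100) {
      throw new RangeError("thresholds must be integer percentages from 1 to 100");
    }
  }
  // Our own sanity check: each target should sit below its trigger.
  if (dirtyTarget >= dirtyTrigger || target >= trigger) {
    throw new RangeError("each target must be lower than its trigger");
  }
  if (!Number.isInteger(checkpointWait) || checkpointWait < 1) {
    throw new RangeError("checkpointWait must be a positive integer (seconds)");
  }
  return [
    `eviction=(threads_min=${threads},threads_max=${threads})`,
    `checkpoint=(wait=${checkpointWait})`,
    `eviction_dirty_trigger=${dirtyTrigger}`,
    `eviction_dirty_target=${dirtyTarget}`,
    `eviction_trigger=${trigger}`,
    `eviction_target=${target}`,
  ].join(",");
}

const config = buildEvictionConfig({ threads: 20, dirtyTarget: 1, dirtyTrigger: 5 });
console.log(config);
// In mongosh, the result could then be passed to the setParameter command:
// db.adminCommand({ setParameter: 1, wiredTigerEngineRuntimeConfig: config });
```

With the values used in this post, the helper reproduces the exact string from the setParameter command.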

If we want to make the settings persistent, one option is to edit the systemd unit file (/usr/lib/systemd/system/mongod.service on RHEL/CentOS) and pass the wiredTigerEngineConfigString argument, as in the following example:

```
OPTIONS='-f /etc/mongod.conf --wiredTigerEngineConfigString "eviction=(threads_min=20,threads_max=20),eviction_dirty_target=1"'
```

This is how things looked after:

I hope this post shed some light on some of the mechanisms at work inside WiredTiger and helps you better configure MongoDB.

Even if the default settings work fine for most use cases, you might have to tune things under a heavy write load. My impression is that there are not many parameters available at this time, and the documentation about the internals has room for improvement.

Looking at the current status of MongoDB with WiredTiger, it reminds me of a lot of the challenges MySQL had when first incorporating the InnoDB storage engine. I think it is worth re-examining the decision to use sharp checkpoints.

Looking at metrics was key to understanding what was going on and to tracking the effects of the parameter changes. I strongly suggest you deploy a trending tool like PMM if you don't already have one.
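The raw numbers behind the cache graphs can also be derived from serverStatus. A minimal sketch, assuming the wiredTiger.cache field names we recall from serverStatus output ("maximum bytes configured", "bytes currently in the cache", "tracked dirty bytes in the cache"); verify them against your MongoDB version. The function name and the sample numbers are made up for illustration and are not from the load described in this post.

```javascript
// Sketch: derive cache usage percentages from a serverStatus()-shaped object.
// Field names are assumptions to be checked against your MongoDB version.
function cacheUsage(serverStatus) {
  const cache = serverStatus.wiredTiger.cache;
  const max = cache["maximum bytes configured"];
  const used = cache["bytes currently in the cache"];
  const dirty = cache["tracked dirty bytes in the cache"];
  return {
    usedPct: (100 * used) / max,   // compare with eviction_target / eviction_trigger
    dirtyPct: (100 * dirty) / max, // compare with the eviction_dirty_* settings
  };
}

// Sample document with made-up numbers.
const sample = {
  wiredTiger: {
    cache: {
      "maximum bytes configured": 1e9,
      "bytes currently in the cache": 8e8,
      "tracked dirty bytes in the cache": 3e7,
    },
  },
};
console.log(cacheUsage(sample)); // { usedPct: 80, dirtyPct: 3 }

// In mongosh (not runnable outside it): cacheUsage(db.serverStatus())
```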




If we reduce the WiredTiger cache, won't future queries read from disk more frequently? Is this a trade-off?

Yes, that is indeed what will happen. So once you are done with bulk loading, you should increase the WiredTiger cache again.

Thanks Ivan for the great write-up! One question, though: how long did the bulk import take with MongoDB's default settings, and how long did it take with your settings?

Hi Kay, unfortunately I don’t have the actual numbers to share since we didn’t finish the full load with the default settings. For this case we were estimating something like 21 days with default settings. In the end it finished in about a week.

Wow! great article. Thanks for sharing.

good article Ivan. Thanks for sharing

Thank you Sateesh! good to hear from you

I think lowering the thresholds from eviction_dirty_trigger=20,eviction_dirty_target=5 to eviction_dirty_trigger=5,eviction_dirty_target=1 only works if the eviction worker threads are really able to keep up. Lowering the threshold for application threads from 20 to 5 only works if, with the given load, you are able to ensure you stay mostly below 20. Unfortunately, that configuration didn't help much for my load.