In this penultimate post from our series on building an enterprise-grade PostgreSQL environment we cover the parameters we have enabled to configure detailed logging in the demo setup we will showcase in our upcoming webinar.


Like other RDBMS, PostgreSQL allows you to maintain a log of activities and error messages. Up to and including PostgreSQL 9.6, log files were generated in the pg_log directory (inside the data directory) by default. Since PostgreSQL 10, pg_log has been renamed to simply log. You can move the log files to a different location by changing the log_directory parameter.

Unlike MySQL, PostgreSQL writes the error log and the activity log to the same file, so that file may grow to several GBs when detailed logging is enabled. In these cases logging becomes IO-intensive, so it is recommended to store log files on different storage from the one hosting the data directory.

Here’s a list of parameters used to customize logging in PostgreSQL. All of them are set in the postgresql.conf file, or in postgresql.auto.conf, which PostgreSQL writes when you use ALTER SYSTEM.

logging_collector: this parameter must be enabled for PostgreSQL to capture its log output into log files. The background process responsible for this is called the logger; it is started when logging_collector is set to on. Changing this parameter requires a PostgreSQL restart.
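As a minimal sketch, the collector could be enabled in postgresql.conf along these lines. The log_destination line is an assumption added for illustration (stderr is the PostgreSQL default) and is not part of the demo setup described in this post.

```conf
# Capture server log output into files; changing this requires a restart.
logging_collector = on
# Assumption for illustration: stderr is the default destination and is
# the stream that the collector captures.
log_destination = 'stderr'
```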

log_min_duration_statement: this parameter is used primarily to set a time threshold: statements that run at least that long are logged (as “slow queries”). Setting it to -1 disables this duration-based logging. Setting it to 0 logs every statement running in the database, regardless of its duration. The time unit should follow the value, for example: 250ms, 250s, 250min, 1h. Changing this parameter does not require a PostgreSQL restart; a simple reload of the configuration is enough. For example:

log_min_duration_statement = 5s logs every statement running for 5 seconds or longer.

log_line_prefix: helps you customize every log line being printed in the PostgreSQL log file. You can log the process id, application name, database name and other details for every statement as required. The following log_line_prefix may be helpful in most scenarios:

log_line_prefix = '%t [%p]: [%l-1] user=%u,db=%d,app=%a,client=%h'

The above setting records the following for every statement being logged:

%t : Time stamp without milliseconds

%p : Process id

%l : Number of the log line for each session or process, starting at 1 (the -1 that follows it in the prefix is literal text)

%u : User name

%d : Database name

%a : Application name

%h : Remote host name or IP address

With the above settings employed for log_line_prefix, the log appears as follows:

2018-06-08 12:36:26 EDT [21315]: [1-1] user=postgres,db=percona,app=psql,client=192.168.0.12 LOG: duration: 2.611 ms statement: select * from departments where id = 26;

2018-06-08 12:36:49 EDT [21323]: [1-1] user=postgres,db=percona,app=psql,client=192.168.0.12 LOG: duration: 2.251 ms statement: select count(*) from departments;

You can refer to the PostgreSQL documentation for further details on log_line_prefix.
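If sub-second time stamps are useful, one possible variation is to swap %t for %m, which prints the time stamp with milliseconds. This is only a sketch and not the prefix used in the demo setup.

```conf
# Variation (assumption, not from the demo setup): %m instead of %t
# adds milliseconds to the time stamp; the rest of the prefix is unchanged.
log_line_prefix = '%m [%p]: [%l-1] user=%u,db=%d,app=%a,client=%h'
```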

log_duration: when enabled, this parameter records the duration of every completed statement in the PostgreSQL log, irrespective of log_min_duration_statement. Keep in mind that, as with log_min_duration_statement, enabling log_duration may increase log volume and affect the server’s overall performance. For this reason, if you already have log_min_duration_statement enabled, it is often suggested to disable log_duration unless there’s a specific need to keep track of both.
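A minimal sketch of that combination might look like the following. The 250ms threshold is an illustrative value, not a recommendation from the demo setup.

```conf
# Log only statements that run for 250 ms or longer (illustrative threshold).
log_min_duration_statement = '250ms'
# Do not additionally record the duration of every completed statement.
log_duration = off
```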

log_lock_waits: when log_lock_waits is enabled a log message is recorded when a session waits longer than deadlock_timeout to acquire a lock.
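As a sketch, the two settings involved could be written as follows. The deadlock_timeout value shown is the PostgreSQL default and is included only to make the threshold explicit. Since that parameter also controls how long a session waits on a lock before PostgreSQL checks for a deadlock, lowering it just to log more lock waits carries a cost.

```conf
# Log a message when a session waits longer than deadlock_timeout for a lock.
log_lock_waits = on
# Assumption for illustration: 1s is the PostgreSQL default, shown here
# only to make the lock-wait threshold explicit.
deadlock_timeout = '1s'
```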

log_checkpoints: logs all checkpoints and restart points to the PostgreSQL log file.

log_rotation_size: defines the size limit for each log file; once it reaches this threshold the log file is rotated.

Example:

log_rotation_size = '500MB' – every log file is limited to a size of 500 MB.

log_rotation_age: determines the maximum life span of a log file, forcing its rotation once this threshold is reached. This parameter is usually set in hours or days, although the minimum granularity is one minute. If log_rotation_size is reached first, the log is rotated anyway, irrespective of this setting.

Example:

log_rotation_age = 1d
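Putting the two rotation settings together, a sketch might look like this. The log_filename line is an assumption added for illustration: it shows the PostgreSQL default pattern, whose time stamp gives each rotated file a distinct name.

```conf
# Rotate when a file reaches 500 MB or is one day old, whichever comes first.
log_rotation_size = '500MB'
log_rotation_age = '1d'
# Assumption for illustration: the default time-stamped file name pattern,
# so that each rotation produces a new, distinctly named file.
log_filename = 'postgresql-%Y-%m-%d_%H%M%S.log'
```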

log_statement: controls which types of SQL statements are logged. The recommended setting is ddl, which logs all DDL statements that are executed. Tracking those allows you to later audit when a given DDL statement was executed, and by whom. Other possible values are none, mod (DDL plus DML) and all; you may consider changing this setting once you have monitored and understood how much information it writes to the log file.

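log_temp_files: logs the name and size of each temporary file (created, for example, for sorts and hashes) whose size is at least this value, in kilobytes. A value of 0 logs all temporary files and -1 disables this logging.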
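A minimal sketch of how log_checkpoints, log_statement and log_temp_files might be set together follows. The 10 MB threshold for temporary files is an illustrative assumption and should be tuned to the workload.

```conf
# Log every checkpoint and restart point.
log_checkpoints = on
# Log DDL statements only.
log_statement = 'ddl'
# Illustrative threshold (assumption): report temporary files of 10 MB or more.
# The value is interpreted as kilobytes when no unit is given.
log_temp_files = 10240
```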

log_directory: defines the directory in which log files are created. Once more, please note that if you have enabled detailed logging it is recommended to have a separate disk—different from the data directory disk—allocated for log_directory.

Example:

log_directory = '/this_is_a_new_disk/pg_log'

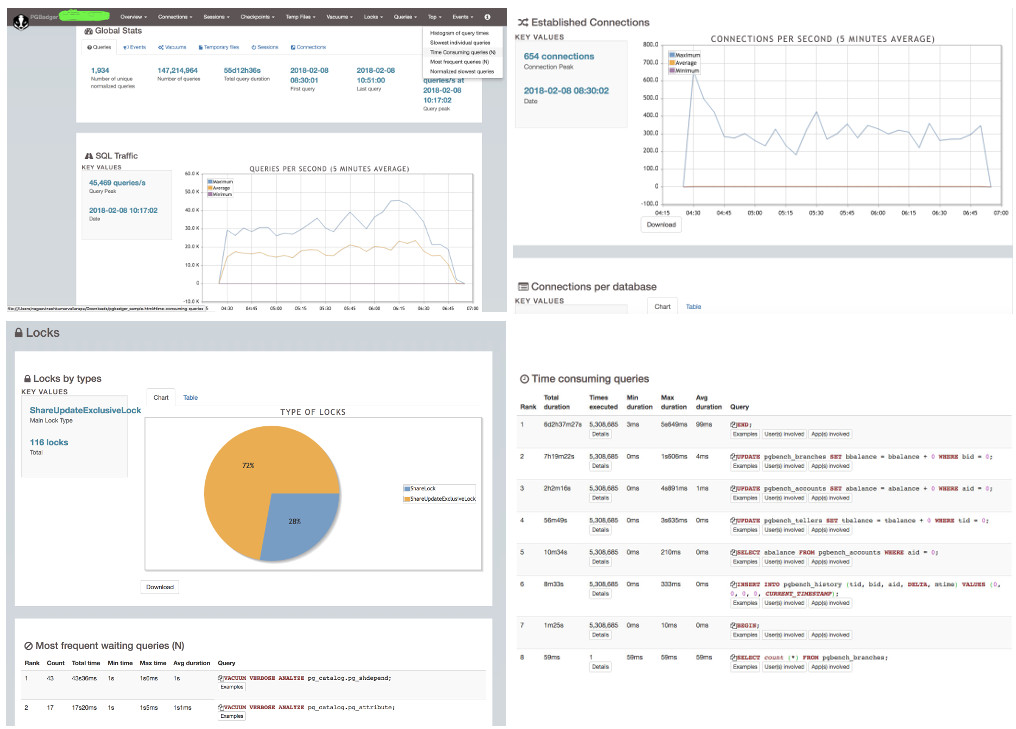

You cannot have a separate error log and a slow query log. Everything is written to one log file, which may be periodically rotated based on time and size. Over time, this log file may grow to several MBs or even GBs, depending on the amount of logging that has been enabled. It can become difficult for a DBA or developer to parse the log files and get a clear view of what is running slowly and how many times a query has run. To help with this task you may use pgBadger, a log analyzer for PostgreSQL, to parse log files and generate a rich HTML-based report that you can access from a browser. An example report can be seen in the screenshots below:

