You may have seen in the last couple of weekly news posts that Baron mentioned we are working on a new adaptive flushing algorithm in InnoDB. In fact, we already have three such algorithms in Percona Server (reflex, estimate, keep_average). Why do we need one more? Okay, first let me start by showing the current problems, and then we will go to solutions.

The basic problem is that, unfortunately, none of the existing flushing implementations (including both MySQL native adaptive flushing and that in Percona Server) can handle it properly. Our last invention, “keep_average”, is doing a very good job on systems based on SSD/Flash storage, but it is not so good for regular slow hard drives.

Let me state the following: If you have a lot of memory (and this is not rare nowadays, for example Cisco UCS C250), your database fits into memory, and your write rate is significant, then the slow disk is, by definition, not able to keep up with all the changes you do in memory.

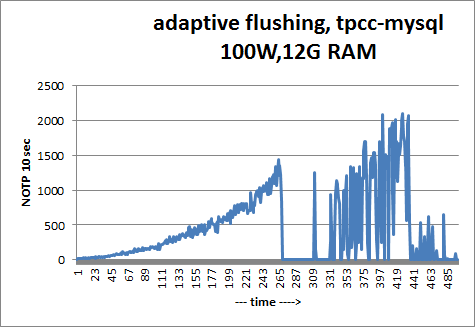

Here is a graph for the tpcc-mysql benchmark (100W ~ 10GB of data, 12G innodb_buffer_pool_size, 1G innodb_log_file_size),

MySQL 5.5.10 with innodb_adaptive_flushing=ON (default)). The problem here is that the system has quite slow hard drives (2 hard disks in software RAID0).

As you can see, after the initial warmup, throughput drops constantly, all the way to zero, and may stay in that state for a long time (minutes). This comes from the fact that MySQL performs changes in memory at a faster rate than it can write to disks. Eventually it gets into an “async” state, where InnoDB tries to flush as many pages as possible in order to keep the checkpoint age fitting into the InnoDB transaction logs, and this makes the situation even worse (these are the periods of zero throughput on the graph).

MySQL’s general recommendation for this case is to increase innodb_io_capacity and decrease innodb_max_dirty_pages_pct so as to have fewer dirty pages. But I call that tuning by shooting yourself in the left foot. (You can’t run fast with a broken foot, right ?) And actually it does not work, as MySQL is not able to keep the number of dirty pages within the given innodb_max_dirty_pages_pct limit.

Another possible solution would be to increase innodb_log_file_size, but that: 1) only delays the problem until later; 2) increases recovery time (and that is an important factor with slow disks); and 3) MySQL does not support innodb_log_file_size > 4GB (it is supported in Percona Server).

To make things more interesting, let’s touch on the topic of flushing of neighbor pages. Performing some research, we found it works in a way we really did not expect. My understanding was that flushing neighbor pages was implemented to perform sequential writes where possible and avoid random writes, which is quite critical for hard drives. What we found is that InnoDB does it in the following way. Say we want to flush page P. InnoDB is looking in an area of 128 pages around page P, and flushes all the pages in that area that are dirty. To illustrate, say we have an area of memory like this: ...D...D...D....P....D....D...D....D where each dot is a page that does not need flushing, each “D” is a dirty page that InnoDB will flush, and P is our page.

So, as a result of how it works, instead of performing 1 random write, InnoDB will perform 8 random writes. I do not have a good explanation for why it is implemented this way.

To make the situation even worse, the count of flushed neighbor pages is counted toward the number of pages we asked to be flushed. That is, for example, we see that to make an improvement in the checkpoint_age we need to flush 8 pages. InnoDB flushes page P and its 7 neighbors (which are not really neighbors), and then stops after this (alas, 8 pages flushed). That is, instead of flushing 8 pages what we would expect to be flushed, InnoDB flushes 1 needed page and 7 random pages, which may not even be relevant for improving the checkpoint age.

This makes calculating how many pages we need to flush extremely hard (read “impossible”), and this is one of the reasons why innodb_adaptive_flushing is not able to keep up.

What if we disable flushing of neighbor pages (we have the option innodb_flush_neighbor_pages in Percona Server)? Well, it may help to some extent, but remember that hard disks love sequential operations, and your throughput in this case may turn out significantly worse.

So what is the solution? This was exactly the topic of our research. We set these goals:

We have made good progress, and I will keep you posted on our ideas and results.

Vadim – please excuse my ignorance in innodb and file io inner workings – but I don’t understand why the 8 random IO operations of the surrounding “P” pages are need and done. Could these be queued into another buffer that would make them sequential and then write once to disk when it can?

Dave,

I think the problem is that on an IO starved machine, there is no “when it can” e.g. there is no point at which the disk doesn’t have priority IO to do.

The idea behind the neighbor flushing appears to be “well, I”m already going to have to move the seek head over to location X, so I may as well write through some other crap I have in memory that belongs in that general area”.

Basically if you’re taking a hit for moving the write head anyway, you may as well write more than one block when you’re over there, even if the extra 8 blocks aren’t priority blocks; the time they add to the overall operation isn’t consequential compared to the seek time you already took.

I don’t have any brilliant suggestions on how to solve the general case problem though; data file writes (as opposed to log writes) are, by definition, random writes. One of the points of having a transaction log is to do sequential writes there in realtime and then flush through your random writes later in another thread that can do some ladder seeking and some some of the write activity.

The flush-neighbors optimization appears not to work quite as it was intended. Even if the blocks are nearby, they are not completely contiguous, so they aren’t done as one single write. I think we are finding a few things in InnoDB that don’t work quite as they appear to have been intended, or as they are documented. (It’s hard to infer the designer’s intention sometimes, though.)

I can’t recall a benchmark for how much faster it is to write some blocks that are fairly close to each other, as opposed to blocks that are randomly distributed. I don’t want to guess at the results, even though I think I can compute it in theory. Moving the head may have some startup cost (or stop-cost), so it might be almost as slow to move it a track or two as to seek far across the disk. Maybe someone else knows and can comment. Regardless, we are seeing some significant opportunities for optimizing here.

I suppose I should point out there was one “solution” to this I worked with back in the day when people did crazy stuff like write their own database engines, but it’d require a fundamental, and performance impacting, change to the storage format that I wouldn’t recommend.

Some old school databases didn’t actually store data pointers in the indexes.

Instead the indexes stored an associator number.

There was a seperate btree that mapped an associator -> a physical address.

The associator file was generally quite small relative to the data blocks, so it was quite plausible to keep it in sync with a minimum of random writes.

What you’d do then is write your data blocks essentially at random, just write them wherever the head happened to be just get them down on disk.

You’d then go back and write your associators out in semi-sequenced order.

The downside of these system are pretty obvious: A) your associator file really better fit in memory or every read was two reads, and B) table scans were horrible since data blocks were not stored sequentially (although good scan functions would pre-scan the associators and arrange for an optimized read).

Most modern systems I’ve worked with like to try to minimize table fragmentation in the data files, but some old school ones kind of embraced it. When you get down to it though, there’s probably a reason that nobody does that anymore; the more modern technique seems to have won out :).

Baron,

I don’t have any hard numbers on whether there’s a startup time on disk seeks e.g. what’s the relative cost of moving 1 track as opposed to 10 tracks.

I do know though that with modern enterprise grade drives with multiple read heads, the “enveloped assumption” these days is that seek time = 0 on the head e.g. you just assume the head seeks to the track in zero time.

Once the head is over the track though you have to wait for, on average, 1/2 a rotation, so on, say a 15k drive you’re waiting (60/15000) * 0.5 or 2ms on average for a random IO in ideal conditions. Most people I work with typically add a 25% “fudge” to that, so the usual envelope numbers I use are:

15k drive -> 2.5 ms seek (400 IOPS/sec)

10k drive -> 3.75 ms seek (267 IOPS/sec)

7.5k drive -> 5 ms seek (200 IOPS/sec)

I’m embarassed to admit I don’t have the envelope numbers for SSD available. There’s no head motion there, but the writes still take time, so the number of IOPS ends up being limited by the ability of the write time.

Patrick,

The numbers you’re posting are very far from the practice what I could be able to see almost anywhere. I normally use 100 IOPS for 7.2K drive to 200 IOPS for 15K RPM 2.5″ drive and these are typically in the right ballpark.

Something else is interesting for a lot of IO, for example log IO you get synchronous serial writes which will require full disk rotation, not half you estimate in average.

Also which drives have multiple read heads ?

Dave,

There is a lot more gain from merging several pages in the single IO, such as in the case of DDPDD and yes in this case it makes sense to flush lower priority pages because they can tag along for free. However if you have holes it does not work well in case of IO pressure. Think even if such extra 8 pages have only half the “normal” cost to flush them because they are on the same track it still means cost of 4 extra IOs is added to advance checkpoint.

We also most likely will not remove support for Innodb current merging approach, but rather add another one which performs better in number of workloads.

Peter,

Regarding the seek times, yah, I rarely see numbers this good in practice, that’s theoretical max. I’ve seen SANs which do a lot of sophisticated ladder seeking get pretty close, but I don’t think I’ve ever seen a drive hit this in practice, so your numbers are probably more accurate.

As for drives with mutliple read heads, I think that’s relatively common these days isn’t it? We buy mostly Dell, but they’re basically rebranded Seagate Cheetahs and they’ve had 4 heads in them forever; I think the newer, 300G ones actually have 8. Might be I’m spoiled by generally buying data center drives though, do lower end one still have single read heads these days?

— Pat

Patrick,

Interesting I have not heard about it. Neither I could find any description of multiple read head technology on google. Do you have any pointers ?

Many drives have multiple platters each having its own head of course but I assume you mean multiple heads on the same platter.

Peter,

I think we’re talking about the same thing :). Everything I can recall buying for a data center has one read/write head per platter side. Naturally on a drive with more platters, you get more heads which is why I was surprised anything had only one head.

There are though (or at least were) some drives that put multiple heads per platter side. The conner chinook had two per side back in the mid 1990s I think (spun slow though).

Dunno if anybody is offering that technology these days; I think most people so interested in performance that the complexity of two independent read heads is worthwhile are probably buying SSDs these days.

— Pat

Patrick,

OK. This makes sense. I of course meant one head per platter. You made in your description like it is multiple heads per platter as multiple platters with one head per platter do not help you with average seek time – there will be only one platter which contains data in specific place, this

is where seek will need to happen.

In general you’re right SSD is a way to go if you need fast storage. Though there is a lot of systems with legacy storage and we’d like to ensure it also works well 🙂

Yah, re-reading my original post its pretty clearly badly worded, so no surprise it was confusing.

As for the SSDs, I’ve been wondering for a few years now if a storage engine optimized for an SSD makes sense. A lot of the nasty complexity and tricks that modern databases go through is all designed to turn random IO into sequential IO.

If you’re on an SSD though, random vs sequential is essentially the same performance, so a much simpler storage engine that just wrote stuff where it was convenient and didn’t deal with extend management or any of that other stuff might be both simpler (more stable) and faster (less aggregate work).

I’m sure there are subltties I’m not thinking through well like whole cell writes and whatnot, but it does strike me as an opportunity to radically simplify the storage layer.

I made a very similar comment yesterday, it seems for some reason it was considered as a spam.

Very good post, i like deep dives:).

One solution to the one-write problem is that we always flush range of pages. For example we have a range of page, which contains a page we want to flush and other dirty pages, for example D.PDD. If we flush all the 5 pages, this can be done with only one io, but we will flush a page we don’t need to. Some computationally cheap heauristics could be used to determine which range is the cheapest to flush (making assumptions about the distribution of dirty pages around the page we want to flush). I don’t know if this approach is good, but flushing neighbour pages may hurt some workloads if a dirty page changes again until it must be checkpointed, but this is true for the current implementation too.

I also wrote that there is difference between random io and random io when it comes to rotating disks. For blocks physically close to each other, head move isn’t necessary, the hard drive only has to lower/raise the electro magnet, which is a significantly cheaper operation. In fact, this can be measured and graphed easily. Run random io benchmark on the whole hard disk, and run the same benchmark again on a small partition at the beginning of the disk. The number of the second benchmark will be better because of this effect. The cost of moving the head can be visualized with a sequential io benchmark too, the beginning of the disk will be always faster, you can see “steps pattern” when you graphing throughput(time), and begin the benchmark from the beginning of the disk.

I can’t wait to read about your solution.

Peter Boros,

I’m not sure why your previous comment was considered spam. I don’t see it in the moderation panel.

To benchmark the cost of head movement we’d need to benchmark something other than the beginning of the disk, the ZCAV effect will always make the beginning much faster. And this is why you see the “step” pattern too as you move from one zone to the next.

Now, about flushing pages, one of the things that is a Hard Problem is understanding the interaction between different types of workloads. In the case where the data fits in memory it doesn’t apply, but in the case where data is much bigger than memory and some flushing is being motivated by the need to make room to read in a page that isn’t in the buffer pool, flushing a page might be very harmful. Imagine that I flush a hot page just because it’s close to another one that needs flushing, and then some other thread needs to read a page — well, that hot page is clean, so we might replace it under some circumstances, and then need to read it right back in again. Our ultimate goal is to try to find a flushing algorithm that is optimal for many different types of scenarios such as these. We’re starting small 🙂

Baron,

About spam: there is nothing wrong with that. After I saw my comment didn’t make it, i tried to resend it, it said duplicate entry, I suspected some moderation queue or caching. Filtering spam is a job of computers, not humans, but computers make errors. There is natural redundancy in human communication, which solves this problem (I commented again today). If I had had the opportunity to choose, I would rather choose this approach when I have to resend sometimes then reading the advertising junk here:) or make you guys moderating ads instead of writing exciting posts:).

You are right about ZCAV, maybe this effect could be eliminated by repeating random io benchmarks on different parts of the disks, and aggregate them.

I understand that the low memory scenario is very different than the one where data fits in memory but we can’t keep up with writes. The low memory scenario and hot pages leads to another desired feature: pin pages to the buffer pool or pin the tail of a table to the buffer pool, when the workload is mostly append-only, and I know I need the last data from a table, and don’t want other table’s pages to trash the cache.

“we need to have a throttling mechanism”. Yes. The solution to this problem necessarily involves continuous flushing and continuous new dirty pages. There can not be an algorithm which only flushes on some specific event, it has to happen as fast as the disk can do it.

It seems to me that the server should just write as fast as it can in some order that is deemed as optimal (maybe a mix of “advance oldest log number” and “contiguous on disk”). When new writes can only happen if max 75% of pages are dirty then the throttling follows automatically.

Throttling gets you more consistent performance (which is good), but it doesn’t really help your throughput does it? The idea behind a throttle would basically be to slow down query response enough that the disk subsystem could keep up so you don’t get the “fast until we overload the system with dirty buffers, then sporadically slow when various panic flush routines kick in” problem.

I’d be equally interested in trying to solve the throughput problem, or at least move the bar. Part of me does wonder though if its worth solving on magnetic disk; I suspect anybody who has this class of problem is going to SSD anyway, so a flush routine that was super efficient on SSD and only kicked in if the disk had performance characteristics similar to same would be worthwhile.

Note that I’m saying this despite the fact that all my production servers use winchester drives; this particular class or problem is one I don’t see for my workloads outside of the lab (I have commit throttling issues and read issues, but flushing not as much). As you can probably tell though I do find it rather interesting.

The problem is, no matter how fast the drives are, they can’t hope to keep up with every in-memory workload. A lot of people still want to run MySQL on slow storage, and often with lots of RAM (read: “the cloud”), so this is an important area to improve. But yeah, we want to improve everything else, too 🙂

Hi Vadim!

Thanks for sharing it and dig the problem! :-))

Well, Adaptive Flushing is yet far from optimal in MySQL 5.5, and if it’s not matching your workload – all the tuning you have to play with is “dirty pages percentage” + “IO capacity” + “redo log size”.. BTW, if you’re not looking for high performance, but rather for performance stability, you may get it by using small redo log files (say 128MB) – it’ll involve “furious flushing” much more often, but it’ll be also shorter, and your TPS drops will be smaller in depth.. – and will give you a kind of “throttling” solution if you have to deal with what you have 😉

Then I’m curious:

– why XtraDB “estimate” mode was not ok here?..

– are you sure there was no LRU flushing involving in parallel?..

– what is impact when “neighbor flushing” is OFF / ON ?..

– did you try to test without O_DIRECT ?..

– did you test with innodb_flush_log_at_trx_commit=1 or other?..

Well, I think the main problem is still coming from the fact that we’re able to write REDO logs faster than data files (seq. vs random), and if the limitation is really coming from the storage, the only option you have in this case is to delay REDO writes.. – setting innodb_flush_log_at_trx_commit=1 will be probably too radical, but adding a short auto-adaptive delays between REDO writes will help (or DML delays like InnoDB is already doing for purge lag)..

Curious to see more details about your testing! 🙂

Rgds,

-Dimitri

Looks like you really have a problem with comments on your site :-))

(seems to be a delay issue?)

Repost again:

Hi Vadim!

Thanks for sharing it and dig the problem! :-))

Well, Adaptive Flushing is yet far from optimal in MySQL 5.5, and if it’s not matching your workload – all the tuning you have to play with is “dirty pages percentage” + “IO capacity” + “redo log size”.. BTW, if you’re not looking for high performance, but rather for performance stability, you may get it by using small redo log files (say 128MB) – it’ll involve “furious flushing” much more often, but it’ll be also shorter, and your TPS drops will be smaller in depth.. – and will give you a kind of “throttling” solution if you have to deal with what you have 😉

Then I’m curious:

– why XtraDB “estimate” mode was not ok here?..

– are you sure there was no LRU flushing involving in parallel?..

– what is impact when “neighbor flushing” is OFF / ON ?..

– did you try to test without O_DIRECT ?..

– did you test with innodb_flush_log_at_trx_commit=1 or other?..

Well, I think the main problem is still coming from the fact that we’re able to write REDO logs faster than data files (seq. vs random), and if the limitation is really coming from the storage, the only option you have in this case is to delay REDO writes.. – setting innodb_flush_log_at_trx_commit=1 will be probably too radical, but adding a short auto-adaptive delays between REDO writes will help (or DML delays like InnoDB is already doing for purge lag)..

Curious to see more details about your testing! 🙂

Rgds,

-Dimitri

Patrick Casey, throttling is preferrable to “panic flush routines”. The panic flush routine causes the 0-throughput ranges seen in the chart posted by Baron. Those are to be avoided. The optimal solution would be a smooth throttling.

Tobi,

I don’t disagree at all; if the choice is between throttling and dealing with panic flushes (and server stalls), I’ll choose throttling any day.

In a perfect world though I’d rather not have to throttle, I’d like the database to keep up with my workload.

I think all of use here on the board are in agreement that this is a hard problem, so throttling may be the only practical solution; I’d just prefer a throughput solution.

— Pat

Peter,

If you have “D.PDD” situation, where “.” is not in the buffer pool you can’t really flush the whole range with single IO, you can only do it with 2IOs. I’m not sure if in some conditions they will be performed with same disk rotation.. in my benchmarks I could not see it. Yes If the situation is “DCPDD” where C is clean page you can flush all pages we just have to be careful to see how flushing the page which was not changed will play with all other aspects of Innodb.

Patrick,

When we speak about throttling we’re speaking about some throttling done to even out performance so instead of having 100 q/sec for 5 min and when 0 for 5 min our goal is to give you

50 q/sec for 10 minutes, as much as advancing your flushing speed allows you to get.

Peter,

With the . notation I meant the page is in the buffer pool, in the post these notations are for areas of memory. However, 2 ios could be better than 5, but in this case we could do only 1 io by flushing the PDD range.

I didn’t think about other aspects and this is intresting. Do you mean that we can’t make sure the clean page won’t change while we are running the decision logic what to flush? In this specific DCPDD case, it maybe ok in some cases, but what if we want to flush a DDDCCCPDDD range? Is there any way to tell that the middle C page didn’t become dirty while we are doing all this? Apart from some kind of range flushing mutex, which doesn’t sound good. Even serializable isolation won’t provide a locking mechanism covering this.

Peter,

Right now we flush all dirty pages in the area, such as PDD will be done with one write. We have been thinking about experimenting about flushing clean pages too if it allows to get more bulky writes. What I mean is mainly internal interaction in Innodb. The code change to push different dirty pages to the disk is a lot more simple and safe than understanding if it is safe to make Innodb to flush pages which are clean already.

Peter, what’s the potential failure mode of flushing a clean page? Naively it’d seem like a perfectly safe, albeit unnecessary, operation.

Patrick,

It is mostly code things. Innodb Is not designed to flush clean pages from buffer pool so we need to ensure we can do it properly. Also there are good questions like do clean pages need to go to double write buffer ? Probably not, if so how can we write them but skip from being put to double write buffer.

Dimitri,

There a lot parameters to play with, but we want to make it as tuning-less as possible.

One of parameters what is allowable to change is innodb_log_file_size, as it will

define balance between performance and recovery time.

So setting innodb_log_file_size as small as possible is not considering.

If go this way – we can just flush all pages as fast as possible, in fact I tried

this way of flushing, and performance is pretty stable.. around 5-10 NOTPM

> Then I’m curious:

>– why XtraDB “estimate†mode was not ok here?..

We still fall into async mode. algorithm is not able to keep up flushing with needed rate

>– are you sure there was no LRU flushing involving in parallel?..

I am pretty much sure. LRU flushing is active when we need to

replace dirty page to read new pages. as we have a lot of free pages

there, the LRU flushing is not working

> – what is impact when “neighbor flushing†is OFF / ON ?..

In some cases “neighbor flushing†OFF may help, but in fact

average throughput is worse than average throughput with ON.

> – did you try to test without O_DIRECT ?..

Nope

> – did you test with innodb_flush_log_at_trx_commit=1 or other?..

Nope

Dimitri,

There a lot parameters to play with, but we want to make it as tuning-less as possible.

One of parameters what is allowable to change is innodb_log_file_size, as it will

define balance between performance and recovery time.

So setting innodb_log_file_size as small as possible is not considering.

If go this way – we can just flush all pages as fast as possible, in fact I tried

this way of flushing, and performance is pretty stable.. around 5-10 NOTPM

> Then I’m curious:

>– why XtraDB “estimate” mode was not ok here?..

We still fall into async mode. algorithm is not able to keep up flushing with needed rate

>– are you sure there was no LRU flushing involving in parallel?..

I am pretty much sure. LRU flushing is active when we need to

replace dirty page to read new pages. as we have a lot of free pages

there, the LRU flushing is not working

> – what is impact when “neighbor flushing” is OFF / ON ?..

In some cases “neighbor flushing” OFF may help, but in fact

average throughput is worse than average throughput with ON.

> – did you try to test without O_DIRECT ?..

Nope

> – did you test with innodb_flush_log_at_trx_commit=1 or other?..

Nope

Patrick,

It is mostly code things. Innodb Is not designed to flush clean pages from buffer pool so we need to ensure we can do it properly. Also there are good questions like do clean pages need to go to double write buffer ? Probably not, if so how can we write them but skip from being put to double write buffer.

Peter, what’s the potential failure mode of flushing a clean page? Naively it’d seem like a perfectly safe, albeit unnecessary, operation.

Peter,

Right now we flush all dirty pages in the area, such as PDD will be done with one write. We have been thinking about experimenting about flushing clean pages too if it allows to get more bulky writes. What I mean is mainly internal interaction in Innodb. The code change to push different dirty pages to the disk is a lot more simple and safe than understanding if it is safe to make Innodb to flush pages which are clean already.

Peter,

With the . notation I meant the page is in the buffer pool, in the post these notations are for areas of memory. However, 2 ios could be better than 5, but in this case we could do only 1 io by flushing the PDD range.

I didn’t think about other aspects and this is intresting. Do you mean that we can’t make sure the clean page won’t change while we are running the decision logic what to flush? In this specific DCPDD case, it maybe ok in some cases, but what if we want to flush a DDDCCCPDDD range? Is there any way to tell that the middle C page didn’t become dirty while we are doing all this? Apart from some kind of range flushing mutex, which doesn’t sound good. Even serializable isolation won’t provide a locking mechanism covering this.

Patrick,

When we speak about throttling we’re speaking about some throttling done to even out performance so instead of having 100 q/sec for 5 min and when 0 for 5 min our goal is to give you

50 q/sec for 10 minutes, as much as advancing your flushing speed allows you to get.

Peter,

If you have “D.PDD” situation, where “.” is not in the buffer pool you can’t really flush the whole range with single IO, you can only do it with 2IOs. I’m not sure if in some conditions they will be performed with same disk rotation.. in my benchmarks I could not see it. Yes If the situation is “DCPDD” where C is clean page you can flush all pages we just have to be careful to see how flushing the page which was not changed will play with all other aspects of Innodb.

Tobi,

I don’t disagree at all; if the choice is between throttling and dealing with panic flushes (and server stalls), I’ll choose throttling any day.

In a perfect world though I’d rather not have to throttle, I’d like the database to keep up with my workload.

I think all of use here on the board are in agreement that this is a hard problem, so throttling may be the only practical solution; I’d just prefer a throughput solution.

— Pat

Patrick Casey, throttling is preferrable to “panic flush routines”. The panic flush routine causes the 0-throughput ranges seen in the chart posted by Baron. Those are to be avoided. The optimal solution would be a smooth throttling.

Looks like you really have a problem with comments on your site )

)

(seems to be a delay issue?)

Repost again:

Hi Vadim!

Thanks for sharing it and dig the problem! )

)

Well, Adaptive Flushing is yet far from optimal in MySQL 5.5, and if it’s not matching your workload – all the tuning you have to play with is “dirty pages percentage” + “IO capacity” + “redo log size”.. BTW, if you’re not looking for high performance, but rather for performance stability, you may get it by using small redo log files (say 128MB) – it’ll involve “furious flushing” much more often, but it’ll be also shorter, and your TPS drops will be smaller in depth.. – and will give you a kind of “throttling” solution if you have to deal with what you have

Then I’m curious:

– why XtraDB “estimate” mode was not ok here?..

– are you sure there was no LRU flushing involving in parallel?..

– what is impact when “neighbor flushing” is OFF / ON ?..

– did you try to test without O_DIRECT ?..

– did you test with innodb_flush_log_at_trx_commit=1 or other?..

Well, I think the main problem is still coming from the fact that we’re able to write REDO logs faster than data files (seq. vs random), and if the limitation is really coming from the storage, the only option you have in this case is to delay REDO writes.. – setting innodb_flush_log_at_trx_commit=1 will be probably too radical, but adding a short auto-adaptive delays between REDO writes will help (or DML delays like InnoDB is already doing for purge lag)..

Curious to see more details about your testing!

Rgds,

-Dimitri

The problem is, no matter how fast the drives are, they can’t hope to keep up with every in-memory workload. A lot of people still want to run MySQL on slow storage, and often with lots of RAM (read: “the cloud”), so this is an important area to improve. But yeah, we want to improve everything else, too

Throttling gets you more consistent performance (which is good), but it doesn’t really help your throughput does it? The idea behind a throttle would basically be to slow down query response enough that the disk subsystem could keep up so you don’t get the “fast until we overload the system with dirty buffers, then sporadically slow when various panic flush routines kick in” problem.

I’d be equally interested in trying to solve the throughput problem, or at least move the bar. Part of me does wonder though if its worth solving on magnetic disk; I suspect anybody who has this class of problem is going to SSD anyway, so a flush routine that was super efficient on SSD and only kicked in if the disk had performance characteristics similar to same would be worthwhile.

Note that I’m saying this despite the fact that all my production servers use winchester drives; this particular class or problem is one I don’t see for my workloads outside of the lab (I have commit throttling issues and read issues, but flushing not as much). As you can probably tell though I do find it rather interesting.

“we need to have a throttling mechanism”. Yes. The solution to this problem necessarily involves continuous flushing and continuous new dirty pages. There can not be an algorithm which only flushes on some specific event, it has to happen as fast as the disk can do it.

It seems to me that the server should just write as fast as it can in some order that is deemed as optimal (maybe a mix of “advance oldest log number” and “contiguous on disk”). When new writes can only happen if max 75% of pages are dirty then the throttling follows automatically.

Baron,

About spam: there is nothing wrong with that. After I saw my comment didn’t make it, i tried to resend it, it said duplicate entry, I suspected some moderation queue or caching. Filtering spam is a job of computers, not humans, but computers make errors. There is natural redundancy in human communication, which solves this problem (I commented again today). If I had had the opportunity to choose, I would rather choose this approach when I have to resend sometimes then reading the advertising junk here:) or make you guys moderating ads instead of writing exciting posts:).

You are right about ZCAV, maybe this effect could be eliminated by repeating random io benchmarks on different parts of the disks, and aggregate them.

I understand that the low memory scenario is very different than the one where data fits in memory but we can’t keep up with writes. The low memory scenario and hot pages leads to another desired feature: pin pages to the buffer pool or pin the tail of a table to the buffer pool, when the workload is mostly append-only, and I know I need the last data from a table, and don’t want other table’s pages to trash the cache.

Peter Boros,

I’m not sure why your previous comment was considered spam. I don’t see it in the moderation panel.

To benchmark the cost of head movement we’d need to benchmark something other than the beginning of the disk, the ZCAV effect will always make the beginning much faster. And this is why you see the “step” pattern too as you move from one zone to the next.

Now, about flushing pages, one of the things that is a Hard Problem is understanding the interaction between different types of workloads. In the case where the data fits in memory it doesn’t apply, but in the case where data is much bigger than memory and some flushing is being motivated by the need to make room to read in a page that isn’t in the buffer pool, flushing a page might be very harmful. Imagine that I flush a hot page just because it’s close to another one that needs flushing, and then some other thread needs to read a page — well, that hot page is clean, so we might replace it under some circumstances, and then need to read it right back in again. Our ultimate goal is to try to find a flushing algorithm that is optimal for many different types of scenarios such as these. We’re starting small

I made a very similar comment yesterday, it seems for some reason it was considered as a spam.

Very good post, i like deep dives:).

One solution to the one-write problem is that we always flush range of pages. For example we have a range of page, which contains a page we want to flush and other dirty pages, for example D.PDD. If we flush all the 5 pages, this can be done with only one io, but we will flush a page we don’t need to. Some computationally cheap heauristics could be used to determine which range is the cheapest to flush (making assumptions about the distribution of dirty pages around the page we want to flush). I don’t know if this approach is good, but flushing neighbour pages may hurt some workloads if a dirty page changes again until it must be checkpointed, but this is true for the current implementation too.

I also wrote that there is difference between random io and random io when it comes to rotating disks. For blocks physically close to each other, head move isn’t necessary, the hard drive only has to lower/raise the electro magnet, which is a significantly cheaper operation. In fact, this can be measured and graphed easily. Run random io benchmark on the whole hard disk, and run the same benchmark again on a small partition at the beginning of the disk. The number of the second benchmark will be better because of this effect. The cost of moving the head can be visualized with a sequential io benchmark too, the beginning of the disk will be always faster, you can see “steps pattern” when you graphing throughput(time), and begin the benchmark from the beginning of the disk.

I can’t wait to read about your solution.

Yah, re-reading my original post its pretty clearly badly worded, so no surprise it was confusing.

As for the SSDs, I’ve been wondering for a few years now if a storage engine optimized for an SSD makes sense. A lot of the nasty complexity and tricks that modern databases go through is all designed to turn random IO into sequential IO.

If you’re on an SSD though, random vs sequential is essentially the same performance, so a much simpler storage engine that just wrote stuff where it was convenient and didn’t deal with extend management or any of that other stuff might be both simpler (more stable) and faster (less aggregate work).

I’m sure there are subltties I’m not thinking through well like whole cell writes and whatnot, but it does strike me as an opportunity to radically simplify the storage layer.

Patrick,

OK. This makes sense. I of course meant one head per platter. You made in your description like it is multiple heads per platter as multiple platters with one head per platter do not help you with average seek time – there will be only one platter which contains data in specific place, this

is where seek will need to happen.

In general you’re right SSD is a way to go if you need fast storage. Though there is a lot of systems with legacy storage and we’d like to ensure it also works well

Peter,

I think we’re talking about the same thing . Everything I can recall buying for a data center has one read/write head per platter side. Naturally on a drive with more platters, you get more heads which is why I was surprised anything had only one head.

. Everything I can recall buying for a data center has one read/write head per platter side. Naturally on a drive with more platters, you get more heads which is why I was surprised anything had only one head.

There are though (or at least were) some drives that put multiple heads per platter side. The conner chinook had two per side back in the mid 1990s I think (spun slow though).

Dunno if anybody is offering that technology these days; I think most people so interested in performance that the complexity of two independent read heads is worthwhile are probably buying SSDs these days.

— Pat

Patrick,

Interesting I have not heard about it. Neither I could find any description of multiple read head technology on google. Do you have any pointers ?

Many drives have multiple platters each having its own head of course but I assume you mean multiple heads on the same platter.

Peter,

Regarding the seek times, yah, I rarely see numbers this good in practice, that’s theoretical max. I’ve seen SANs which do a lot of sophisticated ladder seeking get pretty close, but I don’t think I’ve ever seen a drive hit this in practice, so your numbers are probably more accurate.

As for drives with mutliple read heads, I think that’s relatively common these days isn’t it? We buy mostly Dell, but they’re basically rebranded Seagate Cheetahs and they’ve had 4 heads in them forever; I think the newer, 300G ones actually have 8. Might be I’m spoiled by generally buying data center drives though, do lower end one still have single read heads these days?

— Pat

Dave,

There is a lot more gain from merging several pages in the single IO, such as in the case of DDPDD and yes in this case it makes sense to flush lower priority pages because they can tag along for free. However if you have holes it does not work well in case of IO pressure. Think even if such extra 8 pages have only half the “normal” cost to flush them because they are on the same track it still means cost of 4 extra IOs is added to advance checkpoint.

We also most likely will not remove support for Innodb current merging approach, but rather add another one which performs better in number of workloads.