While a scale-out solution has traditionally been popular for MySQL, it’s interesting to see what room we now have to scale up – cheap memory, fast storage, better power efficiency. There certainly are a lot of options now – I’ve been meeting about a customer/week using Fusion-IO cards. One interesting choice I’ve seen people make however, is buying an SSD when they still have a lot of pages read/second – I would have preferred to buy memory instead, and use the storage device for writes.

Here’s the benchmark I came up with to confirm if this is the case:

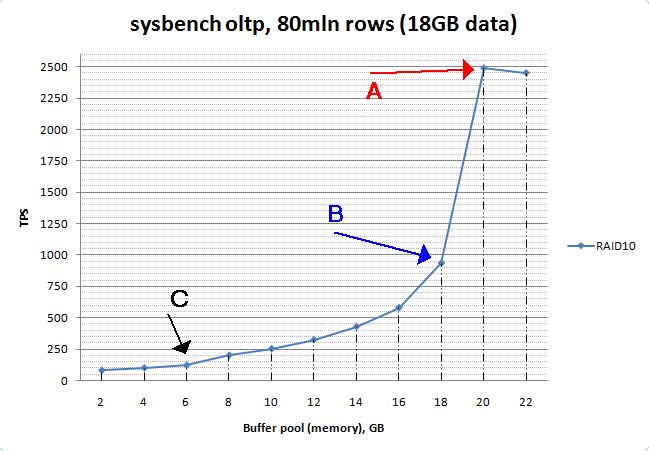

To start with, we have a test on the RAID10 storage to establish a baseline. The Y axis is transactions/second (more is better), the X axis is the size of innodb_buffer_pool_size:

Let me point out three interesting characteristics about this benchmark:

Let me point out three interesting characteristics about this benchmark:

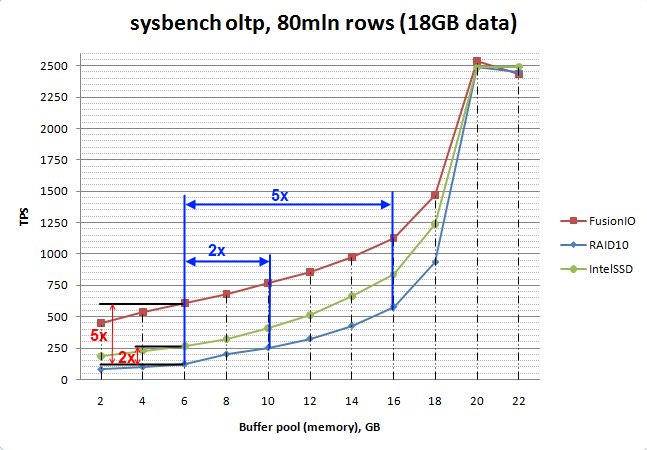

Where the C arrow was, in this graph a Fusion-IO card improves performance by about five times (or 2x with an Intel SSD). To get the same improvement with memory, you would have needed to add 60% more memory -or- 260% more memory for a 5x improvement. Imagine a situation where your C point is when you have 32GB of RAM and 100GB of data. Than it gets interesting:

Where the C arrow was, in this graph a Fusion-IO card improves performance by about five times (or 2x with an Intel SSD). To get the same improvement with memory, you would have needed to add 60% more memory -or- 260% more memory for a 5x improvement. Imagine a situation where your C point is when you have 32GB of RAM and 100GB of data. Than it gets interesting:

The workload here is designed to keep as much of the data hot as possible, but I guess the main lesson here is not to underestimate the size of your “active set” of data. For some people who just append data to some sort of logging table it may only need to be a small percentage – but in other cases it can be considerably higher. If you don’t know what your working set is – ask us!

Important note: This graph and these results are valid only for sysbench uniform. In your particular workload the points B and C may be located in differently.

Raw results:

| Buffer pool, GB | FusionIO | Intel SSD | RAID 10 |

| 2 | 450.3 | 186.33 | 80.67 |

| 4 | 538.19 | 230.35 | 99.73 |

| 6 | 608.15 | 268.18 | 121.71 |

| 8 | 679.44 | 324.03 | 201.74 |

| 10 | 769.44 | 407.56 | 252.84 |

| 12 | 855.89 | 511.49 | 324.38 |

| 14 | 976.74 | 664.38 | 429.15 |

| 16 | 1127.23 | 836.17 | 579.29 |

| 18 | 1471.98 | 1236.9 | 934.78 |

| 20 | 2536.16 | 2485.63 | 2486.88 |

| 22 | 2433.13 | 2492.06 | 2448.88 |

Vadim, I was wondering what you think about SSDs for some more special edge cases. i.e.

In terms of ‘operational pains’ warming a cache post-restart on a device capable of tens of thousands of IOPS should be better? Also – for a (single threaded) slave you may care more about service time than throughput of the device.

> There are already appliances on the market which use 8 Intel SSD devices

Eight? How about 24: http://www.siliconmechanics.com/i27100/storage-server.php

Of course, when you put 24 64GB SLC drives in there, it’s suddenly $25k, so that might not be super practical. 😉

The answer here appears to be “buy more memory” doesn’t it?

A 20G box on RAID 10 runs rings around a 16G box on Fusion IO, and for the price of one Fusion IO card, I can buy a lot of memory.

I can conceive of plenty of cases where I want fast storage, but if my workload looked like this I’d throw memory at the box for years before I considered upgrading the storage system.

Even a mid range 2U dell server will take > 128G of memory these days.

We just did an exhaustive test of SSD.

Long story short, I think the Intel X-25E SSDs *can* replace a MySQL DB but you have to shard it.

Instead of one big DB box with 64GB of memory and 6x SAS you’ll need to use 6 DB instances each with it’s own replication thread to another server and have the data on 6 SSDs backed by a JBOD.

The problem is that MySQL replication is single threaded and this basically destroys performance because adding 6 or 100 SSDs still yields the same performance as one SSD.

On the product information for the FusionIO cards, they mention (or encourage this as an option) to make the FusionIO card the swap partition for a linux server (I have it in a pdf a sales guy gave me).

If that is in fact the case, would you be able to store all the database in memory along with the FusionIO-swap and let the system shift around with the blocks that are more commonly used?

*Correction*

When I meant “store all the database in memory” I meant, like the article was refering, to have the memory as large as the database size.

Hi,

is on this hardware (RAID 10 with BBU, SSD,…) the setting:

XFS Filesystem mounted with nobarrier option

reliable for production use?

regards Frank

‘@4: That is actually a benchmark that I would like to see or try myself: “How much load in terms of qps can you add to a MySQL master until the slave starts to lag? Now, switching from SBR to RBR, how does this affect size of the binlogs and how does it affect performance?”

Frank,

As long as you have a battery backed write cache the nobarrier option is safe, and in fact recommended.

See the XFS FAQ(http://xfs.org/index.php/XFS_FAQ):

Q. Should barriers be enabled with storage which has a persistent write cache?

Many hardware RAID have a persistent write cache which preserves it across power failure, interface resets, system crashes, etc. Using write barriers in this instance is not recommended and will in fact lower performance. Therefore, it is recommended to turn off the barrier support and mount the filesystem with “nobarrier”. But take care about the hard disk write cache, which should be off.

Does sysbench oltp provide the whole dataset of the same temperature (no hot/cold data sets)? If yes then I think in the real world the whole picture could be different. Let’s for example naively take the Pareto principle into account. 20% of data provide 80% of io. In this case if we have buffer pool only for 20% (4gb), we cover 80% of io, which results in 600tps instead of 100tps.

Interesting read. I took notice that it is an oldish article(2010) when you mentioned that a 100 GB ssd is expensive. SSD prices went down faster than memory prices, I guess your point of buying an ssd in “Case C” is not more accurate than ever 🙂