ARM processors have been around for a while. Around 2015 and 2016 there were a couple of attempts by the community to port MongoDB to this architecture. At the time, the main storage engine was MMAPv1 and most of the available ARM boards were 32-bit. Overall, the port worked, but running MongoDB on a Raspberry Pi was more of a hack than a proper setup. The public cloud providers didn’t yet offer machines running on these processors.

ARM processors are power-efficient and, for this reason, they are used in smartphones, smart devices and, now, even laptops. It was just a matter of time before they became available in the cloud as well. Now that AWS is offering ARM-based instances you might be thinking: “Hmmm, these instances include the same number of cores and the same amount of memory as the traditional x86-based offers, but cost a fraction of the price!”

But do they perform alike?

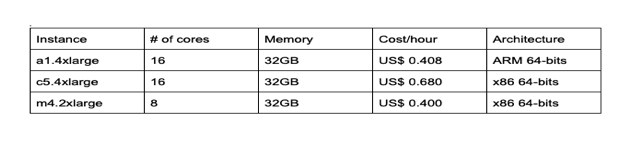

In this blog, we selected three different AWS instances to compare: one powered by an ARM processor, the second one backed by a traditional x86_64 Intel processor with the same number of cores and memory as the ARM instance, and finally another Intel-backed instance that costs roughly the same as the ARM instance but carries half as many cores. We acknowledge these processors are not supposed to be “equivalent”, and we do not intend to go deeper in CPU architecture in this blog. Our goal is purely to check how the ARM-backed instance fares in comparison to the Intel-based ones.

These are the instances we will consider in this blog post.

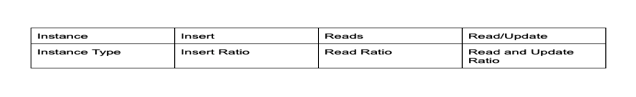

We will use the Yahoo Cloud Serving Benchmark (YCSB, https://github.com/brianfrankcooper/YCSB) running on a dedicated instance (c5d.4xlarge) to simulate load in three distinct tests: an insert-only load, a read-only run, and a mixed workload of reads, updates, and scans.

We will run each test with a varying number of concurrent threads (32, 64, and 128), repeating each set three times and keeping only the second-best result.
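As a minimal sketch of that selection rule, assuming "best" means highest throughput in operations per second: with three repetitions, the second-best result is simply the median. The function name and the sample readings below are hypothetical and only illustrate the idea.

```python
def second_best(throughputs):
    """Return the second-highest value from a list of throughput readings."""
    if len(throughputs) < 2:
        raise ValueError("need at least two runs to pick a second-best result")
    # Sort from highest to lowest and take the runner-up.
    return sorted(throughputs, reverse=True)[1]


# Hypothetical ops/sec readings from three repetitions of one test.
runs = [41250.0, 43010.5, 39875.2]
print(second_best(runs))  # 41250.0
```

Discarding both the best and the worst run this way reduces the influence of a single unusually fast or slow repetition.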

All instances will run the same MongoDB version (4.0.3, installed from a tarball and running with default settings) and the same operating system, Ubuntu 16.04. We chose this setup because MongoDB provides an ARM build for Ubuntu-based machines.

All the instances will be configured with:

We start by setting up the benchmark software we will use for the tests, YCSB. The first task is to spin up a powerful machine (c5d.4xlarge) to run the software and then prepare the environment:

YCSB requires Java, Maven, Python, and pymongo, which don’t come by default in our Linux version (Ubuntu server x86). Here are the steps we used to configure our environment:

Installing Java

    # Install a JDK (on Ubuntu the package is named default-jdk)
    sudo apt-get install -y default-jdk

Installing Maven

    wget http://ftp.heanet.ie/mirrors/www.apache.org/dist/maven/maven-3/3.1.1/binaries/apache-maven-3.1.1-bin.tar.gz
    sudo tar xzf apache-maven-*-bin.tar.gz -C /usr/local
    cd /usr/local
    sudo ln -s apache-maven-* maven
    sudo vi /etc/profile.d/maven.sh

Add the following to maven.sh

    export M2_HOME=/usr/local/maven
    export PATH=${M2_HOME}/bin:${PATH}

Installing Python 2.7

    sudo apt-get install python2.7

Installing pip to resolve the pymongo dependency

    sudo apt-get install python-pip

Installing pymongo (driver)

    sudo pip install pymongo

Installing YCSB

    curl -O --location https://github.com/brianfrankcooper/YCSB/releases/download/0.5.0/ycsb-0.5.0.tar.gz
    tar xfvz ycsb-0.5.0.tar.gz
    cd ycsb-0.5.0
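Once the steps above are done, a quick check that the prerequisites are actually reachable can save a failed benchmark run later. The following is only a sketch and not part of the original procedure: it assumes a Python 3 interpreter is available (it relies on `shutil.which`), and the helper names `missing_tools` and `pymongo_available` are made up for this example.

```python
import shutil

# Executables YCSB depends on, as listed in the installation steps.
REQUIRED_TOOLS = ["java", "mvn", "python"]


def missing_tools(tools):
    """Return the tools from the list that cannot be found on the PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


def pymongo_available():
    """Report whether the pymongo driver can be imported by this interpreter."""
    try:
        import pymongo  # noqa: F401 (imported only to test availability)
    except ImportError:
        return False
    return True


print("missing executables:", missing_tools(REQUIRED_TOOLS))
print("pymongo importable:", pymongo_available())
```

Note that `pymongo_available` only tells you about the interpreter running the check, which may differ from the Python 2.7 installation used in the steps above.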

YCSB comes with different workloads, and also allows for the customization of a workload to match our own requirements. If you want to learn more about the workloads, have a look at https://github.com/brianfrankcooper/YCSB/blob/master/workloads/workload_template.

First, we will edit the workloads/workloada file to perform 1 million inserts (for our first test) while also preparing it to later perform only reads (for our second test):

    recordcount=1000000
    operationcount=1000000
    workload=com.yahoo.ycsb.workloads.CoreWorkload
    readallfields=true
    readproportion=1
    updateproportion=0.0

We will then change the workloads/workloadb file to provide a mixed workload for our third test. We set it to perform 10 million operations, broken down into 70% reads, 25% updates, and 5% scans, and we cap the maximum number of documents per scan at 2000 in an effort to emulate real traffic. Workloads are not perfect, right?

    recordcount=10000000
    operationcount=10000000
    workload=com.yahoo.ycsb.workloads.CoreWorkload
    readallfields=true
    readproportion=0.7
    updateproportion=0.25
    scanproportion=0.05
    insertproportion=0
    maxscanlength=2000

With that, we have the environment configured for testing.
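Because the operation mix is spread across several properties, it is easy for the file and the intended mix to drift apart. The sketch below parses the mixed workload settings shown above and reports the share of each operation type. The parser is a deliberately simplified reading of the `key=value` format, and the function names are hypothetical. Whether YCSB itself requires the proportions to add up to 1 is not something this check relies on.

```python
def parse_properties(text):
    """Parse simple key=value lines, skipping blanks and # comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def operation_mix(props):
    """Return {operation: proportion} for every *proportion property."""
    suffix = "proportion"
    return {
        key[: -len(suffix)]: float(value)
        for key, value in props.items()
        if key.endswith(suffix)
    }


# The mixed workload settings from the text above.
WORKLOAD_B = """
recordcount=10000000
operationcount=10000000
workload=com.yahoo.ycsb.workloads.CoreWorkload
readallfields=true
readproportion=0.7
updateproportion=0.25
scanproportion=0.05
insertproportion=0
maxscanlength=2000
"""

mix = operation_mix(parse_properties(WORKLOAD_B))
total = sum(mix.values())
print(mix)
print("proportions add up to 1:", abs(total - 1.0) < 1e-9)
```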

With all instances configured and ready, we run the stress test against our MongoDB servers using the following command:

    ./bin/ycsb [load/run] mongodb -s -P workloads/workload[ab] -threads [32/64/128] \
        -p mongodb.url=mongodb://xxx.xxx.xxx.xxx:27017/ycsb0000[0-9] \
        -jvm-args="-Dlogback.configurationFile=disablelogs.xml"

The parameters between brackets varied according to the instance and operation being executed:

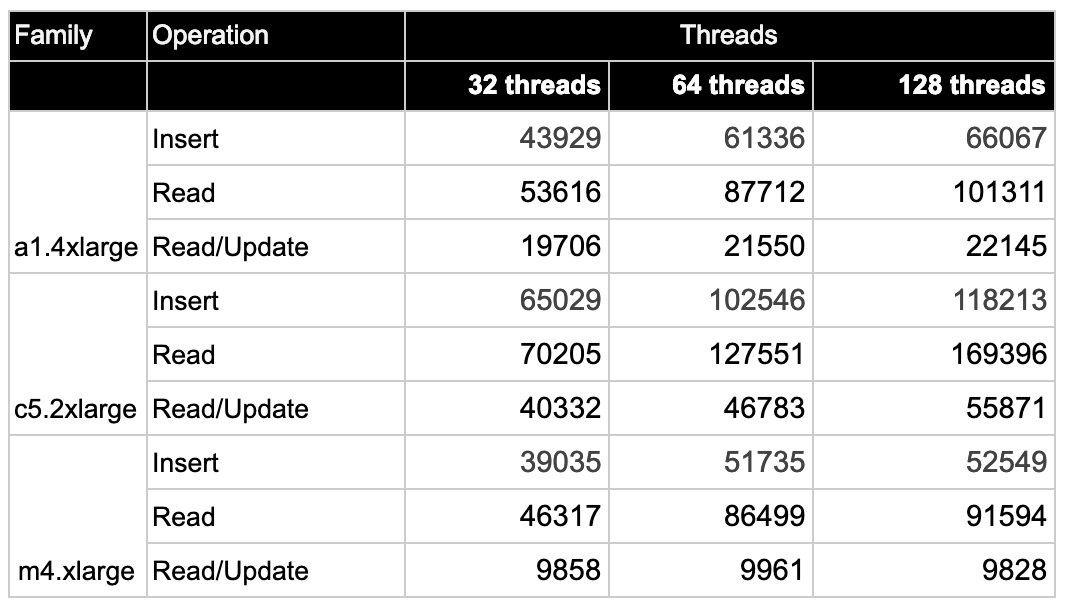

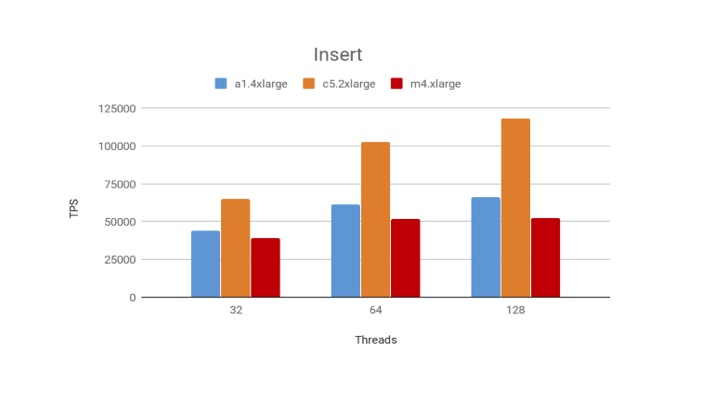

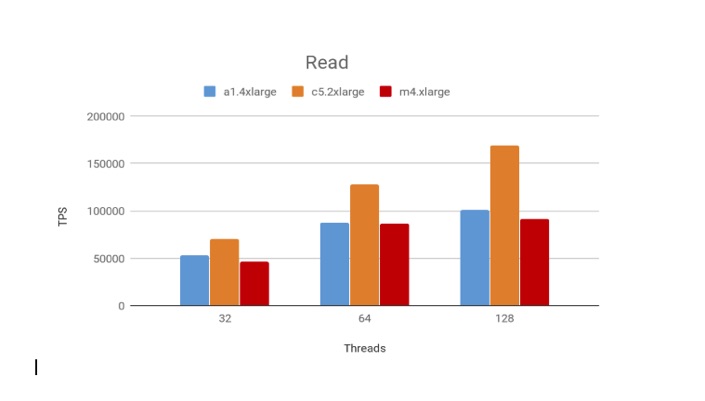

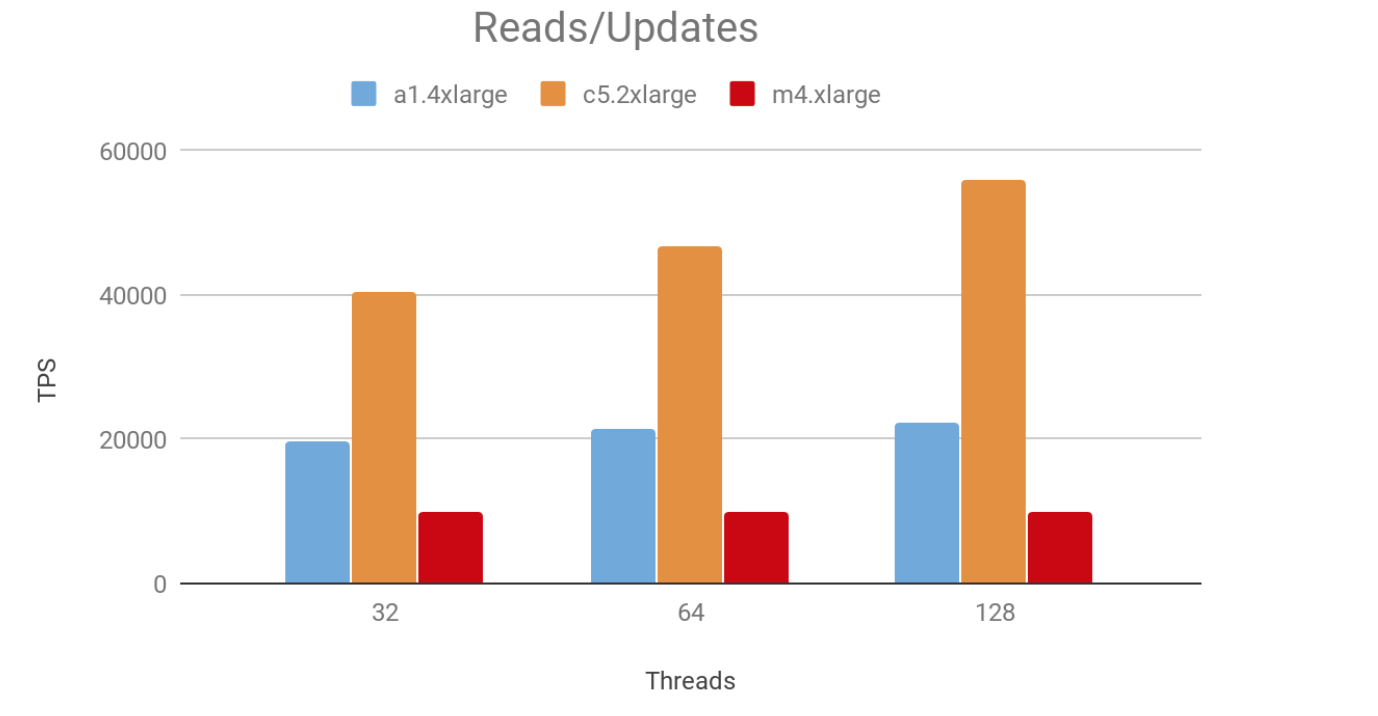
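The bracketed parameters in the command expand into a small matrix of runs. As a rough sketch, the snippet below generates one command line per combination of test and thread count. The mapping of the three tests to YCSB phases (`load` with workloada, `run` with workloada, `run` with workloadb) is an assumption based on the test descriptions, and the database name passed in is a placeholder. The host is left as the same masked address used above.

```python
from itertools import product

# Assumed mapping of the three tests to (YCSB phase, workload file suffix).
TESTS = [("load", "a"), ("run", "a"), ("run", "b")]
THREADS = [32, 64, 128]


def ycsb_command(phase, workload, threads, host, database):
    """Build one YCSB command line following the template in the text."""
    return (
        "./bin/ycsb {phase} mongodb -s -P workloads/workload{workload} "
        "-threads {threads} "
        "-p mongodb.url=mongodb://{host}:27017/{database} "
        '-jvm-args="-Dlogback.configurationFile=disablelogs.xml"'
    ).format(
        phase=phase,
        workload=workload,
        threads=threads,
        host=host,
        database=database,
    )


def build_commands(host, database):
    """Return one command per (test, thread count) combination."""
    return [
        ycsb_command(phase, workload, threads, host, database)
        for (phase, workload), threads in product(TESTS, THREADS)
    ]


# Placeholder host and database name, for illustration only.
for command in build_commands("xxx.xxx.xxx.xxx", "ycsb00000"):
    print(command)
```

Each set of commands would still need to be repeated three times per instance to follow the procedure described earlier.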

Without further ado, the table below summarizes the results for our tests:

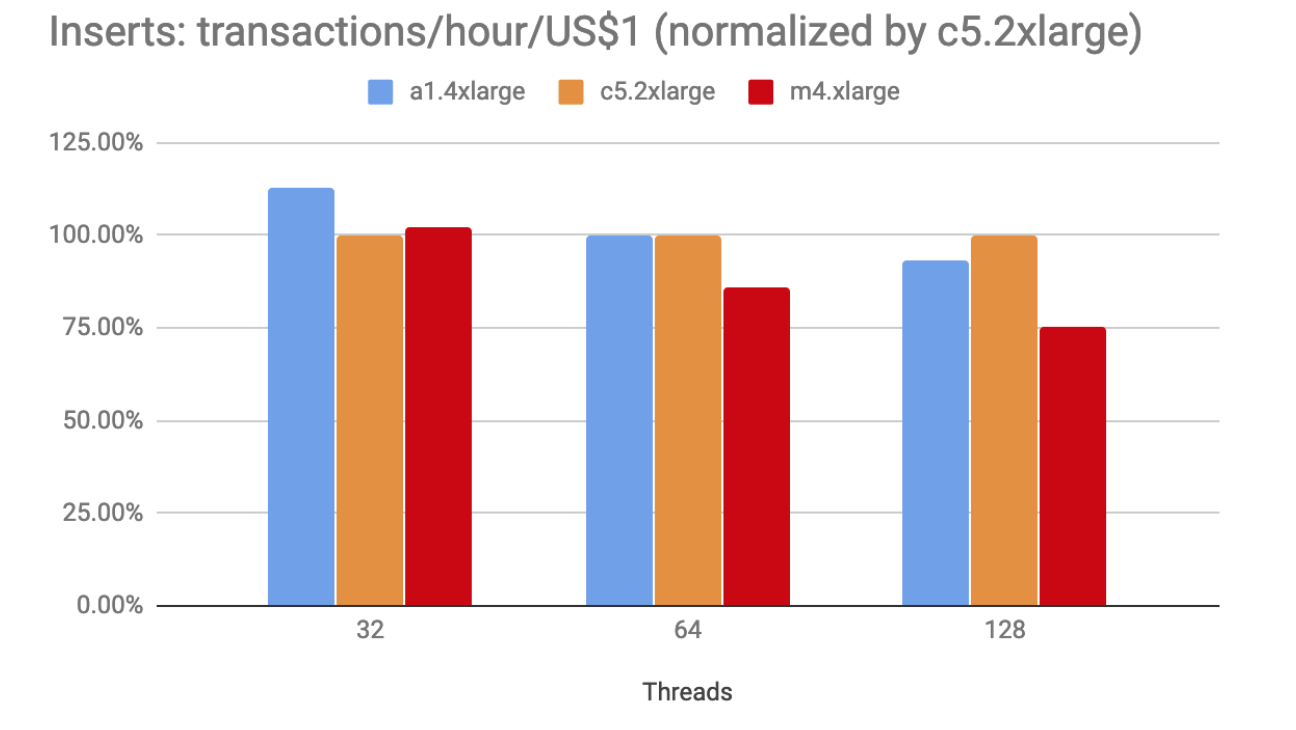

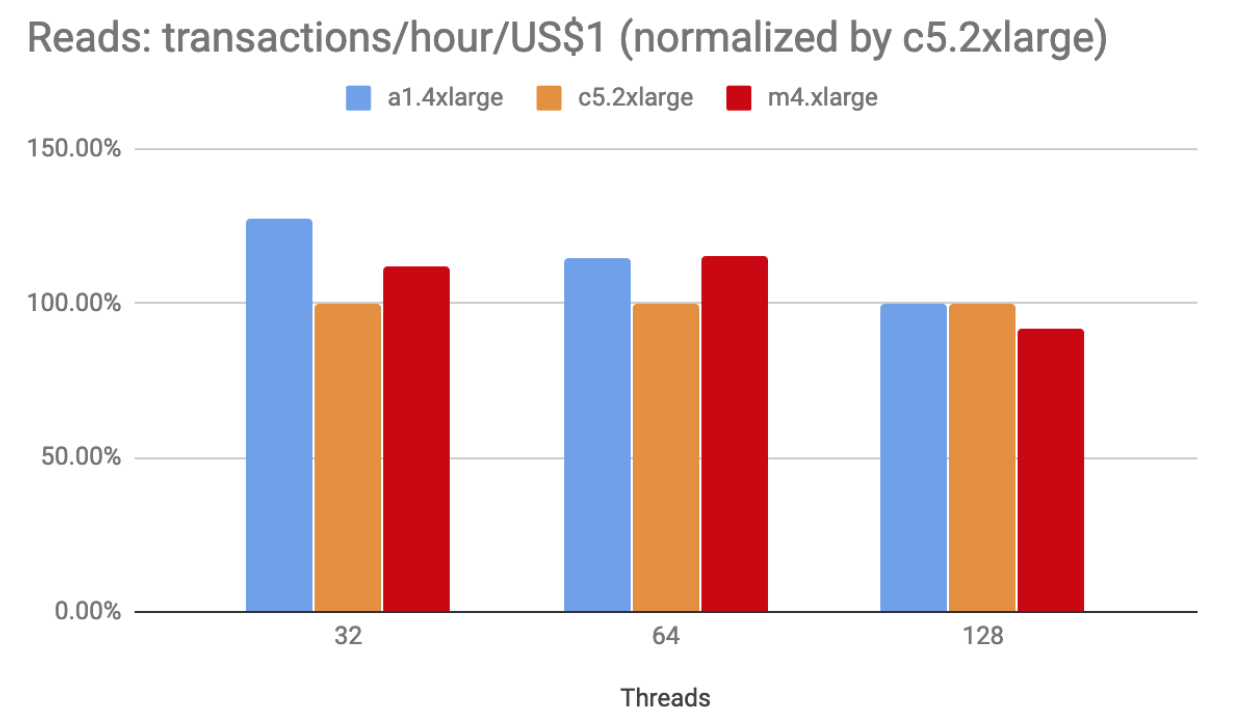

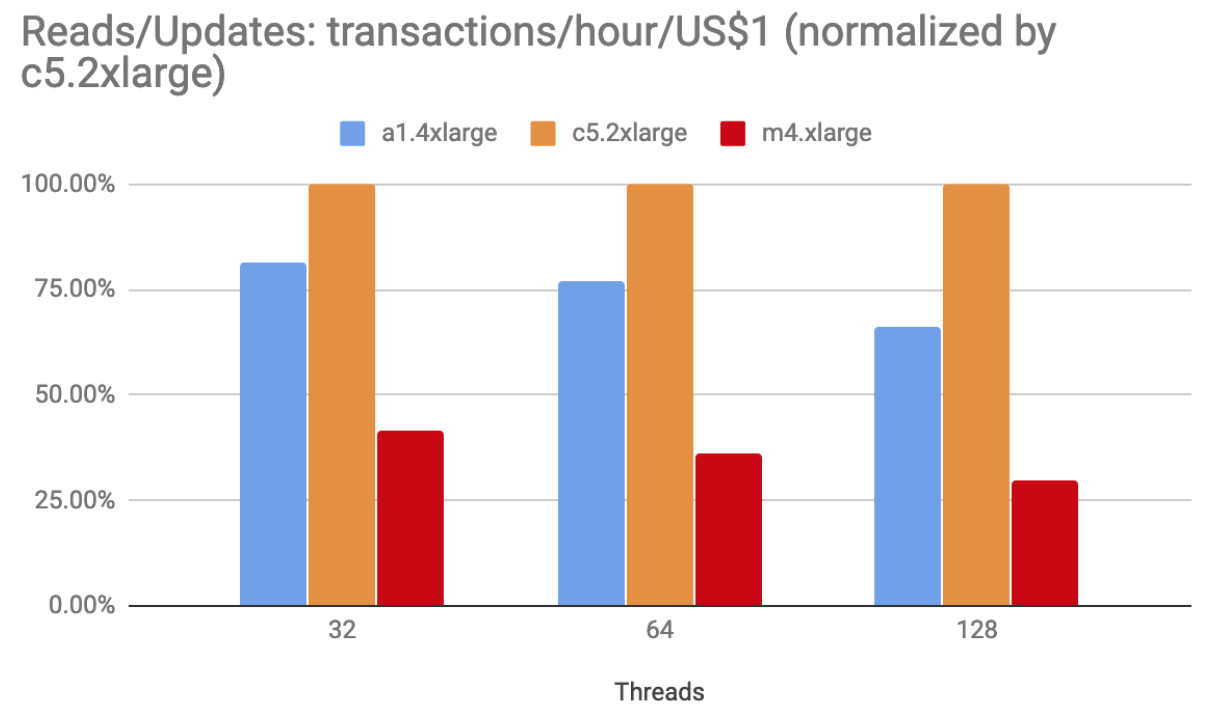

Considering throughput alone – and in the context of those tests, particularly the last one – you may get more performance for the same cost. That’s certainly not always the case, which our results above also demonstrate. And, as usual, it depends on “how much performance do you need” – a matter that is even more pertinent in the cloud. With that in mind, we had another look at our data under the “performance cost” lens.

As we saw above, the c5.4xlarge instance performed better than the other two instances while costing a little over 50% more. Did it deliver 50% more performance as well? Well, sometimes it did even more than that, but not always. We used the following formula to extrapolate the OPS (Operations Per Second) data we got from our tests into OPH (Operations Per Hour), so we could then calculate how much bang (operations) for the buck (US$1) each instance was able to provide:

transactions/hour/US$1 = (OPS * 3600) / instance cost per hour
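The formula translates directly into a few lines of code. In this sketch the function name is made up, and the throughput and hourly price in the example are invented round numbers, not measurements or real instance prices.

```python
def ops_per_dollar(ops_per_second, hourly_cost_usd):
    """Operations obtained per US$1: (OPS * 3600) / instance cost per hour."""
    if hourly_cost_usd <= 0:
        raise ValueError("hourly cost must be positive")
    return ops_per_second * 3600 / hourly_cost_usd


# Hypothetical example: 10,000 ops/sec on an instance costing US$0.50 per hour.
print(ops_per_dollar(10000, 0.50))  # 72000000.0
```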

This is, of course, an artificial metric that aims to correlate performance and cost. For this reason, instead of plotting the raw values, we have normalized the results using the best-performing instance as the baseline (100%).
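A minimal sketch of that normalization is shown below. The instance labels and values are placeholders chosen to make the arithmetic easy to follow. They are not results from these tests.

```python
def normalize_to_best(values):
    """Express each value as a percentage of the highest value (the baseline)."""
    best = max(values.values())
    return {name: 100.0 * value / best for name, value in values.items()}


# Placeholder operations-per-dollar figures (in millions), not measured data.
example = {"instance_a": 50.0, "instance_b": 40.0, "instance_c": 25.0}
print(normalize_to_best(example))
# {'instance_a': 100.0, 'instance_b': 80.0, 'instance_c': 50.0}
```

Normalizing per test, rather than across all tests at once, keeps each chart readable even when absolute throughput differs widely between workloads.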

The intent behind these was only to demonstrate another way to evaluate how much we’re getting for what we’re paying. Of course, you need to have a clear understanding of your own requirements in order to make a balanced decision.

We hope this post sparks your curiosity not only about how MongoDB may perform on ARM-based servers, but also about how you can run your own tests with the YCSB benchmark. Feel free to reach out to us through the comments section below if you have any suggestions, questions, or other observations to make about the work we presented here.


Thank you for sharing details that allow us to reproduce this. The open question is how large the price/perf difference needs to be to motivate a migration to ARM.

Very interesting and well written. Thank you for the insights!

Thanks for the well-written article…a couple of comments/questions:

– the result charts refer to different instance types than the table in the beginning of the article, can you confirm which instance types were used? i.e., c5.2xlarge or c5.4xlarge and, m4.2xlarge or m4.xlarge

– was there a reason you stopped at 128 ycsb threads when the performance was still increasing (compared to previous run at 64 threads)?

– I like your method of presenting normalized performance per $

Hi HarpPDX,

We used m4.2xlarge and c5.4xlarge, I will fix the graphs. Thanks for letting us know.

Regarding the threads: at about 150 threads we hit the ARM limitation and the performance didn’t increase. CPU usage went to 100% and context switching killed the performance.

We will do a follow up article with more data soon.

Did you happen to connect this to PMM? I am curious about the CPU scaling perspective: after, say, 12 cores, was x86 or ARM more effective?

Can you rerun these tests comparing the new Graviton2 instances? It would be interesting to see how this compares