In this post, we will manage a ProxySQL Cluster with “core” and “satellite” nodes. What does that mean? In a classic cluster of hundreds of nodes, a single change on any one node replicates to the entire cluster.

Any mistake or change replicates to all nodes, which can make it difficult to find the most recently updated node or the node that holds the source of truth.

Before continuing, you need to install and configure ProxySQL Cluster. You can check my previous blogs for more information:

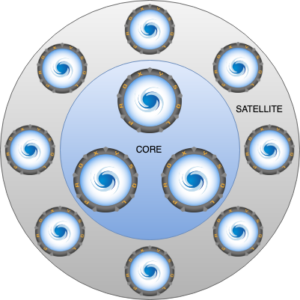

The idea behind “core” and “satellite” nodes is to designate only a few nodes as masters (aka core) and the rest as slaves (aka satellite). Any change on a “core” node is replicated to all core and satellite nodes, but a change on a “satellite” node is not replicated. This is useful when managing a large number of nodes, because it minimizes manual errors and false-positive changes and spares us the difficult task of hunting for the problematic node across the whole cluster.

This works in ProxySQL versions 1.4 and 2.

When you configure a classic ProxySQL Cluster, every node listens to every other node. With this setup, all nodes listen only to the few nodes you choose as “core” nodes.

A change on a node that is not listed in the “proxysql_servers” table will not be replicated, because no other node connects to its admin port to watch for changes.

Each node opens one thread per server listed in the proxysql_servers table and connects to that IP on the admin port (6032 by default), waiting for changes in four tables: mysql_servers, mysql_users, proxysql_servers, and mysql_query_rules. The only relationship between a core and a satellite node is that the satellite connects to the core and waits for changes.
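As a rough illustration of that relationship, the sketch below models who watches whom. It is a toy model in Python, not ProxySQL code: the `nodes_reached` helper, the node names, and the five-node layout are assumptions made up for this example. Each node polls only the peers in its own proxysql_servers list, so a change spreads from a node only to the nodes that list it.

```python
from collections import deque

def nodes_reached(proxysql_servers, origin):
    """Return the set of nodes that end up with a change made on `origin`.

    `proxysql_servers` maps each node name to the list of peers it polls
    (a stand-in for that node's proxysql_servers table).
    """
    reached = {origin}
    queue = deque([origin])
    while queue:
        source = queue.popleft()
        # A node picks up the change if it polls a node that already has it.
        for node, peers in proxysql_servers.items():
            if source in peers and node not in reached:
                reached.add(node)
                queue.append(node)
    return reached

cores = ["core1", "core2", "core3"]
satellites = ["sat1", "sat2"]
# Every node, core or satellite, lists only the core nodes.
topology = {node: list(cores) for node in cores + satellites}

print(sorted(nodes_reached(topology, "core1")))  # change made on a core node
print(sorted(nodes_reached(topology, "sat1")))   # change made on a satellite
```

In this model, a change made on `core1` reaches every node, while a change made on `sat1` stays on `sat1`, because no node lists it as a peer.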

The setup is simple: on every node in the cluster, core and satellite alike, we insert only the IPs of the core nodes into the proxysql_servers table. If you followed my previous posts and already configured a ProxySQL Cluster, first clean the previous configuration from the following tables so we can test from scratch:

    delete from mysql_query_rules;
    LOAD MYSQL QUERY RULES TO RUNTIME;
    SAVE MYSQL QUERY RULES TO DISK;

    delete from mysql_servers;
    LOAD MYSQL SERVERS TO RUNTIME;
    SAVE MYSQL SERVERS TO DISK;

    delete from mysql_users;
    LOAD MYSQL USERS TO RUNTIME;
    SAVE MYSQL USERS TO DISK;

    delete from proxysql_servers;
    LOAD PROXYSQL SERVERS TO RUNTIME;
    SAVE PROXYSQL SERVERS TO DISK;

Suppose, for example, that we have 100 ProxySQL nodes with the following hostnames and IPs:

    proxysql_node1 = 10.0.0.1
    proxysql_node2 = 10.0.0.2
    ...
    proxysql_node100 = 10.0.0.100

And we want to configure and use only 3 core nodes, so we select the first 3 nodes from the cluster:

    proxysql_node1 = 10.0.0.1
    proxysql_node2 = 10.0.0.2
    proxysql_node3 = 10.0.0.3

And the rest of the nodes will be the satellite nodes:

    proxysql_node4 = 10.0.0.4
    proxysql_node5 = 10.0.0.5
    ...
    proxysql_node100 = 10.0.0.100

We will use the above IPs to configure the proxysql_servers table on every node with only those 3 IPs. That way, all ProxySQL nodes (from proxysql_node1 to proxysql_node100) listen for changes only on those 3 nodes.

    DELETE FROM proxysql_servers;

    INSERT INTO proxysql_servers (hostname,port,weight,comment) VALUES ('10.0.0.1',6032,0,'proxysql_node1');
    INSERT INTO proxysql_servers (hostname,port,weight,comment) VALUES ('10.0.0.2',6032,0,'proxysql_node2');
    INSERT INTO proxysql_servers (hostname,port,weight,comment) VALUES ('10.0.0.3',6032,0,'proxysql_node3');

    LOAD PROXYSQL SERVERS TO RUNTIME;
    SAVE PROXYSQL SERVERS TO DISK;
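Typing the same statements by hand on 100 nodes is error-prone, so one option is to generate them from the list of core nodes. The following Python sketch only builds the SQL text used in this step. The `core_nodes` mapping and the `proxysql_servers_sql` helper are hypothetical names for this example, and how you send the statements to each node's admin interface is left to your own tooling.

```python
def proxysql_servers_sql(core_nodes, port=6032):
    """Build the admin statements that point a node at the core nodes only.

    `core_nodes` maps a label (stored in the comment column) to an IP.
    Values are assumed to be trusted, so no quoting or escaping is done.
    """
    statements = ["DELETE FROM proxysql_servers;"]
    for comment, ip in core_nodes.items():
        statements.append(
            "INSERT INTO proxysql_servers (hostname,port,weight,comment) "
            f"VALUES ('{ip}',{port},0,'{comment}');"
        )
    statements.append("LOAD PROXYSQL SERVERS TO RUNTIME;")
    statements.append("SAVE PROXYSQL SERVERS TO DISK;")
    return statements

core_nodes = {
    "proxysql_node1": "10.0.0.1",
    "proxysql_node2": "10.0.0.2",
    "proxysql_node3": "10.0.0.3",
}

# The same statements are meant to be run on every node, core and satellite.
for statement in proxysql_servers_sql(core_nodes):
    print(statement)
```

Keeping the core list in one place means that adding or replacing a core node later is a single edit, followed by re-running the generated statements everywhere.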

Now all nodes from proxysql_node4 to proxysql_node100 are the satellite nodes.

We can see something like this in the proxysql.log file:

    2019-08-09 15:50:14 [INFO] Created new Cluster Node Entry for host 10.0.0.1:6032
    2019-08-09 15:50:14 [INFO] Created new Cluster Node Entry for host 10.0.0.2:6032
    2019-08-09 15:50:14 [INFO] Created new Cluster Node Entry for host 10.0.0.3:6032
    ...
    2019-08-09 15:50:14 [INFO] Cluster: starting thread for peer 10.0.0.1:6032
    2019-08-09 15:50:14 [INFO] Cluster: starting thread for peer 10.0.0.2:6032
    2019-08-09 15:50:14 [INFO] Cluster: starting thread for peer 10.0.0.3:6032

To test that replication from core to satellite works, I’ll create a new entry in the mysql_users table on a core node.

Connect to proxysql_node1 and run the following queries:

    INSERT INTO mysql_users(username,password, active, default_hostgroup) VALUES ('user1','123456', 1, 10);
    LOAD MYSQL USERS TO RUNTIME;
    SAVE MYSQL USERS TO DISK;

Now, on any satellite node (proxysql_node4, for example), check the ProxySQL log file for updates. If replication is working, we see something like this:

    2019-08-09 18:49:24 [INFO] Cluster: detected a new checksum for mysql_users from peer 10.0.1.113:6032, version 3, epoch 1565376564, checksum 0x5FADD35E6FB75557 . Not syncing yet ...
    2019-08-09 18:49:26 [INFO] Cluster: detected a peer 10.0.1.113:6032 with mysql_users version 3, epoch 1565376564, diff_check 3. Own version: 2, epoch: 1565375661. Proceeding with remote sync
    2019-08-09 18:49:27 [INFO] Cluster: detected a peer 10.0.1.113:6032 with mysql_users version 3, epoch 1565376564, diff_check 4. Own version: 2, epoch: 1565375661. Proceeding with remote sync
    2019-08-09 18:49:27 [INFO] Cluster: detected peer 10.0.1.113:6032 with mysql_users version 3, epoch 1565376564
    2019-08-09 18:49:27 [INFO] Cluster: Fetching MySQL Users from peer 10.0.1.113:6032 started
    2019-08-09 18:49:27 [INFO] Cluster: Fetching MySQL Users from peer 10.0.1.113:6032 completed
    2019-08-09 18:49:27 [INFO] Cluster: Loading to runtime MySQL Users from peer 10.0.1.113:6032
    2019-08-09 18:49:27 [INFO] Cluster: Saving to disk MySQL Query Rules from peer 10.0.1.113:6032
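If you need to check many satellite nodes, scanning logs by eye gets tedious. The Python sketch below pulls the peer, table, and versions out of the "Proceeding with remote sync" lines. The regular expression is an assumption based only on the sample log lines in this post (other ProxySQL versions may word them differently), and `parse_sync_line` is a hypothetical helper name.

```python
import re

# Pattern derived from the sample log lines in this post, not from ProxySQL docs.
SYNC_LINE = re.compile(
    r"Cluster: detected a peer (?P<peer>\S+) with (?P<table>\w+) "
    r"version (?P<version>\d+), epoch (?P<epoch>\d+), "
    r"diff_check (?P<diff_check>\d+)\. "
    r"Own version: (?P<own_version>\d+), epoch: (?P<own_epoch>\d+)\. "
    r"Proceeding with remote sync"
)

def parse_sync_line(line):
    """Return the fields of a remote-sync log line, or None if it is another line."""
    match = SYNC_LINE.search(line)
    if match is None:
        return None
    fields = match.groupdict()
    # Convert the numeric fields so versions and epochs can be compared.
    for key in ("version", "epoch", "diff_check", "own_version", "own_epoch"):
        fields[key] = int(fields[key])
    return fields

sample = (
    "2019-08-09 18:49:26 [INFO] Cluster: detected a peer 10.0.1.113:6032 "
    "with mysql_users version 3, epoch 1565376564, diff_check 3. "
    "Own version: 2, epoch: 1565375661. Proceeding with remote sync"
)
print(parse_sync_line(sample))
```

A satellite whose log never yields a match for a table you changed on a core node is a good candidate for a closer look.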

Then check that the new user exists on proxysql_node4 or any other satellite node. It should appear in both the mysql_users and runtime_mysql_users tables.

    admin ((none))>select * from runtime_mysql_users;
    +----------+-------------------------------------------+--------+---------+-------------------+----------------+---------------+------------------------+--------------+---------+----------+-----------------+
    | username | password                                  | active | use_ssl | default_hostgroup | default_schema | schema_locked | transaction_persistent | fast_forward | backend | frontend | max_connections |
    +----------+-------------------------------------------+--------+---------+-------------------+----------------+---------------+------------------------+--------------+---------+----------+-----------------+
    | user1    | *6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9 | 1      | 0       | 0                 |                | 0             | 1                      | 0            | 1       | 0        | 10000           |
    | user1    | *6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9 | 1      | 0       | 0                 |                | 0             | 1                      | 0            | 0       | 1        | 10000           |
    +----------+-------------------------------------------+--------+---------+-------------------+----------------+---------------+------------------------+--------------+---------+----------+-----------------+

    admin ((none))>select * from mysql_users;
    +----------+-------------------------------------------+--------+---------+-------------------+----------------+---------------+------------------------+--------------+---------+----------+-----------------+
    | username | password                                  | active | use_ssl | default_hostgroup | default_schema | schema_locked | transaction_persistent | fast_forward | backend | frontend | max_connections |
    +----------+-------------------------------------------+--------+---------+-------------------+----------------+---------------+------------------------+--------------+---------+----------+-----------------+
    | user1    | *6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9 | 1      | 0       | 0                 |                | 0             | 1                      | 0            | 1       | 0        | 10000           |
    | user1    | *6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9 | 1      | 0       | 0                 |                | 0             | 1                      | 0            | 0       | 1        | 10000           |
    +----------+-------------------------------------------+--------+---------+-------------------+----------------+---------------+------------------------+--------------+---------+----------+-----------------+

The final test is to create a new MySQL user on a satellite node. Connect to proxysql_node4 and run the following queries:

    INSERT INTO mysql_users(username,password, active, default_hostgroup) VALUES ('user2','123456', 1, 10);
    LOAD MYSQL USERS TO RUNTIME;
    SAVE MYSQL USERS TO DISK;

In the ProxySQL log on proxysql_node4, we see the following output:

    [root@ip-10-0-1-10 ~]# tail -f /var/lib/proxysql/proxysql.log -n100
    ...
    2019-08-09 18:59:12 [INFO] Received LOAD MYSQL USERS TO RUNTIME command
    2019-08-09 18:59:12 [INFO] Received SAVE MYSQL USERS TO DISK command

The last thing to check is the ProxySQL log file on a core node, to see whether there are any updates to the mysql_users table. Below is the output from proxysql_node1:

    [root@proxysql proxysql]# tail /var/lib/proxysql/proxysql.log -n100
    ...
    2019-08-09 19:09:21 [INFO] ProxySQL version 1.4.14-percona-1.1
    2019-08-09 19:09:21 [INFO] Detected OS: Linux proxysql 4.14.77-81.59.amzn2.x86_64 #1 SMP Mon Nov 12 21:32:48 UTC 2018 x86_64

As you can see, there are no updates, because the core nodes are not listening for changes from satellite nodes. Core nodes only listen for changes on other core nodes.

And finally, this feature is really useful when you have many servers to manage. Hope you can test this!
