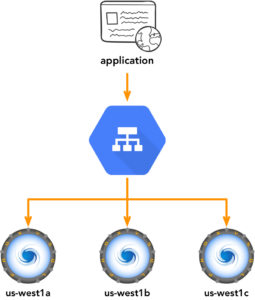

There are three different ways ProxySQL can direct traffic between your application and the backend MySQL services.

Without going into too much detail, each has its own limitations. In the first form, the application needs to know about all MySQL servers at any given point in time. In the third form, with a large number of application servers, especially in the age of Kubernetes where apps can easily be recycled or scaled up and down, the number of backend connections can grow very quickly and lead to issues.

In the second form, load balancing between a pool of ProxySQL servers is normally the challenge. Do you load balance the load balancers? While there are approaches like balancing from the application, similar to how the MongoDB drivers work, the application still needs to know and maintain a list of healthy backend proxies.

Google Cloud Platform’s (GCP) internal load balancer is a software-based managed service implemented in the virtual network. Unlike a physical load balancer, it is designed not to be a single point of failure.

We have played with Internal Load Balancers (ILB) and ProxySQL using the architecture below. There are a few steps and components involved that need explaining.

An instance group will be created to run the ProxySQL services. This needs to be a managed instance group so that the instances can be distributed across multiple zones. A managed instance group can also autoscale, which you may or may not want, and it makes it easy to replace a problematic ProxySQL instance with a fresh VM built from the instance template.

Health checks are the tricky part. GCP’s internal load balancer supports HTTP(S), SSL and TCP health checks. In this case, as long as ProxySQL is responding on the service port or admin port the service is up, right? Yes, but this is not necessarily enough since a port may respond but the instance can be misconfigured and return errors.
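To make the limitation concrete, here is a minimal sketch of what a bare TCP check amounts to. The function name `tcp_port_open` is hypothetical and this is not how the ILB is implemented; it only illustrates that a successful connection proves the port is listening, not that ProxySQL can authenticate a user or answer a query.

```python
import socket


def tcp_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port can be established.

    This mirrors what a plain TCP health check can observe: the port
    accepts connections. It says nothing about whether a login or a
    query would succeed.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable hosts.
        return False
```

A misconfigured ProxySQL instance would still pass this kind of check as long as the listener is up.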

With ProxySQL you have to treat it like an actual MySQL instance (i.e. login and issue a query). On the other hand, the load balancer should be agnostic and does not necessarily need to know which backends do or do not work. The availability of backends should be left to ProxySQL as much as possible.

One way to achieve this is to use dummy rewrite rules inside ProxySQL. In the example below, we’ve configured an account called percona that is assigned to a non-existent hostgroup. What we are doing is simply rewriting SELECT 1 queries to return an OK result.

```
mysql> INSERT INTO mysql_query_rules (active, username, match_pattern, OK_msg)
    -> VALUES (1, 'percona', 'SELECT 1', '1');
Query OK, 1 row affected (0.00 sec)

mysql> LOAD MYSQL QUERY RULES TO RUNTIME;
Query OK, 0 rows affected (0.00 sec)

mysql> SAVE MYSQL QUERY RULES TO DISK;
Query OK, 0 rows affected (0.01 sec)
```

```
[root@west1-proxy-group-9bgs ~]# mysql -upercona -ppassword -P3306 -h127.1
...
mysql> SELECT 1;
Query OK, 0 rows affected (0.00 sec)
1
```

This does not solve the whole problem, though, because the ILB only supports primitive TCP and HTTP checks. We still need a layer that translates the response from ProxySQL into a form the ILB understands. My personal preference is to expose an HTTP service that queries ProxySQL and responds to the ILB's HTTP-based health check. This provides additional flexibility, such as being able to check specific backends or all of them.
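One possible shape for such an HTTP layer is sketched below. It is an illustration under assumptions, not the service used in this setup: the names `proxysql_probe`, `health_response`, `make_handler` and `serve` are hypothetical, port 8080 is an arbitrary choice, the credentials and port 3306 are taken from the example session above, and the probe relies on the third-party `pymysql` package being installed.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer


def proxysql_probe(host="127.0.0.1", port=3306, user="percona", password="password"):
    """Log in to ProxySQL and run SELECT 1; return True if no error occurs."""
    import pymysql  # third-party dependency, imported lazily (assumption)

    try:
        conn = pymysql.connect(host=host, port=port, user=user,
                               password=password, connect_timeout=2)
        try:
            with conn.cursor() as cur:
                cur.execute("SELECT 1")
        finally:
            conn.close()
        return True
    except pymysql.MySQLError:
        return False


def health_response(probe):
    """Translate a probe result into an HTTP status code and body."""
    try:
        healthy = bool(probe())
    except Exception:
        # Any unexpected failure is reported as unhealthy.
        healthy = False
    return (200, b"OK\n") if healthy else (503, b"FAIL\n")


def make_handler(probe):
    """Build a request handler class that answers every GET with the probe result."""
    class HealthHandler(BaseHTTPRequestHandler):
        def do_GET(self):
            status, body = health_response(probe)
            self.send_response(status)
            self.send_header("Content-Type", "text/plain")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, fmt, *args):
            pass  # keep frequent health check requests out of the log

    return HealthHandler


def serve(probe=proxysql_probe, address=("0.0.0.0", 8080)):
    """Run the health endpoint until interrupted."""
    HTTPServer(address, make_handler(probe)).serve_forever()
```

Calling `serve()` on each ProxySQL instance would give the ILB an HTTP endpoint that returns 200 only when a real login and query succeed, and 503 otherwise. Because the probe is just a callable, it could be swapped for one that checks specific backends.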

Health checks to ProxySQL instances come from a specific set of IP ranges. In our case, these would be 130.211.0.0/22 and 35.191.0.0/16. Firewall ports need to be open from these ranges to either the HTTP or TCP ports on the ProxySQL instances.
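As a small illustration of what these ranges cover, the sketch below checks whether a source address falls inside them. The helper name `is_health_checker` is hypothetical; something like it could be used to confirm a firewall rule or to log which requests really come from the health checkers, but it is not a replacement for the firewall rule itself.

```python
import ipaddress

# The two source ranges quoted in the text for health check probes.
HEALTH_CHECK_RANGES = [
    ipaddress.ip_network("130.211.0.0/22"),
    ipaddress.ip_network("35.191.0.0/16"),
]


def is_health_checker(source_ip):
    """Return True if source_ip belongs to one of the health check ranges."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in HEALTH_CHECK_RANGES)
```

Note that 130.211.0.0/22 only spans 130.211.0.0 through 130.211.3.255, so an address such as 130.211.4.1 is outside it.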

In our next post, we will use Orchestrator to manage cross-region replication for high availability.

Hello,

I am trying to implement this, but when I follow the steps you list above the user cannot log in. I create the user and see it in mysql_users. When I try to log in with that user, this is what I get:

mysql -upercona -ppassword -h 127.0.0.1 -P 6033

mysql: [Warning] Using a password on the command line interface can be insecure.

ERROR 1045 (28000): ProxySQL Error: Access denied for user 'percona'@'172.17.0.1' (using password: YES)

Has anyone gotten this error and if so how did you get past it?

Thank you,

Kevin Runde